Abstract

International assessments of mathematics have shown persistent and, in many cases, intensified socioeconomic inequalities in achievement worldwide over the last decades. Such achievement gaps may partly be due to differences in students’ personal and family characteristics. They may also be attributable to schooling itself if school systems provide differentiated opportunity to learn (OTL) for children from privileged versus disadvantaged backgrounds. Previous research on the joint relationship among socioeconomic status (SES), academic achievement, and OTL has produced inconclusive results. The main aim of the present study is to test whether schooling actually perpetuates social inequality in achievement by reanalyzing PISA data. Specifically, we scrutinize the construct validity of the OTL measure in PISA that has been used in previous research. Our analyses reveal two latent dimensions of the OTL indicators in PISA, namely an unbiased OTL dimension and a self-concept dimension. The relationship between social background and mathematics achievement was only weakly mediated by OTL once the effect of students’ self-concept was controlled for. Our results suggest that the previous research finding that schooling perpetuates social gaps in mathematics performance suffers from a construct validity problem.

1 Introduction

International comparative studies in different domains have shown persistent and sometimes even intensified socioeconomic inequalities in achievement worldwide (e.g., Mullis et al. 2012; OECD 2014). These achievement gaps may be due not only to differences in students’ individual characteristics (e.g., cognitive abilities, grit, and health) and in home learning environments (e.g., parental education, homework support, and wealth), but also to schooling itself. If school systems provide differentiated opportunities to learn (OTL) for children from privileged versus disadvantaged backgrounds, schooling may perpetuate social gaps in learning outcomes. We define OTL simply as the degree of exposure to the educational content being tested (see also Guiton and Oakes 1995; McDonnell 1995).

In a recent study, Schmidt et al. (2015) used international PISA data to explore whether OTL is a mediator for the effect of socioeconomic status (SES) on student achievement. Based on their findings, they claimed that schooling perpetuates social inequalities in student achievement, to the extent that “roughly a third of the SES relationship to literacy is due to its association with OTL” (p. 371). However, we identified a serious methodological flaw in their measure of OTL that may have led to an overestimation of the OTL effect. In essence, we argue that the questionnaire items in PISA measure not only OTL but also students’ self-evaluation of their competence in mathematics. We believe that Schmidt et al. overestimated the correlation between OTL and achievement because of this construct-irrelevant source of variation. The main aim of the present study is to investigate this issue empirically by replicating their study with an OTL measure that we adjusted for mathematics self-concept.

In the remainder of this paper, we first briefly summarize previous research on the impact of OTL on student performance. Thereafter, we discuss a methodological issue in the wording of the response scales of some of the OTL measures in the PISA 2012 study, as used by Schmidt et al. (2015). Specifically, we hypothesize that the response alternative “Know it well, understand the concept” does not capture the degree of exposure to mathematics content (i.e., the definition of OTL) but rather mathematics self-concept. Consequently, conclusions based upon these OTL measures would be biased. In the empirical part, we adjust the OTL measure used in the study by Schmidt et al. by means of multidimensional confirmatory factor analyses and replicate their mediation analyses. Finally, we discuss our findings from a methodological and a substantive standpoint and suggest consequences for the wording of questionnaire items.

1.1 Opportunity to learn as a research concept

The research concept OTL was introduced as part of the early international large-scale student assessments in the 1960s and 1970s, and it has been an important conceptual framework for international studies ever since (Dahllöf 1971). The simple idea is that learning something requires opportunities to learn it. For this purpose, the OTL model conceptualizes curricula as functioning on three levels, as follows. (1) The intended curriculum, which is what national educational policies intend students to learn and how the education system is organized to facilitate this learning (e.g., including versus segregating students with special needs). (2) The implemented curriculum, which relates to how educational organizations (e.g., schools) implement such goals, what is actually taught in individual classrooms, who teaches it, and how it is taught. (3) The attained curriculum, which describes what students have actually learned (e.g., as measured by scores on standardized tests) and what they think about it (e.g., their interest), as well as the emergence of educational inequality (e.g., social gaps).

There are different refinements of the OTL concept. Schmidt and McKnight (1995) propose four central research questions that organize the concept and the interrelationships among the three levels of the curriculum: What are students expected to learn? Who delivers the instruction? How is the instruction organized? What have students learned? These research questions relate to the three levels of the curriculum and were examined at the system, school, classroom, and individual levels. This makes the conceptual model a network of relationships among the constructs essential for opportunity to learn, not only for individual students in their classrooms and schools, but also across different educational systems. Each question can be refined further. For example, there are three major channels through which the implemented curriculum is linked to the achieved curriculum. The first channel emphasizes how the characteristics of students and their peers affect instruction quality, and both in turn affect the achieved curriculum. The second channel emphasizes the effects of teacher-related factors on the achieved curriculum: teacher characteristics (such as education, qualifications, experience, pedagogic beliefs, and expectations) affect student achievement both directly and indirectly through instructional activities. The third channel emphasizes how organizational differentiation affects teacher resources and teaching support, which in turn affect teaching activities or the implemented curriculum.

Although the OTL concept is suitable for deriving narrow and specific research questions, Schmidt et al. (2015) argue that OTL can also be interpreted in a broader sense as exposure to content, or content coverage, for example, of mathematics content. In the present paper we follow this approach and define OTL as exposure to mathematics content. Nonetheless, the previous remarks are important because they suggest that OTL (defined as content coverage) may vary and thereby perpetuate inequality in educational outcomes. Students are selected or self-selected into schools and classrooms by organizational factors such as tracking, ability grouping, and school choice, and the selection is often related to students’ socioeconomic and ethnic background. This brings in equity aspects of OTL that affect both instructional quality and educational outcomes. Thus, one of the foci of the current study is to examine the extent to which schooling perpetuates educational inequality in mathematics performance in different educational systems. This research focus is operationalized by investigating the interrelationships among opportunity to learn, students’ family background, and their mathematics achievement (see Fig. 1). Our analysis emphasizes one aspect of the OTL concept as the measure of OTL, namely content coverage: the degree to which the content of the national curriculum is actually taught.

Fig. 1 The interrelationship among OTL, mathematics achievement, and students’ social, cultural and economic backgrounds (Schmidt et al. 2011)

1.2 Research on the impact of opportunity to learn on student achievement

OTL has been explored in numerous studies and their findings have been incorporated in meta-analyses. In the most recent overview, Scheerens (2017) reviewed three previous meta-analyses as well as 51 research studies, the majority of which were carried out in the US during the past 20 years. The three meta-analyses reported the effect sizes d = 0.18, 0.30, and 0.88. However, Scheerens points out that the meta-analysis that reported the largest average effect size was based on only four studies, of which two reported exceptionally large effect sizes. The overview of the 51 recent studies revealed a moderate average effect size of OTL on achievement (d = 0.30), which is similar to the effect sizes reported in the two other meta-analyses.

Meta-analyses are often considered to provide more generalizable findings than single studies. Hedges and Nowell, however, point out that “[r]eviews and meta-analyses of data from nonrepresentative samples are not necessarily any more representative than the studies on which they are based” (1995, p. 41). According to this, the data from many small samples of convenience should be replaced by data from large probability samples. In addition, many meta-analyses and reviews use data from the US, which limits the generalizability to other countries. For this reason, we next summarize the findings from international large-scale assessments that draw large stratified random samples from a diverse set of countries around the world.

Using PISA 2012 data from 33 OECD countries, Schmidt et al. (2015) studied the relationship between OTL, SES, and mathematics achievement, and investigated whether schooling perpetuates social inequality. They argue that the relationship between students’ family socioeconomic status (SES) and their achievement is partly mediated by differences in opportunity to learn in school settings. In other words, students from privileged backgrounds attend schools that provide more learning opportunities, and these additional learning opportunities widen the existing achievement gap relative to children from disadvantaged backgrounds (see Fig. 1). Schmidt et al. applied a mediation path model in which both SES and OTL have a direct effect on mathematics achievement, and SES has an additional indirect effect on mathematics achievement via OTL. The main finding of the study is a large average effect size of OTL on achievement: a one-unit increase in OTL corresponds to three-fifths of a standard deviation increase in performance. With respect to social inequalities, OTL mediated roughly one-third of the SES achievement gap on average. Such striking effects are particularly suspicious because they are not based on a small-scale, high-quality program but on representative observational and cross-sectional international data. Moreover, they contrast with the findings from another international assessment.

Luyten (2017) examined the effect of OTL on mathematics achievement for the 22 countries that participated in both TIMSS 2011, conducted by the IEA (International Association for the Evaluation of Educational Achievement), and PISA 2012, conducted by the OECD (Organisation for Economic Co-operation and Development). To measure OTL, TIMSS asked teachers whether 19 main topics addressed by the TIMSS mathematics tests (e.g., simple linear equations and inequalities; congruent figures and similar triangles) were mostly taught before this year (1), mostly taught this year (2), or not yet taught or just introduced (3). Using simple regression, Luyten regressed mathematics achievement on this OTL measure. Interestingly, and in contrast to the previously reported PISA findings, the average effect size of OTL on achievement was close to zero (the standardized regression coefficient was 0.03). The difference between the analyses based on TIMSS and PISA cannot be explained by differences in the composition of countries in the two assessments, because Luyten replicated the large effect sizes reported by Schmidt et al. using data only from those countries that participated in both assessments (the standardized regression coefficient was 0.37; this coefficient can be transformed to Cohen’s d = 0.80). Furthermore, Luyten aggregated the student-level OTL data from PISA to the school level to increase the comparability with TIMSS. Luyten (2017) hypothesized that the contradictory findings may be due to the fact that “TIMSS OTL measures were based on teacher responses and the PISA OTL measures on student responses” (p. 111). However, we propose an alternative explanation that is related to the response scales of the PISA items. In the next section, we scrutinize the PISA items and elaborate on this idea.
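The text does not spell out how the coefficient of 0.37 is transformed into Cohen’s d. As a sketch, assuming the standardized coefficient from the simple regression is treated as a correlation r and that the common conversion between r and d is used, the reported value can be reproduced as follows:

```latex
\[
d \;=\; \frac{2r}{\sqrt{1 - r^{2}}}
  \;=\; \frac{2 \times 0.37}{\sqrt{1 - 0.37^{2}}}
  \;=\; \frac{0.74}{\sqrt{0.8631}}
  \;\approx\; 0.80
\]
```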

1.3 Scrutinizing how opportunity to learn is measured in PISA: a methodological issue in the wording of the response scale

To understand our critique of PISA’s OTL measure, it is pertinent to revisit some foundations of the science of asking questions. Survey experts have pointed out that the question answering process involves various tasks and that apparently minor issues in the question wording or the response scale can have serious consequences for the meaning of survey data. Respondents have to understand a question, recall the information, form their judgments, translate it to the response alternatives, and edit their final answer (e.g., Schaeffer and Presser 2003; Schwarz et al. 2008). For the present study it is important to stress that the response alternatives may affect how respondents understand a question. Following Grice’s (1975) maxim of relation, respondents presume that the developers of a questionnaire constructed meaningful response alternatives that are related to the task at hand.

A closer look at how PISA measures OTL reveals a methodological flaw in the wording of the instrument. Schmidt et al. (2015, p. 373) explain that they aim to measure “the intensity of exposure to selected mathematics topics.” However, some of the items in the PISA questionnaire use a response scale that does not inquire only about the exposure to mathematical contents (OTL), but also about mathematical self-concept. In brief, PISA asked students how familiar they are with different mathematical terms, e.g., exponential functions (see Table 1 for the full question). The response alternatives are (1) never heard of it, (2) heard of it once or twice, (3) heard of it a few times, (4) heard of it often, and (5) know it well, understand the concept (see Table 1 for details). While the first four response categories indeed assess the intensity of exposure, the last does not.

Specifically, our critique relates to the last response alternative. While the first four response alternatives are about the frequency of exposure to different algebra and geometry topics, the fifth one involves students’ self-evaluation of their competence in these topics. Therefore, the instrument captures not only OTL but also an unintended component that we refer to as mathematical self-concept in the following. We believe that the fifth response alternative introduces error into the measurement of the latent construct OTL. Consequently, any further analyses based upon this instrument may be biased. In this vein, we hypothesize that the remarkably high association between OTL and achievement reported by Schmidt et al. (2015) is due to the biased OTL measure in PISA. Our approach to testing this is to use a direct measure of mathematical self-concept to adjust the OTL measure for the unintended source of variation. Such a scale of students’ self-concept in mathematics was indeed included in the PISA questionnaire, and it explains on average 17.1 percent of the variance in mathematics achievement in OECD countries (OECD 2013), which corresponds to a correlation of 0.41. Based on this finding, it is likely that Schmidt et al. (2015) overestimated the role of schooling in perpetuating social inequality in mathematics performance.
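The step from explained variance to a correlation can be written out. This assumes a bivariate relationship with self-concept as the only predictor, so that the correlation is the square root of the proportion of explained variance:

```latex
\[
r \;=\; \sqrt{R^{2}} \;=\; \sqrt{0.171} \;\approx\; 0.41
\]
```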

1.4 The present study

The main purpose of the present study is to estimate whether OTL mediates the relationship between socio-economic background and student achievement in mathematics using PISA data for a wide range of countries. Specifically, we replicate a previous study (Schmidt et al. 2015) of this issue with an adjusted measure of OTL. For this purpose, we use multidimensional confirmatory factor analysis (CFA) and compute an unbiased OTL measure by modeling construct irrelevant variance as a separate latent variable. We use this measure to replicate the aforementioned analyses and to achieve an unbiased estimate of the role of OTL as a mediator for the effect of SES on student achievement.

2 Data and analytical method

2.1 Samples

PISA 2012 data were used to reexamine the measurement properties of mathematics OTL and its effect on mathematics achievement. The PISA studies test the knowledge and skills of 15-year-old students in mathematics, science, and reading literacy in a 3-year cycle. In each cycle, one of the core domains is tested in detail, taking up nearly two-thirds of the total testing time. In PISA 2012, mathematics was the key domain tested. It should be noted that PISA is not aligned with any specific national curriculum. Instead, it tests the knowledge and abilities that students will need in society in their future lives. In the current analysis, 62 countries were included, and almost half a million students were examined.

Countries vary greatly in their sample size. Mexico has the largest sample, followed by Italy, Spain, and Canada, with Liechtenstein’s sample being the smallest. It should also be noted that PISA samples are based on age and thus cover students from different grades. This age-based sampling implies that students in the same sample had different topic coverage in mathematics because they were enrolled in different grades. Moreover, participation rates in PISA were below 80 percent in one out of four countries, and some countries excluded, for example, students in special education. Furthermore, the cross-cultural validity of survey data remains an under-researched issue in comparative research (see Johansson 2016; Rutkowski and Rutkowski 2016; Strietholt et al. 2013; Strietholt and Scherer 2017).

2.2 Variables

2.2.1 Opportunity to learn

Three variables were used to measure OTL. The first two components were scales that summarize the mathematical content items that are related to algebra and geometry (see Table 1). The algebra scale (ALGE) is defined as the mean of three items on exponential functions, quadratic functions, and linear equations; and the geometry scale (GEOM) is defined as the mean of the next four items (vectors, polygons, congruent figures, cosines). It should be noted that the first four alternatives of the response scale are about the degree of exposure to different mathematical content while the last alternative, “Know it well, understand the concept”, refers to a self-evaluation on how well the student understands the concept. The items were recoded to range from 0 to 4.
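The construction of the two scales can be illustrated with a minimal sketch. It assumes that raw responses are coded 1 to 5 in the order of the response alternatives and that recoding simply shifts them to 0 to 4; the function names and the example student are hypothetical, and the handling of missing responses is not covered.

```python
from statistics import mean

def recode(response: int) -> int:
    """Shift a raw response (assumed coding 1-5) to the 0-4 range."""
    if response not in (1, 2, 3, 4, 5):
        raise ValueError(f"unexpected response code: {response}")
    return response - 1

def scale_score(responses):
    """Mean of the recoded item responses for one student."""
    return mean(recode(r) for r in responses)

# Hypothetical student: three algebra items, then four geometry items.
alge = scale_score([5, 4, 3])      # exponential, quadratic, linear
geom = scale_score([2, 3, 4, 1])   # vectors, polygons, congruent figures, cosines

print(alge, geom)
```

Note that a raw response of 5 (“Know it well, understand the concept”) enters the scale as its highest value, which is where the self-evaluation component discussed above would influence the score.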

The third component of the OTL measure is a single item on how often students encounter formal problems in their mathematics lessons (FORM). Table 2 shows that two examples, an equation with one unknown and one on the volume of a box (not to be solved), define formal problems in mathematics. The response format of this single item is a frequency scale without any references to self-evaluation. The item was recoded to range from 0 to 3.

2.2.2 Mathematics self-concept

We use explicit measures of mathematics self-concept to model the construct-irrelevant variance in some of the PISA OTL measures. For this purpose, we use the eight items from the student questionnaire that are listed in Table 3. To reduce the number of parameter estimates, we used parceling and computed three item parcels, MSC1, MSC2, and MSC3. For the first parcel the first three items were averaged, for the second one the next three items, and for the third one the last two items. The items were recoded to range from 0 to 3 before the parceling.
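The parceling step can be sketched as follows, assuming the eight items are already recoded to 0 to 3 and arrive in questionnaire order. The function name and the example responses are hypothetical, and the recoding itself is not shown.

```python
def parcel_scores(items):
    """Average eight recoded items (0-3) into three parcels.

    MSC1 = mean of items 1-3, MSC2 = mean of items 4-6,
    MSC3 = mean of items 7-8.
    """
    if len(items) != 8:
        raise ValueError("expected exactly eight item responses")
    msc1 = sum(items[0:3]) / 3
    msc2 = sum(items[3:6]) / 3
    msc3 = sum(items[6:8]) / 2
    return msc1, msc2, msc3

# Hypothetical student responses, already recoded to 0-3.
student = [3, 2, 1, 0, 3, 3, 2, 1]
msc1, msc2, msc3 = parcel_scores(student)
print(msc1, msc2, msc3)
```

Using three parcels instead of eight items means the self-concept factor has three indicators, which keeps the number of loadings and residual variances to be estimated small.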

2.2.3 Socio-economic background

To measure socio-economic background, the PISA data include the index of economic, social, and cultural status (ESCS; for details see OECD 2014, pp. 351–352). This index was created on the basis of student reported information on parental occupational status, parental level of education, household wealth, and possession of educational and cultural resources.

2.2.4 Mathematics achievement

The main outcome variable is the mathematics achievement score in PISA (MATH). The achievement scale has an OECD mean of 500 with a standard deviation of 100 points. In all analyses, we used the five plausible values and combined the results using Rubin’s (1987) rules.
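Rubin’s rules can be sketched for a single parameter. The sketch assumes that the same model has been fitted once per plausible value, yielding five estimates and five standard errors; the numbers below are hypothetical and serve only to show the arithmetic.

```python
from math import sqrt
from statistics import mean, variance

def pool_rubin(estimates, std_errors):
    """Combine per-plausible-value results with Rubin's rules.

    Returns the pooled estimate and its pooled standard error.
    """
    m = len(estimates)
    pooled = mean(estimates)                      # average of the estimates
    within = mean(se ** 2 for se in std_errors)   # average sampling variance
    between = variance(estimates)                 # variance across plausible values
    total = within + (1 + 1 / m) * between        # total variance
    return pooled, sqrt(total)

# Hypothetical coefficients from five plausible values.
estimates = [0.30, 0.32, 0.31, 0.29, 0.33]
std_errors = [0.02, 0.02, 0.02, 0.02, 0.02]
pooled, pooled_se = pool_rubin(estimates, std_errors)
print(round(pooled, 3), round(pooled_se, 4))
```

The pooled standard error is larger than any single standard error because it also reflects the uncertainty between plausible values.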

2.2.5 Bivariate correlations with achievement

Table 4 shows that statistically significant correlations between mathematics achievement and the indicators of OTL (ALGE, GEOM, FORM), mathematics self-concept (MSC1, MSC2, MSC3), and ESCS can be observed in almost all countries. In general, mathematics achievement correlates more highly with the mathematics self-concept measures than with the respective OTL measures or the ESCS index. It should be noted that among the three OTL measures, the correlations are especially low for FORM. A possible explanation for this comparatively low correlation is that the wording of the response scale of the other two indicators (ALGE and GEOM) led to an overestimation of their correlation with achievement. To test this possibility, we next outline our approach to removing construct-irrelevant variation, namely mathematics self-concept, from the OTL measure by means of latent variable modelling.

2.2.6 Analytical method and process

Confirmatory factor analysis (CFA, see e.g., Brown 2014) was used to measure the psychometric properties and to examine construct validity of the PISA OTL instrument. A measurement model can be estimated in CFA through relating an unobserved construct, the so-called latent variable or factor, e.g., OTL, to its observed indicators, e.g., ALGE, GEOM, and FORM. As was mentioned previously, the response alternatives in ALGE and GEOM included a mathematics self-concept component. If not removed, this will bias the measurement property of the OTL construct and its effect on mathematics achievement. It also is arguable that the high OTL effect on mathematics achievement observed by Schmidt et al. (2015) might be attributed to the unintended involvement of the self-concept construct in the OTL indicators. In the current study, the measurement model of OTL was therefore specified in two ways, as an unadjusted model as replication of Schmidt et al. (2015), and as an adjusted model where the mathematics self-concept is singled out. Figure 2 illustrates the structure of the two measurement models.

The unadjusted model (2a) is a simple model with only one latent variable, OTL, indicated by ALGE, GEOM, and FORM. The adjusted model, on the other hand, accounted for the multidimensionality in the OTL measures by introducing a student’s mathematics self-concept factor indicated by MSC1, MSC2, and MSC3 as well as by ALGE and GEOM (2b).
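The two measurement models can be written as equations. The notation is an assumption made here for illustration (λ for loadings on OTL, γ for loadings on the self-concept factor MSC, ε for residuals); intercepts are omitted, and whether the two factors are allowed to correlate is not stated in the text and is therefore left open.

```latex
% Unadjusted model (2a): one latent variable
\begin{align*}
\mathrm{ALGE} &= \lambda_{A}\,\mathrm{OTL} + \varepsilon_{A}\\
\mathrm{GEOM} &= \lambda_{G}\,\mathrm{OTL} + \varepsilon_{G}\\
\mathrm{FORM} &= \lambda_{F}\,\mathrm{OTL} + \varepsilon_{F}
\end{align*}

% Adjusted model (2b): ALGE and GEOM load on both factors
\begin{align*}
\mathrm{ALGE} &= \lambda_{A}\,\mathrm{OTL} + \gamma_{A}\,\mathrm{MSC} + \varepsilon_{A}\\
\mathrm{GEOM} &= \lambda_{G}\,\mathrm{OTL} + \gamma_{G}\,\mathrm{MSC} + \varepsilon_{G}\\
\mathrm{FORM} &= \lambda_{F}\,\mathrm{OTL} + \varepsilon_{F}\\
\mathrm{MSC}_{k} &= \gamma_{k}\,\mathrm{MSC} + \varepsilon_{k}, \qquad k = 1, 2, 3
\end{align*}
```

In the adjusted model, the cross-loadings γ_A and γ_G absorb the share of ALGE and GEOM that varies with self-concept, so that the OTL factor is defined by the remaining common variance of the three OTL indicators.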

The models were evaluated using goodness-of-fit indices. Following Hu and Bentler (1999), a combination of an incremental fit index, the Comparative Fit Index (CFI), and absolute fit indices, the Root Mean Square Error of Approximation (RMSEA) and the Standardized Root Mean Square Residual (SRMR), was selected as the key model fit criteria. The cutoff values recommended by Hu and Bentler (1999) for acceptable model fit are less than 0.08 for SRMR and RMSEA. An RMSEA value of less than 0.06 is regarded as indicating a well-fitting model, with 0.08 being acceptable. For the CFI, values greater than 0.95 are generally considered a good fit (see also Brown 2014). Since the unadjusted model is just-identified with zero degrees of freedom, it fits perfectly, with RMSEA and SRMR equal to 0 and CFI equal to 1. The adjusted model achieved an acceptable fit (see Table 3).
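The cutoffs named above can be collected in a small helper. The function name and the fit values passed to it are hypothetical and are not the values obtained for the adjusted model.

```python
def assess_fit(cfi: float, rmsea: float, srmr: float) -> dict:
    """Check fit indices against the cutoffs cited in the text."""
    return {
        "cfi_good": cfi > 0.95,           # CFI above 0.95
        "rmsea_good": rmsea < 0.06,       # well-fitting model
        "rmsea_acceptable": rmsea < 0.08, # acceptable model
        "srmr_acceptable": srmr < 0.08,   # SRMR below 0.08
    }

# Hypothetical fit values for illustration only.
result = assess_fit(cfi=0.96, rmsea=0.07, srmr=0.04)
print(result)
```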

Two sets of factor scores for the OTL latent variable, estimated by the adjusted and the unadjusted models, were saved and then used as observed OTL variables to examine the effect of OTL on mathematics achievement, controlling for social, cultural and economic differences in family background (see Fig. 1). It is important to note that the factor scores were computed from the pooled data of all countries, assuming measurement invariance (Schmidt et al. 2015 make the same assumption). One advantage of this approach is that all countries use the same measure of OTL, so that the factor score estimates are based on the same weights (i.e., factor loadings). Another advantage of the factor score approach is simplicity. It reduces the complexity of the model structure so that both direct and indirect effects can be scrutinized in a path model. The indirect effect of ESCS on mathematics achievement, mediated through OTL, can be interpreted as the compensatory effect of schooling on the relationship between students’ family background and their academic achievement, thus indicating the degree of educational equity in different school systems.

The analytical work was done in Mplus version 7.4 (Muthén and Muthén 1998–2016), and the model complex option was applied in the modeling procedure to correct the underestimation of the standard error caused by the cluster sampling design in PISA studies (OECD 2014).

3 Results

In this section, the results from each of the analytical steps are presented. Since there are 62 countries involved in the analysis, we focus on the general patterns. Tables 6 and 7 in “Appendix” list the numerical results for the mediation analyses for each country.

3.1 Unadjusted and adjusted factor scores of opportunity to learn

Table 5 presents the factor loadings estimated in both the unadjusted and the adjusted measurement models of OTL. Among the three indicators of OTL, factor loadings are higher for familiarity with different geometry and algebra concepts (i.e., GEOM and ALGE), but rather low for the experience of word problems in formal mathematics lessons or tests (FORM). Comparing the estimates between the two models, the factor loadings were higher in the unadjusted model. The estimates were 0.84 for ALGE, 0.69 for GEOM, and 0.24 for FORM in the unadjusted model, while the average factor loadings were 0.78, 0.57, and 0.17 for ALGE, GEOM, and FORM, respectively, in the adjusted model, in which the mathematics self-concept component was singled out. It should also be mentioned that ALGE and GEOM had rather substantial factor loadings on the mathematics self-concept factor MSC. The decrease in the factor loadings of the OTL indicators from the unadjusted to the adjusted model indicates that the OTL indicators measured two different concepts, OTL and MSC.

3.2 Opportunity to learn as a mediator of the relationship between SES and mathematics achievement

Factor scores estimated by both the adjusted and unadjusted OTL measurement models were used in a path model to replicate the analyses by Schmidt et al. (2015) and to investigate the role of OTL as a mediator for the effect of SES on student achievement, as suggested by Schmidt et al. (2011, see also Fig. 1). The parameter estimates for the direct effects are the coefficients in the regressions of MATH on ESCS, OTL on ESCS, and MATH on OTL (see Table 6 in “Appendix”). The total direct effect is the regression coefficient between ESCS and MATH, where MATH is the dependent variable affected by ESCS. The total indirect effect (i.e., the effect of ESCS on MATH mediated by OTL) can simply be calculated as a product of the two regression coefficients, namely, the regression parameter of OTL on ESCS times the parameter for the regression of MATH on OTL. The estimates of the direct and indirect effects of ESCS on mathematics achievement can be found in Table 7 in “Appendix”.
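The path model described in this paragraph can be summarized in equations. The symbols a, b, and c′ are introduced here for illustration and are not the paper’s notation; e₁ and e₂ denote residuals.

```latex
\begin{align*}
\mathrm{OTL}  &= a\,\mathrm{ESCS} + e_{1}\\
\mathrm{MATH} &= c'\,\mathrm{ESCS} + b\,\mathrm{OTL} + e_{2}\\[4pt]
\text{direct effect of ESCS on MATH}   &= c'\\
\text{indirect effect via OTL}         &= a \cdot b\\
\text{total effect of ESCS on MATH}    &= c' + a \cdot b
\end{align*}
```

The last line is the standard decomposition for a linear path model with a single mediator: any change in the estimated indirect effect a · b is offset by a change in c′ when the total effect stays the same.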

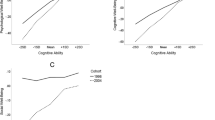

The average indirect effect is higher in the model with OTL factor scores estimated by the unadjusted model, at 0.10. It fell to 0.04 in the model where OTL factor scores were estimated while adjusting for students’ mathematics self-concept. The direct effect changed in the opposite direction, being higher in the adjusted model (0.33) than in the unadjusted model (0.27). Great variation across countries in the estimated direct and indirect effects was also observed. In the adjusted estimates, the highest effect of ESCS on students’ mathematics performance was found in the Slovak Republic, where ESCS explained 22% of the differences in students’ mathematics achievement, while in Macao-China only about 2% of these differences were attributable to ESCS.
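The reported averages can be turned into the share of the total ESCS effect that runs through OTL. This is a rough sketch based on the rounded averages quoted above; a share computed from averages is not the same as the average of the country-level shares, so the figures are only indicative. The function name is hypothetical.

```python
def proportion_mediated(direct: float, indirect: float) -> float:
    """Share of the total effect (direct + indirect) that is indirect."""
    return indirect / (direct + indirect)

# Rounded average effects reported in the text.
unadjusted = proportion_mediated(direct=0.27, indirect=0.10)
adjusted = proportion_mediated(direct=0.33, indirect=0.04)

# Relative reduction of the average indirect effect after adjustment.
reduction = (0.10 - 0.04) / 0.10

print(f"unadjusted share: {unadjusted:.2f}")
print(f"adjusted share:   {adjusted:.2f}")
print(f"reduction in indirect effect: {reduction:.0%}")
```

With these averages, the total effect is 0.37 in both models, which is consistent with the adjustment shifting part of the effect from the indirect to the direct path rather than changing the overall association between ESCS and achievement.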

Focusing on the relationship between students’ family background and PISA performance as it is mediated by OTL, i.e., the indirect effect of ESCS on mathematics achievement via OTL, Fig. 3 shows the proportions of the direct and indirect effects on mathematics achievement with OTL factor scores estimated in both the adjusted and the unadjusted models. Singapore, Australia, Liechtenstein, Austria, the Netherlands, and Germany, for example, have rather high indirect effects, which implies that their schools perpetuate the influence of students’ family background through differentiated learning opportunities. In countries on the left-hand side of the figure, such as Iceland, Sweden, Tunisia, Greece, Russia, Peru, and Poland, the proportion of the mediation effect was extremely low. Some indirect effects are even negative, indicating that schooling reduced social inequality. However, since the standardized effect sizes are very small (≤ 0.01) and mostly statistically non-significant, we caution against over-interpreting this finding.

The indirect effects estimated by the unadjusted model were much higher compared to those estimated by the adjusted one. It can be argued that the indirect effect achieved by the unadjusted estimation of OTL factor scores might be overestimated, since part of the effect can be attributed to the unintended component of student’s mathematics self-concept. The average indirect effect of ESCS on mathematics achievement decreased by about 60%. In a small number of countries, including the Netherlands and Singapore, an increase in the indirect effect was found (see Table 7 in “Appendix”). Again, these were exceptions and we caution against over-interpreting these findings.

4 Discussion and conclusions

The current study focuses on one aspect of the conceptualization of OTL, namely whether or not “students have had the opportunity to study a particular topic or learn how to solve a particular type of problem presented by the test” (Husén 1967, pp. 162–163). In that definition, content coverage, or students’ exposure to content, is the key focus of the measurement of OTL. Another focus of the current study is to investigate the mediating role of OTL in educational equity. These two aims are investigated with PISA 2012 data using multidimensional confirmatory factor analysis and a factor score approach.

In the context of international large-scale studies, OTL is typically measured by teachers’ perceptions of the extent to which certain topic areas within a domain were taught at school. It should be noted, however, that the OTL measures in PISA 2012 were collected as students’ perceptions of and experiences with topics in different mathematics sub-domains that they had been exposed to or had encountered. The information from these students deviates from the typical OTL indicators in ways that threaten the construct validity of OTL, and also the credibility of inferences about the impact of OTL on students’ achievement and educational equity. This issue may also have profound consequences for educational practice and policy making.

4.1 Construct validity of opportunity to learn

Scrutinizing the opportunity to learn instrument in PISA 2012, the current study found that a construct-irrelevant component, students’ mathematics self-concept, was involved in the measurement of OTL. This component was due to one of the response alternatives in two OTL subscales, namely those on exposure to different topics and concepts in algebra and geometry. We thus argued that the self-concept component should be singled out from the OTL measures so that an unbiased OTL construct can be obtained. However, we observed that this construct validity problem has not been fully addressed in previous research.

By fitting a multidimensional confirmatory factor model, the study attempted to obtain an unbiased OTL construct by removing the unintended variation due to students’ academic self-concept from the measures of OTL. A substantial amount of unintended variance was found in the algebra and geometry subscales of OTL: the students’ mathematical self-concept factor accounted for about 13% of the variation in algebra and over 14% of the variation in geometry. When OTL is measured by the residual variance of these two indicators, i.e., the adjusted algebra and geometry subscales, together with the measure of students’ experience with different formal mathematical concepts, the confounding component of students’ academic self-concept is controlled for. We also observed that the algebra and geometry topic exposure indicators remained highly related to the latent construct OTL even after controlling for students’ mathematical self-concept, although the factor loadings were somewhat smaller than those estimated in the unadjusted model, which replicates Schmidt et al.’s (2015) conception of OTL. However, the indicator of students’ experience with different mathematics concepts did not relate to the latent variable OTL as highly as the other two OTL indicators.
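The logic of removing the self-concept component can be illustrated with a deliberately simplified sketch. It is not the multidimensional confirmatory factor model fitted in this study: it swaps in an ordinary least-squares regression on simulated data, and the names `residualize`, `self_concept`, `true_exposure`, and `algebra_raw`, as well as the simulated weight of 0.4, are assumptions chosen purely for illustration.

```python
import numpy as np


def residualize(indicator, nuisance):
    """Regress `indicator` on `nuisance` (OLS with intercept).

    Returns the residuals (the "adjusted" indicator) and the share of
    variance in `indicator` that `nuisance` accounts for (R squared).
    """
    indicator = np.asarray(indicator, dtype=float)
    nuisance = np.asarray(nuisance, dtype=float)
    design = np.column_stack([np.ones_like(nuisance), nuisance])
    coef, *_ = np.linalg.lstsq(design, indicator, rcond=None)
    residuals = indicator - design @ coef
    r_squared = 1.0 - residuals.var() / indicator.var()
    return residuals, r_squared


# Simulated (hypothetical) data: an exposure signal contaminated by
# self-concept. These are not PISA data.
rng = np.random.default_rng(0)
n = 5000
self_concept = rng.normal(size=n)
true_exposure = rng.normal(size=n)
algebra_raw = true_exposure + 0.4 * self_concept

algebra_adjusted, share = residualize(algebra_raw, self_concept)
print(f"Share of variance attributable to self-concept: {share:.2f}")
```

By construction, the adjusted indicator is uncorrelated with the self-concept variable, which mirrors the idea of using the residual variance of the algebra and geometry subscales as indicators of OTL. A latent variable model additionally accounts for measurement error, which this sketch ignores.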

The empirical evidence points to crucial validity problems of the OTL measure in the PISA 2012 study. The unintended component of students’ academic self-concept caused by the response alternatives in OTL indicators blurred the conceptualization and measurement of OTL. Since students’ academic self-concept is positively related to their achievement level (e.g., Hattie 2009; Marsh and O’Mara 2008; Möller et al. 2009), and their family background (e.g., Mruk 2006; Twenge and Campbell 2002), such a biased OTL measure will lead to an overestimation of the mediation effect. The opposite pattern of changes in the direct versus indirect effect of ESCS on mathematics achievement after adjusting for students’ academic self-concept supports this interpretation.

Our analyses of the OTL measure in the PISA 2012 study illustrate how future secondary analyses of these data should deal with the methodological issues related to the wording of the response scale. It is worth emphasizing that it might also be useful to explore other analytical strategies to model the OTL data. The basic idea of modeling two latent factors is that students combine their perception of two continuous constructs, the degree of exposure to OTL and mathematical self-concept, when answering items on the PISA OTL instruments. However, it may also be that students do not read all but only some response alternatives and then extrapolate the others. For example, a student who reads only the last response alternative, “know it well, understand the concept”, may conclude that the question is only about mathematical self-concept. To gain a deeper understanding of how students interpret the PISA OTL instrument, think-aloud protocols may provide a valuable source of information. Such qualitative data may also be used to develop and test hypotheses about student characteristics that are related to how students interpret survey questions (e.g., students with low and high self-concept may interpret questions differently).

Instead of modeling unintended sources of variance, the developers of future PISA instruments may also consider changing the wording of the response scale. For example, instead of using a five-point scale with the response alternatives “Never heard of it”, “Heard of it once or twice”, “Heard of it a few times”, “Heard of it often”, and “Know it well, understand the concept”, they may simply remove the last alternative to allow a clearer interpretation of the items.

Another validity problem concerns the OTL indicator of students’ experiences with mathematics concepts: such experiences can occur in different, intertwined contexts in everyday life, at home, with peers, in leisure time activities, and at school. It is hard to separate the mathematics-related experiences that are specifically connected to school settings from those encountered elsewhere. The fact that children’s experiences of knowledge and learning are constrained by their family socioeconomic background (e.g., Evans 2004) may therefore introduce additional unintended variation into OTL measures. This may also explain why OTL was substantially related to students’ achievement in PISA but not in TIMSS (Luyten 2017). Another approach to obtaining valid OTL measures may be to survey teachers, who have direct knowledge of their own practices, as is done, for example, in the IEA TIMSS studies.

4.2 Does schooling actually perpetuate educational inequality in mathematics performance?

Factor scores estimated by both the unadjusted and the adjusted model of OTL were further used to replicate Schmidt et al.’s (2015) analyses and to investigate the role of OTL as a mediator of the effect of SES on student mathematics achievement. The effects of OTL were higher when the unadjusted model was used to compute OTL scores than when the adjusted model was used. After separating the mathematics self-concept component from the OTL measures, and controlling for family background differences, we observed a smaller OTL effect on mathematics achievement as well as a smaller indirect effect of family background on performance.
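The mediation logic described above can be sketched with a product-of-coefficients decomposition. The sketch below is a simplified, single-level illustration on simulated data. It is not the model specification used in this study or in Schmidt et al. (2015), and it ignores any survey weights or multilevel structure. The helper names (`ols_slopes`, `mediation_effects`) and all simulated coefficients are assumptions.

```python
import numpy as np


def ols_slopes(y, *predictors):
    """Return the OLS slopes of y on the predictors (intercept dropped)."""
    design = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(design, np.asarray(y, dtype=float), rcond=None)
    return coef[1:]


def mediation_effects(ses, otl, achievement):
    """Split the SES-achievement relation into direct and indirect parts."""
    (a,) = ols_slopes(otl, ses)                    # path SES -> OTL
    direct, b = ols_slopes(achievement, ses, otl)  # SES and OTL -> achievement
    (total,) = ols_slopes(achievement, ses)        # SES -> achievement, OTL ignored
    return {"direct": direct, "indirect": a * b, "total": total}


# Simulated (hypothetical) data in which self-concept depends on SES and
# contaminates the unadjusted OTL score. These are not PISA data.
rng = np.random.default_rng(1)
n = 5000
ses = rng.normal(size=n)
self_concept = 0.3 * ses + rng.normal(size=n)
otl_adjusted = 0.2 * ses + rng.normal(size=n)
otl_unadjusted = otl_adjusted + 0.5 * self_concept
achievement = (0.3 * ses + 0.2 * otl_adjusted
               + 0.4 * self_concept + rng.normal(size=n))

unadjusted = mediation_effects(ses, otl_unadjusted, achievement)
adjusted = mediation_effects(ses, otl_adjusted, achievement)
print("indirect (unadjusted):", round(unadjusted["indirect"], 3))
print("indirect (adjusted):  ", round(adjusted["indirect"], 3))
```

In this simulation the unadjusted OTL score yields the larger indirect effect, because the self-concept component is related to both SES and achievement. This is the mechanism argued for in the text; the size of the difference depends entirely on the assumed coefficients.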

There has been a long-standing discussion of whether school makes a difference in students’ academic performance when family background is taken into account (e.g., Coleman et al. 1966; Gamoran and Long 2007). While empirical evidence has consistently shown that students’ SES affects their academic achievement (e.g., Sirin 2005), the effects of school-related factors are at best weak, and often not significant. After adjusting for students’ mathematics self-concept, the current study confirmed that students’ socioeconomic status is positively related to their mathematics achievement in all the countries studied, with the same being true for OTL. We also found an additional positive and significant indirect effect of family background on mathematics achievement, mediated through differences in opportunity to learn. It seems that schools do not close but rather widen the social achievement gap. However, compared to the effects reported by Schmidt et al. (2015), the indirect effects are much smaller, indicating an overestimation in that previous study. It should be noted that the great variation observed in the indirect effect of students’ family social, cultural and economic background on their mathematics achievement, mediated by students’ educational opportunity, indicates that different school systems maintain the socioeconomic status of their children to a varying degree. Even though the majority of the countries we studied showed a significant indirect effect, the effects are rather small in most of the countries. Moreover, due to the validity problems of the OTL measure in the PISA study discussed above, the indirect effect needs to be interpreted with caution.

It can be hypothesized that schooling may be differentiated for students from different social, economic and cultural backgrounds (see, e.g., Yang Hansen and Gustafsson 2016). Schools may not only mediate the effect of students’ family background but also compensate or anti-compensate for the relationship between students’ family background and their academic achievement (Gustafsson et al. 2016). In order to offer a relatively complete picture of whether schooling perpetuates educational inequality, a cross-level interaction between OTL and educational equity has to be examined.

4.3 Implications for educational practices and educational policy-making

One of the most important policy issues within the field of education is the degree to which nationally determined curricula affect the outcomes of education, and it is a challenging scientific task to determine the mechanisms involved. OTL is crucial in this context. As McDonnell (1995) pointed out, OTL is a generated research concept and policy instrument that “has changed how researchers, educators, and policy makers think about the determinants of student learning” (p. 305).

In international studies, OTL can be used not only for explaining the variation in students’ test results, but also for evaluating the equity and quality of educational environments. Especially when global educational reforms emphasize accountability, the concept of OTL is used as an instrument to understand between-country variation in academic achievement and to guide educational reforms and policy-making related to national curricula. Given the importance of OTL, it is essential for researchers to provide credible research evidence that is based on a valid conceptualization and measurement of OTL.

It is necessary to emphasize that the frameworks of the IEA and the OECD assessments are based on different principles, with the IEA taking curricular goals as its starting point and PISA focusing on competencies (McGaw 2008a, b; Wagemaker 2008). This difference in emphasis implies that the OTL information in the PISA studies is limited. Moreover, OTL measures in PISA were collected from individual students’ reported experiences of different mathematics concepts in formative mathematics education. One could argue that such measures may suffer from a lack of construct validity. Nevertheless, it is of interest to analyze the relations between curricula and outcomes, and to compare the IEA and OECD approaches to constructing assessment frameworks. One approach is to combine information from different studies, since many of the countries that participate in PISA also participate in TIMSS and PIRLS.

Notes

We disregard a fourth meta-analysis discussed by Scheerens because it operationalized OTL as enrichment programs for gifted children.

References

Brown, T. A. (2014). Confirmatory factor analysis for applied research. New York: Guilford Publications.

Coleman, J. S., Campbell, E. Q., Hobson, C. F., McPartland, A. M., Mood, A. M., Weinfield, F. D., & York, R. L. (1966). Equality of educational opportunity. Washington, DC: U.S. Government Printing Office.

Dahllöf, U. (1971). Relevance and fitness analysis in comparative education. Scandinavian Journal of Educational Research, 15(3), 101–121.

Evans, G. W. (2004). The environment of childhood poverty. American Psychologist, 59(2), 77–92. https://doi.org/10.1037/0003-066X.59.2.77.

Gamoran, A., & Long, D. A. (2007). Equality of educational opportunity: A 40 year retrospective. In R. Teese, S. Lamb, M. Duru-Bellat, & S. Helme (Eds.), International studies in educational inequality, theory and policy (pp. 23–46). Dordrecht: Springer.

Grice, H. P. (1975). Logic and conversation. In P. Cole & J. Morgan (Eds.), Syntax and semantics (pp. 41–58). New York: Academic Press.

Guiton, G., & Oakes, J. (1995). Opportunity to learn and conceptions of educational equality. Educational Evaluation and Policy Analysis, 17(3), 323–336.

Gustafsson, J.-E., Nielsen, T., & Yang Hansen, K. (2016). School characteristics moderating the relation between student socio-economic status and mathematics achievement in grade 8. Evidence from 50 countries in TIMSS 2011. Studies in Educational Evaluation. https://doi.org/10.1016/j.stueduc.2016.09.004 (Advance online publication).

Hattie, J. (2009). Visible learning: A synthesis of over 800 meta-analyses relating to achievement. New York: Routledge.

Hu, L., & Bentler, P. M. (1999). Cutoff criteria for fit indexes in covariance structure analysis: Conventional criteria versus new alternatives. Structural Equation Modeling, 6, 1–55.

Husén, T. (Ed.). (1967). International study of achievement in mathematics: A comparison of twelve countries (Vol. I). New York: Wiley.

Johansson, S. (2016). International large-scale assessments: what uses, what consequences? Educational Research, 58(2), 139–148. https://doi.org/10.1080/00131881.2016.1165559.

Luyten, H. (2017). Predictive power of OTL measures in TIMSS and PISA. In J. Scheerens (Ed.), Opportunity to learn, curriculum alignment and test preparation (pp. 103–119). New York: Springer International.

Marsh, H. W., & O’Mara, A. (2008). Reciprocal effects between academic self-concept, self-esteem, achievement, and attainment over seven adolescent years: Unidimensional and multidimensional perspectives of self-concept. Personality and Social Psychology Bulletin, 34(4), 542–552.

McDonnell, L. M. (1995). Opportunity to learn as a research concept and a policy instrument. Educational Evaluation and Policy Analysis, 17(3), 305–322.

McGaw, B. (2008a). The role of the OECD in international comparative studies of achievement. Assessment in Education: Principles, Policy & Practice, 15(3), 223–243.

McGaw, B. (2008b). Further reflections. Assessment in Education: Principles, Policy & Practice, 15(3), 279–282.

Möller, J., Pohlmann, B., Köller, O., & Marsh, H. W. (2009). A meta-analytic path analysis of the internal/external frame of reference model of academic achievement and academic self-concept. Review of Educational Research, 79, 1129–1167.

Mruk, C. J. (2006). Self-esteem research, theory, and practice: Toward a positive psychology of self-esteem. New York: Springer.

Mullis, I. V. S., Martin, M. O., Foy, P., & Drucker, K. T. (2012). PIRLS 2011 international results in reading. Chestnut Hill: TIMSS & PIRLS International Study Center, Boston College.

Muthén, L. K., & Muthén, B. O. (1998–2016). Mplus User’s Guide (7th edn.). Los Angeles, CA: Muthén & Muthén.

OECD. (2012). Student questionnaire Form B in PISA 2012. Document retrieved June 2016 from https://nces.ed.gov/surveys/pisa/pdf/MS12_StQ_FormB_ENG_USA_final.pdf.

OECD. (2013). PISA 2012 results: Ready to learn: Students’ engagement, drive and self-beliefs (Volume III). Paris: OECD Publishing.

OECD. (2014). PISA 2012 results: What students know and can do–Student performance in mathematics, reading and science (volume I, Revised edition, February 2014). Paris: OECD Publishing. https://doi.org/10.1787/9789264201118-en.

OECD. (2014). PISA 2012 technical report. Paris: OECD Publishing. Retrieved June 2016 from https://www.oecd.org/pisa/pisaproducts/PISA-2012-technical-report-final.pdf.

Rubin, D. B. (1987). Multiple imputation for nonresponse in surveys. Hoboken: Wiley.

Rutkowski, L., & Rutkowski, D. (2016). A call for a more measured approach to reporting and interpreting PISA results. Educational Researcher, 45(4), 252–257.

Schaeffer, N. C., & Presser, S. (2003). The science of asking questions. Annual Review of Sociology, 29, 65–88.

Scheerens, J. (2017). Opportunity to learn, curriculum alignment and test preparation: A research review. New York: Springer International.

Schmidt, W. H., Burroughs, N. A., Zoido, P., & Houang, R. T. (2015). The role of schooling in perpetuating educational inequality: An international perspective. Educational Researcher, 44(7), 371–386.

Schmidt, W. H., Cogan, L. S., Houang, R. T., & McKnight, C. C. (2011). Content coverage differences across districts/states: A persisting challenge for US education policy. American Journal of Education, 117(3), 399–427.

Schmidt, W. H., & McKnight, C. C. (1995). Surveying educational opportunity in mathematics and science: An international perspective. Educational Evaluation and Policy Analysis, 17(3), 337–353.

Schwarz, N., Knäuper, B., Oyserman, D., & Stich, C. (2008). The psychology of asking questions. In E. D. de Leeuw, J. J. Hox & D. A. Dillman (Eds.), International handbook of survey methodology. New York: Lawrence Erlbaum Associates.

Sirin, S. R. (2005). Socioeconomic status and academic achievement: A meta-analytic review of research. Review of Educational Research, 75(3), 417–453.

Strietholt, R., Rosén, M., & Bos, W. (2013). A correction model for differences in the sample compositions: The degree of comparability as a function of age and schooling? Large-Scale Assessments in Education, 1(1), 1–20.

Strietholt, R., & Scherer, R. (2017). The contribution of international large-scale assessments to educational research: Combining individual and institutional data sources. Scandinavian Journal of Educational Research, 1–18.

Twenge, J. M., & Campbell, W. K. (2002). Self-esteem and socioeconomic status: A meta-analytic review. Personality and Social Psychology Review, 6(1), 59–71.

Wagemaker, H. (2008). Choices and trade-offs: Reply to McGaw. Assessment in Education: Principles, Policy & Practice, 15(3), 267–278.

Yang Hansen, K., & Gustafsson, J. E. (2016). Causes of educational segregation in Sweden–School choice or residential segregation. Educational Research and Evaluation, 22(1–2), 23–44.

Additional information

This paper is a close collaboration, in which both authors have equally contributed substantial parts to the research idea, analyses, writing and revisions.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Yang Hansen, K., Strietholt, R. Does schooling actually perpetuate educational inequality in mathematics performance? A validity question on the measures of opportunity to learn in PISA. ZDM Mathematics Education 50, 643–658 (2018). https://doi.org/10.1007/s11858-018-0935-3

DOI: https://doi.org/10.1007/s11858-018-0935-3