Abstract

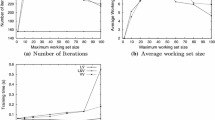

Decomposition techniques are used to speed up the training of support vector machines, but for linear programming support vector machines (LP-SVMs), a direct implementation of decomposition techniques leads to infinite loops. To solve this problem and to further speed up training, in this paper we propose an improved decomposition technique for training LP-SVMs. If an infinite loop is detected, we include in the next working set all the data in the working sets that form the infinite loop. To further accelerate training, we improve the working set selection strategy: at each iteration step, we check the number of violations of the complementarity conditions and constraints. If the number of violations increases, we conclude that important data have been removed from the working set, and we restore those data to the working set. Computer experiments demonstrate that training with the proposed decomposition technique and improved working set selection is drastically faster than training without the decomposition technique. Furthermore, in all the cases tested, it is faster than training with the decomposition technique but without the improved working set selection.
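The two safeguards described in the abstract can be illustrated with a minimal Python sketch. It is not the authors' implementation: the abstract does not say how a loop is detected, so the sketch assumes a loop is signalled when a candidate working set repeats an earlier one. The function names `merge_if_loop` and `restore_if_worse`, and the representation of working sets as frozensets of sample indices, are hypothetical.

```python
from typing import FrozenSet, List


def merge_if_loop(history: List[FrozenSet[int]],
                  candidate: FrozenSet[int]) -> FrozenSet[int]:
    """Return the next working set, merging a detected cycle.

    Assumption: a loop is detected when `candidate` equals a working set
    already in `history`. The working sets from that earlier occurrence
    onward are taken to form the loop, and their union is returned.
    """
    if candidate in history:
        start = history.index(candidate)
        # Union of every working set that takes part in the cycle.
        return frozenset().union(*history[start:])
    return candidate


def restore_if_worse(prev_violations: int,
                     violations: int,
                     working_set: FrozenSet[int],
                     removed: FrozenSet[int]) -> FrozenSet[int]:
    """Put removed data back if the violation count went up.

    `violations` counts violated complementarity conditions and
    constraints after the current step; `removed` holds the indices
    dropped from the working set in that step.
    """
    if violations > prev_violations:
        return working_set | removed
    return working_set


# Small demonstration with made-up index sets.
history = [frozenset({0, 1}), frozenset({1, 2}), frozenset({2, 3})]
looped = merge_if_loop(history, frozenset({1, 2}))
fresh = merge_if_loop(history, frozenset({4, 5}))
restored = restore_if_worse(3, 5, frozenset({1, 2}), frozenset({7}))
kept = restore_if_worse(5, 3, frozenset({1, 2}), frozenset({7}))
```

In the demonstration, the candidate `{1, 2}` repeats the second entry of `history`, so the sketch merges the second and third working sets; the candidate `{4, 5}` is new and is passed through unchanged.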




Copyright information

© 2006 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Torii, Y., Abe, S. (2006). Fast Training of Linear Programming Support Vector Machines Using Decomposition Techniques. In: Schwenker, F., Marinai, S. (eds) Artificial Neural Networks in Pattern Recognition. ANNPR 2006. Lecture Notes in Computer Science, vol 4087. Springer, Berlin, Heidelberg. https://doi.org/10.1007/11829898_15


DOI: https://doi.org/10.1007/11829898_15

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-37951-5

Online ISBN: 978-3-540-37952-2

eBook Packages: Computer Science (R0)