Abstract

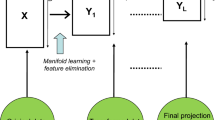

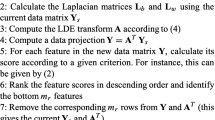

A key aspect of manifold learning is the automatic estimation of the intrinsic dimensionality. Unfortunately, this problem has received little attention in the manifold learning literature. In this paper, we argue that the feature selection paradigm can be applied to the problem of automatic dimensionality estimation. In addition, it leads to improved recognition rates. Our approach to optimal feature selection is based on a Genetic Algorithm. As a case study for manifold learning, we consider Laplacian Eigenmaps (LE) and Locally Linear Embedding (LLE). The effectiveness of the proposed framework was tested on the face recognition problem. Extensive experiments carried out on the ORL, UMIST, Yale, and Extended Yale face data sets confirmed our hypothesis.
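The idea of treating dimensionality estimation as feature selection can be illustrated with a small sketch. The abstract does not specify the chromosome encoding, the fitness function, or the genetic operators, so everything below is an assumption made for illustration: a binary mask over embedding coordinates, leave-one-out 1-NN accuracy minus a small size penalty as fitness, and tournament selection with one-point crossover and bit-flip mutation. The toy data stands in for coordinates produced by LE or LLE; the names `loo_1nn_accuracy`, `make_fitness`, and `ga_select` are hypothetical.

```python
import random

def loo_1nn_accuracy(X, y, mask):
    """Leave-one-out 1-NN accuracy using only coordinates where mask[j] == 1."""
    idx = [j for j, bit in enumerate(mask) if bit]
    if not idx:
        return 0.0  # an empty selection cannot classify anything
    correct = 0
    for i, xi in enumerate(X):
        best_d, best_label = float("inf"), None
        for k, xk in enumerate(X):
            if k == i:
                continue
            d = sum((xi[j] - xk[j]) ** 2 for j in idx)
            if d < best_d:
                best_d, best_label = d, y[k]
        correct += best_label == y[i]
    return correct / len(X)

def make_fitness(X, y, penalty=0.01):
    """Assumed fitness: accuracy minus a penalty per selected coordinate (cached)."""
    cache = {}
    def fitness(mask):
        if mask not in cache:
            cache[mask] = loo_1nn_accuracy(X, y, mask) - penalty * sum(mask)
        return cache[mask]
    return fitness

def ga_select(fitness, n, pop_size=20, generations=30, p_mut=0.05, seed=0):
    """Search binary masks of length n (n >= 2) with a simple elitist GA."""
    rng = random.Random(seed)
    pop = [tuple(rng.randint(0, 1) for _ in range(n)) for _ in range(pop_size - 1)]
    pop.append((1,) * n)  # include the "keep every coordinate" baseline
    best = max(pop, key=fitness)
    for _ in range(generations):
        def tournament():
            a, b = rng.sample(pop, 2)
            return a if fitness(a) >= fitness(b) else b
        nxt = [best]  # elitism: the best mask always survives
        while len(nxt) < pop_size:
            p1, p2 = tournament(), tournament()
            cut = rng.randrange(1, n)  # one-point crossover
            child = p1[:cut] + p2[cut:]
            child = tuple(1 - g if rng.random() < p_mut else g for g in child)
            nxt.append(child)
        pop = nxt
        best = max(pop, key=fitness)
    return best

# Toy stand-in for an embedding: 2 informative coordinates, 6 noise coordinates.
data_rng = random.Random(1)
X, y = [], []
for label, center in ((0, -3.0), (1, 3.0)):
    for _ in range(20):
        informative = [data_rng.gauss(center, 0.5) for _ in range(2)]
        noise = [data_rng.gauss(0.0, 1.0) for _ in range(6)]
        X.append(informative + noise)
        y.append(label)

fitness = make_fitness(X, y)
best_mask = ga_select(fitness, n=8)
estimated_dim = sum(best_mask)  # number of retained coordinates
print(best_mask, estimated_dim)
```

In this sketch the number of ones in the best mask plays the role of the estimated dimensionality, and the retained coordinates are the ones passed to the classifier. The size penalty is a design choice of the sketch, not something stated in the abstract; without it, masks with equal accuracy but more coordinates would be equally fit.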

Copyright information

© 2012 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Dornaika, F., Assoum, A., Raducanu, B. (2012). Automatic Dimensionality Estimation for Manifold Learning through Optimal Feature Selection. In: Gimel’farb, G., et al. Structural, Syntactic, and Statistical Pattern Recognition. SSPR /SPR 2012. Lecture Notes in Computer Science, vol 7626. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-34166-3_63

DOI: https://doi.org/10.1007/978-3-642-34166-3_63

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-34165-6

Online ISBN: 978-3-642-34166-3

eBook Packages: Computer Science, Computer Science (R0)