Abstract

In this paper we propose a classification algorithm for multifrequency Polarimetric Synthetic Aperture Radar (PolSAR) images. Using PolSAR decomposition algorithms, 33 features are extracted from each frequency band of the given image. A two-layer autoencoder then reduces the dimensionality of the input feature vector while retaining its useful features. The reduced-dimensional feature vector is used to generate superpixels with the simple linear iterative clustering (SLIC) algorithm. Next, a robust feature representation is constructed using both pixel and superpixel information. Finally, a softmax classifier performs the classification. The advantage of using superpixels is that they preserve spatial information between neighboring PolSAR pixels and therefore reduce the effect of speckle noise during classification. Experiments conducted on the Flevoland dataset show that the proposed method achieves higher overall accuracy than other methods available in the literature.

Keywords

- Polarimetric Synthetic Aperture Radar (PolSAR)

- Multifrequency PolSAR image classification

- Autoencoder

- Superpixels

- Simple linear iterative clustering (SLIC)

- Optimized Wishart Network (OWN)

1 Introduction

Synthetic Aperture Radar (SAR) has become popular in recent years as a technique for capturing high-resolution microwave images of the earth's surface. Unlike optical sensors, SAR can acquire an image regardless of weather conditions or time of day. Another major reason to use SAR is its operability over multiple frequency bands, from the X band to the P band. The penetrability of the L and P bands allows SAR to capture data even from below ground level. PolSAR additionally gathers information about the target in four polarization states, which makes it an information-rich technique. These are some of the reasons for preferring SAR over optical data-capturing techniques.

One of the earliest approaches to the classification of multifrequency PolSAR data used a dynamic learning neural network (DNN) [1]. A deep learning based multilayer autoencoder network was also proposed [2]; it uses the Kronecker product of the eigenvalues of the coherency matrix to combine the information of multiple bands. Recently, an Optimized Wishart Network (OWN) for the classification of multifrequency PolSAR data was reported [3].

Superpixel algorithms in conjunction with deep neural networks have gained popularity for capturing the spatial information of a PolSAR image. Hou et al. [4] used the Pauli decomposition of a PolSAR image to generate superpixels and an autoencoder to extract features from the coherency matrix of each PolSAR pixel. The predictions of the network were then used to run a k-nearest-neighbors (KNN) algorithm within each superpixel to determine the class of the complete superpixel. Guo et al. [5] applied a fuzzy clustering algorithm to PolSAR images to generate superpixels. Their method considered only those pixels that are similar to their neighbors, while pixels that are in all probability badly conditioned were ignored. In another work [6], Cloude decomposition features were used to generate superpixels and a convolutional neural network (CNN) to perform the classification. An adaptive nonlocal approach for extracting spatial information, which uses a stacked sparse autoencoder to extract robust features, was also proposed [7].

In this paper we propose a classification algorithm for multifrequency PolSAR images. The paper is organized as follows: Sect. 2 discusses the proposed network architecture; Sect. 3 explains the experiments conducted on the Flevoland dataset; Sect. 4 concludes with our observations and the discussions based on the results.

2 Proposed Methodology

Phase information is very useful for PolSAR image classification. Because we use a real-valued neural network, it may not be able to relate amplitude and phase information: it treats the real and imaginary components as separate features. We therefore feed the network decomposition features instead of the raw elements of the coherency matrix. We extract a 33-dimensional feature vector from each frequency band of a PolSAR image, as shown in Table 1. Combining the information of all three bands thus gives a 99-dimensional feature vector for each PolSAR pixel.
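The stacking of the three per-band vectors can be illustrated with a short sketch. It is only an illustration: the band order `("C", "L", "P")`, the function name `stack_band_features` and the placeholder feature values are assumptions, since the text fixes only the sizes (33 features per band, 99 in total).

```python
from typing import Dict, List, Sequence

FEATURES_PER_BAND = 33  # stated in the text: 33 decomposition features per band


def stack_band_features(per_band: Dict[str, Sequence[float]],
                        band_order: Sequence[str] = ("C", "L", "P")) -> List[float]:
    """Concatenate the per-band feature vectors of one pixel in a fixed band order.

    The band order is an assumption; any fixed order works as long as it is
    the same for every pixel.
    """
    stacked: List[float] = []
    for band in band_order:
        features = per_band[band]
        if len(features) != FEATURES_PER_BAND:
            raise ValueError(
                f"band {band}: expected {FEATURES_PER_BAND} features, got {len(features)}"
            )
        stacked.extend(features)
    return stacked


# Placeholder values only; real inputs would be the decomposition features of Table 1.
pixel = {band: [0.0] * FEATURES_PER_BAND for band in ("C", "L", "P")}
x_i = stack_band_features(pixel)
print(len(x_i))  # 99
```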

The Freeman decomposition [8] has 3 features, which describe the power of single-bounce (odd-bounce), double-bounce and volume scattering of the incident waves. The Huynen decomposition [9] aims to form a single scattering matrix to model the scattering mechanism of the surface. The Cloude decomposition [10] models the surface scattering using parameters such as entropy, anisotropy and angles that describe the scattering mechanism. The Krogager decomposition [11] factorizes the scattering matrix as the combination of a sphere, a di-plane and a helix. The Yamaguchi decomposition [12] adds a helix scattering component to the Freeman decomposition to model complicated man-made structures.
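As an example of how such decomposition features are computed, the sketch below evaluates the entropy and anisotropy of the Cloude decomposition from the three eigenvalues of a coherency matrix, using the standard definitions (entropy with a base-3 logarithm over the normalized eigenvalues, anisotropy from the two smaller eigenvalues). The function name and the handling of degenerate inputs are assumptions made for illustration; the eigenvalues themselves are taken as given.

```python
import math
from typing import Sequence, Tuple


def entropy_anisotropy(eigenvalues: Sequence[float]) -> Tuple[float, float]:
    """Return (H, A) from the three non-negative eigenvalues of a coherency matrix."""
    if len(eigenvalues) != 3:
        raise ValueError("expected exactly three eigenvalues")
    # Sort in descending order and clip tiny negative values from numerical noise.
    lam = sorted((max(float(v), 0.0) for v in eigenvalues), reverse=True)
    total = sum(lam)
    if total == 0.0:
        return 0.0, 0.0  # degenerate pixel: no scattered power (illustrative choice)
    probs = [v / total for v in lam]
    # The base-3 logarithm keeps the entropy H in the range [0, 1].
    entropy = -sum(p * math.log(p, 3) for p in probs if p > 0.0)
    denom = lam[1] + lam[2]
    # Anisotropy compares the second and third eigenvalues.
    anisotropy = (lam[1] - lam[2]) / denom if denom > 0.0 else 0.0
    return entropy, anisotropy


H, A = entropy_anisotropy([0.6, 0.3, 0.1])
print(round(H, 3), round(A, 3))
```

Three equal eigenvalues give the maximum entropy of 1 (fully random scattering), while a single non-zero eigenvalue gives an entropy of 0 (one dominant mechanism).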

As shown in Fig. 1, our proposed network architecture contains three modules. The first module is a two-layer autoencoder network. Its purpose is to reduce the dimensionality of the input vector by learning an efficient representation of the combined information from the PolSAR frequency bands.

Let \(\mathbf {X_i}\) be the input feature vector of \(i^{th}\) PolSAR pixel. Let \(\mathbf {W_{11}}, \mathbf {W_{12}}, \mathbf {b_{11}}\) and \(\mathbf {b_{12}}\) be weights and biases of two encoder layers. A hidden representation of input feature vector \(\mathbf {X_i}\) can be calculated as \(\mathbf {h_i} = f(\mathbf {W_{12}}^Tf(\mathbf {W_{11}}^T\mathbf {X_i} + \mathbf {b_{11}})+\mathbf {b_{12}})\), where f is a tanh activation function. Let \(\mathbf {W_{21}}, \mathbf {W_{22}}, \mathbf {b_{21}}\) and \(\mathbf {b_{22}}\) be weights and biases of two decoder layers. A reconstructed input vector can be calculated as \(\mathbf {X_i}^{'} = f(\mathbf {W_{22}}^Tf(\mathbf {W_{21}}^T\mathbf {h_i} + \mathbf {b_{21}})+\mathbf {b_{22}})\). We have used mean square error to train the autoencoder. The cost function of first module of the proposed network is given as follows:

Here \(\beta \) is a regularization parameter, \(\alpha \) is the learning rate, \(\gamma \) is the weight of the sparsity penalty, N is the total number of training samples, \(U_1\) is the size of the reduced-dimensional feature vector \(\mathbf {h_i}\), \(\rho \) is the sparsity parameter, \(\rho _t\) is the average activation value of the \(t^{th}\) hidden unit and \(KL(\rho \Vert \rho _t)\) is the Kullback-Leibler divergence, which encourages sparsity in the hidden representation \(\mathbf {h_i}\). For our application we have set \(U_1=5\). Once the training of the first module is complete, we disconnect the decoder layers.
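The forward pass and the objective of the first module can be sketched in code. The block below is a minimal pure-Python illustration, not the authors' implementation. It assumes the common sparse-autoencoder form \(J_1 = \frac{1}{N}\sum _{i=1}^{N} \Vert \mathbf {X_i} - \mathbf {X_i}^{'} \Vert _2^2 + \beta \sum \Vert \mathbf {W} \Vert _F^2 + \gamma \sum _{t=1}^{U_1} KL(\rho \Vert \rho _t)\), which is consistent with the symbols defined in the text but whose exact scaling factors are an assumption. The intermediate layer width `n_mid`, the helper names, and the rescaling of tanh activations from (-1, 1) to (0, 1) before the KL term are likewise hypothetical choices.

```python
import math
import random
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]  # shape (n_in, n_out): a layer computes tanh(W^T x + b)


def init_params(n_in: int, n_mid: int, u1: int, seed: int = 0) -> Dict[str, list]:
    """Small random weights for a two-layer encoder and a two-layer decoder."""
    rng = random.Random(seed)

    def mat(rows: int, cols: int) -> Matrix:
        return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

    return {
        "W11": mat(n_in, n_mid), "b11": [0.0] * n_mid,  # encoder layer 1
        "W12": mat(n_mid, u1), "b12": [0.0] * u1,       # encoder layer 2
        "W21": mat(u1, n_mid), "b21": [0.0] * n_mid,    # decoder layer 1
        "W22": mat(n_mid, n_in), "b22": [0.0] * n_in,   # decoder layer 2
    }


def layer(W: Matrix, b: Vector, x: Vector) -> Vector:
    """One fully connected layer with tanh activation: f(W^T x + b)."""
    return [math.tanh(sum(W[i][j] * x[i] for i in range(len(x))) + b[j])
            for j in range(len(b))]


def encode(p: Dict[str, list], x: Vector) -> Vector:
    return layer(p["W12"], p["b12"], layer(p["W11"], p["b11"], x))


def decode(p: Dict[str, list], h: Vector) -> Vector:
    return layer(p["W22"], p["b22"], layer(p["W21"], p["b21"], h))


def kl_divergence(rho: float, rho_t: float) -> float:
    """KL divergence between Bernoulli distributions with means rho and rho_t."""
    return (rho * math.log(rho / rho_t)
            + (1.0 - rho) * math.log((1.0 - rho) / (1.0 - rho_t)))


def cost(p: Dict[str, list], X: List[Vector], beta: float, gamma: float,
         rho: float, eps: float = 1e-8) -> float:
    """Assumed objective: mean squared error + weight decay + KL sparsity penalty."""
    H = [encode(p, x) for x in X]
    mse = sum(sum((a - b) ** 2 for a, b in zip(x, decode(p, h)))
              for x, h in zip(X, H)) / len(X)
    decay = sum(w * w for name in ("W11", "W12", "W21", "W22")
                for row in p[name] for w in row)
    # Assumption: map tanh outputs from (-1, 1) to (0, 1) so the KL term is defined.
    rho_hat = [sum((h[t] + 1.0) / 2.0 for h in H) / len(H) for t in range(len(H[0]))]
    sparsity = sum(kl_divergence(rho, min(max(r, eps), 1.0 - eps)) for r in rho_hat)
    return mse + beta * decay + gamma * sparsity


params = init_params(n_in=99, n_mid=20, u1=5)  # n_mid=20 is a hypothetical width
X = [[0.1] * 99, [-0.2] * 99]                  # placeholder inputs scaled to (-1, 1)
print(len(encode(params, X[0])), cost(params, X, beta=1e-3, gamma=0.1, rho=0.05))
```

Because the decoder also ends in a tanh layer, this sketch presumes input features scaled to the interval (-1, 1). After training, only `encode` would be kept, which corresponds to disconnecting the decoder layers.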

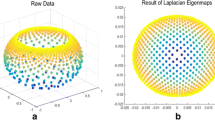

Next, we feed the entire PolSAR image to this network to obtain the hidden representations of all its pixels, which we then use to generate superpixels. This representation has two advantages: (i) it contains feature information from all bands and (ii) its dimensionality is substantially lower than that of the input feature vector. To generate superpixels we use an algorithm similar to simple linear iterative clustering (SLIC) [14]. Instead of an RGB image, the input is the hidden representation of the PolSAR image obtained from the first module of the proposed network. The distance between any two pixels is given by Eq. 2:

where \(D_s = \sqrt{(x_i-x_j)^2+(y_i - y_j)^2}\) and \(D_h = ||\mathbf {h_i}-\mathbf {h_j} ||_2\). Here m is a parameter controlling the relative weight between \(D_s\) and \(D_h\), and s is the size of the search space [14]. \((x_i, y_i)\) and \((x_j, y_j)\) are the positions of the \(i^{th}\) and \(j^{th}\) PolSAR pixels on the Euclidean plane. This distance measure is used for superpixel generation.
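A minimal sketch of such a distance is given below. It assumes the combination used in the SLIC paper [14], \(D = \sqrt{D_h^2 + (m/s)^2 D_s^2}\), with the hidden representation taking the place of the color vector. The exact form used by the authors may differ, and the function name is hypothetical.

```python
import math
from typing import Sequence, Tuple


def slic_distance(pos_i: Tuple[float, float], h_i: Sequence[float],
                  pos_j: Tuple[float, float], h_j: Sequence[float],
                  m: float, s: float) -> float:
    """Combined spatial and feature distance between two pixels.

    Assumed form (after the SLIC paper): sqrt(D_h^2 + (m / s)^2 * D_s^2).
    """
    # D_s: Euclidean distance between the pixel positions.
    d_s = math.hypot(pos_i[0] - pos_j[0], pos_i[1] - pos_j[1])
    # D_h: Euclidean distance between the hidden representations.
    d_h = math.sqrt(sum((a - b) ** 2 for a, b in zip(h_i, h_j)))
    return math.sqrt(d_h ** 2 + (m / s) ** 2 * d_s ** 2)


# Two pixels 5 units apart whose 5-dimensional hidden vectors differ in one entry.
d = slic_distance((0, 0), [0.1, 0.2, 0.0, 0.0, 0.3],
                  (3, 4), [0.1, 0.2, 0.0, 0.0, 0.7], m=10.0, s=20.0)
print(d)
```

A larger m gives more weight to spatial proximity and yields more compact superpixels, while a smaller m lets the superpixels follow boundaries in the feature space more closely.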

The second module of our proposed architecture combines each pixel with its corresponding superpixel information to construct a robust feature vector. Let \(\mathbf {S_j}\) be the \(j^{th}\) superpixel with \(\mathbf {h_i} \in \mathbf {S_j}\), and let \(\mathbf {c_j}\) be the cluster center of \(\mathbf {S_j}\). To extract a robust feature from both pixel and superpixel information, the input \(\mathbf {H_i}\) of the second autoencoder is constructed as \(\mathbf {H_i} = [\mathbf {h_i}; \mathbf {c_j}]\) [7]. Since the dimensionality of the hidden representation \(\mathbf {h_i}\) is low, a single-layer autoencoder is sufficient for an effective reconstruction. Let \(\mathbf {r_i} = f(\mathbf {W_{13}}^T\mathbf {H_i} + \mathbf {b_{13}})\) be the activation value obtained at the hidden layer of the second autoencoder, and let \(\mathbf {H_i^{'}} = f(\mathbf {W_{23}}^T\mathbf {r_i} + \mathbf {b_{23}})\) be the reconstructed input. The cost function for the second autoencoder is given by:

Here \(U_2\) is the size of the feature vector \(\mathbf {r_i}\). Once the training of the second autoencoder is complete, we again disconnect the decoder layer. The output of the second module contains both pixel and superpixel information. Finally, we use a softmax classifier to obtain the predicted probability distribution.
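The construction of the second autoencoder's input, \(\mathbf {H_i} = [\mathbf {h_i}; \mathbf {c_j}]\), can be sketched as follows. The sketch assumes that the cluster center \(\mathbf {c_j}\) is the mean hidden vector of the pixels in superpixel \(\mathbf {S_j}\) and that superpixel membership is available as one integer label per pixel; both the function names and this choice of center are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def superpixel_centers(hidden: Sequence[Sequence[float]],
                       labels: Sequence[int]) -> Dict[int, List[float]]:
    """Mean hidden vector of each superpixel (assumed definition of c_j)."""
    sums: Dict[int, List[float]] = {}
    counts: Dict[int, int] = defaultdict(int)
    for h, label in zip(hidden, labels):
        if label not in sums:
            sums[label] = [0.0] * len(h)
        sums[label] = [acc + v for acc, v in zip(sums[label], h)]
        counts[label] += 1
    return {label: [v / counts[label] for v in total] for label, total in sums.items()}


def build_second_stage_inputs(hidden: Sequence[Sequence[float]],
                              labels: Sequence[int]) -> List[List[float]]:
    """Concatenate each pixel's hidden vector with its superpixel center: [h_i; c_j]."""
    centers = superpixel_centers(hidden, labels)
    return [list(h) + centers[label] for h, label in zip(hidden, labels)]


# Three pixels with 2-dimensional hidden vectors; the first two share a superpixel.
hidden = [[0.0, 0.0], [2.0, 2.0], [4.0, 6.0]]
labels = [0, 0, 1]
print(build_second_stage_inputs(hidden, labels))
# [[0.0, 0.0, 1.0, 1.0], [2.0, 2.0, 1.0, 1.0], [4.0, 6.0, 4.0, 6.0]]
```

With \(U_1=5\) as in the text, each \(\mathbf {H_i}\) built this way would have 10 components.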

3 Experiments

We conducted the following experiments with the proposed model. We first describe the dataset chosen for our experiments. We then analyze the performance of the proposed network for different band combinations and, finally, for different decomposition features. The proposed network architecture was implemented in Python 3.6, and all experiments were executed on a 1.60 GHz machine with 8 GB RAM.

Experiments have been conducted on a dataset of Flevoland [15], an agricultural tract in the Netherlands, whose data was captured by the NASA/Jet Propulsion Laboratory on 15 June, 1991. This dataset has often been viewed as the benchmark dataset for PolSAR applications. The intensities after Pauli decomposition of the dataset have been used to form an RGB image as shown in Fig. 2(a–c). The ground truth of the data set shown in Fig. 2(d) identifies a total of 15 classes of land cover.

Fig. 2. Pauli decomposition of (a) L band, (b) P band and (c) C band Flevoland dataset [15]. (d) Ground truth map of Flevoland dataset
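The Pauli intensities behind such RGB composites can be computed per pixel from the scattering matrix elements, as in the sketch below. It assumes reciprocity (\(S_{HV} = S_{VH}\)) and the commonly used channel assignment of red to \(|S_{HH} - S_{VV}|^2\), green to the cross-polarized term and blue to \(|S_{HH} + S_{VV}|^2\). The channel order actually used for Fig. 2 is not stated in the text, so this mapping and the function names are assumptions.

```python
import math
from typing import Tuple


def pauli_intensities(s_hh: complex, s_hv: complex, s_vv: complex) -> Tuple[float, float, float]:
    """Squared magnitudes of the three Pauli components of one pixel."""
    k1 = (s_hh + s_vv) / math.sqrt(2)  # odd-bounce (surface) scattering
    k2 = (s_hh - s_vv) / math.sqrt(2)  # even-bounce (double-bounce) scattering
    k3 = math.sqrt(2) * s_hv           # cross-polarized (volume-like) scattering
    return abs(k1) ** 2, abs(k2) ** 2, abs(k3) ** 2


def pauli_rgb(s_hh: complex, s_hv: complex, s_vv: complex) -> Tuple[float, float, float]:
    """Assumed channel order: R = |k2|^2, G = |k3|^2, B = |k1|^2 (unnormalized)."""
    p1, p2, p3 = pauli_intensities(s_hh, s_hv, s_vv)
    return p2, p3, p1


# A pixel with S_HH = S_VV and no cross-polarized return is purely odd-bounce,
# so only the blue channel is non-zero under the assumed mapping.
print(pauli_rgb(1 + 0j, 0j, 1 + 0j))
```

For display, the three channels would still need to be scaled to a common range, for example by clipping at a chosen percentile, which is left out here.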

We have evaluated the accuracy of each class for all possible combinations of the data acquired in the three frequency bands. Please note that every overall accuracy reported in this paper is the precision. Figure 3 shows the classification maps obtained by the proposed method and Table 2 lists the classwise accuracies for all possible band combinations. From Table 2 it can be observed that, while the network with only the C band information recognizes Onions and Lucerne with high accuracy, it fails for classes such as Beet or Oats. With the C band the network also fails to recognize Wheat accurately; this lack of information is compensated by the L band. With the P band the network fails to accurately identify Onions and Peas but correctly identifies the majority of Rapeseed and Fruit. It can also be observed that the L band performs better individually than the C or P bands.
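The evaluation protocol can be illustrated with a small sketch that enumerates the seven non-empty combinations of the three bands and computes classwise and overall accuracies from label lists. The classwise accuracy is assumed here to be the fraction of ground-truth pixels of a class that are labeled correctly, and the overall accuracy the fraction of all labeled pixels that are correct. The function names and the toy labels are hypothetical and are not results from the paper.

```python
from collections import Counter
from itertools import combinations
from typing import Dict, Hashable, List, Sequence, Tuple


def band_combinations(bands: Sequence[str] = ("C", "L", "P")) -> List[Tuple[str, ...]]:
    """All non-empty subsets of the frequency bands (7 for three bands)."""
    return [combo for r in range(1, len(bands) + 1) for combo in combinations(bands, r)]


def accuracies(y_true: Sequence[Hashable],
               y_pred: Sequence[Hashable]) -> Tuple[Dict[Hashable, float], float]:
    """Return (classwise accuracy per ground-truth class, overall accuracy)."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("label sequences must be non-empty and of equal length")
    totals = Counter(y_true)
    correct = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    per_class = {cls: correct[cls] / totals[cls] for cls in totals}
    overall = sum(correct.values()) / len(y_true)
    return per_class, overall


print(band_combinations())

# Toy labels for illustration only.
truth = ["Wheat", "Wheat", "Beet", "Oats"]
pred = ["Wheat", "Beet", "Beet", "Oats"]
print(accuracies(truth, pred))
```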

A total of six PolSAR decomposition methods have been applied to the PolSAR data, and their features have been given as input to the proposed network. The significance of each decomposition technique is evident from Table 3. It can be seen that the Krogager and Freeman decompositions fail to provide enough information to recognize Beet and Onions. On the other hand, the Cloude and Huynen decompositions provide sufficient information for Beet and Onions, respectively.

The proposed method for classification of multifrequency PolSAR images is also compared with other methods available in the literature. The overall accuracies are compared in Table 4. ANN [2] and OWN [3] used a small subset of the Flevoland dataset containing 7 classes, whereas Stein-SRC [16] used ground truth with 14 classes. For parity with these results, we calculated the overall accuracy of the proposed method using ground truth with 7, 14 and 15 classes. Table 4 shows that the proposed method outperforms all three methods [2, 3, 16].

4 Conclusion

In this paper a classification network for multifrequency PolSAR data is proposed. The proposed network involves three modules. The first module reduces the dimensionality of the input feature vector, and its output is used to generate superpixels. The second module constructs a robust feature vector using each pixel and its corresponding superpixel information. Finally, the last module performs classification using a softmax classifier. We observe that combining information from multiple frequency bands improves the overall classification accuracy. Validating the proposed network on the Flevoland dataset resulted in 99.59% overall classification accuracy. The experimental results show that the proposed network outperforms the other reported methods available in the literature.

References

1. Chen, K.S., Huang, W.P., Tsay, D.H., Amar, F.: Classification of multifrequency polarimetric SAR imagery using a dynamic learning neural network. IEEE Trans. Geosci. Remote Sens. 34, 814–820 (1996)

2. De, S., Ratha, D., Ratha, D., Bhattacharya, A., Chaudhuri, S.: Tensorization of multifrequency PolSAR data for classification using an autoencoder network. IEEE Geosci. Remote Sens. Lett. 15, 542–546 (2018)

3. Gadhiya, T., Roy, A.K.: Optimized Wishart network for an efficient classification of multifrequency PolSAR data. IEEE Geosci. Remote Sens. Lett. 15, 1720–1724 (2018)

4. Hou, B., Kou, H., Jiao, L.: Classification of polarimetric SAR images using multilayer autoencoders and superpixels. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 9, 3072–3081 (2016)

5. Guo, Y., Jiao, L., Wang, S., Wang, S., Liu, F., Hua, W.: Fuzzy superpixels for polarimetric SAR images classification. IEEE Trans. Fuzzy Syst. 26, 2846–2860 (2018)

6. Hou, B., Yang, C., Ren, B., Jiao, L.: Decomposition-feature-iterative-clustering-based superpixel segmentation for PolSAR image classification. IEEE Geosci. Remote Sens. Lett. 15, 1239–1243 (2018)

7. Hu, Y., Fan, J., Wang, J.: Classification of PolSAR images based on adaptive nonlocal stacked sparse autoencoder. IEEE Geosci. Remote Sens. Lett. 15, 1050–1054 (2018)

8. Freeman, A., Durden, S.L.: A three-component scattering model for polarimetric SAR data. IEEE Trans. Geosci. Remote Sens. 36, 963–973 (1998)

9. Huynen, J.R.: Phenomenological theory of radar targets. Electromagnetic Scattering (1970)

10. Cloude, S.R., Pottier, E.: An entropy based classification scheme for land applications of polarimetric SAR. IEEE Trans. Geosci. Remote Sens. 35, 68–78 (1997)

11. Krogager, E.: New decomposition of the radar target scattering matrix. Electron. Lett. 26, 1525–1527 (1990)

12. Yamaguchi, Y., Moriyama, T., Ishido, M., Yamada, H.: Four-component scattering model for polarimetric SAR image decomposition. IEEE Trans. Geosci. Remote Sens. 43, 1699–1706 (2005)

13. Zhou, Y., Wang, H., Xu, F., Jin, Y.: Polarimetric SAR image classification using deep convolutional neural networks. IEEE Geosci. Remote Sens. Lett. 13, 1935–1939 (2016)

14. Achanta, R., Shaji, A., Smith, K., Lucchi, A., Fua, P., Süsstrunk, S.: SLIC superpixels compared to state-of-the-art superpixel methods. IEEE Trans. Pattern Anal. Mach. Intell. 34, 2274–2282 (2012)

15. Dataset: AIRSAR, NASA 1991. Retrieved from ASF DAAC on 7 December 2018

16. Yang, F., Gao, W., Xu, B., Yang, J.: Multi-frequency polarimetric SAR classification based on Riemannian manifold and simultaneous sparse representation. Remote Sens. 7(7), 8469–8488 (2015)

© 2019 Springer Nature Switzerland AG

Cite this paper

Gadhiya, T., Tangirala, S., Roy, A.K. (2019). Stacked Autoencoder Based Feature Extraction and Superpixel Generation for Multifrequency PolSAR Image Classification. In: Deka, B., Maji, P., Mitra, S., Bhattacharyya, D., Bora, P., Pal, S. (eds) Pattern Recognition and Machine Intelligence. PReMI 2019. Lecture Notes in Computer Science, vol 11942. Springer, Cham. https://doi.org/10.1007/978-3-030-34872-4_37

DOI: https://doi.org/10.1007/978-3-030-34872-4_37

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-34871-7

Online ISBN: 978-3-030-34872-4

eBook Packages: Computer Science (R0)