Abstract

Introduction

The volume of adverse events (AEs) collected, analysed, and reported has been increasing rapidly for over 10 years, largely due to the growth of solicited programmes. The proportion of various forms of solicited case data has evolved over time, with the main relative volume increase coming from Patient Support Programmes. In this study, we sought to examine the impact on safety signal detection of pooling AE report data from solicited sources with data from spontaneous sources, using disproportionality analysis methods.

Methods

Two conditions were explored in which disproportionality scores from hypothetical drugs were evaluated in a simulated safety database. The first condition held occurrence of events constant and varied solicited case volume, while the second condition varied both proportion of occurrence of events and solicited case volume.

Results

In the first setting, where all AE terms have the same probability of occurring with any drug, increasing volumes of solicited cases while keeping occurrence of events constant leads to reduced variability in disproportionality scores, consequently reducing or eliminating identified signals of disproportionate reporting. In the second setting, varying both case volume and reporting rates can mask true safety signals and falsely identify signals where there are none.

Conclusions

This analysis of simulated data suggests that pooling AE data from solicited sources with spontaneous case data may impact the results of disproportionality analyses, masking true safety signals and identifying false positives. Therefore, increased volumes of safety data do not necessarily correlate with improved safety signal detection.

1 Introduction

Postmarketing signal detection on aggregate safety data often employs disproportionality analyses to identify quantitatively interesting data points worthy of further investigation. Disproportionality methods are a series of analytical approaches in which the proportion of occurrence of an event for a given drug is compared with the proportion of occurrence of that event in the broader database [1]. In postmarket safety surveillance, databases comprise spontaneous safety cases reported by patients or healthcare providers (HCPs) to the marketing authorisation holders (MAHs) or health authorities. However, the relative proportion of these spontaneous reports compared with ‘solicited’ reports is decreasing [2]. As defined by the International Council for Harmonisation of Technical Requirements for Pharmaceuticals for Human Use (ICH) in ICH-E2D, solicited reports of suspected adverse reactions are those derived from organised data collection systems, which include clinical trials, registries, postapproval named patient use programmes, other patient support and disease management programmes, surveys of patients or HCPs, or information gathered regarding efficacy or patient compliance [3]. One of the most widespread sources of solicited reports in the postmarketing setting is so-called Patient Support Programmes (PSPs), a generic name given to a variety of programmes that are designed to support patient medication care but are rarely, if ever, designed to collect safety information. In general, PSPs are structured services, solutions or initiatives for patients and/or caregivers to assist patients in managing their disease, medication or behaviours through either gaining access to medication, administration of medication, monitoring or following up on treatment, or providing information related to the medication or disease (e.g. homecare services, disease awareness/management programmes, compliance adherence programmes, financial reimbursement schemes, coaching services). Some PSPs include services with the aim of patient assistance in the form of compensation/reimbursement/access programmes that are solely charitable in design, without intent to collect information relating to the use of the medicinal product. The volume of adverse event (AE) reports collected by MAHs and reported to health authorities has been increasing by 10–20% each year, corresponding to over a 400% increase in a decade [4]. PSPs, among other safety data sources, such as market research programmes (MRPs) and social media, make up a large proportion of this volume increase and thus it is important to consider how these data are best used [2]. This report considers whether it is appropriate to collect and analyse safety data reports with a single approach or whether one should consider a more tailored analysis.

The objective of safety signal detection in pharmacovigilance using disproportionality analysis is to identify a target, referred to as a signal, from a larger group of data points referred to as noise, non-signal, or background. A desirable signal detection system is one that correctly identifies targets while minimising both erroneous identification of targets (false positives) and failures to detect targets (false negatives) [5]. Klein et al. assessed the impact of reports generated by PSPs, which they referred to as ‘precautionary reports’. Results indicated that spurious signals, i.e. false positives, resulted from inclusion of these precautionary reports within signal detection analyses. The authors also identified instances where false results disappeared when the solicited data were removed from the AE database. False negative, or masked, results occurred when solicited data increased the background reporting rate for the drug of interest, thereby raising the threshold for signals of disproportionate reporting (SDRs) to be detected. Klein et al. referred to these two types of error in pooling solicited and spontaneous data as precautionary reporting bias [6].

In this study, authors from TransCelerate Biopharma (see Notes) explore the impact of pooling different types of simulated solicited and spontaneous data on disproportionality scores. We examine the impact of the inclusion of solicited source data under two conditions: a simplified condition and a complex condition. We start with the simplified situation to examine the impact of increased solicited case volume on disproportionality analyses. In this analysis, the proportion of events occurring with hypothetical drugs in a simulated database is held constant. We then move to a more complex condition, varying both the quantities of events and the proportions of occurrence of events with hypothetical drugs. This example replicates a situation in which solicited case data both increase the volume of data and alter the proportion of certain types of reports. To determine whether the results depend on a specific disproportionality score, several statistics, including the reporting odds ratio (ROR), proportional reporting ratio (PRR), empirical Bayes geometric mean (EBGM), and EB05, are included across the two analyses [7, 8].
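For readers less familiar with these scores, the following is a minimal sketch of how the PRR and ROR are commonly computed from a 2 × 2 table of report counts. The function names and the example counts are hypothetical and chosen only for illustration; they are not taken from the study.

```python
def prr(a, b, c, d):
    """Proportional reporting ratio for a 2x2 table of report counts.

    a: drug of interest, event of interest
    b: drug of interest, all other events
    c: all other drugs, event of interest
    d: all other drugs, all other events
    """
    return (a / (a + b)) / (c / (c + d))


def ror(a, b, c, d):
    """Reporting odds ratio for the same 2x2 table."""
    return (a * d) / (b * c)


if __name__ == "__main__":
    # Hypothetical counts, for illustration only.
    a, b, c, d = 30, 970, 200, 18800
    print(f"PRR = {prr(a, b, c, d):.2f}")
    print(f"ROR = {ror(a, b, c, d):.2f}")
```

Both statistics equal 1 when the event is reported in the same proportion for the drug of interest as for all other drugs.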

The two conditions include both types of data—spontaneous and solicited. For this analysis, we assume there is only one source of solicited data (e.g. PSPs, MRPs, social media). For the purpose of the simulations, PSPs served as an illustrative example as PSP activity is the largest component of the increasing numbers of solicited case data. Ultimately, the data used for analyses are simulated and are representative of PSP data only in the sense of volume of data. Other sources of data, such as MRPs, social media, and various subsets of PSP data may result not only in volume increases in data but also stimulated reporting of specific events. Therefore, the reality of safety surveillance is much more complicated, with numerous programmes contributing data of various quantities and qualities. The present study simplifies the situation in order to illustrate complications that can result from a failure to consider the data source.

2 Methods and Results

2.1 Simplified Condition

The simplified condition represents a situation in which solicited programmes result in increased volume of data but do not alter the proportion of events occurring with each drug. That is, the solicited programme results in ‘more of the same’ data. This is consistent with the findings of TransCelerate analyses for many [2]. Here, PSPs are used as an illustrative example of such a solicited programme. We refer to this as ‘simplified’ as strong assumptions are made about the reporting patterns within the spontaneous and solicited conditions. This simulation assumes a spontaneous reporting dataset of 20 drugs that are equally likely to have reports of any one of 1000 types of AEs or Medical Dictionary for Regulatory Activities (MedDRA) Preferred Terms (PTs). This generates 20,000 possible drug–event pairs (DEPs). Each simulation will allow for random variation, such that there will not be an equal number of each DEP, simply that the probability of occurrence is equal during the simulation. Additionally, since the probability of occurrence of an event with any drug is random, any apparent association between ‘drug’ and ‘event’ in the data is purely coincidental.

Throughout these simulations for the spontaneous reporting, the probability of each PT for each drug remained the same, i.e. all equally likely, as did the total number of DEPs, i.e. 100,000 for each simulated dataset. For the solicited reporting simulation, the probability of each DEP remained the same, but the volume of data for one drug, identified as the PSP drug, was increased by 2, 5, 10 and 20 times relative to the number of events simulated for the other (non-PSP) drugs. That is, if there were approximately 1000 non-PSP DEPs, there would be approximately 2000, 5000, 10,000, and 20,000 PSP DEPs. The multiples were selected based on a survey of a subset of MAHs within the TransCelerate membership in order to be representative of actual case-volume multiples.

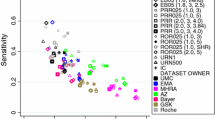

Using these assumptions of equal probability of occurrence of PTs and multipliers, for each of these total volumes of data, 10,000 simulated datasets, each with 100,000 DEPs, were then generated. For each DEP, disproportionality scores were computed. The results are presented in Figs. 1, 2 and 3 as sets of pairs of plots, with the first of each pair showing the impact on the PSP drug, i.e. the drug that has data from both solicited and spontaneous sources (labelled ‘PSP drug’ in the plots), and the second plot showing the impact on any of the other drugs (labelled ‘non-PSP’ in the plots). The horizontal axis of each plot shows the impact of changing the volume of solicited data. The squares on the plots indicate the mean of the 10,000 simulations, and the bar shows the range of results from the minimum to the maximum computed disproportionality score.
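The simplified condition can be sketched in a few lines of code. The version below is an illustration under stated assumptions, not the authors' implementation: it generates a single dataset rather than 10,000, computes only the PRR, and assumes that the solicited reports are added on top of a fixed spontaneous base so that the PSP drug ends up with `multiplier` times the expected volume of any other drug. The function name `simulate_prr_ranges` and its parameters are hypothetical.

```python
import random
from collections import Counter


def simulate_prr_ranges(multiplier, n_drugs=20, n_pts=1000,
                        n_spont=100_000, seed=0):
    """Simulate one dataset; return (min, max) PRR for PSP and non-PSP drugs."""
    rng = random.Random(seed)
    psp_drug = 0  # arbitrary choice of which drug has the PSP
    counts = Counter()

    # Spontaneous reports: every drug-event pair (DEP) is equally likely.
    for _ in range(n_spont):
        counts[(rng.randrange(n_drugs), rng.randrange(n_pts))] += 1

    # Assumption: solicited reports add volume for the PSP drug only,
    # with the same uniform distribution over PTs ("more of the same").
    n_extra = (multiplier - 1) * n_spont // n_drugs
    for _ in range(n_extra):
        counts[(psp_drug, rng.randrange(n_pts))] += 1

    drug_total, pt_total = Counter(), Counter()
    for (drug, pt), n in counts.items():
        drug_total[drug] += n
        pt_total[pt] += n
    total = sum(drug_total.values())

    ranges = {"psp": [float("inf"), float("-inf")],
              "non_psp": [float("inf"), float("-inf")]}
    for drug in range(n_drugs):
        n_other = total - drug_total[drug]
        for pt in range(n_pts):
            a = counts[(drug, pt)]   # reports of this PT for this drug
            c = pt_total[pt] - a     # reports of this PT for other drugs
            if c == 0 or drug_total[drug] == 0:
                continue             # PRR undefined; skip this DEP
            value = (a / drug_total[drug]) / (c / n_other)
            key = "psp" if drug == psp_drug else "non_psp"
            ranges[key][0] = min(ranges[key][0], value)
            ranges[key][1] = max(ranges[key][1], value)
    return {key: tuple(bounds) for key, bounds in ranges.items()}


if __name__ == "__main__":
    for m in (1, 2, 5, 10, 20):
        result = simulate_prr_ranges(m)
        print(m, result["psp"], result["non_psp"])
```

Repeating the call with different seeds and collecting the minimum, maximum, and mean across runs would approximate the summary described above; the multipliers 1, 2, 5, 10 and 20 follow the text.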

Due to the large number of simulations, the mean PRRs were approximately 1.0, as might be expected. What might not be expected is how the range of PRRs varies. For the PSP drug, the range narrowed dramatically as the volume of data for the PSP drug increased from 1 to 20 times. A similar but much less dramatic trend is seen for the other drugs (non-PSP data). To lend interpretation to the result, none of the simulated PRR values exceeded 2, which is often used as an indicator of an SDR. Were this real data for a company conducting surveillance, this could mean that fewer SDRs would be selected for further medical assessment simply through PSP activity inflating the volume of reporting. As these simulations were conducted without imputed adverse drug reactions (ADRs), this reduction of variability would be viewed positively, i.e. reducing false positives. However, in a signal detection setting where data mining is completed to identify DEPs worth further evaluation, suppression of SDRs removes a necessary trigger to identify safety signals. In practice, if disproportionalities were suppressed to a value near 1.0, we would probably have to lower the decision threshold well below 2.0 so SDRs would be generated for assessment.

Similarly, the ranges (minimum to maximum) of EBGM in Fig. 2 follow the same trend as the PRR analysis of PSP data in Fig. 1.

The range of EB05 (the lower limit of the 90% confidence interval of EBGM) values in Fig. 3 also has a similar narrowing trend to that of the PRR and EBGM values. What is unique is that the mean value of EB05 moves towards 1.0, which is the mean value of its respective EBGM. This is not unexpected as the EB05 measure is itself a measure of variability of a posterior distribution. To lend interpretation to the plot, very few EB05 values exceed 1 (a threshold employed by some companies).

2.2 Complex Condition

A motivating example for this simulation is shown in Table 1. We refer to the drug being assessed as drug X, and the AE of interest as AE Y. We arbitrarily set the proportion of spontaneous reports for drug X to be 16%, and 0.5% for AE Y. We assumed a different proportion of reports relating to drug X in the solicited data source. This could be due to social media campaigns for different drugs or a different demographic of the population using social media, and, more specifically, being prepared to respond to a social media campaign [9]. Here, one might imagine an active PSP campaign for drug X resulting in a higher proportion of solicited reports relating to drug X. In addition, for each PT we allowed for different reporting rates in the spontaneous and solicited data. This could be due to the lack of establishment of a causal relationship in solicited reports, embarrassment about reporting certain events, possible exaggeration of events or indeed a difference in recall, depending on how the HCP questions the patient [6, 9]. This would lead to different reported odds for the AE of interest in spontaneous and solicited reporting.

The example has been constructed so that for each of the spontaneous and solicited simulated data sources separately, both the ROR and the PRR are exactly 1. However, when these two information sources are combined, we see an ROR of 1.3. Note that this effect has been introduced solely by the pooling, not due to random variation. This phenomenon of seeing an effect in the pooled data that was not present or was in the opposite direction in the separate data sources is called Simpson’s paradox [10, 11].
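The mechanism can be reproduced with a small calculation. The sketch below uses the 16% drug X share and 0.5% AE Y rate stated for the spontaneous source; the number of reports per source (100,000), the 64% drug X share, and the 1% AE Y rate in the solicited source are illustrative assumptions and are not the values of Table 1, so the pooled ROR it prints (roughly 1.39) differs from the 1.3 of the motivating example.

```python
def ror(a, b, c, d):
    """Reporting odds ratio for a 2x2 table (a, b, c, d)."""
    return (a * d) / (b * c)


def null_table(n_reports, drug_share, ae_rate):
    """Expected 2x2 counts when drug X and other drugs share one AE Y rate."""
    n_x = n_reports * drug_share
    n_other = n_reports - n_x
    a = n_x * ae_rate        # drug X, AE Y
    c = n_other * ae_rate    # other drugs, AE Y
    return (a, n_x - a, c, n_other - c)


# Spontaneous source: shares taken from the text.
spontaneous = null_table(100_000, drug_share=0.16, ae_rate=0.005)
# Solicited source: hypothetical values chosen for illustration.
solicited = null_table(100_000, drug_share=0.64, ae_rate=0.01)
# Pooling simply adds the two tables cell by cell.
pooled = tuple(s + t for s, t in zip(spontaneous, solicited))

if __name__ == "__main__":
    print(f"spontaneous ROR = {ror(*spontaneous):.2f}")
    print(f"solicited ROR   = {ror(*solicited):.2f}")
    print(f"pooled ROR      = {ror(*pooled):.2f}")
```

Within each source the ROR is 1 by construction; the pooled value exceeds 1 only because drug X is over-represented in the source with the higher AE Y reporting rate.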

We now generalise the previous example to explore a range of scenarios that are summarised in Table 2, and present the results using heat maps. The overall number of AE reports for spontaneous and solicited reporting remains the same as in the motivating example, as does the total number of spontaneous reports for hypothetical drug X. In addition, the reporting odds for AE Y for ‘other drugs’ are fixed at 0.5% for spontaneous reports and 1% for solicited reports. These values have been fixed solely in order to limit the amount of output.

In each heat map, the number of solicited reports for drug X varies from 4 to 64% of the total solicited reports, and this is represented by the ratio of solicited to spontaneous reporting rates for drug X ranging from 0.25 to 4 on the horizontal axis. Also in each heat map, the reporting odds for AE Y in the solicited reports vary from 0.25 × to 8 × that for the spontaneous reports on the vertical axis. Therefore, for example, when the reporting odds for AE Y in the spontaneous reports is 1%, the equivalent reporting odds for the solicited reports ranges from 0.25 to 4%. This quantity is referred to as the ratio of solicited to spontaneous reporting rates.

The heat map in Fig. 4 corresponds to the situation where drug X has no effect on AE Y, i.e. for solicited and spontaneous reports separately, the reporting odds for drug X are the same as for other drugs. Each point on the grid corresponds to a pair of 2 × 2 tables, similar to the motivating example. For each of these pairs of tables, when the spontaneous reports are treated separately from the solicited reports, each ROR = 1. We can see that there is a region in the heat map where the expected pooled odds ratio is more than 1.5, which carries a markedly increased risk of false positive SDRs. Note that this effect is entirely due to pooling and is not driven by random variation (which is not included in this analysis).

The heat map in Fig. 5 corresponds to the situation where drug X causally increases the incidence of AE Y, i.e. ADRs are expected. Here, we set the ROR to be 3 in both the spontaneous and solicited sources. Again, the impact of drug X on AE Y is entirely consistent between spontaneous and solicited sources. In the heat map, we see the effect of pooling the reports. Of note are the blue areas where the pooled RORs are noticeably lower than 3. Since, in this scenario, drug X has a causal effect on the AE rates, there is particular concern that pooling data may mask a true signal, especially when random variation is also included.
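The grids underlying such heat maps can be approximated with expected counts alone. The sketch below is a hedged illustration rather than a reproduction of Figs. 4 and 5: it assumes equal numbers of spontaneous and solicited reports (100,000 each, a hypothetical value), takes the 16% drug X share and 0.5% AE Y rate for spontaneous reports from the text, and applies the same within-source ROR (1 or 3) in both sources. The function names are hypothetical.

```python
def expected_table(n_reports, drug_share, other_rate, within_ror):
    """Expected 2x2 counts for one source with a given within-source ROR."""
    n_x = n_reports * drug_share
    n_other = n_reports - n_x
    other_odds = other_rate / (1 - other_rate)
    x_odds = within_ror * other_odds       # odds of AE Y for drug X
    x_rate = x_odds / (1 + x_odds)
    a = n_x * x_rate                       # drug X, AE Y
    c = n_other * other_rate               # other drugs, AE Y
    return (a, n_x - a, c, n_other - c)


def pooled_ror(share_ratio, rate_ratio, within_ror,
               n_per_source=100_000, spont_share=0.16, spont_rate=0.005):
    """ROR after pooling spontaneous and solicited expected counts.

    share_ratio: solicited / spontaneous proportion of reports for drug X
    rate_ratio:  solicited / spontaneous AE Y reporting rate (other drugs)
    """
    spont = expected_table(n_per_source, spont_share, spont_rate, within_ror)
    solic = expected_table(n_per_source, spont_share * share_ratio,
                           spont_rate * rate_ratio, within_ror)
    a, b, c, d = (s + t for s, t in zip(spont, solic))
    return (a * d) / (b * c)


if __name__ == "__main__":
    share_ratios = [0.25, 0.5, 1, 2, 4]
    rate_ratios = [0.25, 0.5, 1, 2, 4, 8]
    for within_ror in (1, 3):
        print(f"within-source ROR = {within_ror}")
        for rate_ratio in reversed(rate_ratios):
            row = " ".join(f"{pooled_ror(s, rate_ratio, within_ror):5.2f}"
                           for s in share_ratios)
            print(f"  rate ratio {rate_ratio:>4}: {row}")
```

When both ratios equal 1, the pooled ROR equals the within-source ROR; departures from that value elsewhere on the grid are produced by pooling alone, since no random variation is simulated.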

3 Discussion

We have explored both simple and more complex conditions in order to investigate the impact of altering volumes and proportions of AE reports in simulated conditions. In the simple condition, we first considered scenarios where the PTs were randomly assigned to drug without any prespecified relationship between occurrences of drug and occurrences of PTs. We demonstrated via simulation that the effect of including data from solicited sources is to reduce the range of the disproportionality statistics, particularly for the drug that has the increased volume of data. It remains to be seen whether this scenario, in which there are no drug-related effects, can be extrapolated to a pharmacovigilance surveillance system, where eliminating or reducing the number of identified SDRs could result in suppressed triggers for medical review, i.e. missed safety signals.

We then considered a setting where for each PT we allowed for different reporting rates in the spontaneous and solicited data. This analysis showed that the act of pooling the data could mask true safety signals (increased false negative results) and could also identify SDRs where there are no safety signals (increased false positive results). Thus, this work supports the contention of Klein et al. that PSP data might be detrimental to safety signal detection: when both volumes and proportions of AEs vary between spontaneous and solicited sources, the combination of both sources can result in Simpson’s paradox and obfuscate data mining results [12].

Signal detection by MAHs and health authorities through the use of disproportionality analysis is a staple of the pharmacovigilance ecosystem. Disproportionalities are a set of analytical tools utilised for screening large amounts of spontaneous report data. Of course, these techniques are not the only tools for signal identification. They are leveraged together with other surveillance activities, such as literature surveillance, individual case safety review, and other periodic aggregate data reviews, and with medical and scientific expertise, to identify true safety signals from among the high volumes of incoming safety data.

The challenge facing the screening of large amounts of AE data is the ever-increasing volume of AE reports. AE reports from solicited cases have been steadily increasing in volume, which is having an unknown impact on current approaches to safety data mining. One assumption driving the regulatory requirements for safety reporting has been that increasing amounts of data could only serve to improve surveillance, and therefore serve the public welfare. To the contrary, the results of our simulations, in conjunction with additional analyses conducted by the TransCelerate team, suggest that a thoughtful approach to the role and application of disproportionality analyses is needed. Rather than applying these techniques exclusively, it is important to consider the role disproportionality analysis plays, the corresponding strengths and weaknesses of these approaches, and the interaction of these results with the other surveillance activities conducted within the pharmacovigilance ecosystem [2].

A simple solution to the problem of falsely flagging and masking safety signals from combining solicited and spontaneous data is to state that the two data sources should be analysed separately. In fact, authors have suggested that subsetting data prior to computing disproportionalities is beneficial [13]. However, this is a solution much easier stated than implemented as most regulators do not routinely enable sponsors to submit data with data fields that would accurately identify various solicited sources of data and differentiate that data from other sources. Pharmaceutical manufacturers have only recently started to routinely identify the various sources of solicited data within their own corporate safety databases.

Even in situations where solicited and spontaneous sources were separately analysed, there is limited evidence for the contribution of solicited sources to the characterisation of a product’s safety profile. Jokinen et al. evaluated the identification of safety label updates, proportions of MedDRA PTs, system organ classes (SOCs), and high-level group terms (HLGTs) reported, as well as vigiGrade (case completeness) scores for PSPs, patient assistance programmes (PAPs), MRPs, and social media [2]. That study found that PAPs, MRPs, and social media provided no new information over and above traditional data sources and methods. Case completeness results suggested that many individual case reports from certain sources (e.g. market research and social media) are missing key data to permit evaluation of the cases. Nonetheless, these incomplete cases are routinely included in disproportionality analyses, which, as demonstrated in this study, could create misleading results. The impact of misleading results in data mining, combined with cases lacking critical information, results in resources being dedicated to efforts unlikely to generate valuable safety insights and potentially distracts from surveillance activities that may actually be valuable. Ultimately, a robust safety surveillance system should consider the limitations of any data sources and analyses applied to those data.

4 Conclusion

Taken together, the results of our analyses suggest that pooling data sources can have unintended consequences on the ability to identify SDRs. Certainly, conclusions from the current set of analyses should be tempered by limitations of the methods and analyses. First, both the simple and complex conditions depicted in the analyses fail to address the full complexity of the data that become part of corporate and regulatory databases used for safety surveillance. These are merely stripped-down attempts to understand the potential outcomes that may result from the increase in volume of data resulting from the proliferation of PSPs. Second, there are a number of open questions regarding the impact of signals within these simulated distributions. No attempt was made to mimic actual distributions of spontaneous report data with a variety of imputed signals within the present study. Inclusion of this variability would have an unknown impact on results. Future work should further investigate whether imputed signals in the distribution of data alter the results of these analyses or leverage an actual database with identified safety signals as the basis for examination of the impact of inclusion and exclusion of various sources of data on the ability to detect signals. Finally, and most importantly, the true impact of these results needs to be considered within the entirety of a safety surveillance system. Signal detection applied to large safety databases is but a single screening tool employed within an overall pharmacovigilance effort.

This analysis points to a more overarching problem with how safety data are viewed. Generally, all data are entered into the same safety database and are reported to regulators according to prescribed regulations, regardless of whether the case safety reports are from market research efforts, PSPs, social media, or clinical trials. This problem is likely to be compounded in the future as additional sources of data, e.g. mobile devices, electronic health records, or insurance claims, become part of the wealth of safety information that may be leveraged to ensure public health. Consideration should be given to the source of information, the relative value of that information, and where information should be leveraged within the pharmacovigilance ecosystem. Continued research is needed to further elucidate the appropriate uses, and appropriate analyses, of various forms of safety data.

Notes

TransCelerate Biopharma Inc. is a non-profit organisation that works across the biopharmaceutical research and development community to improve the health of people around the world. TransCelerate’s mission is to collaborate across the global biopharmaceutical research and development community to identify, prioritise, design and facilitate implementation of solutions designed to drive the efficient, effective and high-quality delivery of new medicines.

References

1. Candore G, Juhlin K, Manlik K, Thakrar B, Quarcoo N, Seabroke S, et al. Comparison of statistical signal detection methods within and across spontaneous reporting databases. Drug Saf. 2015;38(6):577–87.

2. Jokinen J, Bertin D, Donzanti B, Hombrey J, Simmons V, Li H, et al. Industry assessment of the contribution of patient support programs, market research programs, and social media to patient safety. Ther Innov Regul Sci. 2019 (accepted).

3. The International Council for Harmonisation of Technical Requirements for Pharmaceuticals for Human Use (ICH). Post-Approval Safety Data Management: Definitions and Standards for Expedited Reporting E2D 2003. https://www.ich.org/fileadmin/Public_Web_Site/ICH_Products/Guidelines/Efficacy/E2D/Step4/E2D_Guideline.pdf. Accessed 28 Nov 2018.

4. US Food and Drug Administration. FDA Adverse Event Reporting System Public Dashboard. 2018. https://fis.fda.gov/sense/app/d10be6bb-494e-4cd2-82e4-0135608ddc13/sheet/7a47a261-d58b-4203-a8aa-6d3021737452/state/analysis. Accessed 28 Nov 2018.

5. Wisniewski AF, Bate A, Bousquet C, Brueckner A, Candore G, Juhlin K, et al. Good Signal Detection Practices: evidence from IMI PROTECT. Drug Saf. 2016;39(6):469–90.

6. Klein K, Scholl JHG, De Bruin ML, van Puijenbroek EP, Leufkens HGM, Stolk P. When more is less: an exploratory study of the precautionary reporting bias and its impact on safety signal detection. Clin Pharmacol Ther. 2018;103(2):296–303.

7. European Medicines Agency. Guideline on good pharmacovigilance practices (GVP) Module IX Addendum I – Methodological Aspects of Signal Detection from Spontaneous Reports of Suspected Adverse Reactions 2016. http://www.ema.europa.eu/docs/en_GB/document_library/Regulatory_and_procedural_guideline/2016/08/WC500211715.pdf. Accessed 04 Aug 2016.

8. Evans SJ, Waller PC, Davis S. Use of proportional reporting ratios (PRRs) for signal generation from spontaneous adverse drug reaction reports. Pharmacoepidemiol Drug Saf. 2001;10(6):483–6.

9. Sloane R, Osanlou O, Lewis D, Bollegala D, Maskell S, Pirmohamed M. Social media and pharmacovigilance: a review of the opportunities and challenges. Br J Clin Pharmacol. 2015;80(4):910–20.

10. Tu YK, Gunnell D, Gilthorpe MS. Simpson’s paradox, Lord’s paradox, and suppression effects are the same phenomenon: the reversal paradox. Emerg Themes Epidemiol. 2008;5:2.

11. Chuang-Stein C, Beltangady M. Reporting cumulative proportion of subjects with an adverse event based on data from multiple studies. Pharm Stat. 2011;10(1):3–7.

12. Arah OA. The role of causal reasoning in understanding Simpson’s paradox, Lord’s paradox, and the suppression effect: covariate selection in the analysis of observational studies. Emerg Themes Epidemiol. 2008;5:5.

13. Seabroke S, Candore G, Juhlin K, Quarcoo N, Wisniewski A, Arani R, et al. Performance of stratified and subgrouped disproportionality analyses in spontaneous databases. Drug Saf. 2016;39(4):355–64.

Acknowledgements

The authors would like to acknowledge the efforts and thoughtful comments provided by the TransCelerate Value of Safety Information Data Sources workstream.

Ethics declarations

Funding

No sources of funding were used to assist in the preparation of this study.

Conflict of interest

Jeremy Jokinen is an employee and shareholder of Abbvie, Inc.; Thomas S. Hilzinger is an employee of PricewaterhouseCoopers, LLP; Rosalind J. Walley is an employee and shareholder of UCB Pharma; Peter Verdru is an employee and shareholder of UCB BioPharma SPRL; and Michael W. Colopy is an employee and shareholder of UCB Biosciences.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution-NonCommercial 4.0 International License (http://creativecommons.org/licenses/by-nc/4.0/), which permits any noncommercial use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Jokinen, J.D., Walley, R.J., Colopy, M.W. et al. Pooling Different Safety Data Sources: Impact of Combining Solicited and Spontaneous Reports on Signal Detection In Pharmacovigilance. Drug Saf 42, 1191–1198 (2019). https://doi.org/10.1007/s40264-019-00843-0

DOI: https://doi.org/10.1007/s40264-019-00843-0