Abstract

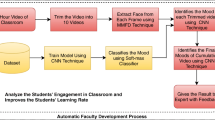

Effective teaching strategies improve students’ learning rate within academic learning time. Inquiry-based instruction is one of the effective teaching strategies used in classrooms, but such strategies have not been adapted to other learning environments such as intelligent tutoring systems, including auto tutors. In this paper, we propose an automatic inquiry-based instruction teaching strategy, i.e., inquiry intervention using students’ affective states. The proposed model contains two modules. The first module consists of the proposed framework for unobtrusively predicting students’ multi-modal affective states (teacher-centric attentive and in-attentive states) using facial expressions, hand gestures and body postures. The second module consists of the proposed automated inquiry-based instruction teaching strategy, which compares the learning outcomes with and without inquiry intervention using affective state transitions for both an individual and a group of students. The proposed system is tested on four different learning environments, namely e-learning, flipped classroom, classroom and webinar environments. Unobtrusive recognition of students’ affective states is performed using deep learning architectures. After student-independent tenfold cross-validation, we obtained a students’ affective state classification accuracy of 77% and an object localization accuracy of 81% using students’ faces, hand gestures and body postures. The overall experimental results demonstrate a positive correlation (\(r=0.74\)) between students’ affective states and their performance. The proposed inquiry intervention improved the students’ performance, as there is a decrease of 65%, 43%, 43%, and 53% in overall in-attentive affective state instances using the inquiry interventions in e-learning, flipped classroom, classroom and webinar environments, respectively.


Notes

More details on semi-automatic annotation are given in the “Appendix.”

The word “teacher” used in the entire manuscript includes the faculty member or instructor.

Definitions of all the affective states are given in the “Appendix.”

More details on the affective state classification and localization technique are given in the “Appendix.”

Sample questions used in the inquiry intervention module are given in the “Appendix.”

References

Ahlfeldt, S., Mehta, S., Sellnow, T.: Measurement and analysis of student engagement in university classes where varying levels of PBL methods of instruction are in use. Higher Educ. Res. Dev. 24(1), 5–20 (2005)

Alameda-Pineda, X., Staiano, J., Subramanian, R., Batrinca, L., Ricci, E., Lepri, B., Lanz, O., Sebe, N.: Salsa: a novel dataset for multimodal group behavior analysis. IEEE Trans. Pattern Anal. Mach. Intell. 38(8), 1707–1720 (2016)

Almeda, M.V.Q., Baker, R.S., Corbett, A.: Help avoidance: when students should seek help, and the consequences of failing to do so. In: Meeting of the Cognitive Science Society (2017)

Arroyo, I., Cooper, D.G., Burleson, W., Woolf, B.P., Muldner, K., Christopherson, R.: Emotion sensors go to school. AIED 200, 17–24 (2009)

Arroyo, I., Woolf, B.P., Burelson, W., Muldner, K., Rai, D., Tai, M.: A multimedia adaptive tutoring system for mathematics that addresses cognition, metacognition and affect. Int. J. Artif. Intell. Educ. 24(4), 387–426 (2014)

Ashwin, T., Guddeti, R.M.R.: Unobtrusive students’ engagement analysis in computer science laboratory using deep learning techniques. In: 2018 IEEE 18th International Conference on Advanced Learning Technologies (ICALT). IEEE, pp. 436–440 (2018)

Ashwin, T., Guddeti, R.M.R.: Automatic detection of students’ affective states in classroom environment using hybrid convolutional neural networks. Educ. Inf. Technol. 2018, 1–29 (2019a)

Ashwin, T., Guddeti, R.M.R.: Unobtrusive behavioral analysis of students in classroom environment using non-verbal cues. IEEE Access 7, 150693–150709 (2019b)

Ashwin, T., Jose, J., Raghu, G., Reddy, G.R.M.: An e-learning system with multifacial emotion recognition using supervised machine learning. In: 2015 IEEE Seventh International Conference on Technology for Education (T4E). IEEE, pp. 23–26 (2015)

Balaam, M., Fitzpatrick, G., Good, J., Luckin, R.: Exploring affective technologies for the classroom with the subtle stone. In: Proceedings of the SIGCHI Conference on Human Factors in Computing Systems. ACM, pp. 1623–1632 (2010)

Bodily, R., Verbert, K.: Review of research on student-facing learning analytics dashboards and educational recommender systems. IEEE Trans. Learn. Technol. 10(4), 405–418 (2017)

Bonwell, C.C., Eison, J.A.: Active learning: creating excitement in the classroom. 1991 ASHE-ERIC Higher Education Reports. ERIC (1991)

Booth, B.M., Ali, A.M., Narayanan, S.S., Bennett, I., Farag, A.A.: Toward active and unobtrusive engagement assessment of distance learners. In: 2017 Seventh International Conference on Affective Computing and Intelligent Interaction (ACII). IEEE, pp. 470–476 (2017)

Bosch, N., D’mello, S.K., Ocumpaugh, J., Baker, R.S., Shute, V.: Using video to automatically detect learner affect in computer-enabled classrooms. ACM Trans. Interact. Intell. Syst. 6(2), 17 (2016)

Brown, B.W., Saks, D.H.: Measuring the effects of instructional time on student learning: evidence from the beginning teacher evaluation study. Am. J. Educ. 94(4), 480–500 (1986)

Burnik, U., Zaletelj, J., Košir, A.: Video-based learners’ observed attention estimates for lecture learning gain evaluation. Multimed. Tools Appl. 77, 16903–16926 (2017)

Calvo, R.A., D’Mello, S.: Affect detection: an interdisciplinary review of models, methods, and their applications. IEEE Trans. Affect. Comput. 1(1), 18–37 (2010)

Castellanos, J., Haya, P., Urquiza-Fuentes, J.: A novel group engagement score for virtual learning environments. IEEE Trans. Learn. Technol. 99, 1 (2017)

Chi, M., VanLehn, K., Litman, D., Jordan, P.: Empirically evaluating the application of reinforcement learning to the induction of effective and adaptive pedagogical strategies. User Model. User Adapt. Int. 21(1–2), 137–180 (2011)

Coffrin, C., Corrin, L., de Barba, P., Kennedy, G.: Visualizing patterns of student engagement and performance in moocs. In: Proceedings of the Fourth International Conference on Learning Analytics and Knowledge. ACM, pp. 83–92 (2014)

Conati, C.: Probabilistic assessment of user’s emotions in educational games. Appl. Artif. Intell. 16(7–8), 555–575 (2002)

Dhall, A., Goecke, R., Gedeon, T.: Automatic group happiness intensity analysis. IEEE Trans. Affect. Comput. 6(1), 13–26 (2015)

Dhamija, S.: Learning based visual engagement and self-efficacy. In: 2017 Seventh International Conference on Affective Computing and Intelligent Interaction (ACII). IEEE, pp. 581–585 (2017)

Dhamija, S., Boult, T.E.: Automated mood-aware engagement prediction. In: 2017 Seventh International Conference on Affective Computing and Intelligent Interaction (ACII). IEEE, pp. 1–8 (2017)

D’mello, S., Graesser, A.: Autotutor and affective autotutor: learning by talking with cognitively and emotionally intelligent computers that talk back. ACM Trans. Interact. Intell. Syst. 2(4), 23 (2012)

D’Mello, S., Picard, R.W., Graesser, A.: Toward an affect-sensitive autotutor. IEEE Intell. Syst. 22(4), 53 (2007)

D’Mello, S.K., Lehman, B., Person, N.: Monitoring affect states during effortful problem solving activities. Int. J. Artif. Intell. Educ. 20(4), 361–389 (2010)

D’Mello, S.K., Mills, C., Bixler, R., Bosch, N.: Zone out no more: mitigating mind wandering during computerized reading. In: EDM (2017)

D’Mello, S.: Monitoring affective trajectories during complex learning. In: Seel, N.M. (ed.) Encyclopedia of the Sciences of Learning. Springer, Boston, pp. 2325–2328 (2012)

Edwards, S.: Active learning in the middle grades. Middle Sch. J. 46(5), 26–32 (2015)

Ekman, P.: An argument for basic emotions. Cognit. Emot. 6(3–4), 169–200 (1992)

Girshick, R.: Fast r-cnn. In: Proceedings of the IEEE international conference on computer vision, pp. 1440–1448 (2015)

Girshick, R., Donahue, J., Darrell, T., Malik, J.: Rich feature hierarchies for accurate object detection and semantic segmentation. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 580–587 (2014)

Grafsgaard, J.F., Wiggins, J.B., Boyer, K.E., Wiebe, E.N., Lester, J.C.: Embodied affect in tutorial dialogue: student gesture and posture. In: International Conference on Artificial Intelligence in Education. Springer, pp. 1–10 (2013)

Grafsgaard, J.F., Wiggins, J.B., Vail, A.K., Boyer, K.E., Wiebe, E.N., Lester, J.C.: The additive value of multimodal features for predicting engagement, frustration, and learning during tutoring. In: Proceedings of the 16th International Conference on Multimodal Interaction. ACM, pp. 42–49 (2014)

Grann, J., Bushway, D.: Competency map: visualizing student learning to promote student success. In: Proceedings of the Fourth International Conference on Learning Analytics and Knowledge. ACM, pp. 168–172 (2014)

Gupta, A., D’Cunha, A., Awasthi, K., Balasubramanian, V.: Daisee: Towards user engagement recognition in the wild (2016). arXiv preprint arXiv:1609.01885

Gupta, S.K., Ashwin, T.S., Guddeti, R.M.R.: Students’ affective content analysis in smart classroom environment using deep learning techniques. Multimed. Tools Appl. 78(18), 25321–25348 (2019). https://doi.org/10.1007/s11042-019-7651-z

He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 770–778 (2016)

Holmes, M., Latham, A., Crockett, K., O’Shea, J.D.: Near real-time comprehension classification with artificial neural networks: decoding e-learner non-verbal behavior. IEEE Trans. Learn. Technol. 11(1), 5–12 (2018)

Hrastinski, S.: Asynchronous and synchronous e-learning. Educ. Q. 31(4), 51–55 (2008)

Hu, M., Li, H.: Student engagement in online learning: a review. In: 2017 International Symposium on Educational Technology (ISET). IEEE, pp. 39–43 (2017)

Huang, X., Dhall, A., Goecke, R., Pietikäinen, M., Zhao, G.: Multimodal framework for analyzing the affect of a group of people. IEEE Trans. Multimed. 20(10), 2706–2721 (2018)

Hutt, S., Mills, C., Bosch, N., Krasich, K., Brockmole, J., D’Mello, S.: Out of the fr-eye-ing pan: towards gaze-based models of attention during learning with technology in the classroom. In: Proceedings of the 25th Conference on User Modeling, Adaptation and Personalization. ACM, pp. 94–103 (2017)

Kim, Y., Jeong, S., Ji, Y., Lee, S., Kwon, K.H., Jeon, J.W.: Smartphone response system using twitter to enable effective interaction and improve engagement in large classrooms. IEEE Trans. Educ. 58(2), 98–103 (2015)

Klein, R., Celik, T.: The wits intelligent teaching system: detecting student engagement during lectures using convolutional neural networks. In: 2017 IEEE International Conference on Image Processing (ICIP). IEEE, pp. 2856–2860 (2017)

Krizhevsky, A., Sutskever, I., Hinton, G.E.: ImageNet classification with deep convolutional neural networks. In: Pereira, F., Burges, C.J.C., Bottou, L., Weinberger, K.Q. (eds.) Proceedings of the 25th International Conference on Neural Information Processing Systems - Volume 1 (NIPS’12), vol. 1. Curran Associates Inc., Lake Tahoe, pp. 1097–1105 (2012)

Ku, K.Y., Ho, I.T., Hau, K.T., Lai, E.C.: Integrating direct and inquiry-based instruction in the teaching of critical thinking: an intervention study. Instr. Sci. 42(2), 251–269 (2014)

Kulik, J.A., Fletcher, J.: Effectiveness of intelligent tutoring systems: a meta-analytic review. Rev. Educ. Res. 86(1), 42–78 (2016)

Lallé, S., Conati, C., Carenini, G.: Predicting confusion in information visualization from eye tracking and interaction data. In: IJCAI, pp. 2529–2535 (2016)

Liu, M., Calvo, R.A., Pardo, A., Martin, A.: Measuring and visualizing students’ behavioral engagement in writing activities. IEEE Trans. Learn. Technol. 8, 215–224 (2015)

Liu, W., Anguelov, D., Erhan, D., Szegedy, C., Reed, S., Fu, C.Y., Berg, A.C.: Ssd: Single shot multibox detector. In: European Conference on Computer Vision. Springer, pp. 21–37 (2016)

Maneeratana, K., Tiamsa-Ad, U., Ruengsomboon, T., Chawalitrujiwong, A., Aksomsiri, P., Asawapithulsert, K.: Class-wide course feedback methods by student engagement program. In: 2017 IEEE 6th International Conference on Teaching, Assessment, and Learning for Engineering (TALE). IEEE, pp. 393–398 (2017)

Mills, C., Wu, J., D’Mello, S.: Being sad is not always bad: the influence of affect on expository text comprehension. Discourse Process. 56(2), 99–116 (2019)

Monkaresi, H., Bosch, N., Calvo, R.A., D’Mello, S.K.: Automated detection of engagement using video-based estimation of facial expressions and heart rate. IEEE Trans. Affect. Comput. 8(1), 15–28 (2017)

Moore, S., Stamper, J.: Decision support for an adversarial game environment using automatic hint generation. In: International Conference on Intelligent Tutoring Systems. Springer, pp. 82–88 (2019)

Patwardhan, A.S., Knapp, G.M.: Affect intensity estimation using multiple modalities. In: The Twenty-Seventh International Flairs Conference (2014)

Picard, R.W.: Affective Computing, vol. 252. MIT Press, Cambridge (1997)

Psaltis, A., Apostolakis, K.C., Dimitropoulos, K., Daras, P.: Multimodal student engagement recognition in prosocial games. In: IEEE Transactions on Computational Intelligence and AI in Games (2017)

Radeta, M., Maiocchi, M.: Towards automatic and unobtrusive recognition of primary-process emotions in body postures. In: 2013 Humaine Association Conference on Affective Computing and Intelligent Interaction (ACII). IEEE, pp. 695–700 (2013)

Rajendran, R., Iyer, S., Murthy, S.: Personalized affective feedback to address students frustration in its. In: IEEE Transactions on Learning Technologies (2018)

Redmon, J., Divvala, S., Girshick, R., Farhadi, A.: You only look once: unified, real-time object detection. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 779–788 (2016)

Ren, S., He, K., Girshick, R., Sun, J.: Faster r-cnn: Towards real-time object detection with region proposal networks. In: Advances in Neural Information Processing Systems, pp. 91–99 (2015)

Rowe, J., Mott, B., McQuiggan, S., Robison, J., Lee, S., Lester, J.: Crystal island: a narrative-centered learning environment for eighth grade microbiology. In: Workshop on Intelligent Educational Games at the 14th International Conference on Artificial Intelligence in Education. Brighton, UK, pp. 11–20 (2009)

Russell, J.A.: A circumplex model of affect. J. Pers. Soc. Psychol. 39(6), 1161 (1980)

Sekachev, B., Manovich, N., Zhavoronkov, A.: Computer vision annotation tool: a universal approach to data annotation (2019). https://github.com/opencv/cvat

Sidney, K.D., Craig, S.D., Gholson, B., Franklin, S., Picard, R., Graesser, A.C.: Integrating affect sensors in an intelligent tutoring system. In: Affective Interactions: The Computer in the Affective Loop Workshop, pp. 7–13 (2005)

Silfver, E., Jacobsson, M., Arnell, L., Bertilsdotter-Rosqvist, H., Härgestam, M., Sjöberg, M., Widding, U.: Classroom bodies: affect, body language, and discourse when schoolchildren encounter national tests in mathematics. Gend. Educ. 1, 1–15 (2018)

Silva, P., Costa, E., de Araújo, J.R.: An adaptive approach to provide feedback for students in programming problem solving. In: International Conference on Intelligent Tutoring Systems. Springer, pp. 14–23 (2019)

Simonyan, K., Zisserman, A.: Very deep convolutional networks for large-scale image recognition (2014). arXiv preprint arXiv:1409.1556

Sinatra, G.M., Heddy, B.C., Lombardi, D.: The challenges of defining and measuring student engagement in science. Educ. Psychol. 50(1), 1–13 (2015)

Singh, A., Karanam, S., Kumar, D.: Constructive learning for human-robot interaction. IEEE Potentials 32, 13–19 (2013)

Slater, S., Joksimović, S., Kovanovic, V., Baker, R.S., Gasevic, D.: Tools for educational data mining: a review. J. Educ. Behav. Stat. 42(1), 85–106 (2017)

Stewart, A., Bosch, N., Chen, H., Donnelly, P., D’Mello, S.: Face forward: Detecting mind wandering from video during narrative film comprehension. In: International Conference on Artificial Intelligence in Education. Springer, pp. 359–370 (2017)

Sun, B., Wei, Q., Li, L., Xu, Q., He, J., Yu, L.: Lstm for dynamic emotion and group emotion recognition in the wild. In: Proceedings of the 18th ACM International Conference on Multimodal Interaction. ACM, pp. 451–457 (2016)

Sun, M.C., Hsu, S.H., Yang, M.C., Chien, J.H.: Context-aware cascade attention-based rnn for video emotion recognition. In: 2018 First Asian Conference on Affective Computing and Intelligent Interaction (ACII Asia). IEEE, pp. 1–6 (2018)

Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J., Wojna, Z.: Rethinking the inception architecture for computer vision. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 2818–2826 (2016)

Szegedy, C., Ioffe, S., Vanhoucke, V., Alemi, A.A.: Inception-v4, inception-resnet and the impact of residual connections on learning. In: AAAI, pp. 4278–4284 (2017)

Thomas, C., Jayagopi, D.B.: Predicting student engagement in classrooms using facial behavioral cues. In: Proceedings of the 1st ACM SIGCHI International Workshop on Multimodal Interaction for Education. ACM, pp. 33–40 (2017)

Tiam-Lee, T.J., Sumi, K.: Analysis and prediction of student emotions while doing programming exercises. In: International Conference on Intelligent Tutoring Systems. Springer, pp. 24–33 (2019)

Tucker, B.: The flipped classroom. Educ. Next 12(1), 82–83 (2012)

Van der Sluis, F., Ginn, J., Van der Zee, T.: Explaining student behavior at scale: the influence of video complexity on student dwelling time. In: Proceedings of the Third ACM Conference on Learning@ Scale. ACM, pp. 51–60 (2016)

Walker, E., Ogan, A., Aleven, V., Jones, C.: Two approaches for providing adaptive support for discussion in an ill-defined domain. Intelligent Tutoring Systems for Ill-Defined Domains: Assessment and Feedback in Ill-Defined Domains 1 (2008)

Wang, S., Ji, Q.: Video affective content analysis: a survey of state-of-the-art methods. IEEE Trans. Affect. Comput. 6(4), 410–430 (2015)

Watson, D., Tellegen, A.: Toward a consensual structure of mood. Psychol. Bull. 98(2), 219 (1985)

Whitehill, J., Serpell, Z., Lin, Y.C., Foster, A., Movellan, J.R.: The faces of engagement: automatic recognition of student engagement from facial expressions. IEEE Trans. Affect. Comput. 5(1), 86–98 (2014)

Woolf, B., Burleson, W., Arroyo, I., Dragon, T., Cooper, D., Picard, R.: Affect-aware tutors: recognising and responding to student affect. Int. J. Learn. Technol. 4(3–4), 129–164 (2009)

Xia, X., Liu, J., Yang, T., Jiang, D., Han, W., Sahli, H.: Video emotion recognition using hand-crafted and deep learning features. In: 2018 First Asian Conference on Affective Computing and Intelligent Interaction (ACII Asia). IEEE, pp. 1–6 (2018)

Yousuf, B., Conlan, O.: Supporting student engagement through explorable visual narratives. IEEE Trans. Learn. Technol. 11, 307 (2017)

Yu, Y.C.: Teaching with a dual-channel classroom feedback system in the digital classroom environment. IEEE Trans. Learn. Technol. 10(3), 391–402 (2017)

Yun, W.H., Lee, D., Park, C., Kim, J., Kim, J.: Automatic recognition of children engagement from facial video using convolutional neural networks. IEEE Trans. Affect. Comput. 6, 209 (2018)

Zaletelj, J., Košir, A.: Predicting students’ attention in the classroom from kinect facial and body features. EURASIP J. Image Video Process. 2017(1), 80 (2017)

Acknowledgements

The authors wish to thank undergraduates, postgraduates and doctoral research students of Department of Information Technology, National Institute of Technology Karnataka Surathkal, Mangalore, India, for their voluntary help in creating the affective database for both e-learning and classroom environments.

Ethics declarations

Ethical approval

The experimental procedure, participants and the course contents used in the experiment are approved by the Institutional Ethics Committee (IEC) of NITK Surathkal, Mangalore, India. The participants were also informed that they had the right to quit the experiment at any time. The video recordings of the subjects were included in the experiment only after they gave written consent for the use of their videos for this research experiment. All the subjects also agreed to the use of their facial expressions, hand gestures and body postures for all the processes involved in the completion of the entire project.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

1. Affective State Definitions

We used the definitions mentioned in (D’Mello et al. 2010; Whitehill et al. 2014; D’Mello et al. 2007).

Boredom: uninterested in the current problem.

Confusion: poor comprehension of material, attempts to resolve erroneous belief.

Disgust: annoyance and/or irritation with the material and/or their abilities.

Fear: feelings of panic and/or extreme feelings of worry.

Frustration: difficulty with the material and an inability to fully grasp the material.

Happiness: satisfaction with performance, feelings of pleasure about the material.

Neutral: displays no visible affect, at a state of homeostasis.

Sadness: feelings of melancholy, beyond negative self-efficacy.

Surprise: genuinely does not expect an outcome or feedback.

Delight: a high degree of satisfaction.

Sleepy: extremely not interested and in a mental state of sleep.

Engaged: a state of interest that results from involvement in an activity.

2. Inquiry Intervention Module

These are the sample questions asked in the inquiry intervention module.

(a) Examples of questions to stimulate critical thinking.

Q: What point do you think Schachter and Singer are making in their proposed third interpretation [312a]?

This illustrates a question that asks students for clarification.

Q: What are the notable similarities and/or differences between the items on your list and those provided by D A Norman’s Emotion and Design?

This question challenges the students to compare and contrast ideas provided by two sources.

Q: What are the possible underlying assumptions behind Shneiderman’s criteria?

This question challenges the students to demonstrate the critical skill of identifying assumptions made by an author.

Q: What alternative assumptions might an HCI Analyst make?

This question further probes students’ understanding of assumptions by asking them to identify alternative assumptions that might be made.

Q: Do you personally agree with Shneiderman’s criteria and what types of evidence can you offer to support your position?

These two critical thinking questions ask students to both take a personal position and to provide relevant information or evidence to support their decision.

(b) Examples of questions to stimulate creative thinking.

Q: How many of the heuristics stated in Jakob Nielsen’s ten heuristics can you list that are the most important and will receive attention in a heuristic evaluation?

This question challenges students to come up with as many different ideas as they can and elicits the type of creative thinking process that has been described in the literature as fluency.

Q: If Shneiderman wanted to identify Eight Golden Rules of Interface Design instead of only five, what three additional possibilities would you suggest he add to his list?

This question, requiring students to embellish or expand upon ideas, elicits a type of creative thinking process that has been described in the literature as elaboration.

Q: What novel, unique, or unusual types of course activities and assignments do you think are most likely to inspire today’s college and university students?

This type of question illustrates one approach to stimulate the creative thinking skill of originality.

(c) Examples of questions to stimulate curiosity.

Q: Who is Don Norman and why might people be interested in his perspective on this topic?

Q: What was Jakob Nielsen like as a student?

The questions asked do not follow the same pattern as these examples for all the subjects, but the underlying concepts for framing the questions remain the same.

3. Duplications removal during training phase dataset annotation

The image frames obtained from classroom data with spontaneous expressions are collected at the rate of 60 fps, so two successive frames may have more than 80% similarity. To avoid this, we considered image frames with a gap of 5 frames. For multi-person posed expressions, we selected 2 to 5 image frames with peak emotion intensity where all the students are present in that single image frame. If the expression or pose in those image frames is similar (more than 80%), then the redundant image frames are discarded. Thus, we avoided duplications. We did not remove duplications during the real-time affective content analysis.
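The frame selection described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the source does not state how the 80% similarity is measured, so the mean-absolute-difference metric in `frame_similarity`, the reading of "a gap of 5 frames" as taking every fifth frame, and both function names are assumptions.

```python
import numpy as np


def frame_similarity(a, b):
    """Similarity in [0, 1] for two uint8 frames.

    Assumed metric: 1 minus the mean absolute pixel difference.
    The paper does not specify which similarity measure was used.
    """
    a = a.astype(np.float64) / 255.0
    b = b.astype(np.float64) / 255.0
    return 1.0 - float(np.mean(np.abs(a - b)))


def select_frames(frames, gap=5, threshold=0.80):
    """Return indices of frames kept for annotation.

    Step 1: sample every `gap`-th frame (assumed meaning of "gap of 5").
    Step 2: drop a sampled frame if it is more than `threshold` similar
            to the most recently kept frame.
    """
    kept = []
    for idx in range(0, len(frames), gap):
        if kept and frame_similarity(frames[kept[-1]], frames[idx]) > threshold:
            continue  # redundant frame, discard
        kept.append(idx)
    return kept


# Toy clip: 10 black frames followed by 10 white frames.
clip = [np.zeros((4, 4), dtype=np.uint8)] * 10 + [np.full((4, 4), 255, dtype=np.uint8)] * 10
kept_indices = select_frames(clip)
```

Comparing each candidate only against the last kept frame keeps the pass linear in the number of sampled frames; comparing against all kept frames would be stricter but quadratic.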

4. Affective State Classification and Localization Technique

Inception-V3 (Szegedy et al. 2016) and YOLOv2 (Redmon et al. 2016) are the base architectures for the affective state classification and localization, respectively. We modified the existing architecture to perform better in real time for students’ affective state classification and localization. The details are as follows. The proposed architecture is divided into four major parts. The first part is the stem, which contains the convolutional and pooling layers up to the factorization of the convolutional layers. The second part contains the factorization into smaller convolutions using filter concatenation. The third part contains the auxiliary classifiers, and the last part contains the fully connected layers and the softmax classifier. The entire proposed architecture consists of 80 layers with stride 2 and 6 pooling layers, which are used for an independent evaluation over each activation map and for size reduction. The last pooling layer is connected to two fully connected layers, which are in turn connected to the softmax layer.

The training phase starts with backpropagation, using the recursive chain rule to compute the gradients for all inputs, parameters and intermediates. The entire process of backpropagation is stored in a graph structure. The forward pass computes the result of an operation and stores the values needed for the gradient, whereas the backward pass computes the gradient of the loss function w.r.t. the inputs.
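To make the forward/backward split concrete, here is a minimal sketch of a two-node computational graph for f(a, b, c) = (a + b) * c. The `Add` and `Multiply` classes are hypothetical names chosen for illustration and are not part of the proposed architecture; they only show how a forward pass caches values and a backward pass applies the chain rule.

```python
class Add:
    """Graph node computing x + y."""

    def forward(self, x, y):
        return x + y

    def backward(self, grad_out):
        # d(x + y)/dx = d(x + y)/dy = 1, so the upstream gradient passes through.
        return grad_out, grad_out


class Multiply:
    """Graph node computing x * y."""

    def forward(self, x, y):
        # Cache the inputs: the backward pass needs them for the local gradients.
        self.x, self.y = x, y
        return x * y

    def backward(self, grad_out):
        # Chain rule: upstream gradient times local gradient.
        return grad_out * self.y, grad_out * self.x


add, mul = Add(), Multiply()
a, b, c = 2.0, -3.0, 4.0

# Forward pass through the graph.
s = add.forward(a, b)
f = mul.forward(s, c)

# Backward pass in reverse order, starting from df/df = 1.
grad_s, grad_c = mul.backward(1.0)
grad_a, grad_b = add.backward(grad_s)
```

Here `grad_a` and `grad_b` both equal c and `grad_c` equals a + b, which matches differentiating (a + b) * c by hand.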

Equation 2 shows the loss function, computed using multinomial logistic regression, where \(y_i\) is the label of the ith image and \(x_i\) is the ith image frame. Using these, the loss for the ith image, \(L_i\), is calculated. The mean training loss over the entire training data, \(L_t\), is defined as the total data loss given in Eq. 2.

$$\begin{aligned} L_{t}&= \frac{1}{N}\sum _{i=1}^{N}L_{i}\\ \mathrm{where}, \; L_{i}&= -\log \left( \frac{e^{s_{y_{i}}}}{\sum _{j}e^{s_{j}}}\right) \end{aligned}\tag{2}$$

Here \(s_{j}\) is the score for class j and \(s_{y_{i}}\) is the score of the correct class of the ith image.

Since the data are used for analyzing multiple classes in a single image frame (dense labeling), we used an activation function that neither saturates for positive and negative values nor dies for negative values. Hence, Leaky ReLU (Rectified Linear Unit) is used as the activation function (Eq. 3), where x is an input column vector containing all pixel data of the image.

$$\begin{aligned} f\left( x \right) = \max (\alpha x,x) \; \mathrm{where} \; \alpha = 0.01 \end{aligned}\tag{3}$$

Xavier initialization (Eq. 4, where \(n\_in\) and \(n\_out\) are the numbers of input neurons of the current and next layers, and r generates zero-mean random values) is used for weight initialization. Batch normalization is also used along with hyperparameter optimization. Minibatch stochastic gradient descent is used in a loop that performs four major steps. Step 1: data are divided into N samples. Step 2: forward propagate to get the loss. Step 3: calculate the gradients using backpropagation. Step 4: update the parameters from the calculated gradients.

$$\begin{aligned} W=\mathrm{random.r}(n\_in,n\_out)/\sqrt{n\_in} \end{aligned}\tag{4}$$

Parameter updates are performed using RMSProp, for which the learning rate is a hyperparameter. Regularization is performed using dropout. A Monte Carlo approximation is used in dropout: several forward passes with different dropout masks are performed, and the final prediction is the average of all predictions. A numeric gradient is used for the gradient check.
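The loss (Eq. 2), the Leaky ReLU activation (Eq. 3), the Xavier initialization (Eq. 4) and the numeric gradient check can be sketched together in NumPy. This is an illustrative sketch under assumptions, not the authors' training code: the function names, the toy layer sizes, and the choice of a standard normal generator for the zero-mean random term are all assumed.

```python
import numpy as np


def softmax_loss(scores, labels):
    """Mean multinomial logistic loss (Eq. 2) and its gradient w.r.t. the scores."""
    shifted = scores - scores.max(axis=1, keepdims=True)  # avoids overflow in exp
    probs = np.exp(shifted)
    probs /= probs.sum(axis=1, keepdims=True)
    n = scores.shape[0]
    loss = -np.log(probs[np.arange(n), labels]).mean()
    grad = probs.copy()
    grad[np.arange(n), labels] -= 1.0  # softmax minus one-hot label
    return loss, grad / n


def leaky_relu(x, alpha=0.01):
    """Leaky ReLU (Eq. 3): max(alpha * x, x)."""
    return np.maximum(alpha * x, x)


def xavier_init(n_in, n_out, rng):
    """Xavier initialization (Eq. 4); zero-mean Gaussian draw is an assumption."""
    return rng.standard_normal((n_in, n_out)) / np.sqrt(n_in)


def numeric_gradient(f, x, eps=1e-5):
    """Centered finite-difference gradient of a scalar function f at x."""
    grad = np.zeros_like(x)
    for idx in np.ndindex(x.shape):
        old = x[idx]
        x[idx] = old + eps
        plus = f(x)
        x[idx] = old - eps
        minus = f(x)
        x[idx] = old  # restore the original entry
        grad[idx] = (plus - minus) / (2 * eps)
    return grad


rng = np.random.default_rng(0)
W = xavier_init(8, 3, rng)                 # toy layer: 8 inputs, 3 classes
X = rng.standard_normal((5, 8))            # 5 toy samples
y = np.array([0, 2, 1, 1, 0])              # toy labels
scores = leaky_relu(X) @ W

loss, analytic = softmax_loss(scores, y)
numeric = numeric_gradient(lambda s: softmax_loss(s, y)[0], scores.copy())
max_error = np.abs(analytic - numeric).max()  # gradient check: should be tiny
```

A small `max_error` indicates that the analytic gradient of Eq. 2 agrees with the numeric one, which is the purpose of the gradient check mentioned above.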

Group Engagement Score

Generally, the data collected from the e-learning environment contain a single student in a single image frame, but the students’ spontaneous classroom data can have a different affective state for each student present in a single image frame. Hence, we used feature fusion to calculate the group engagement score. The multi-modal feature fusion vector \(V_f\) for any pixel \(p_i\) and the normalized prediction vector \(N_{P_i}\) use the normalized predicted probability distribution \(N_{P_i,a}\) of class a obtained with the softmax function (Eq. 5). Here, the multi-modality is intra-image: the features of different students, with their facial expressions, hand gestures and body postures present within that image frame, are considered.

where W is the temporary weight matrix used to learn the features. The training generally converges in \(T=6000\) epochs. The final collective average affective state score \(A_{S_i}\) is given by Eq. 6.

Data augmentation

Data augmentation increased the training data size tenfold. The following data augmentation techniques were performed on our datasets.

- channel_shift_range: Random channel shifts of the image.
- zca_whitening: Applies ZCA whitening to the image.
- rotation_range: Random rotation of the image within a degree range.
- width_shift_range: Random horizontal shifts of the image by a fraction of the total width.
- height_shift_range: Random vertical shifts of the image by a fraction of the total height.
- shear_range: Shear intensity of the image, where the shear angle is in the counter-clockwise direction in radians.
- zoom_range: Random zoom of the image, where the lower value is 1-zoom_range and the upper value is 1+zoom_range.
- fill_mode: Points outside the boundaries of the input are filled according to the given mode, which is one of constant, nearest, reflect or wrap.
- horizontal_flip: Randomly flip the inputs horizontally.

Table 12 shows the details of the different data augmentations performed on our dataset.
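As a rough illustration of how these options fit together, the sketch below collects them in a plain Python dictionary. Every value is a hypothetical placeholder, since the actual settings are reported in Table 12 of the paper and are not reproduced here. The option names coincide with keyword arguments of the Keras `ImageDataGenerator` class, so such a dictionary could be unpacked into that constructor if Keras is the tool in use, which the text does not state. The helpers `zoom_bounds` and `horizontal_flip` are illustrative names.

```python
import numpy as np

# Hypothetical placeholder values; the paper's real settings are in its Table 12.
augmentation_config = {
    "channel_shift_range": 10.0,
    "zca_whitening": False,
    "rotation_range": 15,        # degrees
    "width_shift_range": 0.1,    # fraction of total width
    "height_shift_range": 0.1,   # fraction of total height
    "shear_range": 0.1,
    "zoom_range": 0.2,
    "fill_mode": "nearest",      # one of constant, nearest, reflect, wrap
    "horizontal_flip": True,
}


def zoom_bounds(zoom_range):
    """Lower and upper zoom factors: (1 - zoom_range, 1 + zoom_range)."""
    return 1.0 - zoom_range, 1.0 + zoom_range


def horizontal_flip(image):
    """Flip an image array of shape (height, width[, channels]) left to right."""
    return image[:, ::-1]


lower, upper = zoom_bounds(augmentation_config["zoom_range"])
flipped = horizontal_flip(np.arange(6).reshape(2, 3))
```

Keeping the settings in one dictionary makes it easy to log the exact augmentation used for each training run.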

5. Student Engagement Level Analysis using its Graphical Representation

A sample snapshot of a student’s engagement level for a mini-concept of 15 min duration is shown in Fig. 12. There is a drop in the engagement score of the student (Attentive state to In-Attentive state) in the 10 to 12.5 min range. Initially, we observed a similar drop after 5 to 6 min of mini-concept video completion for more than 30% of the students in the e-learning environment. After introducing InIvs, the engagement drop is reduced from 30% to 7%.

The distribution of attentive state and in-attentive state instances for a sample 5 min duration of a mini-concept (the same concept is taught in all four learning environments) is shown in Fig. 13. Though the number of students is significantly more in the classroom environment, we can observe that the median is almost the same.

6. Semi-automatic Annotation Process

The semi-automatic annotation process speeds up annotation. Initially, annotations are performed manually. Once sufficient annotated image frames are obtained for training, we used the semi-automatic annotation process (Sekachev et al. 2019) to make annotation faster. Here, we used deep learning architectures for object localization and classification, where the deep learning architecture predicts the bounding box and the affective state. The annotator manually checks the predictions and corrects the bounding boxes or the classified affective state when they are not correct. For example, if the bounding box predicted by the deep learning architecture is not tight, the annotator adjusts it instead of drawing the bounding boxes from scratch for all the modalities present in that image frame.

Cite this article

Ashwin, T.S., Guddeti, R.M.R. Impact of inquiry interventions on students in e-learning and classroom environments using affective computing framework. User Model User-Adap Inter 30, 759–801 (2020). https://doi.org/10.1007/s11257-019-09254-3

DOI: https://doi.org/10.1007/s11257-019-09254-3