Abstract

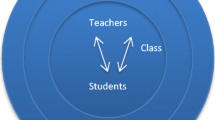

This research contributes to the ongoing debate about differences in teachers’ performance. We introduce a new methodology that combines insights from production frontier analysis and impact evaluation, using data envelopment analysis (DEA) to identify, within each school, a treatment defined by high- versus low-quality teachers and so assess their performance. We use a unique database of primary schools in Spain that, for every school, supplies information on two 4th-grade classrooms to which students and teachers were randomly assigned. We find considerable differences in teachers’ efficiency across schools, with significant effects on students’ achievement. In line with previous findings, neither teacher experience nor academic training explains teachers’ efficiency. In contrast, being a female teacher, having worked five or more years in the same school and having a smaller class size positively affect teachers’ performance.

Notes

For the sake of simplicity, the model is described for two groups. With K groups, the extension is straightforward: one group is taken as the reference and K−1 differences are calculated.

As the number of students in each group is limited and \(\gamma _{ik}\) represents an average, we could also say that, under randomization, the expected value of the ratio of the average unobserved characteristics of students in the two classes of the same school is equal to one: \(E\;\left( {\frac{{\gamma _{i1}}}{{\gamma _{i2}}}} \right) = 1\).

The use of ratios instead of differences is necessary to isolate the difference in teachers’ performance. Taking \(\gamma _{i1} = \gamma _{i2} = \gamma _i\), the difference \(u_{i1} - u_{i2} = (\gamma _{i1} \cdot \tau _i \cdot \omega _{i1}) - (\gamma _{i2} \cdot \tau _i \cdot \omega _{i2}) = (\omega _{i1} - \omega _{i2})\gamma _i\tau _i\) still contains the term \(\gamma _i\tau _i\), which is not the same for every school in the sample and therefore again confounds the difference in teachers’ performance. For example, consider two schools A and B whose teachers have identical efficiency levels, \(\omega _{AT} = \omega _{BT} = 0.945\) and \(\omega _{AC} = \omega _{BC} = 0.90\). Then \(u_{i1}/u_{i2} = 1.05\) in both schools, regardless of the values of \(\gamma _i\) and \(\tau _i\). By contrast, a comparison based on \(u_{i1} - u_{i2}\) could be severely biased, depending on the values of \(\gamma _i\) and \(\tau _i\) in each school. Although \((\omega _{i1} - \omega _{i2}) = 0.045\) in both schools, given \(\gamma _A = 0.9\) and \(\tau _A = 0.9\) for school A and \(\gamma _B = 0.7\) and \(\tau _B = 0.7\) for school B, we obtain \(u_{A1} - u_{A2} = 0.045 \times 0.9 \times 0.9 = 0.03645\) and \(u_{B1} - u_{B2} = 0.045 \times 0.7 \times 0.7 = 0.02205\). These differences are biased downwards and give a misleading comparison of the teachers in the two schools.
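
The arithmetic in the note above can be checked with a short sketch. The variable names (`schools`, `omega_t`, `omega_c`, `results`) are illustrative labels rather than anything from the paper, and the sketch assumes, as the note does, that \(\gamma _i\) and \(\tau _i\) are common to both classes of a school. The numbers are those of the example.

```python
# Worked check of the ratio-versus-difference example in the note above.
# All names are illustrative; the values are taken from the note's example.
schools = {
    "A": {"gamma": 0.9, "tau": 0.9},
    "B": {"gamma": 0.7, "tau": 0.7},
}
omega_t, omega_c = 0.945, 0.90  # teacher efficiencies, identical in both schools

results = {}
for name, s in schools.items():
    common = s["gamma"] * s["tau"]  # school-specific term shared by both classes
    u_t = common * omega_t          # observed efficiency, first classroom
    u_c = common * omega_c          # observed efficiency, second classroom
    results[name] = {"ratio": u_t / u_c, "difference": u_t - u_c}

for name, r in results.items():
    print(f"School {name}: ratio = {r['ratio']:.4f}, "
          f"difference = {r['difference']:.5f}")
```

The ratio cancels the school-specific term and is the same in both schools, whereas the difference shrinks with \(\gamma _i\tau _i\).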

Note that, for consistency with the definitions in Eq. (2), the efficiency measure used in this paper, \(u_{ik}\), is the inverse of the factor \(\varphi _{ik}\) by which all output quantities could be increased to reach the estimated production frontier at the given input level.
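
To illustrate the relation \(u_{ik} = 1/\varphi _{ik}\), the sketch below uses a deliberately simplified setting: one input, one output and constant returns to scale, where the output-oriented expansion factor has a closed form. This setting is an assumption made purely for illustration; a model with several inputs and outputs would require solving a linear programme for each unit. The function name and the data are hypothetical.

```python
def output_expansion_factors(inputs, outputs):
    """Return phi for each unit: the factor by which its output could be
    scaled up to reach the frontier, in the one-input, one-output,
    constant-returns case (frontier = best observed output/input ratio)."""
    best_ratio = max(y / x for x, y in zip(inputs, outputs))
    return [best_ratio * x / y for x, y in zip(inputs, outputs)]

# Hypothetical data: one input and one output per classroom.
inputs = [10.0, 10.0, 8.0]
outputs = [500.0, 450.0, 320.0]

phi = output_expansion_factors(inputs, outputs)  # each phi is at least 1
u = [1.0 / p for p in phi]                       # efficiency lies in (0, 1]

for p, eff in zip(phi, u):
    print(f"phi = {p:.4f}, u = {eff:.4f}")
```

A unit on the frontier has \(\varphi = 1\) and hence \(u = 1\); the further a unit lies below the frontier, the larger \(\varphi\) and the smaller \(u\).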

Note that when both efficiency indexes are exactly equal, \(\hat u_{iT} = \hat u_{iC}\), the decision about which group is ‘treated’ and which is ‘control’ is made at random. In our empirical example, this happened in 4.26% of cases.
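
The labelling rule described in this note can be sketched as follows. The sketch assumes that the classroom with the higher estimated efficiency is the one labelled ‘treated’; the function name `assign_groups` and its arguments are hypothetical.

```python
import random

def assign_groups(u_1, u_2, rng=random):
    """Label the two classrooms of one school as 'treated' (higher estimated
    efficiency, by assumption) and 'control'; break exact ties at random."""
    if u_1 > u_2:
        return {"treated": 1, "control": 2}
    if u_2 > u_1:
        return {"treated": 2, "control": 1}
    treated = rng.choice([1, 2])  # exact tie: pick the treated classroom at random
    return {"treated": treated, "control": 3 - treated}

# Usage with a seeded generator so that tie-breaking is reproducible.
rng = random.Random(0)
print(assign_groups(0.95, 0.90, rng))
print(assign_groups(0.88, 0.88, rng))
```

Passing a seeded `random.Random` instance keeps the random tie-breaking reproducible across runs.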

EGD stands for Evaluación General de Diagnóstico, its name in Spanish. A detailed description of this database, including the sample design and the variables included, can be found in INEE (2010).

As a referee pointed out, it is worth noting, to be precise, that ‘surnames alphabetical order’, ‘balance between girls and boys’ and ‘pursuing heterogeneity among students’ are not selective criteria, but neither are they purely random.

This index was calculated by EGD analysts through a factor analysis considering four components: highest educational attainment of parents; highest professional status of parents; number of books in the household and level of domestic resources.

This index was computed through a factor analysis of the teachers’ responses to four questions related to the scarcity or lack of: educational materials, computers for teaching, instructional support staff and other support staff. The higher the index, the better the quality of the school’s resources.

Selected variables include: ‘gender’, which takes the value one when the teacher is female; ‘graduated’, which takes the value one when the teacher holds a bachelor’s diploma; ‘less10experience’, which takes the value one when the teacher has less than ten years of teaching experience; ‘more30experience’, which takes the value one when the teacher has more than thirty years of teaching experience; ‘less5seniority’, which takes the value one when the teacher has been working in the school for less than five years; and ‘tutor’, which takes the value one when the teacher has taught the evaluated classroom during the last two academic years, i.e. third and fourth grades, and zero otherwise (only the current fourth grade).
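
As an illustration of how these indicators could be coded, the sketch below maps a raw teacher record to the 0/1 variables listed above. The input field names (`sex`, `has_bachelor`, `years_teaching`, `years_in_school`, `taught_class_in_third_grade`) are hypothetical and are not the names used in the EGD database.

```python
def teacher_dummies(teacher):
    """Map a raw teacher record to the 0/1 indicators described in the note.
    The keys of `teacher` are hypothetical field names."""
    return {
        "gender": int(teacher["sex"] == "female"),
        "graduated": int(teacher["has_bachelor"]),
        "less10experience": int(teacher["years_teaching"] < 10),
        "more30experience": int(teacher["years_teaching"] > 30),
        "less5seniority": int(teacher["years_in_school"] < 5),
        # 1 if the teacher also taught this classroom in third grade
        "tutor": int(teacher["taught_class_in_third_grade"]),
    }

# Hypothetical record for one teacher.
example = {
    "sex": "female",
    "has_bachelor": False,
    "years_teaching": 12,
    "years_in_school": 3,
    "taught_class_in_third_grade": True,
}
dummies = teacher_dummies(example)
print(dummies)
```

Teachers with between ten and thirty years of experience have both experience indicators equal to zero and so form the omitted reference category.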

Selected variables include: ‘public’, which takes the value one when the school’s ownership is public; ‘rural’, which takes the value one when the school is located in a town with fewer than 10,000 inhabitants; ‘big city’, which takes the value one when the school is located in a city with more than 500,000 inhabitants; ‘female-principal’, which takes the value one when the principal is female; and ‘less5experience-principal’, which takes the value one when the school principal has less than 5 years of experience.

As shown in Table 2, the average results in the reading and mathematics tests in the 422 analysed classrooms are 508.0 and 506.6, with standard deviations of 42.4 and 41.9, respectively.

Note that this impact is very close to the impact measured through the differences in outputs. This reinforces the point that, although differences in inputs help to compare the teachers and identify the better one within each school, these differences are not statistically significant, so differences in efficiency essentially measure differences in outputs.

This association between teachers’ seniority and efficiency should be interpreted cautiously, as it is only significant at the 10% level.

International evidence suggests that, in systems where this type of entrance examination exists, the score that teachers obtain is positively related to their effectiveness in terms of student outcomes (Clotfelter et al. 2007).

On average, classrooms in our sample have 24 students with a standard deviation of 2.77.

See Chingos (2013) for a detailed review of class size literature based on experiments or quasi-experiments.

It is not unusual for some students to leave or join the school during the academic year for reasons such as changes in parents’ jobs, residence or family structure.

References

Aaronson D, Barrow L, Sander W (2007) Teachers and student achievement in the Chicago public high schools. J Labor Econ 25:95–135

Akerhielm K (1995) Does class size matter? Econ Educ Rev 14:229–241

Angrist JD, Lavy V (1999) Using Maimonides’ rule to estimate the effect of class size on scholastic achievement. Q J Econ 114:533–575

Angrist JD, Lavy V, Leder-Luis J, Shany A (2017) Maimonides rule redux. NBER wp23486. National Bureau of Economic Research

Banker RD, Charnes A, Cooper WW (1984) Some models for estimating technical and scale inefficiencies in data envelopment analysis. Manage Sci 30:1078–1092

Barro RJ, Lee JW (1996) International measures of schooling years and schooling quality. Am Econ Rev 86:218–223

Bietenbeck J (2014) Teaching practices and cognitive skills. Labour Econ 30:143–153

Boozer M, Rouse C (2001) Intraschool variation in class size: patterns and implications. J Urban Econ 50:163–189

Charnes A, Cooper WW, Rhodes E (1978) Measuring the efficiency of decision making units. Eur J Oper Res 2:429–444

Charnes A, Cooper WW, Rhodes E (1981) Evaluating program and managerial efficiency: an application of data envelopment analysis to program follow through. Manage Sci 27:668–697

Chetty R, Friedman JN, Rockoff JE (2011) The long-term impacts of teachers: teacher value-added and student outcomes in adulthood. NBER wp17699. National Bureau of Economic Research

Chingos MM (2013) Class size and student outcomes: research and policy implications. J Policy Anal Manag 32:411–438

Chudgar A, Sankar V (2008) The relationship between teacher gender and student achievement: evidence from five Indian states. Compare 38:627–642

Clotfelter CT, Ladd HF, Vigdor JL (2006) Teacher-student matching and the assessment of teacher effectiveness. J Hum Resour 41:778–820

Clotfelter CT, Ladd HF, Vigdor JL (2007) Teacher credentials and student achievement: longitudinal analysis with student fixed effects. Econ Educ Rev 26:673–682

Coleman JS, Campbell EQ, Hobson CJ, McPartland J, Mood AM, Weinfeld FD, York R (1966) Equality of educational opportunity. Washington DC

Cooper ST, Cohn E (1997) Estimation of a frontier production function for the South Carolina educational process. Econ Educ Rev 16:313–327

Cordero JM, Cristobal V, Santín D (2017) Causal inference on education policies: a survey of empirical studies using PISA, TIMSS and PIRLS. J Econ Surv. https://doi.org/10.1111/joes.12217

Cordero JM, Santín D, Sicilia G (2015) Testing the accuracy of DEA estimates under endogeneity through a Monte Carlo simulation. Eur J Oper Res 244:511–518

Dee TS (2005) A teacher like me: does race, ethnicity, or gender matter? Am Econ Rev 95(2):158–165

Dee TS (2007) Teachers and the gender gaps in student achievement. J Hum Resour 42(3):528–554

Dee TS, Wyckoff J (2015) Incentives, selection, and teacher performance: evidence from IMPACT. J Policy Anal Manag 34:267–297

De la Fuente A (2011) Human capital and productivity. Nordic Econ Policy Rev 2:103–132

De Witte K, Rogge N (2011) Accounting for exogenous influences in performance evaluations of teachers. Econ Educ Rev 30:641–653

De Witte K, Van Klaveren C (2014) How are teachers teaching? A nonparametric approach. Educ Econ 22:3–23

Escardíbul JO, Mora T (2013) Teacher gender and student performance in mathematics. Evidence from Catalonia (Spain). J Educ Train Stud 1:39–46

Gordon RJ, Kane TJ, Staiger D (2006) Identifying effective teachers using performance on the job. Brookings Institution, Washington DC

Hanushek EA (1979) Conceptual and empirical issues in the estimation of educational production functions. J Hum Resour 14:351–388

Hanushek EA (1997) Assessing the effects of school resources on student performance: An update. Educ Eval Policy Anal 19:141–164

Hanushek EA, Kimko DD (2000) Schooling, labor-force quality, and the growth of nations. Am Econ Rev 90:1184–1208

Hanushek EA (2003) The failure of input-based schooling policies. Econ J 113:64–98

Hanushek EA, Rivkin SG (2006) Teacher quality. In: Hanushek EA, Welch F (eds) Handbook of the economics of education, vol 2. North-Holland, Amsterdam

Hanushek EA, Rivkin SG (2010) Generalizations about using value-added measures of teacher quality. Am Econ Rev 100:267–271

Hanushek EA, Rivkin SG (2012) The distribution of teacher quality and implications for policy. Annu Rev Econ 4:131–157

Hanushek EA, Woessmann L (2012) Do better schools lead to more growth? Cognitive skills, economic outcomes, and causation. J Econ Growth 17:267–321

Heckman JJ, Kautz T (2013) Fostering and measuring skills: interventions that improve character and cognition. NBER wp19656. National Bureau of Economic Research

Hoxby CM (1999) The productivity of schools and other local public goods producers. J Public Econ 74:1–30

Hoxby CM (2000) The effects of class size on student achievement: New evidence from population variation. Q J Econ 115:1239–1285

INEE (2010) Evaluación general de diagnóstico 2009. Educación primaria. Cuarto curso. Informe de resultados. Ministerio de Educación, Madrid

Kane TJ, Rockoff JE, Staiger DO (2008) What does certification tell us about teacher effectiveness? Evidence from New York City. Econ Educ Rev 27:615–631

Kane TJ, Staiger DO (2008) Estimating teacher impacts on student achievement: an experimental evaluation. NBER wp14607. National Bureau of Economic Research

Koedel C, Mihaly K, Rockoff JE (2015) Value-added modeling: a review. Econ Educ Rev 47:180–195

Krieg JM (2005) Student gender and teacher gender: what is the impact on high stakes test scores. Curr Issues Educ 8:1–16

Levin HM (1974) Measuring efficiency in educational production. Public Finan Q 2:3–24

Peyrache A, Coelli T (2009) Testing procedures for detection of linear dependencies in efficiency models. Eur J Oper Res 198(2):647–654

Rivkin SG, Hanushek EA, Kain JF (2005) Teachers, schools, and academic achievement. Econometrica 73:417–458

Rockoff JE (2004) The impact of individual teachers on student achievement: evidence from panel data. Am Econ Rev 94:247–252

Rothstein J (2010) Teacher quality in educational production: Tracking, decay, and student achievement. Q J Econ 125:175–214

Sacerdote B (2001) Peer effects with random assignment: results for Dartmouth roommates. Q J Econ 116:681–704

Santín D, Sicilia G (2017) Dealing with endogeneity in data envelopment analysis applications. Expert Syst Appl 68:173–184

Schlotter M, Schwerdt G, Woessmann L (2011) Econometric methods for causal evaluation of education policies and practices: a non‐technical guide. Educ Econ 19:109–137

Slater H, Davies NM, Burgess S (2012) Do teachers matter? Measuring the variation in teacher effectiveness in England. Oxf Bull Econ Stat 74:629–645

Webbink D (2005) Causal effects in education. J Econ Surv 19:535–560

Acknowledgements

We thank two anonymous referees for helpful discussions and suggestions. The authors acknowledge research support from the Fundación Ramón Areces. Gabriela Sicilia acknowledges the financial support received from the Agencia Nacional de Investigación e Innovación.

Ethics declarations

Conflict of interest

The authors declare that they have no competing interests.

About this article

Cite this article

Santín, D., Sicilia, G. Using DEA for measuring teachers’ performance and the impact on students’ outcomes: evidence for Spain. J Prod Anal 49, 1–15 (2018). https://doi.org/10.1007/s11123-017-0517-3

DOI: https://doi.org/10.1007/s11123-017-0517-3