Abstract

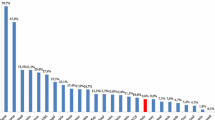

Policymakers and managers of higher education institutions need to know how well their universities are operating. This article aimed to show that data envelopment analysis (DEA) can be an excellent benchmarking instrument in higher education. First, by using several inputs and outputs at the institutional level, DEA can identify technically efficient institutions that may serve as benchmarks in the sector, which makes it a reliable tool for ranking universities. Second, a bootstrapped–truncated regression allows us to understand the factors affecting the technical efficiency of the institutions under evaluation. The case of Spanish public universities is taken as an example to verify the usefulness of the proposed methods. Our empirical strategy was based on a two-stage procedure to evaluate their internal efficiency in the provision of teaching and research. In the first stage, we estimated a technical efficiency score for each university. The average efficiency among Spanish universities was about 92%. In the second stage, we regressed the efficiency scores against a set of covariates to investigate their association with the level of university (in)efficiency. We found that universities with a higher percentage of grantees tend to be less inefficient, and that a higher percentage of academics with tenure enhances the productive efficiency of the Spanish higher education sector. Finally, we computed Spearman’s rank correlations between DEA efficiency scores and the classification of Spanish institutions in university rankings such as the SCImago and Shanghai rankings. The results revealed that the ranking positions given by DEA scores to Spanish universities matched their positions in recognized rankings.


Notes

The Shanghai Jiao Tong University Institute of Higher Education Academic Ranking of World Universities (ARWU), also known as the Shanghai Ranking.

Docampo’s findings (2011) support the use of the Shanghai ranking at the aggregate level to monitor the research performance of the different university systems around the world.

Position #85 in ARWU 2018.

DEA results are sensitive to the choice of inputs and outputs included in the efficiency analysis.

Technology, in the sense of the economic theory, refers to the known ways of transforming inputs into outputs.

Efficiency requires knowledge of the outputs of universities, inputs going into those outputs, and the production relationship between them (Johnes and Taylor 1990).

Closely related to the analysis of educational production is the study of education costs. However, the study of the production process from an economic point of view is outside the scope of this paper.

For example, it would be useless to produce many theoretical physicists efficiently if society does not need them.

Allocative efficiency, on the other hand, reflects the ability of the firm to choose an optimal set of resources given the input prices.

DEA is a nonparametric technique that, through linear programming, approximates the true but unknown production function without imposing any restriction on the sample distribution.
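As an illustration of the linear program behind this note, a standard textbook statement of the output-oriented envelopment problem under constant returns to scale is sketched below. The notation is an assumption made here and is not copied from the paper: there are n DMUs, x_{ij} is input i of DMU j, y_{rj} is output r of DMU j, the subscript o marks the DMU under evaluation, and the λ_j are intensity weights.

```latex
\begin{aligned}
\max_{\phi,\,\lambda}\quad & \phi \\
\text{s.t.}\quad
  & \sum_{j=1}^{n} \lambda_j x_{ij} \le x_{io}, && i = 1,\dots,m, \\
  & \sum_{j=1}^{n} \lambda_j y_{rj} \ge \phi\, y_{ro}, && r = 1,\dots,s, \\
  & \lambda_j \ge 0, && j = 1,\dots,n.
\end{aligned}
```

The program is solved once per DMU. Adding the convexity constraint \sum_j \lambda_j = 1 gives the variable-returns-to-scale version.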

See Thanassoulis et al. (2016) for a comprehensive review of applications of DEA in secondary and tertiary education.

Named after Charnes et al. (1978).

Charnes et al. (1978) gave the model in two orientations: input and output orientations.

Named after Banker et al. (1984).

In most countries, HEIs are mainly funded by public funds. It seems reasonable to assume that the objective of the universities is oriented toward making the best use of available resources.

[(ϕ − 1) × 100] is the percentage increase in outputs that the DMU under study could achieve with input quantities held constant.
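A small sketch of this conversion may help. The function name `potential_output_increase` and the scores used are hypothetical and are not taken from the paper's tables; the only assumption is that an output-oriented score ϕ is at least 1, with 1 meaning the unit is on the frontier.

```python
def potential_output_increase(phi: float) -> float:
    """Percentage by which outputs could expand with inputs held constant.

    `phi` is an output-oriented efficiency score, assumed to be >= 1,
    where 1 means the unit lies on the frontier.
    """
    if phi < 1:
        raise ValueError("an output-oriented score is expected to be >= 1")
    return (phi - 1) * 100


# Hypothetical scores, chosen only for illustration.
for phi in (1.0, 1.25, 2.0):
    pct = potential_output_increase(phi)
    print(f"phi = {phi:.2f} -> outputs could rise by {pct:.0f}%")
```

For instance, a score of 1.25 corresponds to a feasible 25% expansion of all outputs.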

It is likely that the production technology is different for the latter.

Before the university reform of 2010, university degrees in Spain comprised both short-cycle university studies (3 years, equivalent to undergraduate studies) and long-cycle university studies (4 or 5 years, equivalent to graduate studies).

Using the number of university graduates as an output has two problems. First, it ignores the temporal dimension: it is not known how long students have been at the university before obtaining their diploma (many students need more years than scheduled to complete their degree). Second, it does not take dropouts into account.

In this paper, we considered the contribution of a variable to the total efficiency as determined by its level (of input or output) times the weight. See Angulo-Meza and Lins (2002) for further details.

We had no information to measure the so-called third mission of universities.

We already discussed in footnote 21 the limitations of using the number of (under)graduates as output.

The Spanish university grading system is based on decimal grades from 0 to 10 (the highest score), passing with a 5.

Grants from the Spanish Ministry of Education, 2008/2009 academic year (AY).

Ratio of current operating expenses in 2008 (€) to the total number of students enrolled in the 2008/2009 AY.

In Spain, to get tenure, academics must show high scientific productivity.

When a CRS technology applies, the input-oriented DEA gives equivalent technical efficiency scores: they lie between 0 and 1 and are the reciprocals of the scores shown in the second column of Table 2. We therefore regard the output orientation assumed in our study as an advantage.
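The reciprocal relationship can be checked on a toy case. The sketch below assumes a single input and a single output, where the CRS frontier is simply the ray through the unit with the highest output/input ratio, so no LP solver is needed. The data and the function name `crs_scores` are hypothetical; the paper's own model uses several inputs and outputs.

```python
def crs_scores(inputs, outputs):
    """Return (phi, theta) per unit for one input and one output under CRS.

    phi   : output-oriented score, >= 1 (factor by which output could grow)
    theta : input-oriented score, in (0, 1] (share of input actually needed)
    """
    ratios = [y / x for x, y in zip(inputs, outputs)]
    best = max(ratios)                      # productivity of the frontier unit
    phi = [best / r for r in ratios]
    theta = [r / best for r in ratios]
    return phi, theta


# Hypothetical data: academic staff (input) and graduates (output).
staff = [100.0, 200.0, 400.0]
graduates = [50.0, 80.0, 100.0]

phi, theta = crs_scores(staff, graduates)
for p, t in zip(phi, theta):
    print(f"phi = {p:.3f}, theta = {t:.3f}, phi * theta = {p * t:.3f}")
```

Every product phi * theta equals 1 up to rounding, which is the sense in which the two orientations carry the same information under CRS. The equality does not hold in general under variable returns to scale.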

DEA produces a measure of efficiency relative to that achieved by the other producers or DMUs in the sample.

We have chosen the classification of 2012.

Pérez, F. (Dir.) (2013). Rankings ISSUE 2013. Indicadores sintéticos de las universidades españolas. Valencia, Spain: IVIE-Fundación BBVA. Available at: http://www.u-ranking.es/.

The ISSUE-V ranking of the Spanish public universities also takes into account the size of each university.

Adaptation made by D. Docampo of the 2012 Shanghai ranking and published by the IVIE in the source cited in footnote number 32.

There was a strong (positive) correlation mainly with the SCImago and Shanghai rankings.
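For readers unfamiliar with the statistic, Spearman's rank correlation is the Pearson correlation computed on ranks. The sketch below uses only the Python standard library. The five DEA scores and ranking positions are invented for illustration and are not the paper's data, and the helper names `average_ranks` and `spearman_rho` are hypothetical.

```python
def average_ranks(values):
    """Rank values from 1 (smallest) to n; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the block of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(a, b):
    """Spearman's rho: the Pearson correlation of the two rank vectors."""
    ra, rb = average_ranks(a), average_ranks(b)
    n = len(ra)
    mean_a, mean_b = sum(ra) / n, sum(rb) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(ra, rb))
    var_a = sum((x - mean_a) ** 2 for x in ra)
    var_b = sum((y - mean_b) ** 2 for y in rb)
    return cov / (var_a * var_b) ** 0.5


# Hypothetical output-oriented DEA scores (1 = efficient, larger = worse)
# and positions in an external ranking (1 = best) for five universities.
dea_scores = [1.00, 1.05, 1.12, 1.20, 1.31]
ranking_positions = [2, 1, 3, 5, 4]

print(f"Spearman's rho = {spearman_rho(dea_scores, ranking_positions):.2f}")
```

With these made-up numbers the coefficient is 0.80: universities that DEA places near the frontier also tend to sit near the top of the external ranking.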

This holds as long as the inputs and outputs of the educational production process are properly chosen. In our case, the hypothesis tests reported in Table 5 indicate that the choice of inputs and outputs was adequate.

With DEA, each DMU selects the input and output weights that are most favorable to its own efficiency score by solving a linear programming problem (Davoodi and Rezai 2012).
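The weight selection described in this note is usually written in the ratio form introduced by Charnes et al. (1978). The statement below is a textbook sketch, not a formula quoted from the paper. The notation is assumed here: u_r and v_i are the output and input weights, and the subscript o marks the DMU under evaluation.

```latex
\begin{aligned}
\max_{u,\,v}\quad & \frac{\sum_{r=1}^{s} u_r\, y_{ro}}{\sum_{i=1}^{m} v_i\, x_{io}} \\
\text{s.t.}\quad
  & \frac{\sum_{r=1}^{s} u_r\, y_{rj}}{\sum_{i=1}^{m} v_i\, x_{ij}} \le 1, && j = 1,\dots,n, \\
  & u_r \ge 0,\; v_i \ge 0.
\end{aligned}
```

Normalizing the denominator to \sum_i v_i x_{io} = 1 turns this fractional program into a linear one: maximize \sum_r u_r y_{ro} subject to \sum_r u_r y_{rj} - \sum_i v_i x_{ij} \le 0 for every j.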

References

Abbott, M., & Doucouliagos, C. (2003). The efficiency of Australian universities: A data envelopment analysis. Economics of Education Review, 22(1), 89–97.

Agasisti, T., & Dal Bianco, A. (2009). Reforming the university sector: Effects on teaching efficiency—Evidence from Italy. Higher Education, 57(4), 477–498.

Agasisti, T., & Gralka, S. (2019). The transient and persistent efficiency of Italian and German universities: A stochastic frontier analysis. Applied Economics, 51(46), 5012–5030.

Agasisti, T., & Pérez-Esparrells, C. (2010). Comparing efficiency in a cross-country perspective: The case of Italian and Spanish state universities. Higher Education, 59(1), 85–103.

Agasisti, T., & Wolszczak-Derlacz, J. (2014). Exploring universities’ efficiency differentials between countries in a multi-year perspective: An application of bootstrap DEA and Malmquist index to Italy and Poland, 2001–2011 (IRLE Working Paper No. 113-14). Retrieved from http://irle.berkeley.edu/workingpapers/113-14.pdf.

Agasisti, T., & Wolszczak-Derlacz, J. (2015). Exploring efficiency differentials between Italian and Polish universities, 2001–2011. Science and Public Policy, 43(1), 128–142.

Aigner, D., Lovell, C. A. K., & Schmidt, P. (1977). Formulation and estimation of stochastic frontier production function models. Journal of Econometrics, 6(1), 21–37.

Angulo-Meza, L., & Lins, M. P. E. (2002). Review of methods for increasing discrimination in data envelopment analysis. Annals of Operations Research, 116(1–4), 225–242.

Badunenko, O., & Mozharovskyi, P. (2016). Nonparametric frontier analysis using Stata. The Stata Journal, 16(3), 550–589.

Ball, R., & Halwachi, J. (1987). Performance indicators in higher education. Higher Education, 16(4), 393–405.

Banker, R. D., Charnes, A., & Cooper, W. W. (1984). Some models for estimating technical and scale inefficiencies in data envelopment analysis. Management Science, 30, 1078–1092.

Banker, R. D., & Natarajan, R. (2008). Evaluating contextual variables affecting productivity using data envelopment analysis. Operations Research, 56(1), 48–58.

Billaut, J. C., Bouyssou, D., & Vincke, P. (2009). Should you believe in the Shanghai ranking? An MCDM view. Scientometrics, 84(1), 237–263.

Bougnol, M. L., & Dulá, J. H. (2006). Validating DEA as a ranking tool: An application of DEA to assess performance in higher education. Annals of Operations Research, 145(1), 339–365.

Cave, M. (1997). The use of performance indicators in higher education: A critical analysis of developing practice. London: Kingsley.

Chang, Y. T., Lee, S., & Park, H. K. (2017). Efficiency analysis of major cruise lines. Tourism Management, 58, 78–88.

Charnes, A., Cooper, W. W., & Rhodes, E. (1978). Measuring the efficiency of decision making units. European Journal of Operational Research, 2, 429–444.

Coelli, T. J., Rao, D. S. P., O’Donnell, C. J., & Battese, G. E. (2005). An introduction to efficiency and productivity analysis (2nd ed.). Dordrecht: Springer.

Cohn, E., Rhine, S., & Santos, M. (1989). Institutions of higher education as multi-product firms: Economies of scale and scope. Review of Economics and Statistics, 71(2), 284–290.

Davoodi, A., & Rezai, H. Z. (2012). Common set of weights in data envelopment analysis: A linear programming problem. Central European Journal of Operations Research, 20(2), 355–365.

De Mesnard, L. (2012). On some flaws of university rankings: The example of the SCImago report. Journal of Socio-Economics, 41(5), 495–499.

Docampo, D. (2011). On using the Shanghai ranking to assess the research performance of university systems. Scientometrics, 86(1), 77–92.

Dynarski, S. M. (2003). Does aid matter? Measuring the effect of student aid on college attendance and completion. American Economic Review, 93(1), 279–288.

Emrouznejad, A., Parker, B. R., & Tavares, G. (2008). Evaluation of research in efficiency and productivity: A survey and analysis of the first 30 years of scholarly literature in DEA. Socio-Economic Planning Sciences, 42(3), 151–157.

Färe, R., Grosskopf, S., Norris, M., & Zhang, Z. (1994). Productivity growth, technical progress and efficiency change in industrialized countries. American Economic Review, 84(1), 66–83.

Färe, R., & Primont, D. (1995). Multi-output production and duality: Theory and applications. Boston: Kluwer.

Farrell, M. (1957). The measurement of productive efficiency. Journal of the Royal Statistical Society (Series A), 120, 253–281.

Flegg, A. T., Allen, D. O., Field, K., & Thurlow, T. W. (2004). Measuring the efficiency of British universities: A multi-period data envelopment analysis. Education Economics, 12(3), 231–249.

Florian, R. V. (2007). Irreproducibility of the results of the Shanghai academic ranking of world universities. Scientometrics, 72(1), 25–32.

Fried, H. O., Lovell, C. A. K., & Schmidt, S. S. (Eds.). (2008). The measurement of productive efficiency and productivity growth. Oxford: Oxford University Press.

Gillen, D., & Lall, A. (1997). Developing measures of airport productivity and performance: An application of data envelopment analysis. Transportation Research Part E: Logistics and Transportation Review, 33(4), 261–273.

Gralka, S., Wohlrabe, K., & Bornmann, L. (2019). How to measure research efficiency in higher education? Research grants vs. publication output. Journal of Higher Education Policy and Management, 41(3), 322–341.

Gulbrandsen, M., & Slipersæter, S. (2007). The third mission and the entrepreneurial university model. In A. Bonaccorsi & C. Daraio (Eds.), Universities and strategic knowledge creation. Specialization and performance in Europe (pp. 112–143). Cheltenham: Edward Elgar.

Halkos, G., Tzeremes, N. G., & Kourtzidis, S. A. (2012). Measuring public owned university departments’ efficiency: A bootstrapped DEA approach. Journal of Economics and Econometrics, 55(2), 1–24.

Hazelkorn, E. (2007). The impact of league tables and ranking systems on higher education decision making. Higher Education Management and Policy, 19(2), 1–24.

Hazelkorn, E. (2015a). Rankings and the reshaping of higher education: The battle for world-class excellence (2nd ed.). London: Palgrave Macmillan.

Hazelkorn, E. (2015b). Globalization, internationalization and rankings. International Higher Education, 53, 8–10.

Johnes, J. (2006). Data envelopment analysis and its application to the measurement of efficiency in higher education. Economics of Education Review, 25(3), 273–288.

Johnes, J. (2014). Efficiency and mergers in English higher education 1996/1997 to 2008/2009: Parametric and non-parametric estimation of the multi-input multi-output distance function. Manchester School, 82(4), 465–487.

Johnes, J. (2016). Performance indicators and rankings in higher education. In R. Barnett, P. Temple & P. Scott (Eds.), Valuing higher education: An appreciation of the work of Gareth Williams (Ch. 4). London: UCL IOE Press.

Johnes, J. (2018). University rankings: What do they really show? Scientometrics, 115(1), 585–606.

Johnes, J., & Taylor, J. (1990). Performance indicators in higher education. Buckingham: Society for Research into Higher Education and Open University Press.

Jöns, H., & Hoyler, M. (2013). Global geographies of higher education: The perspective of world university rankings. Geoforum, 46, 45–59.

Kao, C., & Hung, H. T. (2008). Efficiency analysis of university departments: An empirical study. Omega, 36(4), 653–664.

Krahn, H., & Bowlby, J. W. (1997). Good teaching and satisfied university graduates. Canadian Journal of Higher Education, 27(2/3), 157–179.

Lassibille, G., & Navarro-Gómez, M. L. (2011). How long does it take to earn a higher education degree in Spain? Research in Higher Education, 52(1), 63–80.

Liu, J. S., Lu, L. Y. Y., Lu, W. M., & Lin, B. J. Y. (2013). A survey of DEA applications. Omega, 41, 893–902.

McMillan, M. L., & Datta, D. (1998). The relative efficiencies of Canadian universities: A DEA perspective. Canadian Public Policy/Analyse de Politiques, 24(4), 485–511.

Meeusen, W., & van den Broeck, J. (1977). Efficiency estimation from Cobb-Douglas production functions with composed error. International Economic Review, 18(2), 435–444.

Ng, S. W. (2011). Can Hong Kong export its higher education services to the Asian markets? Educational Research for Policy and Practice, 10(2), 115–131.

Nunamaker, T. R. (1985). Using data envelopment analysis to measure the efficiency of non-profit organizations: A critical evaluation. Managerial and Decision Economics, 6, 50–58.

OECD. (2017). Benchmarking higher education system performance: Conceptual framework and data. Enhancing higher education system performance. Paris: OECD.

Palomares-Montero, D., & García-Aracil, A. (2011). What are the key indicators for evaluating the activities of universities? Research Evaluation, 20(5), 353–363.

Propper, C., & Wilson, D. (2003). The use and usefulness of performance measures in the public sector. Oxford Review of Economic Policy, 19(2), 250–267.

Salas-Velasco, M. (2019). Can educational laws improve efficiency in education production? Assessing students’ academic performance at Spanish public universities, 2008–2014. Higher Education, 77(6), 1103–1123.

Santelices, M. V., Catalán, X., Kruger, D., & Horn, C. (2016). Determinants of persistence and the role of financial aid: Lessons from Chile. Higher Education, 71(3), 323–342.

Seiford, L. M. (1997). A bibliography for data envelopment analysis (1978–1996). Annals of Operations Research, 73, 393–438.

Sheil, T. (2016). Managing expectations. An Australian perspective on the impact and challenges of adopting a university rankings narrative. In M. Yudkevich, P. G. Altbach, & L. E. Rumbley (Eds.), The global academic rankings game. Changing institutional policy, practice, and academic life (1st ed.). London: Routledge.

Shen, W. F., Zhang, D. Q., Liu, W. B., & Yang, G. L. (2016). Increasing discrimination of DEA evaluation by utilizing distances to anti-efficient frontiers. Computers & Operations Research, 75, 163–173.

Shin, J. C., & Toutkoushian, R. K. (2011). The past, present, and future of university rankings. In J. C. Shin, R. K. Toutkoushian, & U. Teichler (Eds.), University rankings: Theoretical basis, methodology and impacts on global higher education (pp. 1–18). Dordrecht: Springer.

Simar, L., & Wilson, P. (2007). Estimation and inference in two-stage, semi-parametric models of production processes. Journal of Econometrics, 136(1), 31–64.

Tauchmann, H. (2016). SIMARWILSON: Stata module to perform Simar & Wilson efficiency analysis. Statistical Software Components. Retrieved from https://ideas.repec.org/c/boc/bocode/s458156.html.

Taylor, P., & Braddock, R. (2007). International university ranking systems and the idea of university excellence. Journal of Higher Education Policy and Management, 29(3), 245–260.

Thanassoulis, E., De Witte, K., Johnes, J., Johnes, G., Karagiannis, G., & Portela, C. S. (2016). Applications of data envelopment analysis in education. In J. Zhu (Ed.), Data envelopment analysis: A handbook of empirical studies and applications (pp. 367–438). New York: Springer.

Titus, M. A., & Eagan, K. (2016). Examining production efficiency in higher education: The utility of stochastic frontier analysis. In M. B. Paulsen (Ed.), Higher education: Handbook of theory and research (Vol. 31, pp. 441–512). New York: Springer.

Tyagi, P., Yadav, S. P., & Singh, S. P. (2009). Relative performance of academic departments using DEA with sensitivity analysis. Evaluation and Program Planning, 32(2), 168–177.

Wolszczak-Derlacz, J., & Parteka, A. (2011). Efficiency of European public higher education institutions: A two-stage multicountry approach. Scientometrics, 89(3), 887–917.

Xavier, C. A., & Alsagoff, L. (2013). Constructing “world-class” as “global:” A case study of the National University of Singapore. Educational Research for Policy and Practice, 12(3), 225–238.

Yang, R. (2003). Globalization and higher education development: A critical analysis. International Review of Education, 49(3/4), 269–291.

Zhu, J. (Ed.). (2016). Data envelopment analysis: A handbook of empirical studies and applications. New York: Springer.



Cite this article

Salas-Velasco, M. The technical efficiency performance of the higher education systems based on data envelopment analysis with an illustration for the Spanish case. Educ Res Policy Prac 19, 159–180 (2020). https://doi.org/10.1007/s10671-019-09254-5


DOI: https://doi.org/10.1007/s10671-019-09254-5

Keywords

- Higher education policy

- Data envelopment analysis

- Benchmarking

- Bootstrapped–truncated regression

- University rankings