Collection

Information Geometry for Algorithms: Learning, Vision, Signal Processing

Submission status: Closed

In recent years, as the amount of data used across research fields has grown exponentially, probability models have come to play an important role in representing and describing the information contained in data. For example, deep neural networks have become a powerful tool for object recognition in computer vision, where they typically extract features from an input image and output a probability for each recognition label.

This task can be viewed as estimating the conditional distribution of the output label given the input image. More generally, a key issue in extracting knowledge from data is how to estimate or approximate a probability model.
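As a minimal illustration of this view, a classifier's final layer can turn raw scores (logits) into a probability distribution over labels with the softmax function; training with cross-entropy then amounts to minimizing the negative log-likelihood -log p(y | x). The logits below are hypothetical values, not output from any particular network, and the function names are illustrative.

```python
import math

def softmax(logits):
    """Map raw scores (logits) to a probability distribution over labels."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def negative_log_likelihood(logits, label):
    """Cross-entropy loss for one example: -log p(label | x) under the softmax model."""
    return -math.log(softmax(logits)[label])

# Hypothetical logits a network might produce for one image and three labels.
logits = [2.0, 0.5, -1.0]
probs = softmax(logits)
print([round(p, 3) for p in probs])
print(negative_log_likelihood(logits, 0))
```

Subtracting the maximum logit before exponentiating leaves the probabilities unchanged but avoids overflow for large scores.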

Information geometry provides a framework for describing, in geometric terms, the relationship between the true probability distribution underlying the data and the distribution described by a model, and for investigating the properties and dynamics of estimation processes, i.e., algorithms.
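One standard way to quantify the discrepancy between a true distribution p and a model distribution q is the Kullback–Leibler (KL) divergence, which plays a central role in information geometry. The sketch below, using hypothetical three-outcome distributions, computes it for discrete distributions and checks the identity KL(p || q) = H(p, q) - H(p), which is why minimizing KL over the model is equivalent to maximizing the expected log-likelihood. It assumes q is strictly positive wherever p is.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) = sum_i p_i * log(p_i / q_i) for discrete distributions."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def cross_entropy(p, q):
    """H(p, q) = -sum_i p_i * log(q_i)."""
    return -sum(pi * math.log(qi) for pi, qi in zip(p, q) if pi > 0)

def entropy(p):
    """H(p) = -sum_i p_i * log(p_i)."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

p_true = [0.5, 0.3, 0.2]   # hypothetical "ground truth" distribution
q_model = [0.4, 0.4, 0.2]  # hypothetical model distribution

d_pq = kl_divergence(p_true, q_model)
d_qp = kl_divergence(q_model, p_true)

# KL(p || q) = H(p, q) - H(p); H(p) does not depend on the model, so
# minimizing KL over q is the same as minimizing the cross-entropy.
assert abs(d_pq - (cross_entropy(p_true, q_model) - entropy(p_true))) < 1e-12
print(d_pq, d_qp)
```

The two printed values differ slightly: KL divergence is not symmetric, so it is not a distance in the metric sense.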

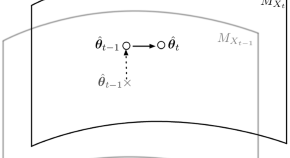

The framework is not limited to simple relationships between two points; it can also describe more complex relationships between sets of probability distributions. For example, the EM algorithm can be analyzed through the geometric relationship between a model manifold and a data manifold, the set of complete-data distributions consistent with the incomplete observations. In such problems, rich geometric structures such as the flatness and curvature of the model play important roles in the analysis.
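The EM algorithm alternates two steps. In the usual information-geometric reading, the E-step corresponds to a projection onto the data manifold and the M-step to a projection onto the model manifold, and neither step increases the KL divergence between them. The minimal sketch below implements only the standard EM updates for a hypothetical two-component, one-dimensional Gaussian mixture and records the log-likelihood, which EM guarantees never decreases. The data, initial values, and small variance floor are illustrative assumptions; the floor only guards against degenerate components and is not triggered for this data.

```python
import math

def normal_pdf(x, mean, var):
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def log_likelihood(data, weights, means, variances):
    """Observed-data log-likelihood of the mixture."""
    return sum(
        math.log(sum(w * normal_pdf(x, m, v) for w, m, v in zip(weights, means, variances)))
        for x in data
    )

def em_step(data, weights, means, variances):
    # E-step: responsibilities r[n][k] = p(z = k | x_n) under the current model.
    resp = []
    for x in data:
        joint = [w * normal_pdf(x, m, v) for w, m, v in zip(weights, means, variances)]
        total = sum(joint)
        resp.append([j / total for j in joint])
    # M-step: maximum-likelihood parameters given the expected complete data.
    n = len(data)
    new_w, new_m, new_v = [], [], []
    for k in range(len(weights)):
        nk = sum(r[k] for r in resp)
        mk = sum(r[k] * x for r, x in zip(resp, data)) / nk
        vk = sum(r[k] * (x - mk) ** 2 for r, x in zip(resp, data)) / nk
        new_w.append(nk / n)
        new_m.append(mk)
        new_v.append(max(vk, 1e-6))  # variance floor against collapse (assumption)
    return new_w, new_m, new_v

data = [-2.1, -1.9, -2.4, -1.6, 1.8, 2.2, 2.0, 2.5, 1.7]  # hypothetical observations
params = ([0.5, 0.5], [-0.5, 0.5], [1.0, 1.0])           # weights, means, variances
history = [log_likelihood(data, *params)]
for _ in range(20):
    params = em_step(data, *params)
    history.append(log_likelihood(data, *params))
print(params[1])  # estimated component means
```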

The aim of this issue is to collect original theoretical or experimental research articles on geometric methods in information science that focus on recent developments in understanding and improving algorithms in various fields.

Topics of interest include but are not limited to the following themes:

Applications of information geometry

- in machine learning

- in signal processing

- in computer vision

- in explainable artificial intelligence

- for the sciences (e.g., neuroscience, physics, chemistry)

More specific topics include, for example:

- optimization in non-linear, convex, and multi-objective problems

- expectation-maximization algorithms and their variants

- natural gradient methods and their extensions

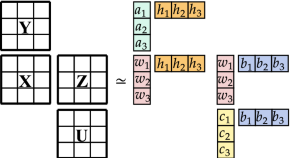

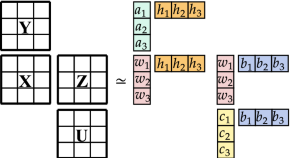

- independent component analysis

- topological data analysis in materials informatics
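Natural gradient methods, one of the topics listed above, precondition the ordinary gradient with the inverse of the Fisher information matrix. A minimal sketch, assuming a Bernoulli model parametrized by its logit theta (so p = sigmoid(theta) and the Fisher information is p(1 - p)), compares plain and natural gradient ascent on the average log-likelihood of hypothetical 0/1 data. The function `fit_bernoulli` and its parameters are illustrative names.

```python
import math

def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))

def fit_bernoulli(data, steps, lr, natural):
    """Maximize the average Bernoulli log-likelihood over the logit theta."""
    y_bar = sum(data) / len(data)
    theta = 0.0
    for _ in range(steps):
        p = sigmoid(theta)
        grad = y_bar - p              # derivative of the average log-likelihood
        if natural:
            fisher = p * (1.0 - p)    # Fisher information in the logit parametrization
            grad = grad / fisher      # natural gradient: F^{-1} times the gradient
        theta += lr * grad
    return sigmoid(theta)

data = [1, 1, 1, 0, 1, 0, 1, 1, 0, 1]  # hypothetical observations with mean 0.7
print(fit_bernoulli(data, steps=5, lr=1.0, natural=False))
print(fit_bernoulli(data, steps=5, lr=1.0, natural=True))
```

With a learning rate of 1, the natural-gradient run reaches the maximum-likelihood estimate 0.7 within a few steps, while the plain-gradient run is still approaching it. For this model the Fisher information equals the Hessian of the negative log-likelihood, so a unit natural-gradient step coincides with a Newton step; for general models the two differ.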

Editors

- Noboru Murata, Professor, Waseda University, Japan

- Klaus-Robert Müller, Professor, Technical University of Berlin, Germany

- Aapo Hyvärinen, Professor, University of Helsinki, Finland

- Shotaro Akaho, Researcher, National Institute of Advanced Industrial Science and Technology (AIST), Japan

Articles (4 in this collection)

Coarse geometric kernels for networks embedding

Authors

- Emil Saucan

- Vladislav Barkanass

- Jürgen Jost

- Content type: Research Paper

- Open Access

- Published: 26 January 2023

- Pages: 157 - 169

The Fisher–Rao loss for learning under label noise

Authors

- Henrique K. Miyamoto

- Fábio C. C. Meneghetti

- Sueli I. R. Costa

- Content type: Research Paper

- Published: 02 December 2022

- Pages: 107 - 126

Active learning by query by committee with robust divergences

Authors

- Hideitsu Hino

- Shinto Eguchi

- Content type: Research Paper

- Published: 21 November 2022

- Pages: 81 - 106