Abstract

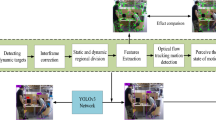

To improve the localization accuracy and robustness of visual SLAM in dynamic environments, this paper proposes a method based on target detection and direct geometric constraints. The algorithm first uses a YOLOv7 target detection network to separate the feature points of the current frame into static feature points and potentially dynamic feature points. It then identifies the truly dynamic targets from the geometric change in the edges connecting feature points across two adjacent frames. Using the motion information of dynamic targets in past frames, the potential dynamic targets of the current frame are examined again, and all feature points inside the detection boxes of the dynamic targets are removed. Comparative experiments on the TUM dataset show that the proposed algorithm reduces the absolute trajectory error by an average of 94.69% compared with ORB-SLAM2 and outperforms mainstream dynamic visual SLAM schemes such as DynaSLAM and DS-SLAM in localization accuracy.
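The abstract does not spell out the exact form of the geometric constraint, so the following is only an illustrative sketch of one way an edge-based check between two adjacent frames could look. It assumes matched feature points are given as 2D pixel coordinates with the same index in both frames, and it flags a point as dynamic when most of the edges connecting it to the other points change length by more than a relative tolerance. The function name `flag_dynamic_points` and the parameters `rel_tol` and `vote_ratio` are hypothetical and do not come from the paper.

```python
import math
from itertools import combinations


def flag_dynamic_points(prev_pts, curr_pts, rel_tol=0.2, vote_ratio=0.5):
    """Return the indices of matched points whose connecting edges change length.

    prev_pts, curr_pts: lists of (x, y) tuples; index i in both lists refers
    to the same matched feature. rel_tol and vote_ratio are illustrative
    thresholds (assumptions), not values taken from the paper.
    """
    n = len(prev_pts)
    if n != len(curr_pts):
        raise ValueError("prev_pts and curr_pts must have the same length")

    changed_edges = [0] * n  # per point: number of edges whose length changed
    for i, j in combinations(range(n), 2):
        d_prev = math.dist(prev_pts[i], prev_pts[j])
        d_curr = math.dist(curr_pts[i], curr_pts[j])
        longest = max(d_prev, d_curr)
        if longest == 0:
            continue  # coincident points carry no edge information
        if abs(d_curr - d_prev) / longest > rel_tol:
            changed_edges[i] += 1
            changed_edges[j] += 1

    if n < 2:
        return set()
    # A point is flagged when most of its n - 1 edges changed length.
    return {i for i in range(n) if changed_edges[i] / (n - 1) > vote_ratio}


# Four background points shift together (camera motion); the fifth moves alone.
prev = [(0, 0), (100, 0), (0, 100), (100, 100), (50, 50)]
curr = [(5, 0), (105, 0), (5, 100), (105, 100), (90, 50)]
print(flag_dynamic_points(prev, curr))  # -> {4}
```

Edge lengths in the image plane are only approximately preserved under camera motion, so a practical system would likely tune the tolerance or compare distances between 3D points recovered from depth; that choice is an assumption here as well.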

Acknowledgements

This research was supported by the Project of China West Normal University under Grant 17YC046.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Lin, J., Feng, Z., Tang, J. (2023). Visual SLAM Algorithm Based on Target Detection and Direct Geometric Constraints in Dynamic Environments. In: Yongtian, W., Lifang, W. (eds) Image and Graphics Technologies and Applications. IGTA 2023. Communications in Computer and Information Science, vol 1910. Springer, Singapore. https://doi.org/10.1007/978-981-99-7549-5_7

DOI: https://doi.org/10.1007/978-981-99-7549-5_7

Published:

Publisher Name: Springer, Singapore

Print ISBN: 978-981-99-7548-8

Online ISBN: 978-981-99-7549-5

eBook Packages: Computer Science, Computer Science (R0)