Abstract

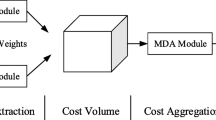

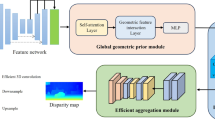

Many applications of depth estimation require an accurate disparity map to be generated quickly. To obtain accurate disparity maps, current mainstream algorithms mostly adopt deep, complex network architectures, which require a large amount of computation and are difficult to apply in real-time scenarios. Conversely, existing real-time networks have low disparity accuracy, which also limits their application scenarios. To address these shortcomings, this paper improves the AnyNet stereo matching algorithm and proposes a stereo matching algorithm that offers both high real-time performance and high accuracy. First, a multi-scale feature extraction module is designed to capture and fuse contextual feature information; then an attention module is constructed to reduce mismatches in ill-posed regions (repetitive, textureless, or weakly textured regions). During inference, the proposed algorithm predicts the disparity map in multiple stages, so computation can be traded off against accuracy according to actual needs and the corresponding stage selected adaptively. Evaluated on the KITTI 2015 dataset and compared with the reference algorithm AnyNet, the error rate of the final predicted disparity map is reduced by 2.43%, while the running speed is only 1.24% slower.
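The adaptive stage selection described in the abstract can be illustrated with a minimal sketch. It assumes that the cumulative cost of producing each stage's disparity map has been measured beforehand and that the caller supplies a time budget. The function name `select_stage`, the budget-based rule, and the timing values are hypothetical and are not taken from the paper.

```python
from typing import Sequence


def select_stage(cumulative_ms: Sequence[float], budget_ms: float) -> int:
    """Return the index of the deepest stage whose cumulative cost fits the budget.

    cumulative_ms[i] is the assumed, pre-measured time needed to produce the
    stage-i disparity map, including all earlier stages. Falls back to stage 0
    when even the coarsest prediction exceeds the budget.
    """
    if not cumulative_ms:
        raise ValueError("at least one stage is required")
    chosen = 0
    for i, cost in enumerate(cumulative_ms):
        if cost <= budget_ms:
            chosen = i
        else:
            break  # costs are cumulative, so later stages cannot fit either
    return chosen


# Placeholder timings for illustration only; not measurements from the paper.
stage_costs_ms = [10.0, 15.0, 25.0, 30.0]
```

With these placeholder costs, a tight budget selects an early, coarse stage and a generous budget selects the final, most refined stage, which is the trade-off between computation and accuracy that the multi-stage design exposes.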

Acknowledgment

This work was supported by the National Natural Science Foundation of China (61761034) and the Natural Science Foundation of Inner Mongolia (2020MS06022).

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Tang, H., Wang, S., Wang, Z. (2022). An Improved Stereo Matching Algorithm Based on AnyNet. In: Liang, Q., Wang, W., Liu, X., Na, Z., Zhang, B. (eds) Communications, Signal Processing, and Systems. CSPS 2021. Lecture Notes in Electrical Engineering, vol 878. Springer, Singapore. https://doi.org/10.1007/978-981-19-0390-8_32

DOI: https://doi.org/10.1007/978-981-19-0390-8_32

Publisher Name: Springer, Singapore

Print ISBN: 978-981-19-0389-2

Online ISBN: 978-981-19-0390-8

eBook Packages: Engineering, Engineering (R0)