Abstract

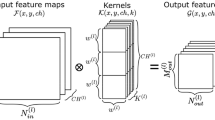

Convolutional neural networks (CNNs) are among the core algorithms for implementing artificial intelligence (AI) and are characterized by high parallelism and a large amount of computation. With the rapid development of AI applications, general-purpose processors such as CPUs and GPUs cannot meet the performance, power-consumption, and real-time requirements of CNNs. An ASIC, however, can fully exploit the parallelism of CNNs and improve resource utilization to meet these requirements. This paper designs and implements a new CNN accelerator based on parallel memory technology that supports multiple forms of parallelism. A super processing unit (SPU) with a kernel buffer and an output buffer is proposed to streamline computation and data fetching and thereby sustain the accelerator's performance. In addition, a two-dimensional buffer is proposed that provides conflict-free non-aligned block access with different steps, as well as aligned continuous access, to meet the data requirements of the various parallelisms. Synthesis results show that the design can run at 1 GHz with an area overhead of 4.51 mm² and an on-chip buffer cost of 192 KB. We evaluated the design on various CNN workloads; its efficiency exceeds 90% in most cases. Compared with state-of-the-art accelerator architectures, our design has a lower hardware cost at the same performance.
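To make the idea of conflict-free non-aligned block access concrete, the following is a minimal sketch of a textbook two-dimensional bank mapping. It is not the buffer design proposed in the paper. The function names (`bank_of`, `block_banks`, `is_conflict_free`), the mapping formula, and the reading of "steps" as row and column strides are all assumptions made for illustration. A p × q block is conflict-free when its p·q elements fall into p·q distinct memory banks, so that all of them can be read in one cycle.

```python
from itertools import product


def bank_of(row, col, p, q):
    """Map element (row, col) to one of p*q banks.

    Illustrative mapping only (an assumption, not the paper's scheme):
    the bank index is built from row mod p and col mod q.
    """
    return (row % p) * q + (col % q)


def block_banks(row0, col0, p, q, row_step=1, col_step=1):
    """Return the banks touched by a p x q block whose top-left element
    is (row0, col0) and whose elements are row_step / col_step apart."""
    return [
        bank_of(row0 + i * row_step, col0 + j * col_step, p, q)
        for i, j in product(range(p), range(q))
    ]


def is_conflict_free(row0, col0, p, q, row_step=1, col_step=1):
    """True if no two elements of the block map to the same bank."""
    banks = block_banks(row0, col0, p, q, row_step, col_step)
    return len(set(banks)) == len(banks)


if __name__ == "__main__":
    # Unit-step 4 x 4 block at a non-aligned position: no conflicts.
    print(is_conflict_free(3, 5, 4, 4))
    # Step 3 is coprime with 4, so the block is still conflict-free.
    print(is_conflict_free(1, 2, 4, 4, row_step=3, col_step=3))
    # Step 2 shares a factor with 4, so this simple mapping conflicts.
    print(is_conflict_free(0, 0, 4, 4, row_step=2, col_step=2))
```

Under this simple mapping, a block is conflict-free at any starting position as long as the row step is coprime with p and the column step is coprime with q. A step of 2 with p = q = 4 produces conflicts, which shows why supporting several steps at once generally calls for a more elaborate mapping than this one.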

Acknowledgment

This work was supported by the National Science and Technology Major Project "Intelligent computing unit for data center (cloud platform) and cluster computing" (No. 2018ZX01031101) and by the National Natural Science Foundation of China (No. 61602493, "Researches on efficient parallel memory techniques for wide vector DSPs").

Copyright information

© 2019 Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Tan, H., Liu, S., Chen, H., Sun, H., Li, H. (2019). A Convolutional Neural Networks Accelerator Based on Parallel Memory. In: Xu, W., Xiao, L., Li, J., Zhu, Z. (eds) Computer Engineering and Technology. NCCET 2019. Communications in Computer and Information Science, vol 1146. Springer, Singapore. https://doi.org/10.1007/978-981-15-1850-8_15

DOI: https://doi.org/10.1007/978-981-15-1850-8_15

Publisher Name: Springer, Singapore

Print ISBN: 978-981-15-1849-2

Online ISBN: 978-981-15-1850-8

eBook Packages: Computer Science, Computer Science (R0)