Abstract

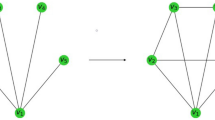

We propose a new method for detecting changes in Markov network structure between two sets of samples. Instead of naively fitting a separate Markov network model to each data set and then comparing the two, we learn the structural change directly by estimating the ratio of the two Markov network models. This density-ratio formulation lets us impose sparsity directly on the change in network structure, which makes the result much easier to interpret. It also greatly reduces the cost of computing the normalization term, a critical computational bottleneck of the naive approach. Experiments on gene expression data and Twitter data demonstrate the usefulness of our method.
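
To make the idea of learning the change directly more concrete, the following is a minimal sketch and not the authors' implementation. It assumes pairwise product features `x_u * x_v`, an ℓ1 penalty on the ratio parameters, a log-linear ratio model whose normalization term is approximated by an average over the second sample set, and plain proximal gradient descent. The function names, step size, and regularization strength are all hypothetical choices made for illustration; the abstract does not specify any of them.

```python
import numpy as np

def pairwise_features(X):
    """Map each sample x to the products x_u * x_v for all pairs u <= v (assumed feature choice)."""
    iu, iv = np.triu_indices(X.shape[1])
    return X[:, iu] * X[:, iv]

def fit_sparse_change(Xp, Xq, lam=0.05, step=0.05, n_iter=2000):
    """Estimate theta in the ratio model r(x) proportional to exp(theta . f(x)).

    The normalization term is approximated by a sample average over Xq,
    so neither network is fitted on its own. Nonzero entries of theta
    mark pairwise interactions that differ between the two sample sets.
    """
    Fp, Fq = pairwise_features(Xp), pairwise_features(Xq)
    mean_fp = Fp.mean(axis=0)
    theta = np.zeros(Fp.shape[1])
    for _ in range(n_iter):
        s = Fq @ theta
        w = np.exp(s - s.max())      # numerically stable softmax weights over Xq
        w /= w.sum()
        grad = -mean_fp + w @ Fq     # gradient of the smooth part of the objective
        theta = theta - step * grad
        # Soft-thresholding: proximal step for the l1 penalty, which induces sparsity.
        theta = np.sign(theta) * np.maximum(np.abs(theta) - step * lam, 0.0)
    return theta

# Toy data: two Gaussian Markov networks that differ only in the (0, 1) interaction.
rng = np.random.default_rng(0)
d, n = 4, 2000
prec_q = np.eye(d)
prec_p = np.eye(d)
prec_p[0, 1] = prec_p[1, 0] = 0.4
Xp = rng.multivariate_normal(np.zeros(d), np.linalg.inv(prec_p), size=n)
Xq = rng.multivariate_normal(np.zeros(d), np.linalg.inv(prec_q), size=n)

theta = fit_sparse_change(Xp, Xq)
iu, iv = np.triu_indices(d)
for u, v, t in zip(iu, iv, theta):
    if t != 0.0:
        print(f"changed pair ({u}, {v}): theta = {t:.3f}")
```

In this toy setup the entry of `theta` for the pair (0, 1) sits at index 1 of the upper-triangular ordering and should dominate the estimate, while most other entries are shrunk to zero by the penalty. Because the objective involves only sample averages of the features, the partition functions of the two individual networks never need to be computed.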

Copyright information

© 2013 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Liu, S., Quinn, J.A., Gutmann, M.U., Sugiyama, M. (2013). Direct Learning of Sparse Changes in Markov Networks by Density Ratio Estimation. In: Blockeel, H., Kersting, K., Nijssen, S., Železný, F. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2013. Lecture Notes in Computer Science, vol 8189. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-40991-2_38

DOI: https://doi.org/10.1007/978-3-642-40991-2_38

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-40990-5

Online ISBN: 978-3-642-40991-2

eBook Packages: Computer Science (R0)