Abstract

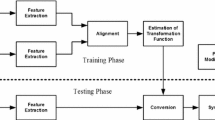

This paper proposes an emotional speech conversion method based on pitch-synchronous harmonic and non-harmonic (PS-HNH) modeling of speech. The method converts neutral speech into expressive speech by controlling the emotional parameters of each syllable of the neutral speech. First, syllables are labeled by Viterbi decoding using acoustic hidden Markov models of the neutral corpus. Next, PS-HNH analysis is performed on the neutral speech, and the emotional parameters are modified syllable by syllable using a linear modification model of the target emotion. Finally, the modified parameters are synthesized into emotional speech by PS-HNH synthesis. The method is evaluated with a subjective AB preference test on four target emotions (fear, sadness, anger, and happiness). The preference test shows that the proposed method gives better speech quality than a conventional method based on speech transformation and representation using adaptive interpolation of weighted spectrum (STRAIGHT).
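The syllable-wise linear modification step can be illustrated with a minimal sketch. The abstract does not specify which emotional parameters are modified or the exact form of the linear model, so everything here is an assumption made for illustration: the parameter set (mean F0, duration, energy), the per-parameter form `scale * value + offset`, the names `Syllable`, `convert_syllable`, and `convert_utterance`, and all numeric values.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Syllable:
    """One labeled syllable with hypothetical prosodic parameters."""
    label: str
    f0_hz: float        # mean fundamental frequency (assumed parameter)
    duration_s: float   # syllable duration (assumed parameter)
    energy_db: float    # mean energy (assumed parameter)


# Hypothetical linear model for one target emotion:
# parameter name -> (scale, offset), applied as scale * value + offset.
LinearModel = Dict[str, Tuple[float, float]]


def convert_syllable(syl: Syllable, model: LinearModel) -> Syllable:
    """Apply the linear model to each parameter of a single syllable."""
    def apply(name: str, value: float) -> float:
        # Parameters missing from the model are left unchanged.
        scale, offset = model.get(name, (1.0, 0.0))
        return scale * value + offset

    return Syllable(
        label=syl.label,
        f0_hz=apply("f0_hz", syl.f0_hz),
        duration_s=apply("duration_s", syl.duration_s),
        energy_db=apply("energy_db", syl.energy_db),
    )


def convert_utterance(syllables: List[Syllable], model: LinearModel) -> List[Syllable]:
    """Modify an utterance syllable by syllable."""
    return [convert_syllable(s, model) for s in syllables]


# Made-up values for illustration only; not taken from the paper.
neutral = [Syllable("ba", 120.0, 0.20, 60.0), Syllable("na", 110.0, 0.30, 58.0)]
example_model: LinearModel = {"f0_hz": (1.5, 10.0), "duration_s": (0.5, 0.0)}
converted = convert_utterance(neutral, example_model)
```

In a full system, the syllable boundaries would come from the labeling stage and the modified parameters would be passed to the synthesis stage; this sketch covers only the parameter mapping in between.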

Copyright information

© 2013 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Jeon, K.M., Park, N.I. (2013). Emotional Speech Conversion Using Pitch-Synchronous Harmonic and Non-harmonic Modeling of Speech. In: Stephanidis, C. (eds) HCI International 2013 - Posters’ Extended Abstracts. HCI 2013. Communications in Computer and Information Science, vol 373. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-39473-7_68

DOI: https://doi.org/10.1007/978-3-642-39473-7_68

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-39472-0

Online ISBN: 978-3-642-39473-7

eBook Packages: Computer Science (R0)