Abstract

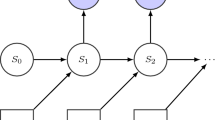

Inverse reinforcement learning (IRL) is the task of learning the reward function of a Markov decision process (MDP) given the transition function and a set of observed demonstrations in the form of state-action pairs. Current IRL algorithms attempt to find a single reward function that explains the entire observation set. In practice, this leads to a computationally costly search over a large (typically infinite) space of complex reward functions. This paper proposes that if the observations can be partitioned into smaller groups, a class of much simpler reward functions can explain each group. The proposed method uses a Bayesian nonparametric mixture model to automatically partition the data and find a set of simple reward functions, one corresponding to each partition. The simple rewards are interpreted intuitively as subgoals, which can be used to predict actions or to analyze which states are important to the demonstrator. Experimental results on simple examples show performance comparable to other IRL algorithms in nominal situations. Moreover, the proposed method handles cyclic tasks (where the agent begins and ends in the same state) that would break existing algorithms without modification. Finally, the new algorithm has a fundamentally different structure from previous methods, making it more computationally efficient in a real-world learning scenario where the state space is large but the demonstration set is small.
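To make the partitioning idea concrete, the following is a minimal sketch, not the authors' implementation. The abstract does not name the prior over partitions; a Chinese restaurant process (CRP) is assumed here because it is a common choice for Bayesian nonparametric mixture models. The indicator form of the subgoal reward, the function names `sample_crp_partition` and `subgoal_reward`, and the parameters `alpha` and `c` are all hypothetical and chosen for illustration only.

```python
import random


def sample_crp_partition(n_items, alpha, rng):
    """Draw partition labels for n_items from a CRP prior (assumed prior).

    alpha > 0 is the concentration parameter: larger values favor
    opening new partitions.
    """
    labels = []
    counts = []  # counts[k] = number of items already assigned to partition k
    for _ in range(n_items):
        # An existing partition k is chosen with weight counts[k];
        # a brand-new partition is chosen with weight alpha.
        weights = counts + [alpha]
        k = rng.choices(range(len(weights)), weights=weights)[0]
        if k == len(counts):
            counts.append(1)  # open a new partition
        else:
            counts[k] += 1
        labels.append(k)
    return labels


def subgoal_reward(state, subgoal, c=1.0):
    """Hypothetical simple reward: c at the subgoal state, 0 elsewhere."""
    return c if state == subgoal else 0.0


if __name__ == "__main__":
    # Assign 10 observed state-action pairs to partitions under the prior.
    labels = sample_crp_partition(10, alpha=1.0, rng=random.Random(0))
    print(labels)
```

In a full inference procedure, each partition label would be resampled conditioned on how well the partition's subgoal reward explains the corresponding observation; this sketch only draws from the prior and omits that likelihood term.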

Copyright information

© 2012 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Michini, B., How, J.P. (2012). Bayesian Nonparametric Inverse Reinforcement Learning. In: Flach, P.A., De Bie, T., Cristianini, N. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2012. Lecture Notes in Computer Science, vol 7524. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-33486-3_10

DOI: https://doi.org/10.1007/978-3-642-33486-3_10

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-33485-6

Online ISBN: 978-3-642-33486-3

eBook Packages: Computer Science, Computer Science (R0)