Abstract

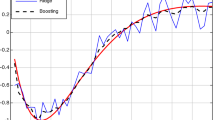

It is widely acknowledged that mainstream boosting algorithms such as AdaBoost perform poorly at estimating class-conditional probabilities. In this paper, we analyze, in light of this problem, a recent algorithm, UNN, which leverages nearest neighbors while minimizing a convex loss. Our contribution is threefold. First, we show that there exists a subclass of surrogate losses, elsewhere called balanced, whose minimization yields simple and statistically efficient estimators of Bayes posteriors. Second, we give explicit convergence rates of UNN towards these estimators, for any such surrogate loss, under a Weak Learning Assumption which parallels that of classical boosting results. Third, we provide experiments and comparisons on synthetic and real datasets, including the challenging SUN computer vision database. The results show that boosting nearest neighbors can provide highly accurate estimators, sometimes more than a hundred times more accurate than those of other contenders such as support vector machines.
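The abstract describes UNN only at a high level. As a rough illustration of the general idea, the minimal sketch below assumes that each training example j acts as a weak classifier voting y_j on every point that has j among its k nearest neighbors, and that one leveraging coefficient per example is fit by AdaBoost-style coordinate descent on the exponential loss. The resulting score h(x) is mapped to an estimate of P(y = +1 | x) by inverting the exponential-loss link h = ½·ln(p / (1 − p)). The exponential loss is chosen here only because its link has a simple closed form; the balanced surrogates and estimators studied in the paper are not reproduced. The function names (`nearest`, `fit_leveraged_knn`, `score`, `posterior`) and the smoothing constant are hypothetical choices for this sketch, not the authors' implementation.

```python
import math
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, ...]


def nearest(points: Sequence[Point], x: Point, k: int,
            exclude: Optional[int] = None) -> List[int]:
    """Indices of the k training points closest to x (Euclidean), optionally skipping one index."""
    ranked = sorted(
        (math.dist(x, p), j) for j, p in enumerate(points) if j != exclude
    )
    return [j for _, j in ranked[:k]]


def fit_leveraged_knn(points: Sequence[Point], labels: Sequence[int],
                      k: int, rounds: int = 5) -> List[float]:
    """Learn one leveraging coefficient alpha_j per training example (labels in {-1, +1}).

    Assumption: exponential loss minimized by coordinate descent, with a
    smoothing constant eps = 1/n added to both weight sums (a heuristic).
    """
    n = len(points)
    # reciprocal[j] lists the examples i that have j among their k nearest
    # neighbors; these are the only examples on which example j casts a vote.
    reciprocal: List[List[int]] = [[] for _ in range(n)]
    for i in range(n):
        for j in nearest(points, points[i], k, exclude=i):
            reciprocal[j].append(i)

    alpha = [0.0] * n
    weights = [1.0 / n] * n  # normalized exp(-y_i * h(x_i)); h = 0 initially
    eps = 1.0 / n
    for _ in range(rounds):
        for j in range(n):
            if not reciprocal[j]:
                continue
            # Weight of the examples that j votes on correctly / incorrectly.
            w_plus = sum(weights[i] for i in reciprocal[j] if labels[i] == labels[j])
            w_minus = sum(weights[i] for i in reciprocal[j] if labels[i] != labels[j])
            delta = 0.5 * math.log((w_plus + eps) / (w_minus + eps))
            alpha[j] += delta
            # Exponential-loss reweighting of the examples affected by this update.
            for i in reciprocal[j]:
                weights[i] *= math.exp(-delta * labels[i] * labels[j])
            total = sum(weights)
            weights = [w / total for w in weights]
    return alpha


def score(points: Sequence[Point], labels: Sequence[int],
          alpha: Sequence[float], x: Point, k: int) -> float:
    """Leveraged k-NN score: h(x) = sum of alpha_j * y_j over the k nearest neighbors j of x."""
    return sum(alpha[j] * labels[j] for j in nearest(points, x, k))


def posterior(h: float) -> float:
    """Estimate P(y = +1 | x) by inverting h = 0.5 * ln(p / (1 - p)), in an overflow-safe form."""
    if h >= 0:
        return 1.0 / (1.0 + math.exp(-2.0 * h))
    e = math.exp(2.0 * h)
    return e / (1.0 + e)
```

For example, on two well-separated 1-D clusters, `posterior(score(pts, ys, alpha, (0.3,), k=3))` falls below 0.5 for a query inside the negative cluster, and the same call with `(2.3,)` returns a value above 0.5 for a query inside the positive cluster, where `pts`, `ys` and `alpha = fit_leveraged_knn(pts, ys, k=3)` are the training points, their ±1 labels and the fitted coefficients.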

Copyright information

© 2012 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

D’Ambrosio, R., Nock, R., Ali, W.B.H., Nielsen, F., Barlaud, M. (2012). Boosting Nearest Neighbors for the Efficient Estimation of Posteriors. In: Flach, P.A., De Bie, T., Cristianini, N. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2012. Lecture Notes in Computer Science, vol 7523. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-33460-3_26

DOI: https://doi.org/10.1007/978-3-642-33460-3_26

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-33459-7

Online ISBN: 978-3-642-33460-3

eBook Packages: Computer Science, Computer Science (R0)