Abstract

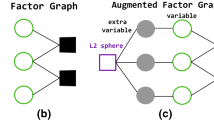

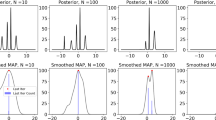

Maximum a posteriori (MAP) estimation is an important task in many applications of probabilistic graphical models. Although finding an exact solution is generally intractable, linear programming (LP) relaxations often yield good approximate solutions. In this paper we present an algorithm for solving the LP relaxation. To overcome the lack of strict convexity, we apply an augmented Lagrangian method to the dual LP. The resulting algorithm, based on the alternating direction method of multipliers (ADMM), is guaranteed to converge to the global optimum of the LP relaxation objective. Our experimental results show that it is competitive with other state-of-the-art algorithms for approximate MAP estimation.
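For readers unfamiliar with ADMM, the following is a minimal sketch of the generic scaled-form iteration on a toy problem. It is not the algorithm of this paper and does not touch the MAP dual LP. The toy objective, the function names `soft_threshold` and `admm_toy`, and the parameters `lam`, `rho`, and `iters` are all assumptions chosen for illustration. The sketch only shows the three-step pattern that an augmented Lagrangian splitting method follows: minimize over one block of variables, minimize over the other, then update the multipliers.

```python
def soft_threshold(v, t):
    """Shrink v toward zero by t (the proximal operator of t * |v|)."""
    if v > t:
        return v - t
    if v < -t:
        return v + t
    return 0.0


def admm_toy(a, lam=1.0, rho=1.0, iters=200):
    """Generic scaled-form ADMM (illustrative toy, not the paper's method) for

        minimize 0.5 * ||x - a||^2 + lam * ||z||_1   subject to   x = z.

    rho is the penalty weight of the augmented Lagrangian.
    """
    n = len(a)
    x = [0.0] * n
    z = [0.0] * n
    u = [0.0] * n  # scaled dual variable (multipliers divided by rho)
    for _ in range(iters):
        # x-step: minimize the quadratic term plus the penalty (closed form)
        x = [(a[i] + rho * (z[i] - u[i])) / (1.0 + rho) for i in range(n)]
        # z-step: minimize the l1 term plus the penalty (soft-thresholding)
        z = [soft_threshold(x[i] + u[i], lam / rho) for i in range(n)]
        # dual step: accumulate the constraint violation x - z
        u = [u[i] + x[i] - z[i] for i in range(n)]
    return z
```

For this toy problem the exact minimizer is known in closed form (soft-thresholding of `a` by `lam`), which makes it easy to check that the iteration converges to it.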




© 2011 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Meshi, O., Globerson, A. (2011). An Alternating Direction Method for Dual MAP LP Relaxation. In: Gunopulos, D., Hofmann, T., Malerba, D., Vazirgiannis, M. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2011. Lecture Notes in Computer Science, vol 6912. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-23783-6_30


DOI: https://doi.org/10.1007/978-3-642-23783-6_30

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-23782-9

Online ISBN: 978-3-642-23783-6

eBook Packages: Computer Science (R0)