Abstract

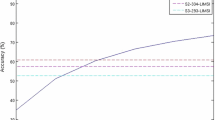

In this paper we focus on auditory analysis as the sensory stimulus and on vocalization synthesis as the output signal. Our scenario has one robot interacting with one human through the vocalization channel. Note that vocalization goes far beyond speech: speech analysis tells us what was said, whereas vocalization analysis tells us how it was said. A social robot should be able to perform actions in different manners according to its emotional state. We therefore propose a novel Bayesian approach to determine the emotional state the robot should assume according to how the interlocutor is talking to it. Results show that the classification behaves as expected, converging to the correct decision after two iterations.
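As an illustration only, the kind of iterative Bayesian classification described above can be sketched as a recursive posterior update over a small set of emotional states. The state names, the vocal cues, and every likelihood value below are hypothetical assumptions chosen for the example; they are not taken from the paper and do not represent the authors' model.

```python
# Minimal sketch of a recursive Bayesian update over emotional states.
# All states, cues, and probabilities are hypothetical, not from the paper.
STATES = ["happy", "sad", "angry"]

# Assumed likelihoods P(cue | state) for a coarse vocal cue.
LIKELIHOOD = {
    "high_pitch": {"happy": 0.6, "sad": 0.1, "angry": 0.5},
    "low_pitch": {"happy": 0.4, "sad": 0.9, "angry": 0.5},
}


def bayes_update(prior, cue):
    """Return the posterior P(state | cue) given a prior over states."""
    unnormalized = {s: LIKELIHOOD[cue][s] * prior[s] for s in STATES}
    total = sum(unnormalized.values())
    return {s: p / total for s, p in unnormalized.items()}


def classify(cues):
    """Start from a uniform prior and fold in one observed cue per iteration."""
    belief = {s: 1.0 / len(STATES) for s in STATES}
    for cue in cues:
        belief = bayes_update(belief, cue)
    best = max(belief, key=belief.get)
    return best, belief


if __name__ == "__main__":
    state, belief = classify(["low_pitch", "low_pitch"])
    print(state, belief)
```

Each observation sharpens the belief: with these assumed likelihoods, repeating the same cue twice already concentrates most of the probability mass on a single state, which is the sort of quick convergence the abstract reports.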

Copyright information

© 2011 IFIP International Federation for Information Processing

About this paper

Cite this paper

Prado, J.A., Simplício, C., Dias, J. (2011). Robot Emotional State through Bayesian Visuo-Auditory Perception. In: Camarinha-Matos, L.M. (eds) Technological Innovation for Sustainability. DoCEIS 2011. IFIP Advances in Information and Communication Technology, vol 349. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-19170-1_18

DOI: https://doi.org/10.1007/978-3-642-19170-1_18

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-19169-5

Online ISBN: 978-3-642-19170-1

eBook Packages: Computer Science, Computer Science (R0)