Abstract

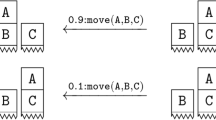

Probabilistic relational models are an efficient way to learn and represent the dynamics of realistic environments consisting of many objects. Autonomous intelligent agents that ground this representation for all objects must plan in exponentially large state spaces and with large sets of stochastic actions. A key insight for computational efficiency is that successful planning typically involves only a small subset of relevant objects. In this paper, we introduce a probabilistic model to represent planning with subsets of objects and provide a definition of object relevance. Our definition is sufficient to prove consistency between repeated planning in partially grounded models restricted to relevant objects and planning in the fully grounded model. We propose an algorithm that exploits object relevance to plan efficiently in complex domains. Empirical results in a simulated 3D blocksworld with an articulated manipulator and realistic physics demonstrate the effectiveness of our approach.
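To make the scale argument concrete, the following Python sketch counts how many ground atoms, and therefore how many binary state assignments, arise when a relational signature is grounded over all objects versus over a small subset. It assumes a hypothetical blocksworld-style signature (`on/2`, `clear/1`, `inhand/1`), binary atoms, and naive grounding; the names `PREDICATES`, `ground_atoms`, and `num_states` are illustrative. It only shows the combinatorics and is not the relevance-grounding algorithm of the paper.

```python
from itertools import product

# Hypothetical blocksworld-style predicate signature: name -> arity.
# These predicates are illustrative assumptions, not the paper's model.
PREDICATES = {"on": 2, "clear": 1, "inhand": 1}


def ground_atoms(predicates, objects):
    """Naively ground every predicate over every tuple of objects.

    A predicate of arity k over n objects yields n**k ground atoms
    (reflexive tuples such as on(o0, o0) are included for simplicity).
    """
    return [
        (name, args)
        for name, arity in predicates.items()
        for args in product(objects, repeat=arity)
    ]


def num_states(predicates, objects):
    """Count truth assignments over the ground atoms (each atom is true or false)."""
    return 2 ** len(ground_atoms(predicates, objects))


if __name__ == "__main__":
    all_objects = [f"o{i}" for i in range(10)]
    relevant = all_objects[:3]  # hypothetical subset assumed to be relevant
    for label, objs in [("full", all_objects), ("relevant", relevant)]:
        atoms = len(ground_atoms(PREDICATES, objs))
        print(f"{label}: {len(objs)} objects, {atoms} atoms, 2**{atoms} states")
```

With 10 objects the three predicates ground to 100 + 10 + 10 = 120 atoms, i.e. 2^120 candidate states; restricting grounding to 3 objects leaves 9 + 3 + 3 = 15 atoms and 2^15 = 32768 states.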




Copyright information

© 2009 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Lang, T., Toussaint, M. (2009). Relevance Grounding for Planning in Relational Domains. In: Buntine, W., Grobelnik, M., Mladenić, D., Shawe-Taylor, J. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2009. Lecture Notes in Computer Science, vol 5781. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-04180-8_65


DOI: https://doi.org/10.1007/978-3-642-04180-8_65

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-04179-2

Online ISBN: 978-3-642-04180-8

eBook Packages: Computer Science, Computer Science (R0)