Abstract

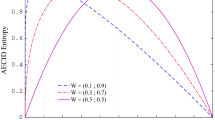

Learning from unbalanced datasets presents a difficult problem in which traditional learning algorithms may perform poorly. The objective functions used to learn classifiers typically favor the larger, less important classes in such problems. This paper compares the performance of several popular decision tree splitting criteria (information gain, Gini measure, and DKM) and identifies a new skew-insensitive measure in Hellinger distance. We outline the strengths of Hellinger distance under class imbalance, propose its application in forming decision trees, and perform a comprehensive comparative analysis of the decision tree construction methods. In addition, we consider the performance of each tree within a powerful sampling wrapper framework to capture the interaction between the splitting metric and sampling. We evaluate over a wide range of datasets and determine which methods operate best under class imbalance.
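
The abstract names the splitting criteria but gives no formulas, so the following is only a minimal sketch under stated assumptions: it uses the commonly cited two-class forms of entropy, Gini, and DKM impurity, and it scores a split with Hellinger distance by comparing how the positive and negative classes distribute over the branches. The function names (`hellinger_split`, `impurity_gain`) and the example counts are hypothetical and are not taken from the paper.

```python
import math


def hellinger_split(pos_counts, neg_counts):
    """Hellinger distance between the per-branch distributions of the two classes.

    pos_counts[j] / neg_counts[j] are the numbers of positive / negative
    examples sent to branch j. Only within-class fractions are used, so the
    class prior (the skew) does not enter the value.
    """
    n_pos, n_neg = sum(pos_counts), sum(neg_counts)
    if n_pos == 0 or n_neg == 0:
        return 0.0  # assumption: a node with a single class has nothing to separate
    total = 0.0
    for p, n in zip(pos_counts, neg_counts):
        total += (math.sqrt(p / n_pos) - math.sqrt(n / n_neg)) ** 2
    return math.sqrt(total)


def entropy(p):
    """Two-class entropy in bits for positive-class fraction p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


def gini(p):
    """Two-class Gini impurity."""
    return 2 * p * (1 - p)


def dkm(p):
    """Two-class DKM impurity."""
    return 2 * math.sqrt(p * (1 - p))


def impurity_gain(pos_counts, neg_counts, impurity):
    """Parent impurity minus the size-weighted impurity of the branches."""
    n_pos, n_neg = sum(pos_counts), sum(neg_counts)
    n = n_pos + n_neg
    children = 0.0
    for p, q in zip(pos_counts, neg_counts):
        size = p + q
        if size:
            children += (size / n) * impurity(p / size)
    return impurity(n_pos / n) - children


if __name__ == "__main__":
    # Same split shape at two class ratios: 1:1 and 1:100 (hypothetical counts).
    balanced = ([40, 10], [10, 40])
    skewed = ([40, 10], [1000, 4000])
    for name, (pos, neg) in (("balanced", balanced), ("skewed", skewed)):
        print(name,
              "hellinger=%.4f" % hellinger_split(pos, neg),
              "info_gain=%.4f" % impurity_gain(pos, neg, entropy),
              "gini_gain=%.4f" % impurity_gain(pos, neg, gini),
              "dkm_gain=%.4f" % impurity_gain(pos, neg, dkm))
```

In this sketch, scaling the negative counts by a constant leaves the Hellinger score unchanged while the impurity-based gains shift, which is one way to read the "skew-insensitive" claim. It illustrates only the scoring step for one candidate split, not a full tree learner or the sampling wrapper.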

Copyright information

© 2008 Springer-Verlag Berlin Heidelberg

Cite this paper

Cieslak, D.A., Chawla, N.V. (2008). Learning Decision Trees for Unbalanced Data. In: Daelemans, W., Goethals, B., Morik, K. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2008. Lecture Notes in Computer Science, vol 5211. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-87479-9_34

DOI: https://doi.org/10.1007/978-3-540-87479-9_34

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-87478-2

Online ISBN: 978-3-540-87479-9

eBook Packages: Computer Science, Computer Science (R0)