Abstract

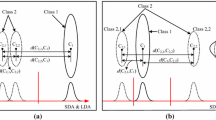

Subclass Discriminant Analysis (SDA) [10] is a dimensionality reduction method that has proven successful for many types of class distributions. Because SDA does not assume that the class-conditional distributions are unimodal, it can handle nonlinearly separable problems as linear ones. The drawback of this strategy, however, is that estimating the number of subclasses needed to represent the distribution of each class, i.e., finding the best partition, requires evaluating all possible solutions, which incurs a high computational cost. In this paper, we propose a method that reduces the computational burden of SDA-based classification simply by reducing the number of classes to be examined: only a few classes of the training set are chosen for partitioning prior to the execution of SDA. The intra-set distance is employed as the criterion for selecting the classes to be partitioned, and k-means clustering is performed to divide them. Our experimental results for an artificial data set and two face databases demonstrate that the processing CPU-time of the optimized SDA can be reduced dramatically without sacrificing classification accuracy or increasing computational complexity.
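The abstract describes the pre-clustering step only at a high level, so the following is a minimal NumPy sketch of one way it could be realized, not the author's implementation. It rests on several assumptions: the intra-set distance of a class is taken as the mean squared Euclidean distance over all pairs of its samples; the classes to split are simply the `n_selected` classes with the largest intra-set distance (a hypothetical rule; the paper's actual selection threshold may differ); each selected class is split into `k = 2` subclasses by k-means; and the SDA step follows our reading of [10], maximizing the between-subclass scatter `S_B` (summed only over subclass pairs from different classes) relative to the sample covariance `S_X`. The function names `intra_set_distance`, `kmeans`, `precluster` and `sda_projection`, and the toy data, are hypothetical.

```python
import numpy as np


def intra_set_distance(X):
    # Mean squared Euclidean distance over all ordered pairs of rows of X,
    # via (1/N^2) sum_ij ||x_i - x_j||^2 = (2/N) sum_i ||x_i - mean||^2.
    centered = X - X.mean(axis=0)
    return 2.0 * np.sum(centered ** 2) / len(X)


def kmeans(X, k, n_iter=100):
    # Lloyd's k-means with deterministic farthest-first initialisation.
    centers = [X[0]]
    for _ in range(1, k):
        d = np.min([((X - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(X[d.argmax()])
    centers = np.array(centers, dtype=float)
    for _ in range(n_iter):
        d = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d.argmin(axis=1)
        new_centers = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels


def precluster(X, y, n_selected, k=2):
    # Assumption (hypothetical rule): split only the n_selected classes with
    # the largest intra-set distance; every other class keeps one subclass.
    spread = {int(c): intra_set_distance(X[y == c]) for c in np.unique(y)}
    selected = set(sorted(spread, key=spread.get, reverse=True)[:n_selected])
    sub = np.zeros(len(y), dtype=int)
    for c in selected:
        idx = np.flatnonzero(y == c)
        sub[idx] = kmeans(X[idx], k)
    return sub, selected


def sda_projection(X, y, sub, n_components, reg=1e-6):
    # Leading generalised eigenvectors of S_B v = lam * S_X v (our reading of [10]).
    n, d = X.shape
    groups = []  # (class label, subclass mean, subclass prior n_ij / n)
    for c in np.unique(y):
        for h in np.unique(sub[y == c]):
            mask = (y == c) & (sub == h)
            groups.append((c, X[mask].mean(axis=0), mask.sum() / n))
    S_B = np.zeros((d, d))
    for a, (ca, ma, pa) in enumerate(groups):
        for cb, mb, pb in groups[a + 1:]:
            if ca != cb:  # only pairs of subclasses from different classes
                diff = (ma - mb)[:, None]
                S_B += pa * pb * (diff @ diff.T)
    centered = X - X.mean(axis=0)
    S_X = centered.T @ centered / n + reg * np.eye(d)
    # Symmetric reduction: with S_X = L L^T, solve L^-1 S_B L^-T u = lam u.
    L_inv = np.linalg.inv(np.linalg.cholesky(S_X))
    evals, U = np.linalg.eigh(L_inv @ S_B @ L_inv.T)
    order = np.argsort(evals)[::-1][:n_components]
    return L_inv.T @ U[:, order]


# Toy data: class 0 is bimodal (modes at x = -6 and x = +6) and class 1 sits
# between its modes, so the two class means nearly coincide.
rng = np.random.default_rng(0)
X = np.vstack([
    rng.normal([-6.0, 0.0], 0.5, size=(50, 2)),
    rng.normal([6.0, 0.0], 0.5, size=(50, 2)),
    rng.normal([0.0, 0.0], 0.5, size=(100, 2)),
])
y = np.array([0] * 100 + [1] * 100)

sub, selected = precluster(X, y, n_selected=1, k=2)
V = sda_projection(X, y, sub, n_components=1)
z = X @ V[:, 0]

# Assign each projected sample to the class of its nearest projected subclass mean.
keys = sorted({(int(c), int(h)) for c, h in zip(y, sub)})
means = np.array([z[(y == c) & (sub == h)].mean() for c, h in keys])
pred = np.array([keys[i][0] for i in np.abs(z[:, None] - means).argmin(axis=1)])
accuracy = (pred == y).mean()
```

In this toy setting only the bimodal class is selected and split, so no search over subclass counts is run for the unimodal class; the one-dimensional projection then separates the two modes of class 0 from class 1 lying between them.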

This work was supported by the Korea Research Foundation Grant funded by the Korea Government (MOEHRD-KRF-2007-313-D00714).


References

[1] Fukunaga, K.: Introduction to Statistical Pattern Recognition, 2nd edn. Academic Press, San Diego (1990)

[2] Belhumeur, P.N., Hespanha, J.P., Kriegman, D.J.: Eigenfaces vs. Fisherfaces: Recognition using class specific linear projection. IEEE Trans. Pattern Anal. and Machine Intell. PAMI 19(7), 711–720 (1997)

[3] Yu, H., Yang, J.: A direct LDA algorithm for high-dimensional data - with application to face recognition. Pattern Recognition 34, 2067–2070 (2001)

[4] Yang, M.-H.: Kernel Eigenfaces vs. kernel Fisherfaces: Face recognition using kernel methods. In: Proceedings of the Fifth IEEE International Conference on Automatic Face and Gesture Recognition, pp. 215–220 (2002)

[5] Loog, M., Duin, R.P.W.: Linear Dimensionality Reduction via a Heteroscedastic Extension of LDA: The Chernoff Criterion. IEEE Trans. Pattern Anal. and Machine Intell. PAMI 26(6), 732–739 (2004)

[6] Rueda, L., Herrera, M.: A new approach to multi-class linear dimensionality reduction. In: Martínez-Trinidad, J.F., Carrasco Ochoa, J.A., Kittler, J. (eds.) CIARP 2006. LNCS, vol. 4225, pp. 634–643. Springer, Heidelberg (2006)

[7] Roweis, S., Saul, L.K.: Nonlinear dimensionality reduction by locally linear embedding. Science 290, 2323–2326 (2000)

[8] Fraley, C., Raftery, A.E.: How many clusters? Which clustering method? Answers via model-based cluster analysis. The Computer Journal 41(8), 578–588 (1998)

[9] Halbe, Z., Aladjem, M.: Model-based mixture discriminant analysis - An experimental study. Pattern Recognition 38, 437–440 (2005)

[10] Zhu, M., Martinez, A.M.: Subclass discriminant analysis. IEEE Trans. Pattern Anal. and Machine Intell. PAMI 28(8), 1274–1286 (2006)

[11] Kim, S.-W., Duin, R.P.W.: On using a pre-clustering technique to optimize LDA-based classifiers for appearance-based face recognition. In: Rueda, L., Mery, D., Kittler, J. (eds.) CIARP 2007. LNCS, vol. 4756, pp. 466–476. Springer, Heidelberg (2007)


Copyright information

© 2008 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Kim, SW. (2008). On Optimizing Subclass Discriminant Analysis Using a Pre-clustering Technique. In: Ruiz-Shulcloper, J., Kropatsch, W.G. (eds) Progress in Pattern Recognition, Image Analysis and Applications. CIARP 2008. Lecture Notes in Computer Science, vol 5197. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-85920-8_36


DOI: https://doi.org/10.1007/978-3-540-85920-8_36

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-85919-2

Online ISBN: 978-3-540-85920-8

eBook Packages: Computer Science, Computer Science (R0)