Abstract

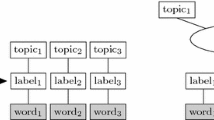

Robust estimation of the state-transition probabilities of high-order Markov processes is essential in many applications, such as natural language modeling and protein sequence modeling. We propose a novel estimation algorithm called Hierarchical Separated Dirichlet Smoothing (HSDS), in which Dirichlet distributions are hierarchically assumed as the prior distributions of the state-transition probabilities. The key idea in HSDS is to separate the parameters of a Dirichlet distribution into the precision and the mean, so that the precision depends on the context while the mean is given by the lower-order distribution. HSDS is designed to outperform Kneser-Ney smoothing, currently regarded as the state-of-the-art technique for N-gram natural language models, especially when the number of states is small. Our experiments in protein sequence modeling showed the superiority of HSDS in both perplexity evaluation and classification tasks.
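The separation of precision and mean can be illustrated with the standard posterior-mean estimate under a Dirichlet prior: with counts c(context, s), precision α(context), and prior mean m(s) taken from the next-shorter context, the estimate is (c(context, s) + α(context) · m(s)) / (c(context) + α(context)). The following is a minimal sketch of that formula only, not the HSDS algorithm itself. The abstract does not say how the context-dependent precision is estimated, so the sketch takes it as a caller-supplied function; the names `train_counts`, `smoothed_prob`, and `precision`, and the uniform distribution used below the zeroth order, are assumptions made for illustration.

```python
from collections import Counter, defaultdict


def train_counts(seq, order):
    """Count next-symbol occurrences for every context of length 0..order."""
    counts = defaultdict(Counter)
    for i, sym in enumerate(seq):
        for k in range(order + 1):
            if i - k >= 0:
                # Context = the k symbols immediately preceding position i.
                counts[tuple(seq[i - k:i])][sym] += 1
    return counts


def smoothed_prob(sym, context, counts, states, precision):
    """Posterior-mean transition probability under a Dirichlet prior.

    The prior mean is the estimate for the next-shorter context
    (assumption: uniform over `states` below the empty context).
    The prior precision is `precision(context)`, a hypothetical
    caller-supplied function that must return a positive number.
    """
    context = tuple(context)
    if context:
        # Drop the oldest symbol to obtain the lower-order distribution.
        mean = smoothed_prob(sym, context[1:], counts, states, precision)
    else:
        mean = 1.0 / len(states)
    alpha = precision(context)
    ctx_counts = counts.get(context, Counter())
    total = sum(ctx_counts.values())
    return (ctx_counts[sym] + alpha * mean) / (total + alpha)


# Toy usage on a made-up sequence with a made-up precision function.
seq = "ACDACDAAC"
states = sorted(set(seq))
counts = train_counts(seq, order=2)
p = smoothed_prob("D", ("A", "C"), counts, states, lambda ctx: 1.0 + len(ctx))
print(p)
```

Because each level mixes observed counts with a distribution that already sums to one, the estimates for any context sum to one over the states, including contexts never seen in training. A larger precision pulls the estimate toward the lower-order distribution, while a smaller one trusts the observed counts.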

Keywords

- Posterior Distribution

- Prior Distribution

- Unlabeled Data

- Markov Chain Monte Carlo Method

- Dirichlet Distribution

These keywords were added by machine and not by the authors. This process is experimental and the keywords may be updated as the learning algorithm improves.

Copyright information

© 2007 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Takahashi, R. (2007). Separating Precision and Mean in Dirichlet-Enhanced High-Order Markov Models. In: Kok, J.N., Koronacki, J., Mantaras, R.L.d., Matwin, S., Mladenič, D., Skowron, A. (eds) Machine Learning: ECML 2007. ECML 2007. Lecture Notes in Computer Science, vol 4701. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-74958-5_36

DOI: https://doi.org/10.1007/978-3-540-74958-5_36

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-74957-8

Online ISBN: 978-3-540-74958-5

eBook Packages: Computer Science, Computer Science (R0)