Abstract

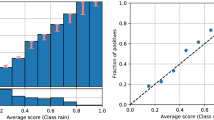

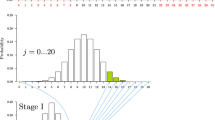

A calibrated classifier provides reliable estimates of the true probability that each test sample is a member of the class of interest. This is crucial in decision-making tasks. Procedures for calibration have already been studied in weather forecasting, game theory, and more recently in machine learning, with the latter showing empirically that calibration of classifiers not only helps in decision making but also improves classification accuracy. In this paper we extend the theoretical foundation of these empirical observations. We prove that (1) a well-calibrated classifier provides bounds on the Bayes error, (2) calibrating a classifier is guaranteed not to decrease classification accuracy, and (3) the procedure of calibration provides the threshold or thresholds on the decision rule that minimize the classification error. We also draw parallels and differences between methods that use receiver operating characteristic (ROC) curves and calibration-based procedures aimed at finding a threshold of minimum error. In particular, calibration leads to improved performance when multiple thresholds exist.
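The multiple-threshold case can be illustrated with a minimal sketch. It assumes histogram binning as the calibration method and a small hand-made data set; both are illustrative choices, not necessarily the procedure analyzed in the paper, and the function names are hypothetical. The classifier's raw score is non-monotonic in the true class probability, so no single cut on the raw score does well, whereas thresholding the calibrated probability at 0.5 effectively uses two cuts.

```python
from collections import defaultdict


def calibrate_by_binning(scores, labels, n_bins=3):
    """Return a function mapping a score in [0, 1] to the empirical
    fraction of positives in its bin (histogram binning, an assumption)."""
    counts = defaultdict(lambda: [0, 0])  # bin index -> [positives, total]
    for s, y in zip(scores, labels):
        b = min(int(s * n_bins), n_bins - 1)
        counts[b][0] += y
        counts[b][1] += 1
    table = {b: pos / tot for b, (pos, tot) in counts.items()}

    def calibrated(s):
        b = min(int(s * n_bins), n_bins - 1)
        return table.get(b, 0.5)  # empty bin: fall back to 0.5

    return calibrated


def accuracy(preds, labels):
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


def best_single_threshold_accuracy(scores, labels):
    """Best accuracy achievable by predicting 1 whenever score >= t."""
    candidates = sorted(set(scores)) + [float("inf")]
    return max(
        accuracy([int(s >= t) for s in scores], labels) for t in candidates
    )


# Hand-made data (hypothetical): low and high scores are mostly positive,
# mid-range scores are mostly negative.
scores = [0.1] * 10 + [0.5] * 10 + [0.9] * 10
labels = [1] * 8 + [0] * 2 + [1] * 2 + [0] * 8 + [1] * 9 + [0] * 1

calibrated = calibrate_by_binning(scores, labels)
calibrated_preds = [int(calibrated(s) >= 0.5) for s in scores]

raw_acc = best_single_threshold_accuracy(scores, labels)
cal_acc = accuracy(calibrated_preds, labels)
print(f"best single raw threshold: {raw_acc:.3f}")
print(f"calibrated, threshold 0.5: {cal_acc:.3f}")
```

On this toy data the best single raw-score threshold classifies 19 of 30 samples correctly, while the calibrated rule classifies 25 of 30. Note that the sketch calibrates and evaluates on the same samples, so it illustrates the idea only and is not an estimate of generalization performance.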




Copyright information

© 2004 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Cohen, I., Goldszmidt, M. (2004). Properties and Benefits of Calibrated Classifiers. In: Boulicaut, JF., Esposito, F., Giannotti, F., Pedreschi, D. (eds) Knowledge Discovery in Databases: PKDD 2004. Lecture Notes in Computer Science, vol 3202. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-30116-5_14


DOI: https://doi.org/10.1007/978-3-540-30116-5_14

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-23108-0

Online ISBN: 978-3-540-30116-5

eBook Packages: Springer Book Archive