Abstract

For various reasons, people are now concerned about directly touching biometric scanners, so a touch-less and non-invasive approach is useful in practical applications. Given the importance and complexity of handwritten character input in human-computer interaction systems, a touch-less character input method is proposed. In this method, a person writes in the air using the finger gesture movement spot. The video of the finger gesture movement in the air is recorded by a camera and processed using computer vision technology, the handwritten character image is then reconstructed, and finally the reconstructed character is recognized. The algorithms for finger gesture spot detection and character image recovery are given, and their effectiveness is verified experimentally in this paper. Because hand motion dynamics can be treated as a biometric and used as an input pattern for a recognition system, finger gesture movement can be a sufficient basis for efficient user identification.

Keywords

- Human Computer Interaction

- Finger Gesture Movement

- Finger gesture recognition

- Finger spot detection

- Computer vision

1 Introduction

With the development of computer technology, human-computer interaction has evolved and improved, from the initial punch card to the keyboard and mouse and then to touch-based handwritten input. At present, touch screen input gives people a fashionable new experience. However, many mobile devices such as mobile phones are limited by size, so handwriting input cannot be performed on a large screen. Therefore, looking for more convenient, more efficient, and more intelligent human-computer interaction methods is an important research topic in the field of embedded human-computer interaction. Touch-less handwritten input that uses finger gesture movement captured by a camera instead of a touch screen is more people-oriented. The basic idea is first to recognize the finger gesture of the hand in the air, then to track the finger's image sequence, acquired frame by frame, using digital image processing technology so as to construct an image of the written character, and finally to recognize the character. With the wide use of electronic devices equipped with cameras, the character input method proposed in this paper will be a good choice, especially in all kinds of multimedia teaching and intelligent information processing. The color, pixel area, and shape characteristics of the finger gesture movement spot are used to overcome the unstable environmental factors that affect spot detection, so that the spot is detected effectively in the image sequences acquired by the camera. This is very important for the subsequent recovery of handwritten character information.

2 Related Work

A gesture is a symbol of physical behavior or emotional expression. It includes body gestures and hand gestures. Gestures fall into two main categories: static gestures [1,2,3,4] and dynamic gestures [5,6,7,8]. For the former, the posture of the body or the gesture of the hand denotes a sign. For the latter, the movement of the body or the hand conveys a message. Gestures can be used as a tool of communication between computer and human [9,10,11]. This is very different from the traditional hardware-based methods, and it accomplishes human-computer interaction through gesture recognition. Gesture recognition determines the user's intent by recognizing the gesture or movement of the body or body parts. Over the past decades, many researchers have strived to improve hand gesture recognition technology. To recognize American Sign Language, the authors of [12, 13] detect the hand region and then track and analyze the moving path. Hand gesture recognition plays an important role in many applications, such as sign language recognition [12,13,14,15], augmented reality (virtual reality) [16–19], sign language interpreters for the disabled [20], and robot control [21, 22]. Recognition systems can gather information about the user's gestures from different sources, such as vision [23], accelerometers [24], touch-sensitive surfaces [25], gyroscopes, or even magnetic field sensors. The last three are usually integrated as MEMS within mobile devices. Gestures are also regarded as a useful data source for authentication in the field of security. Following the main idea of using gestures instead of a standard text password, various analyses and implementations have been developed involving accelerometer-based recognition [24], remote palm-based gestures [26, 27], or touch-based drawing gestures [25].
Owing to their usability in practical applications, we focus on the so-called natural type of gestures. Although such gestures may be classified as behavioral biometrics, like handwritten signatures [28, 29], they also inherit some advantages over physiological biometrics.

3 Proposed Approach

The proposed method comprises two phases:

1. Finger Gesture Recognition (Fig. 1)

2. Visible Spot Detection and Character Recognition

Fig. 1.

(Source: [32])

Examples of natural gestures

3.1 Finger Gesture Recognition

An overview of the finger gesture recognition is shown in Fig. 2. First, the hand is detected using the background subtraction method, and the result of hand detection is transformed into a binary image. Then, the fingers and palm are segmented so as to facilitate finger recognition. Finally, the fingers are detected and recognized.

3.1.1 Detection of Hand

When the camera is on and the hand is in the camera's field of view, the images are captured with a standard camera, i.e., a webcam. The original images used for hand gesture recognition in this work are shown in Fig. 3. These hand images are taken under identical conditions, and the background of the images is identical. Therefore, it is straightforward and effective to find the hand region in the original image using the background subtraction method. However, in some cases other moving objects are included in the result of background subtraction. Skin color is used to discriminate the hand region from the other moving objects. The color of the skin is measured with the HSV (hue, saturation, and value) model.

The procedure of hand detection (Source: [30]). (Color figure online)
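The hand detection step can be sketched as follows. This is a minimal illustration rather than the authors' implementation: frames are assumed to be nested lists of (R, G, B) tuples, and the difference threshold and HSV skin range are hypothetical values, since the paper does not report them.

```python
import colorsys

# Hypothetical parameters: the paper does not report its thresholds.
DIFF_THRESHOLD = 40           # minimum summed RGB change to count as "moving"
SKIN_HUE_MAX = 50 / 360       # assumed upper hue bound for skin tones
SKIN_SAT_RANGE = (0.15, 0.9)  # assumed saturation bounds for skin tones
SKIN_MIN_VALUE = 0.3          # assumed minimum brightness for skin tones


def is_skin(pixel):
    """Return True if an (R, G, B) pixel falls in the assumed HSV skin range."""
    r, g, b = (c / 255.0 for c in pixel)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    in_saturation = SKIN_SAT_RANGE[0] <= s <= SKIN_SAT_RANGE[1]
    return h <= SKIN_HUE_MAX and in_saturation and v > SKIN_MIN_VALUE


def detect_hand(frame, background):
    """Binary mask: 1 where a pixel differs from the background and is skin colored."""
    mask = []
    for frame_row, bg_row in zip(frame, background):
        row = []
        for pixel, bg_pixel in zip(frame_row, bg_row):
            # Background subtraction: summed absolute difference over R, G, B.
            moved = sum(abs(a - b) for a, b in zip(pixel, bg_pixel)) > DIFF_THRESHOLD
            row.append(1 if moved and is_skin(pixel) else 0)
        mask.append(row)
    return mask
```

Combining both tests means that a moving object that is not skin colored is rejected, which is the role the text gives to the HSV skin model.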

3.1.2 Fingers and Palm Segmentation

The output of the hand detection is a binary image in which the white pixels belong to the hand region and the black pixels belong to the background. The following procedure is then applied to the binary hand image to segment the fingers and palm.

-

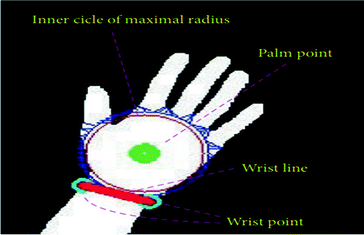

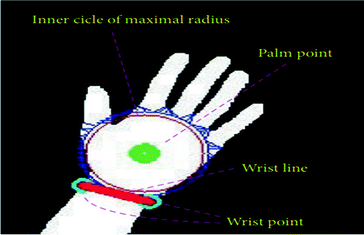

Point of Palm. The palm point is defined as the center point of the palm. It is found using the distance transform. The distance transform, also called the distance map, is a representation of an image in which each pixel records the distance between itself and the nearest boundary pixel. The city block distance is used to measure the distances between the pixels and the nearest boundary pixels.

-

Inner Circle of Maximal Radius. Once the palm point is found, a circle can be drawn inside the palm with the palm point as its center. The circle is called the inner circle because it is contained within the palm. The radius of the circle gradually increases until it reaches the edge of the palm; that is, the radius stops increasing when black pixels are included in the circle.

-

Wrist Points and Palm Mask. When the radius of the maximal inner circle is obtained, a larger circle whose radius is 1.2 times that of the maximal inner circle is produced (Fig. 4).

Fig. 4.

(Source: [30])

The palm point, the wrist points, the wrist line, and the inner circle of maximal radius
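The palm point and inner circle steps above can be sketched as follows. This is a minimal illustration, assuming the hand mask is a nested list of 0/1 values and that the hand does not touch the image border; the function names are hypothetical and not taken from the paper.

```python
def city_block_distance_transform(mask):
    """Two-pass city block distance from each white pixel to the nearest black pixel."""
    rows, cols = len(mask), len(mask[0])
    inf = rows + cols  # larger than any possible city block distance in the image
    dist = [[0 if mask[y][x] == 0 else inf for x in range(cols)] for y in range(rows)]
    # Forward pass: propagate distances from the top and left neighbours.
    for y in range(rows):
        for x in range(cols):
            if dist[y][x]:
                up = dist[y - 1][x] if y > 0 else inf
                left = dist[y][x - 1] if x > 0 else inf
                dist[y][x] = min(dist[y][x], up + 1, left + 1)
    # Backward pass: propagate distances from the bottom and right neighbours.
    for y in reversed(range(rows)):
        for x in reversed(range(cols)):
            if dist[y][x]:
                down = dist[y + 1][x] if y < rows - 1 else inf
                right = dist[y][x + 1] if x < cols - 1 else inf
                dist[y][x] = min(dist[y][x], down + 1, right + 1)
    return dist


def palm_point(mask):
    """Return ((row, col), distance) of the pixel farthest from the boundary."""
    dist = city_block_distance_transform(mask)
    best_value, best_pos = -1, None
    for y, row in enumerate(dist):
        for x, value in enumerate(row):
            if value > best_value:
                best_value, best_pos = value, (y, x)
    return best_pos, best_value


def maximal_inner_radius(mask, center):
    """Grow a circle around center until the next step would include a black pixel."""
    rows, cols = len(mask), len(mask[0])
    cy, cx = center
    radius = 0
    while True:
        candidate = radius + 1
        for y in range(cy - candidate, cy + candidate + 1):
            for x in range(cx - candidate, cx + candidate + 1):
                if (y - cy) ** 2 + (x - cx) ** 2 <= candidate ** 2:
                    outside = not (0 <= y < rows and 0 <= x < cols)
                    if outside or mask[y][x] == 0:
                        return radius
        radius = candidate


def palm_mask_radius(inner_radius):
    """Radius of the larger circle: 1.2 times the maximal inner radius."""
    return 1.2 * inner_radius
```

The two-pass scan is one common way to compute a city block distance map; a library routine could be substituted without changing the idea.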

3.1.3 Recognition of Fingers’ Gesture

The labeling algorithm is applied to the finger segmentation image to mark the regions of the fingers. In the result of labeling, detected regions in which the number of pixels is too small are regarded as noisy regions and discarded. Only regions of sufficient size are regarded as fingers and retained. For each remaining region, that is, each finger, the minimal bounding box that encloses the finger is found. The center of the minimal bounding box is then used to represent the center point of the finger.
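A minimal sketch of this labeling step is given below. It assumes 4-connectivity and an axis-aligned bounding box as a simplification, and the size threshold `MIN_FINGER_PIXELS` is a hypothetical value, since the paper states none.

```python
from collections import deque

MIN_FINGER_PIXELS = 4  # hypothetical size threshold; the paper gives no value


def label_regions(mask):
    """Group white pixels into 4-connected regions using breadth-first search."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    regions = []
    for y in range(rows):
        for x in range(cols):
            if mask[y][x] and not seen[y][x]:
                seen[y][x] = True
                queue, region = deque([(y, x)]), []
                while queue:
                    cy, cx = queue.popleft()
                    region.append((cy, cx))
                    for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                        inside = 0 <= ny < rows and 0 <= nx < cols
                        if inside and mask[ny][nx] and not seen[ny][nx]:
                            seen[ny][nx] = True
                            queue.append((ny, nx))
                regions.append(region)
    return regions


def finger_centers(mask, min_pixels=MIN_FINGER_PIXELS):
    """Center (row, col) of the bounding box of each region that is large enough."""
    centers = []
    for region in label_regions(mask):
        if len(region) < min_pixels:
            continue  # too few pixels: treated as noise and discarded
        ys = [p[0] for p in region]
        xs = [p[1] for p in region]
        centers.append(((min(ys) + max(ys)) / 2, (min(xs) + max(xs)) / 2))
    return centers
```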

-

Thumb Detection and Recognition. The centers of the fingers are connected by lines to the palm point. Then the angles between these lines and the wrist line are computed. If there is an angle smaller than 50°, the thumb appears in the hand image, and the corresponding center is the center point of the thumb. If all the angles are larger than 50°, the thumb does not exist in the image.

-

Detection and Recognition of the Other Fingers. In order to detect and recognize the other fingers, the palm line, which is parallel to the wrist line, is searched first. The search starts from the row of the wrist line. For each row, a line parallel to the wrist line crosses the hand. If there is only one connected set of white pixels in the intersection of the line and the hand, the line shifts upward. Once there is more than one connected set of white pixels in the intersection, the line is regarded as a candidate for the palm line. If the thumb is not detected, the line crossing the hand with more than one connected set of white pixels in the intersection is chosen as the palm line. If the thumb exists, the line continues to move upward, with the edge points of the palm rather than the thumb as the starting point of the line. Since the thumb is removed, there is only one connected set of pixels in the intersection of the line and the hand. Each finger then falls into one of four parts of the palm line according to the horizontal coordinate of its center point. If the finger falls into the first part, it is the forefinger. If it belongs to the second part, it is the middle finger. The third part corresponds to the ring finger, and the fourth part to the little finger.
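The thumb test and the finger assignment can be sketched as follows. Several assumptions are made for illustration: points are (x, y) pairs, the wrist line is horizontal so that rows of the mask are parallel to it, the angle is measured between undirected lines, the palm line is split into four equal parts, and only the case without a thumb is handled in the palm line search. All function names are hypothetical.

```python
import math

THUMB_ANGLE_DEG = 50  # threshold stated in the text


def angle_to_wrist_line(palm, finger, wrist_a, wrist_b):
    """Angle in degrees (0 to 90) between the palm-to-finger line and the wrist line."""
    fx, fy = finger[0] - palm[0], finger[1] - palm[1]
    wx, wy = wrist_b[0] - wrist_a[0], wrist_b[1] - wrist_a[1]
    cos_angle = abs(fx * wx + fy * wy) / (math.hypot(fx, fy) * math.hypot(wx, wy))
    return math.degrees(math.acos(min(1.0, cos_angle)))


def find_thumb(palm, centers, wrist_a, wrist_b):
    """Return the first finger center whose angle is below 50 degrees, or None."""
    for center in centers:
        if angle_to_wrist_line(palm, center, wrist_a, wrist_b) < THUMB_ANGLE_DEG:
            return center
    return None


def count_white_runs(row):
    """Number of connected sets of white pixels along one image row."""
    return sum(1 for i, v in enumerate(row) if v and (i == 0 or not row[i - 1]))


def find_palm_line(mask, wrist_row):
    """Scan upward from the wrist row; return the first row with more than one run."""
    for y in range(wrist_row, -1, -1):
        if count_white_runs(mask[y]) > 1:
            return y
    return None


def finger_name(center_x, palm_left, palm_right):
    """Assign a finger by the quarter of the palm line that contains center_x."""
    names = ["forefinger", "middle finger", "ring finger", "little finger"]
    width = (palm_right - palm_left) / 4
    index = int((center_x - palm_left) / width)
    return names[max(0, min(3, index))]
```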

3.2 Finger Visible Spot Detection and Character Recognition

The color, pixel area, and shape characteristics of the finger spot are used to overcome the unstable environmental light that affects the results of finger spot detection, so that the finger spot is detected effectively in the image sequences acquired by the camera. This is important for the subsequent recovery of handwritten character information.

3.2.1 The Improvement of Visible Finger Spot Detection

Finger spot detection is the basis and premise for the subsequent synthesis and recognition of handwritten characters. The robustness of the finger spot detection technique adopted in earlier work is low, and it is not suitable for environments with large changes. In addition, it uses only the object's color property to segment the object: the H plane is used to segment the object roughly, and then the object is segmented in detail through the S or L plane that has the same H component [31]. To make finger spot detection more robust, this paper uses more than one characteristic of the finger spot, namely color, pixel area, and shape, to detect the finger spot effectively in the image sequence.

The specific segmentation method is as follows:

-

First, the images of the visible finger spot moving in the air are segmented roughly, frame by frame, using the color feature of the light spot.

-

Second, the segmented images are processed with binary morphology operations to remove tiny noise and fill in object voids.

-

Finally, the processed images are filtered using the characteristic parameters of the finger spot, namely shape and pixel area, to remove large-area noise other than the finger spot.

The flow chart of finger spot detection is shown in Fig. 5.

The result of image segmentation differs between color spaces. The HSL color space has therefore been chosen to segment the finger spot from the image sequence effectively. The detailed reason is that the R, G, and B color planes are strongly correlated with one another in the RGB color space, whereas in the HSL color space only H and S contain the color information of the image, and L is independent of the color information [33, 34]. Of the two candidate techniques, gray threshold segmentation and segmentation using color features, the second is chosen in this paper, for the following reason. When somebody writes characters in the air with a finger, the camera captures not only the finger spot but also the person who is writing. The person, as part of the background, moves continually, so the background of the finger spot changes correspondingly and the background threshold changes as well, which makes it hard to find an appropriate threshold for segmenting the finger spot. The gray threshold segmentation technique is therefore not suitable for this case, and the segmentation technique using color features is adopted in this paper. The specific segmentation steps are as follows: first obtain the color histogram of the finger spot in the HSL color space, then obtain the rough binary segmentation of the finger spot through the color histogram. Because the color histogram is three-dimensional, three separate one-dimensional histograms are used to show the H, S, and L histograms of the finger spot, so that the distribution of each color plane can be examined intuitively.
The color of the finger spot is unevenly distributed (the color at the margin of the finger spot is darker), so the H component histogram shows two peaks, in the ranges 0–2 and 210–215. To filter out the background and other interfering colors effectively, the selected H threshold must include the colors of all pixels of the spot. We therefore choose 0–2 and 210–215 as the H threshold ranges. The S component, which represents color saturation, shows a single peak in the histogram, with the saturation of all pixels distributed in the interval 0–10. L is the brightness component and is independent of the color information; it has a single peak in the range 250–255.
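The rough color segmentation with these thresholds can be sketched as follows. The scale of the H, S, and L values is not stated in the text; this sketch assumes that each component is scaled to 0–255, which is an assumption made only for illustration, and it uses the standard library `colorsys` module rather than the authors' tooling.

```python
import colorsys

H_RANGES = [(0, 2), (210, 215)]  # the two hue peaks reported in the text
S_RANGE = (0, 10)                # saturation interval reported in the text
L_RANGE = (250, 255)             # brightness interval reported in the text


def to_hsl_255(pixel):
    """Convert an (R, G, B) pixel to (H, S, L), each scaled to 0..255 (assumed scale)."""
    r, g, b = (c / 255.0 for c in pixel)
    h, l, s = colorsys.rgb_to_hls(r, g, b)  # note: colorsys returns H, L, S order
    return round(h * 255), round(s * 255), round(l * 255)


def is_spot_pixel(pixel):
    """True if the pixel lies inside all three threshold ranges."""
    h, s, l = to_hsl_255(pixel)
    in_hue = any(lo <= h <= hi for lo, hi in H_RANGES)
    return in_hue and S_RANGE[0] <= s <= S_RANGE[1] and L_RANGE[0] <= l <= L_RANGE[1]


def segment_spot(frame):
    """Rough binary segmentation of one frame given as nested lists of RGB tuples."""
    return [[1 if is_spot_pixel(p) else 0 for p in row] for row in frame]
```

Because the saturation range is low and the brightness range is high, only nearly white, very bright pixels pass, which is why the later noise removal steps are still needed under bright ambient light.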

The segmentation of the finger spot above uses only the color information of the finger spot, but the instability of environmental light leads to strong background noise. The light outside the window is brighter than the light inside the room, and the camera faces an unstable ambient light, so the segmented binary image of the finger spot contains not only very small particle noise but also large-area noise. To achieve an ideal segmentation result, we adopt binary morphology operations and a particle removal method to remove the different kinds of noise separately. The binary morphology open operation filters out small noise, and the close operation smooths the object contour and fills in object voids. Both operations are applied with 3 × 3 square structuring elements. The small noise is filtered effectively by the binary morphology operations, but noise whose pixel area is far larger than that of the small particle noise still remains. It is therefore necessary to filter again to remove the large noise. Because the finger spot has a certain pixel area and its image is approximately circular, the particle removal operation is used.
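The open and close operations with a 3 × 3 square structuring element can be sketched as follows. This is a minimal illustration on nested lists of 0/1 values; pixels outside the image are treated as background, which is an assumption about border handling that the paper does not specify.

```python
def _apply(mask, combine):
    """Apply combine (all or any) over each pixel's 3 x 3 neighbourhood."""
    rows, cols = len(mask), len(mask[0])
    out = []
    for y in range(rows):
        row = []
        for x in range(cols):
            # Pixels outside the image count as background (False).
            window = [
                0 <= ny < rows and 0 <= nx < cols and mask[ny][nx] == 1
                for ny in range(y - 1, y + 2)
                for nx in range(x - 1, x + 2)
            ]
            row.append(1 if combine(window) else 0)
        out.append(row)
    return out


def erode(mask):
    """A pixel stays white only if its whole 3 x 3 neighbourhood is white."""
    return _apply(mask, all)


def dilate(mask):
    """A pixel becomes white if any pixel in its 3 x 3 neighbourhood is white."""
    return _apply(mask, any)


def opening(mask):
    """Erosion followed by dilation: removes small particle noise."""
    return dilate(erode(mask))


def closing(mask):
    """Dilation followed by erosion: smooths contours and fills small voids."""
    return erode(dilate(mask))
```

In this sketch an isolated white pixel disappears under `opening`, and a one-pixel hole inside a solid block is filled by `closing`.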

From the above analysis, we can conclude that measuring the shape and pixel area parameters of the finger spot is the key. If the screening parameters used in the filter deviate noticeably from the actual values, either the finger spot is filtered out or the noise is not removed. It is therefore necessary to measure and collect statistics on the ranges of the shape and pixel area parameters of the finger spot. The histograms of the pixel area and the shape parameter of the finger spot are calculated; the pixel area is distributed in 200–400 and the shape parameter in 1.01–1.19. If the detected finger spot is too large, the stroke of the recovered handwritten character will be too thick for the character to be recognized. To ensure the character recognition rate, the detected finger spot is therefore replaced with a circle whose center is the center coordinate of the finger spot and whose radius r is nine pixels (chosen in accordance with the relevant national standards for characters). Finally, after the finger spot is restored, the image sequence of the finger spot is mirrored so that it is displayed in the right direction in the image window.
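The particle filter, the circle replacement, and the mirroring can be sketched as follows. The text does not define its shape parameter; this sketch assumes it is the ratio of the particle perimeter to the perimeter of a circle with the same area, which equals 1 for a perfect circle. That definition, and the function names, are assumptions made for illustration.

```python
import math

AREA_RANGE = (200, 400)     # pixel area range reported in the text
SHAPE_RANGE = (1.01, 1.19)  # shape parameter range reported in the text
SPOT_RADIUS = 9             # radius r of the replacement circle, in pixels


def shape_factor(area, perimeter):
    """Assumed shape parameter: perimeter over that of an equal-area circle."""
    return perimeter / (2 * math.sqrt(math.pi * area))


def is_finger_spot(area, perimeter):
    """Keep a particle only if both its area and its shape factor are in range."""
    in_area = AREA_RANGE[0] <= area <= AREA_RANGE[1]
    in_shape = SHAPE_RANGE[0] <= shape_factor(area, perimeter) <= SHAPE_RANGE[1]
    return in_area and in_shape


def draw_spot(rows, cols, center, radius=SPOT_RADIUS):
    """Blank binary image with a filled circle of the given radius at (row, col)."""
    cy, cx = center
    return [
        [1 if (y - cy) ** 2 + (x - cx) ** 2 <= radius ** 2 else 0 for x in range(cols)]
        for y in range(rows)
    ]


def mirror(image):
    """Flip the image left to right so the writing reads in the right direction."""
    return [row[::-1] for row in image]
```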

3.2.2 Handwritten Input Character’s Synthesis and Recognition

To form a complete character image, a logical ‘or’ operation is performed on all the processed images above. A new frame image is obtained when a logical ‘or’ operation is carried out between the first frame image and the second frame image; a logical ‘or’ operation is then carried out between the new frame image and the third frame image, and so on. In this way the movement of the finger spot is displayed continuously in the image window, so that the handwritten character is shown while it is being written. The synthesized character is compared with the feature database, and the character with the highest similarity is the final recognition result. In this paper, we use the OCR training interface module to perform character threshold segmentation, specify the region of interest, and adjust the character spacing after single-character division, and then recognize each character and output the result. The experimental result indicates that the OCR training interface module can effectively identify the synthesized handwritten character and provides a convenient way to recognize characters.
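The frame-by-frame synthesis can be sketched as follows. The accumulation follows the logical ‘or’ description above; the recognition part is only a stand-in, since the paper uses an OCR training interface module whose internals are not described. The pixel agreement similarity and the template dictionary are assumptions made for illustration.

```python
def accumulate(frames):
    """OR the binary frames together one by one to build up the character image."""
    canvas = [row[:] for row in frames[0]]
    for frame in frames[1:]:
        canvas = [[a | b for a, b in zip(ra, rb)] for ra, rb in zip(canvas, frame)]
    return canvas


def similarity(image, template):
    """Assumed similarity measure: fraction of pixels on which two images agree."""
    pairs = [(a, b) for ra, rb in zip(image, template) for a, b in zip(ra, rb)]
    return sum(a == b for a, b in pairs) / len(pairs)


def recognize(image, templates):
    """Return the label of the template with the highest similarity."""
    return max(templates, key=lambda label: similarity(image, templates[label]))


# Usage: three frames, each holding one spot position of a vertical stroke.
frames = [
    [[0, 1, 0], [0, 0, 0], [0, 0, 0]],
    [[0, 0, 0], [0, 1, 0], [0, 0, 0]],
    [[0, 0, 0], [0, 0, 0], [0, 1, 0]],
]
templates = {
    "I": [[0, 1, 0], [0, 1, 0], [0, 1, 0]],
    "-": [[0, 0, 0], [1, 1, 1], [0, 0, 0]],
}
character = accumulate(frames)
label = recognize(character, templates)
```

Because each new frame is combined with the running result, the partially written character can be displayed after every frame, which matches the simultaneous display described in the text.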

3.3 Experimental Results

In the experiments, two data sets of hand gestures are used to evaluate the performance of the proposed technique. Data set 1 is an image collection of thirteen gestures. For every gesture, one hundred images are captured, so there are 1300 images in total for hand gesture recognition. All the gesture images belong to three females and four males. The size of each gesture image is 640 × 480 (Fig. 6).

(Source: [30])

Experimental phase of the recognition of finger gesture

The accuracy rate of the proposed handwriting technique using touch-less finger gesture movement for human-computer interaction is about 80.21% (Fig. 7).

4 Conclusion

A handwritten character input method based on computer vision is presented here. After detecting the finger gesture movement spot, we synthesize a character that conforms to the national standards for input characters and recognize it online. The handwritten character input method adopted here can help future users move beyond traditional human-machine interaction through the keyboard, mouse, and so on.

References

1. Bagdanov, A.D., Del Bimbo, A., Seidenari, L., Usai, L.: Real-time hand status recognition from RGB-D imagery. In: Proceedings of the 21st International Conference on Pattern Recognition (ICPR 2012), pp. 2456–2459, November 2012

2. Elmezain, M., Al-Hamadi, A., Michaelis, B.: A robust method for hand gesture segmentation and recognition using forward spotting scheme in conditional random fields. In: Proceedings of the 20th International Conference on Pattern Recognition (ICPR 2010), pp. 3850–3853, August 2010

3. Lee, C.-S., Chun, S.Y., Park, S.W.: Articulated hand configuration and rotation estimation using extended torus manifold embedding. In: Proceedings of the 21st International Conference on Pattern Recognition (ICPR 2012), pp. 441–444, November 2012

4. Malgireddy, M.R., Corso, J.J., Setlur, S., Govindaraju, V., Mandalapu, D.: A framework for hand gesture recognition and spotting using sub-gesture modeling. In: Proceedings of the 20th International Conference on Pattern Recognition (ICPR 2010), pp. 3780–3783, August 2010

5. Suryanarayan, P., Subramanian, A., Mandalapu, D.: Dynamic hand pose recognition using depth data. In: Proceedings of the 20th International Conference on Pattern Recognition (ICPR 2010), pp. 3105–3108, August 2010

6. Park, S., Yu, S., Kim, J., Kim, S., Lee, S.: 3D hand tracking using Kalman filter in depth space. Eurasip J. Adv. Signal Process. 2012(1), Article 36 (2012)

7. Raheja, J.L., Chaudhary, A., Singal, K.: Tracking of fingertips and centers of palm using KINECT. In: Proceedings of the 2nd International Conference on Computational Intelligence, Modelling and Simulation (CIMSim 2011), pp. 248–252, September 2011

8. Wang, Y., Yang, C., Wu, X., Xu, S., Li, H.: Kinect based dynamic hand gesture recognition algorithm research. In: Proceedings of the 4th International Conference on Intelligent Human-Machine Systems and Cybernetics (IHMSC 2012), pp. 274–279, August 2012

9. Panwar, M.: Hand gesture recognition based on shape parameters. In: Proceedings of the International Conference on Computing, Communication and Applications (ICCCA 2012), pp. 1–6, February 2012

10. Meng, Z.Y., Pan, J.-S., Tseng, K.-K., Zheng, W.: Dominant points based hand finger counting for recognition under skin color extraction in hand gesture control system. In: Proceedings of the 6th International Conference on Genetic and Evolutionary Computing (ICGEC 2012), pp. 364–367, August 2012

11. Harshitha, R., Syed, I.A., Srivasthava, S.: HCI using hand gesture recognition for digital sand model. In: Proceedings of the 2nd IEEE International Conference on Image Information Processing (ICIIP 2013), pp. 453–457 (2013)

12. Yang, R., Sarkar, S., Loeding, B.: Handling movement epenthesis and hand segmentation ambiguities in continuous sign language recognition using nested dynamic programming. IEEE Trans. Pattern Anal. Mach. Intell. 32(3), 462–477 (2010)

13. Zafrulla, Z., Brashear, H., Starner, T., Hamilton, H., Presti, P.: American sign language recognition with the Kinect. In: Proceedings of the 13th ACM International Conference on Multimodal Interfaces (ICMI 2011), pp. 279–286, November 2011

14. Uebersax, D., Gall, J., Van den Bergh, M., Van Gool, L.: Realtime sign language letter and word recognition from depth data. In: Proceedings of the IEEE International Conference on Computer Vision Workshops (ICCV 2011), pp. 383–390, November 2011

15. Pugeault, N., Bowden, R.: Spelling it out: real-time ASL fingerspelling recognition. In: Proceedings of the IEEE International Conference on Computer Vision Workshops (ICCV 2011), pp. 1114–1119, November 2011

16. Wickeroth, D., Benolken, P., Lang, U.: Markerless gesture based interaction for design review scenarios. In: Proceedings of the 2nd International Conference on the Applications of Digital Information and Web Technologies (ICADIWT 2009), pp. 682–687, August 2009

17. Frati, V., Prattichizzo, D.: Using Kinect for hand tracking and rendering in wearable haptics. In: Proceedings of the IEEE World Haptics Conference (WHC 2011), pp. 317–321, June 2011

18. Choi, J., Park, H., Park, J.-I.: Hand shape recognition using distance transform and shape decomposition. In: Proceedings of the 18th IEEE International Conference on Image Processing (ICIP 2011), pp. 3605–3608, September 2011

19. Tan, T.-D., Guo, Z.-M.: Research of hand positioning and gesture recognition based on binocular vision. In: Proceedings of the IEEE International Symposium on Virtual Reality Innovations (ISVRI 2011), pp. 311–315, March 2011

20. Zeng, J., Sun, Y., Wang, F.: A natural hand gesture system for intelligent human-computer interaction and medical assistance. In: Proceedings of the 3rd Global Congress on Intelligent Systems (GCIS 2012), pp. 382–385, November 2012

21. Droeschel, D., Stuckler, J., Behnke, S.: Learning to interpret pointing gestures with a time-of-flight camera. In: Proceedings of the 6th ACM/IEEE International Conference on Human-Robot Interaction (HRI 2011), pp. 481–488, March 2011

22. Hu, K., Canavan, S., Yin, L.: Hand pointing estimation for human computer interaction based on two orthogonal-views. In: Proceedings of the 20th International Conference on Pattern Recognition (ICPR 2010), pp. 3760–3763, August 2010

23. Hachaj, T., Ogiela, M.R., Piekarczyk, M.: Dependence of Kinect sensors number and position on gestures recognition with Gesture Description Language semantic classifier. In: Proceedings of the FedCSIS. Series: Annals of Computer Science and Information Systems, vol. 1, pp. 571–575 (2013)

24. Wu, J., Pan, G., Zhang, D., Qi, G., Li, S.: Gesture recognition with a 3-D accelerometer. In: Zhang, D., Portmann, M., Tan, A.-H., Indulska, J. (eds.) UIC 2009. LNCS, vol. 5585, pp. 25–38. Springer, Heidelberg (2009). https://doi.org/10.1007/978-3-642-02830-4_4

25. Sae-bae, N., et al.: Multitouch gesture-based authentication. IEEE Trans. Inf. Forensics Secur. 9(4), 568–582 (2014)

26. Piekarczyk, M., Ogiela, M.R.: On using palm and finger movements as a gesture-based biometrics. In: Proceedings of International Conference on Intelligent Networking and Collaborative Systems, pp. 211–216. IEEE, Los Alamitos (2015). https://doi.org/10.1109/incos.2015.83

27. Piekarczyk, M., Ogiela, M.R.: The touchless person authentication using gesture-types emulation of handwritten signature templates. In: Proceedings of Tenth International Conference on Broadband and Wireless Computing, Communication and Applications, pp. 132–136. IEEE, Los Alamitos (2015)

28. Piekarczyk, M., Ogiela, M.R.: Hierarchical graph-grammar model for secure and efficient handwritten signatures classification. J. Univer. Comput. Sci. 17(6), 926–943 (2011). https://doi.org/10.3217/jucs-017-06-0926

29. Piekarczyk, M., Ogiela, M.R.: Matrix-based hierarchical graph matching in off-line handwritten signatures recognition. In: Proceedings of 2nd IAPR Asian Conference on Pattern Recognition, pp. 897–901. IEEE, Los Alamitos (2013). https://doi.org/10.1109/acpr.2013.164

30. Chen, Z., Kim, J.-T., Liang, J., Zhang, J., Yuan, Y.-B.: Real-time hand gesture recognition using finger segmentation. Sci. World J. 2014, Article ID 267872, 9 pages (2014)

31. Yun, S.N., Zhong, L.X.: Experimental research on handwritten character written in the air recognition based on computer vision. In: 2011 4th International Congress on Image and Signal Processing (CISP) (2011)

32. Piekarczyk, M., Ogiela, M.R.: Touch-less personal verification using palm and fingers movements tracking. In: Dang, P., Ku, M., Qian, T., Rodino, L. (eds.) New Trends in Analysis and Interdisciplinary Applications. Trends in Mathematics. Birkhäuser, Cham (2017)

33. Cheng, H.D., Jiang, X.H., Sun, Y., et al.: Color image segmentation: advances and prospects. Pattern Recogn. 34(12), 2259–2281 (2001)

34. Kurugollu, F., Sankur, B., Harmanci, A.E.: Color image segmentation using histogram multithresholding and fusion. Image Vis. Comput. 19(13), 915–928 (2001)

© 2018 Springer International Publishing AG, part of Springer Nature

About this paper

Cite this paper

Joarder, Y.A., Hossain, M.B., Uddin, M.J., Islam, M.Z. (2018). A Novel Hand Written Technique Using Touch-Less Finger Gesture Movement for Human Computer Interaction. In: Kurosu, M. (eds) Human-Computer Interaction. Interaction Technologies. HCI 2018. Lecture Notes in Computer Science, vol 10903. Springer, Cham. https://doi.org/10.1007/978-3-319-91250-9_22

DOI: https://doi.org/10.1007/978-3-319-91250-9_22

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-91249-3

Online ISBN: 978-3-319-91250-9

eBook Packages: Computer Science, Computer Science (R0)