Abstract

With the prevalence of data online, consumers increasingly shop not only for the product that best fits their needs, but also for the best time to purchase the product in order to reduce its cost. In line with this behavior, ecommerce websites often not only offer products, but also provide analytics based statements and recommendations relating to the best time to purchase a perishable product (e.g., air travel). This study examines the effects of such purchase timing statements and recommendations on consumers’ trusting beliefs in the recommendation facility. Our theoretical background comes from Toulmin’s (1958) argumentation model and the literature related to the role of explanation facilities in enhancing consumers’ trust. Results from our pilot study show evidence for the different roles Toulmin elements have, serving as explanation facilities in the context of predictive analytics.

1 Introduction

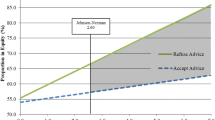

Ecommerce websites often offer not only products, but also advice and arguments related to purchase timing. Most notably, ecommerce sites in the travel industry increasingly provide information intended to affect purchase timing. These sites increasingly include recommendation functionality that advises consumers on when to buy in order to get a better deal. Further, the sites provide different statements that accompany the recommendation (see Fig. 1). While such mechanisms are becoming increasingly popular in ecommerce websites, their impact is still not clear. More specifically, do such statements and advice affect purchase timing? Do the statements increase consumers’ trust in the ecommerce site and its recommendation? If so, which statements affect which trusting beliefs?

In this research we examine the effect of purchase timing related statements and advice on trusting beliefs in the site’s recommendation facility. In order to analyze statements provided by websites, we refer to Toulmin’s [14] model of argumentation, which helps us to categorize statements according to their role in increasing the strength of an argument. It has long been shown that well-structured trust assuring arguments can increase consumers’ trust in a website and their intention to transact with it [7]. More recent studies have shown that well-structured arguments in the context of health related information are more highly trusted [9], and that perceptions of the social capital of team members in virtual teams are affected by the quality of the argumentation provided in their profiles [2]. We extend this line of research to analyze how supporting an argument establishes trusting beliefs in a recommendation agent driven by analytics.

Unlike recommendations in other domains, analytics based recommendations are derived from highly complex processes and algorithms, which are difficult for most users to grasp. As a result, often only very partial information is provided to the user, and, as shown in the examples above, its evaluation may be difficult and its implications may be unclear. This is in contrast to other Recommendation Agents (RA), such as product fit RAs, in which the logic of the recommendation can be more easily conveyed and understood [16]. Therefore, in the context of analytics, the effect of the soundness of the argument is not clear, as information is always very partial. Further, a question that arises is whether partial arguments still affect trust, and whether different partial arguments do so in different ways. Arguments associated with analytics about purchase timing are especially interesting: while statements supporting an argument are generally expected to enhance trust, in the predictive analytics domain they may also have adverse effects on trust, as they may expose the user to a sense of surveillance and lack of privacy.

By referring to Toulmin’s [14] model elements as explanation facilities [16], we theorize on the different effects each individual element of the model can have on trust. The different elements defined by Toulmin are (1) data, the facts and grounds for our recommendation, (2) warrants, the way facts are used to arrive at the recommendation, (3) backings, the justification of why the warrant is valid, and (4) rebuttals and qualifiers, the extent to which the recommendation is sound, as well as the conditions under which it may not hold. We suggest that each one of these can be referred to as an explanation facility, thereby potentially enhancing trust even when brought individually, and potentially in different ways on different trusting beliefs.

Our initial pilot study shows support for the notion that individual statements can enhance trusting beliefs in the context of predictive business analytics. Backing arguments appear to be mostly associated with benevolence and competence trust. Rebuttals enhance integrity trust. Data enhance all trusting beliefs.

2 Theoretical Background

2.1 Toulmin’s Model of Arguments

Toulmin [14] models arguments as composed of different statements that support a claim, or assertion. In the context of our study, the claim is the recommendation provided to the user. According to Toulmin, the core elements of argumentation that support a claim are data, warrant, backing and rebuttal. Data relates to the facts that helped establish the claim. Warrants relate to the bearing of the claim, or the step made from the data to arrive at the claim. While data are more specific, warrants are general, hypothetical statements, which can act as bridges, and authorize the sort of step to which our particular argument commits. Backings are assurances for the warrant’s authority and currency [15]. According to Toulmin’s model, as one moves from supporting an argument with data alone to augmenting it with warrants, backings, and rebuttals, the claim becomes sounder. We suggest that due to the complexity of the predictive analytics domain, and thus the difficulty in providing a sound argument, the use of warrants, backings, or rebuttals alone may still enhance trust. Further, we suggest that each of the different types of statements may enhance different trusting beliefs.
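To make the structure of such arguments concrete, the following is a minimal Python sketch of a claim with its optional supporting elements. The class name, field names, and the example statement are our own illustrative assumptions and are not taken from the study materials.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToulminArgument:
    """A claim plus the optional Toulmin elements that support it (illustrative)."""
    claim: str                      # the recommendation shown to the user
    data: Optional[str] = None      # facts the claim rests on
    warrant: Optional[str] = None   # general step from the data to the claim
    backing: Optional[str] = None   # assurance that the warrant is valid
    rebuttal: Optional[str] = None  # conditions under which the claim may not hold

    def supporting_elements(self) -> list[str]:
        """Names of the Toulmin elements that are actually present."""
        names = ("data", "warrant", "backing", "rebuttal")
        return [n for n in names if getattr(self, n) is not None]

    def is_partial(self) -> bool:
        """True when at least one supporting element is missing."""
        return len(self.supporting_elements()) < 4


# Hypothetical example: a "wait" recommendation supported by a warrant only.
example = ToulminArgument(
    claim="Wait: fares are expected to drop.",
    warrant="Fares on this route usually fall in the weeks before departure.",
)
print(example.supporting_elements())  # ['warrant']
print(example.is_partial())           # True
```

In this representation, the partial arguments discussed here are simply instances in which most of the optional fields are left empty.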

2.2 Trusting Beliefs

Trusting beliefs refer to the perceptions of the trustor about a trusted entity, with respect to three dimensions: competence, benevolence, and integrity [8]. This view of trusting beliefs in technological artefacts is adopted from the traditional view of trusting beliefs in interpersonal communication [13], since people treat computers as social actors and apply social rules to them [11]. In the context of RAs, competence trust refers to a user’s perception that the RA has the ability, skills, and expertise to perform effectively in specific domains. Benevolence trust is a user’s perception that an RA cares about the user and acts in the user’s interest. Integrity trust is the perception that an RA adheres to a set of principles (e.g., honesty and keeping promises) that are generally accepted by consumers [16].

It has been noted that an important distinction exists between competence trust, which is a judgment of ability, and benevolence and integrity trusts, which relate to the morality of the RA [17]. Research suggests that socially, morality related perceptions are given more weight than ability perceptions when people form impressions of others [4]. In the context of ecommerce, evidence for increased satisfaction was found to be associated with increased benevolence trust [17]. It was also found that increased benevolence trust in a seller can explain much of the price premium [10]. Therefore, understanding how such trusting beliefs can be enhanced can potentially have unique practical implications.

2.3 Explanation Facilities

The topic of explanatory capabilities emerged in expert systems, as an attempt to imitate behavior that has been found to be characteristic of trusted entities, such as consultations with human experts [6]. Explanation facilities provide information such as what some terms mean, why certain questions were asked by the system, how conclusions were reached, and why other conclusions were not reached [5]. Different ways have been proposed to classify explanations provided by Knowledge Based Systems and Decision Support Systems [3]. In the context of ecommerce advice, Wang and Benbasat [16] identify ‘why’, ‘how’ and ‘trade-off’ explanation facilities as potential trust enhancing mechanisms that can reduce agency concerns when shopping online. ‘How’ explanations reveal the line of reasoning by outlining the logical process involved. ‘Why’ explanations justify the importance and purpose of the input used by the recommendation facility, in addition to providing justifications for the recommendations provided. ‘Trade-off’ explanations provide decisional guidance to enlighten or sway users as they structure and execute their decision-making processes [12, 16]. Wang and Benbasat [16] find that ‘how’ explanations increase users’ competence and benevolence beliefs. They also find that ‘why’ explanations enhance benevolence beliefs, and ‘trade-off’ explanations increase integrity beliefs.

3 Hypothesis Development

While each of the ‘how’, ‘why’, and ‘trade-off’ explanation facilities of RAs refers to a comprehensive explanation, such explanations are largely impractical in the context of analytics based recommendations. The reason is that analytics recommendations involve complex algorithms and mathematical computations that process a very large amount of data. These are very challenging both for the agent to convey and for the user to comprehend. To illustrate, consider the explanation facilities provided in the different contexts: ‘how’ explanations in the context of knowledge based systems refer to showing the entire reasoning tree; in the context of recommendation agents, these explanations provide details about the recommendation rules and priorities (see Note 1). In the context of analytics, explaining to a typical user the algorithm used for the analytical forecast is hard to conceive. ‘Why’ explanations, in both knowledge based systems and recommendation agents, provide full details of how user input may affect the recommendation (see Note 2). However, once again, explaining the effect of specific collected data points on an analytics based recommendation is challenging at best.

Indeed, analytics based recommendations typically do not provide explanation facilities. Rather, as previously pointed out, they often provide short statements of partial information to accompany the recommendation. Essentially these statements come to support a claim (the recommendation); thus, together with the recommendation, these statements help form an argument. While well-formed arguments can help alleviate challenges on claims made (e.g. [7]), it is not clear if partial arguments can help achieve the same or in what way. Further it is not clear how the different components of an argument suggested by Toulmin affect trusting beliefs.

Due to the inherent difficulties in providing explanation facilities in the analytics domain, we suggest that in this domain consumers are receptive to abstract arguments, and that the components of an argument, namely Toulmin’s warrant, data, backings, and rebuttals, can be viewed as explanation facilities in this context. Thus, two unique aspects are hypothesized in the analytics based recommendations domain: (1) Since components of an argument may be viewed as explanation facilities, they may enhance the levels of different trusting beliefs; and (2) Since components of an argument are viewed as explanation facilities, even if an argument is not well formed (e.g. includes isolated components, such as only claim and warrant) trusting beliefs may still be enhanced.

We hypothesize about the role of each of the different Toulmin elements in this context, according to our analysis of the correspondence each has to an explanation facility.

Warrants indicate the bearing on the conclusion from the data used. That is, warrants pertain to the nature and justification of the step made taking data to a claim. Warrants are “general, hypothetical statements, which can act as bridges, and authorize the sort of step to which our particular argument commits us and may normally be written very briefly” [15]. Thus, while warrants are provided as part of an argument, and while abstract and brief, we suggest these ideas closely relate to ‘how’ explanation facilities described in the previous section. Hence, our first hypothesis:

H1: As warrants closely relate to ‘how’ explanation facilities, the inclusion of warrants in an analytics based recommendation will enhance competence and benevolence trusting beliefs.

Backings are included in an argument to convey the appropriateness of the step made to arrive at the claim. As stated by Toulmin, the question “ ‘Is this calculation mathematically impeccable?’ may be a very different one from the question ‘Is this the relevant calculation?’ ” [15]. That is, the backing in an argument explains why, in general, the warrant should be accepted as having authority. Backings relate to the more general issue of the applicability of a claim. They are assurances for the authority and currency of the warrants. These ideas closely relate to the ‘why’ explanation facilities described in the previous section. ‘Why’ explanations are more comprehensive and explain the logic in using the data. Backings help assure that the way the data have been used to arrive at the conclusion is fundamentally valid. Hence, our next hypothesis:

H2: As backings closely relate to ‘why’ explanation facilities, the inclusion of backings in an analytics based recommendation will enhance benevolence trusting belief.

Rebuttals comment implicitly on the bearing of a warrant. They indicate circumstances in which the general authority of the warrant would have to be set aside. While somewhat different from ‘trade-off’ explanation facilities, rebuttals are closely analogous to them. While ‘trade-off’ explanations elaborate on how a decision between alternatives can be arrived at, rebuttals imply the consideration of competing alternatives. Hence, our third hypothesis:

H3: As rebuttals closely relate to ‘trade-off’ explanation facilities, the inclusion of rebuttals in an analytics based recommendation will enhance integrity trusting belief.

Data is the foundation for a claim, or the ground which we produce as support for the assertion. Data are the facts one will present in order to support a claim when it is challenged. Unlike warrants, backings and rebuttals, data does not have an equivalent explanation facility. However, data is a fundamental part of an argument. We suggest that data statements have three important aspects that directly relate to trust perceptions. First, they help to close the knowledge gap between the user and the system, or enable the user to “find out easily what the program knows about a particular subject” [1], thus enhancing competence trust. Second, they consist of facts, which are less subject to manipulation, and thus their presentation may enhance integrity trust. Finally, data statements help to show the effort put into the RA design to support objectivity, thus potentially also enhancing benevolence trust. Hence, our fourth set of hypotheses:

H4a: Since data statements consist of objective facts, which are less subject to subjective description, data statements enhance integrity trust.

H4b: Since data statements provide information about the knowledge the system has, they help close the knowledge gap between the user and the system and enhance competence trust.

H4c: Since data statements expose the effort put in the RA design to support objectivity they enhance benevolence trust.
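The hypothesized correspondences in H1 through H4c can be summarized as a small lookup table. The Python sketch below only restates the hypotheses above; the dictionary layout and the helper name `elements_expected_to_enhance` are illustrative assumptions.

```python
# Hypothesized mapping from Toulmin element to the analogous explanation
# facility and the trusting beliefs it is expected to enhance (H1 to H4c).
HYPOTHESES = {
    "warrant": {"facility": "how", "beliefs": {"competence", "benevolence"}},  # H1
    "backing": {"facility": "why", "beliefs": {"benevolence"}},                # H2
    "rebuttal": {"facility": "trade-off", "beliefs": {"integrity"}},           # H3
    # Data has no equivalent explanation facility (H4a, H4b, H4c).
    "data": {"facility": None,
             "beliefs": {"integrity", "competence", "benevolence"}},
}


def elements_expected_to_enhance(belief: str) -> list[str]:
    """Toulmin elements hypothesized to enhance the given trusting belief."""
    return sorted(e for e, h in HYPOTHESES.items() if belief in h["beliefs"])


print(elements_expected_to_enhance("integrity"))    # ['data', 'rebuttal']
print(elements_expected_to_enhance("benevolence"))  # ['backing', 'data', 'warrant']
```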

4 Pilot Study

4.1 Experiment Design

In a pilot study, 64 subjects were given a flight purchase scenario for a purchase timing decision task. They were asked to view two recommendation snapshots, which were said to have been provided by the recommendation facilities of two separate ecommerce websites. One of the presented recommendations included a Toulmin element of either data, warrant, backing, or rebuttal (henceforth the Toulmin site), and the other did not (henceforth the non-Toulmin site). An example of a setting is provided in Fig. 2, and a list of the statements of type warrant, backing, rebuttal, and data is provided in Table 1.

The two snapshots presented opposing recommendations (i.e., one proposed to buy and the other to wait; see Fig. 2). To counterbalance differences associated with buy versus wait recommendations, the Toulmin site recommended buying for half of the respondents and waiting for the other half. The respondents were then asked to decide whether to purchase the flight ticket immediately or wait, and then to rate their trusting perceptions (adapted from Wang and Benbasat [16]) of the two sites, comparing between them. Similarly to Kim and Benbasat [7], our measures were on a 15-point scale (i.e., −7 to +7) and respondents were asked to compare the Toulmin site to the non-Toulmin site.
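The counterbalancing described above can be sketched as follows. The paper does not report how subjects were distributed over the four element types, so the equal-sized, between-subjects cells assumed here (and the function name `assign_conditions`) are illustrative assumptions rather than the actual procedure.

```python
import itertools
import random

ELEMENTS = ("data", "warrant", "backing", "rebuttal")
DIRECTIONS = ("buy", "wait")  # recommendation shown by the Toulmin site


def assign_conditions(n_subjects: int, seed: int = 0) -> list[tuple[str, str]]:
    """Assign each subject an (element, direction) cell with equal cell sizes.

    Assumption: element type is varied between subjects and cells are balanced.
    The non-Toulmin site always shows the opposite direction.
    """
    cells = list(itertools.product(ELEMENTS, DIRECTIONS))
    if n_subjects % len(cells) != 0:
        raise ValueError("n_subjects must be a multiple of the number of cells")
    plan = cells * (n_subjects // len(cells))
    random.Random(seed).shuffle(plan)  # randomize the order of assignment
    return plan


plan = assign_conditions(64)
buy_count = sum(1 for _, direction in plan if direction == "buy")
print(len(plan), buy_count)  # 64 32
```

Under these assumptions, each of the eight cells receives eight subjects, and exactly half of the respondents see a buy recommendation on the Toulmin site.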

5 Results

Table 2 presents the results with respect to the consumer trusting beliefs, comparing the four types of Toulmin recommendations sites, to the base recommendation site.

As shown in the table, warrants apparently do not behave like ‘how’ explanation facilities: H1 was not supported. This suggests that warrants are possibly too abstract and do not sufficiently reduce the knowledge gap between the recommendation agent and the user.

The table also shows support for H2: backings enhance benevolence trust. Apparently, backings reduce the agency gap between the user and the recommending site. Interestingly, backings also enhance integrity and competence trust. Still, there is an apparent difference in the levels of the trusting beliefs (see Note 3), with integrity beliefs being lower than competence and benevolence beliefs. This is in line with our hypothesis, as benevolence and competence are known to be correlated: a perception that an entity works in one’s favor implies competence of that entity, and vice versa. Similarly, the increased integrity trust can be explained by the idea that if an ecommerce site works in the users’ favor rather than only its own, a level of integrity is implied.

With respect to rebuttals, H3 is supported. As expected, rebuttals relate to ‘trade-off’ explanations and enhance integrity trust. They do not relate to competence or benevolence.

Finally, Hypotheses H4a–H4c are all supported, suggesting the crucial role of data in enhancing trust. These results show the important role of an explanation facility not previously considered. Data as an explanation facility enhances all types of trust, as it reduces the knowledge gap, exposes the efforts made in the RA design, and provides information perceived as unbiased.
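Because the measures are comparative ratings on a scale centered at zero, one common way to test whether a Toulmin element enhances a trusting belief is a one-sample t-test of the mean rating against 0. The paper does not state which test underlies its results, so the sketch below is an assumption; it also assumes that positive ratings favor the Toulmin site, and the ratings shown are made up for illustration and are not data from the study.

```python
import math
import statistics


def one_sample_t(ratings: list[int], mu0: float = 0.0) -> tuple[float, int]:
    """Return (t statistic, degrees of freedom) for H0: mean rating == mu0."""
    n = len(ratings)
    if n < 2:
        raise ValueError("need at least two ratings")
    if any(not -7 <= r <= 7 for r in ratings):
        raise ValueError("ratings must lie on the -7..+7 scale")
    sd = statistics.stdev(ratings)  # sample standard deviation (n - 1)
    if sd == 0:
        raise ValueError("ratings have zero variance; t is undefined")
    mean = statistics.mean(ratings)
    return (mean - mu0) / (sd / math.sqrt(n)), n - 1


# Made-up ratings for illustration only; NOT data from the study.
hypothetical_ratings = [2, 3, 1, 0, 4, 2, -1, 3]
t_stat, dof = one_sample_t(hypothetical_ratings)
print(f"t = {t_stat:.3f}, df = {dof}")
```

The resulting statistic would then be compared against the critical value of the t distribution with the reported degrees of freedom.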

6 Summary

In this research we analyze the effect of the elements of Toulmin’s model on trusting beliefs in the business analytics domain. Our initial results from a pilot study support the notion that the Toulmin elements of an argument do more than construct an argument and thereby enhance the acceptance of a claim; they can also serve as explanation facilities, and thus may play different roles in enhancing different trusting beliefs. Further, we show the important role of the data element as an explanation facility that enhances all trusting beliefs. Data is an explanation facility about “how”, which may also be perceived as unbiased and may reflect the effort made in the RA design to support the needs of the user. As next steps, we plan to further analyze the role of Toulmin explanation facilities in enhancing different trusting beliefs by considering interaction effects between Toulmin elements. We are also analyzing further effects of trust enhancement in this domain, such as the propensity to adopt the recommendation and the propensity to purchase on the ecommerce website. We believe this research improves our understanding of the antecedents of trusting beliefs in the business analytics domain, and of the role of Toulmin elements as explanation facilities in this domain and possibly in others.

Notes

1. An example provided for a recommendation agent (Wang and Benbasat 2008): if you want a camera that will focus on subjects farther away, the camera with a stronger optical zoom level will have higher priority in my recommendations. Specifically, the four options will determine the following zoom levels: 1. 2X optical zoom and below; 2. Between 2X and 5X optical zoom; 3. 4X optical zoom and above; 4. No minimum requirement in zoom capability.

2. An example provided for a knowledge based system (Dhaliwal and Benbasat 1996): Race of the patient is one of the 5 parameters that identify a patient. It may also be relevant later in the consultation when determining the organisms (other than those seen on cultures or smears) which might be causing the infection.

3. The difference between competence and integrity is significant (t = 2.0464).

References

1. Buchanan, B.G., Shortliffe, E.H.: Rule-based Expert Systems: The MYCIN Experiments of the Stanford Heuristic Programming Project. Addison-Wesley, Reading (1984)

2. Cummings, J., Dennis, A.: Do SNS impressions matter? Virtual team and impression formation in the era of social technologies. In: 20th Americas Conference on Information Systems, Savannah (2014)

3. Dhaliwal, J.S., Benbasat, I.: The use and effects of knowledge-based system explanations: theoretical foundations and a framework for empirical evaluation. Inf. Syst. Res. 7(3), 342–362 (1996)

4. Goodwin, G.: Moral character in person perception. Current Dir. Psychol. Sci. 24, 38–44 (2015)

5. Gregor, S., Benbasat, I.: Explanations from intelligent systems: theoretical foundations and implications for practice. MIS Q. 23(4), 497–530 (1999)

6. Kidd, A.L.: The consultative role of an expert system. In: Johnson, P., Cook, S. (eds.) People and Computers: Designing the Interface. Cambridge University Press, Cambridge (1998)

7. Kim, D., Benbasat, I.: The effects of trust-assuring arguments on consumer trust in Internet stores: application of Toulmin’s model of argumentation. Inf. Syst. Res. 17(3), 286–300 (2006)

8. McKnight, D.H., Choudhury, V., Kacmar, C.: Developing and validating trust measures for e-commerce: an integrative typology. Inf. Syst. Res. 13(3), 334–359 (2002)

9. Mun, Y.Y., Yoon, J.J., Davis, J.M., Lee, T.: Untangling the antecedents of initial trust in web-based health information: the roles of argument quality, source expertise, and user perceptions of information quality and risk. Decis. Support Syst. 55(1), 284–295 (2013)

10. Pavlou, P.A., Dimoka, A.: The nature and role of feedback text comments in online marketplaces: implications for trust building, price premiums, and seller differentiation. Inf. Syst. Res. 17(4), 391–412 (2006)

11. Reeves, B., Nass, C.: The Media Equation: How People Treat Computers, Television, and New Media Like Real People and Places. Cambridge University Press, New York (1996)

12. Silver, M.S.: Decisional guidance for computer-based support. MIS Q. 15(1), 105–122 (1991)

13. Sztompka, P.: Trust: A Sociological Theory. Cambridge University Press, Cambridge (1999)

14. Toulmin, S.E.: The Uses of Argument. Cambridge University Press, Cambridge (1958)

15. Toulmin, S.E.: The Uses of Argument. Cambridge University Press, Cambridge (2003)

16. Wang, W., Benbasat, I.: Recommendation agents for electronic commerce: effects of explanation facilities on trusting beliefs. J. Manage. Inf. Syst. 23(4), 217–246 (2007)

17. Xu, D.J., Cenfetelli, R.T., Aquino, K.: Do different kinds of trust matter? An examination of the three trusting beliefs on satisfaction and purchase behavior in the buyer-seller context. J. Strateg. Inf. Syst. 25(1), 15–31 (2016)

Copyright information

© 2017 Springer International Publishing AG

About this paper

Cite this paper

Rubin, E., Argyris, Y.A., Benbasat, I. (2017). Consumers’ Trust in Price-Forecasting Recommendation Agents. In: Nah, FH., Tan, CH. (eds) HCI in Business, Government and Organizations. Supporting Business. HCIBGO 2017. Lecture Notes in Computer Science, vol 10294. Springer, Cham. https://doi.org/10.1007/978-3-319-58484-3_6

DOI: https://doi.org/10.1007/978-3-319-58484-3_6

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-58483-6

Online ISBN: 978-3-319-58484-3

eBook Packages: Computer Science (R0)