Abstract

Maximal average margin classifiers (MAMCs) maximize the average margin without constraints. Although training is fast, their generalization abilities are usually inferior to those of support vector machines (SVMs). To improve the generalization abilities of MAMCs, in this paper, we propose optimizing the slopes and bias terms of separating hyperplanes after the coefficient vectors of the hyperplanes are obtained. The bias term is optimized so that the number of misclassifications is minimized. To optimize the slope, we introduce a weight to the average of mapped training data for one class and optimize the weight by cross-validation. To improve the generalization ability further, we propose equality-constrained MAMCs and show that they reduce to least squares SVMs. Using two-class problems, we show that the generalization ability of the unconstrained MAMCs is inferior to that of the constrained MAMCs and SVMs.

1 Introduction

Since the introduction of support vector machines (SVMs) [1, 2], various variants have been developed to improve the generalization ability. Because SVMs do not assume a specific data distribution, a priori knowledge on the data distribution can improve the generalization ability. The Mahalanobis distance, instead of the Euclidean distance, is useful for this purpose. One approach reformulates SVMs so that the margin is measured by the Mahalanobis distance [3–7], and another approach uses Mahalanobis kernels, which calculate the kernel value according to the Mahalanobis distance [8–13].

In SVMs, the minimum margin is maximized. But in AdaBoost [14], the margin distribution, instead of the minimum margin, has been known to be important in improving the generalization ability [15, 16].

Several approaches have been proposed to control the margin distribution in SVM-like classifiers [17–22]. In [18], a maximum average margin classifier (MAMC) is proposed, in which, instead of maximizing the minimum margin, the margin mean for the training data is maximized without slack variables. In [21, 22], in addition to maximizing the margin mean, the margin variance is minimized, and the classifier is called the large margin distribution machine (LDM). According to the computer experiments in [21], the generalization ability of MAMCs is inferior to that of SVMs and LDMs.

In this paper, we clarify why MAMCs perform poorly for some classification problems and propose two methods to improve the generalization ability. Because the MAMC does not include constraints associated with training data, the determined bias term depends only on the difference between the numbers of training data for the two classes. To solve this problem, after the weight vector is obtained by the MAMC, we optimize the bias term so that the classification error is minimized. Then, to improve the generalization ability further, we introduce a weight parameter to the average vector of one class and determine the parameter value by cross-validation. This results in optimizing the slope of the separating hyperplane. As a further step, we define the equality-constrained MAMC (EMAMC), which is shown to be equivalent to the least squares (LS) SVM. Using two-class problems, we show that the generalization ability of the unconstrained MAMCs with the optimized bias term and slopes is inferior to that of the EMAMC.

In Sect. 2, we explain the architecture of the MAMC and clarify the problems of the MAMC. Then, we propose bias term optimization and slope optimization and develop the EMAMC. In Sect. 3, we compare the generalization abilities of the MAMC with those of the proposed MAMC with optimized bias terms and slopes, the EMAMC, and the SVM.

2 Maximum Average Margin Classifiers

2.1 Architecture

In the following we explain the maximum average margin classifiers (MAMCs) according to [18].

We consider a classification problem with M training input-output pairs \(\{\mathbf{x}_i, y_i\}\) (\(i = 1, \ldots , M\)), where \(\mathbf{x}_i\) are m-dimensional training inputs and belong to Class 1 or 2 and the associated labels are \(y_i = 1\) for Class 1 and \(-1\) for Class 2. We map the m-dimensional input vector \(\mathbf{x}\) into the l-dimensional feature space using the nonlinear vector function \(\varvec{\phi }(\mathbf{x})\). In the feature space, we determine the decision function that separates Class 1 data from Class 2 data:

where \(\mathbf{w}\) is the l-dimensional vector, \({\top }\) denotes the transpose of a vector (matrix), and b is the bias term.

The margin of \(\mathbf{x}_i\), \(\delta _i\), which is the distance from the hyperplane, is given by

Under the assumption of

(2) becomes

With \(b=0\), the MAMC, which maximizes the average margin, is defined by

Introducing the Lagrange multiplier \(\lambda \, (>0)\), we obtain the unconstrained optimization problem:

Taking the derivative of Q with respect to \(\mathbf{w}\), we obtain the optimal \(\mathbf{w}\):

In [18], \(\lambda \) is determined using (6) and (8), but \(\lambda \) can take on any positive value because that does not change the decision boundary. Therefore, in the following we set \(\lambda =1\).
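The steps from (1) to (8) can be written out as follows. This is a sketch consistent with the definitions above, not a quotation: it assumes that the normalization in (3) and (6) is \(\mathbf{w}^{\top}\mathbf{w}=1\) and that the multiplier enters (7) as \(\lambda/2\). The exact scaling does not affect the direction of \(\mathbf{w}\).

\[
\begin{aligned}
f(\mathbf{x}) &= \mathbf{w}^{\top}\varvec{\phi}(\mathbf{x}) + b, \\
\delta_i &= \frac{y_i\, f(\mathbf{x}_i)}{\Vert \mathbf{w}\Vert}
\quad\Longrightarrow\quad \delta_i = y_i\, f(\mathbf{x}_i) \ \text{ when } \ \mathbf{w}^{\top}\mathbf{w}=1, \\
Q(\mathbf{w}) &= \frac{1}{M}\sum_{i=1}^{M} y_i\, \mathbf{w}^{\top}\varvec{\phi}(\mathbf{x}_i)
 - \frac{\lambda}{2}\left(\mathbf{w}^{\top}\mathbf{w} - 1\right), \\
\frac{\partial Q}{\partial \mathbf{w}} &= \frac{1}{M}\sum_{i=1}^{M} y_i\, \varvec{\phi}(\mathbf{x}_i) - \lambda\, \mathbf{w} = \mathbf{0}
\quad\Longrightarrow\quad
\mathbf{w} = \frac{1}{\lambda M}\sum_{i=1}^{M} y_i\, \varvec{\phi}(\mathbf{x}_i).
\end{aligned}
\]

Because \(\lambda > 0\) only rescales \(\mathbf{w}\), the sign of \(f(\mathbf{x})\) with \(b=0\) is the same for every \(\lambda\), which is why setting \(\lambda = 1\) is harmless.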

In calculating the decision function given by (1), we use kernels \(K(\mathbf{x}, \mathbf{x}') = \varvec{\phi }^{\top }(\mathbf{x}) \, \varvec{\phi }(\mathbf{x}')\) to avoid treating the variables in the feature space explicitly.

The resulting decision function \(f(\mathbf{x})\) with \(b=0\) is given by

Among several kernels, radial basis function (RBF) kernels are widely used and thus in the following study we use RBF kernels:

where m is the number of inputs, used for normalization, and \(\gamma \) is a parameter that controls the spread of the radius.
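The kernel form of the MAMC is simple enough to sketch in a few lines. The following NumPy example assumes the RBF kernel has the form \(K(\mathbf{x}, \mathbf{x}') = \exp (-\gamma \Vert \mathbf{x}-\mathbf{x}'\Vert ^2/m)\) and that the decision function with \(b=0\) and \(\lambda =1\) is \(f(\mathbf{x}) = (1/M)\sum _i y_i K(\mathbf{x}, \mathbf{x}_i)\). The function names `rbf_kernel` and `mamc_decision` and the toy data are illustrative only.

```python
import numpy as np

def rbf_kernel(A, B, gamma):
    """Assumed kernel form: K(x, x') = exp(-gamma * ||x - x'||^2 / m)."""
    m = A.shape[1]  # number of inputs, used for normalization
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=2)
    return np.exp(-gamma * sq_dist / m)

def mamc_decision(X_train, y_train, X, gamma):
    """f(x) = (1/M) * sum_i y_i K(x, x_i): lambda = 1 and b = 0."""
    K = rbf_kernel(X, X_train, gamma)
    return K @ y_train / len(y_train)

# Toy data (hypothetical): two points per class.
X_train = np.array([[0.0, 0.0], [0.0, 1.0], [3.0, 3.0], [4.0, 3.0]])
y_train = np.array([1.0, 1.0, -1.0, -1.0])
X_new = np.array([[0.0, 0.5], [3.5, 3.0]])
print(mamc_decision(X_train, y_train, X_new, gamma=1.0))
```

Training amounts to storing the labels; all the work is in evaluating kernel sums, which is consistent with the fast training noted for the MAMC.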

2.2 Problems with MAMCs

The MAMC is derived without a bias term, i.e., \(b=0\). To include the bias term we change (7) to

Here, we replace \(\lambda \) with 1 and delete the constant term. Then, Q is maximized when

From (13), b is determined by the difference between the numbers of the data belonging to Classes 1 and 2, not by the distributions of the data belonging to the two classes. If the numbers are the same, \(b=0\), irrespective of \(\mathbf{x}_i \, (i=1,\ldots ,M)\). This occurs because the coefficient of b becomes zero in (11); the value of b does not affect the optimality of the solution.

This means that the constraints are lacking for determining the optimal value of b. Similar to SVMs, the addition of inequality or equality constraints for the training data may solve the problem, which will be discussed later.
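The dependence of b on the class sizes can be made explicit. The sketch below writes \(M_+\) and \(M_-\) for the numbers of training data in Classes 1 and 2, and assumes that (11) adds b to the margin term and penalizes b in the same way as \(\mathbf{w}\); the quadratic penalty on b is an assumption made here for illustration.

\[
\begin{aligned}
Q(\mathbf{w}, b) &= \frac{1}{M}\sum_{i=1}^{M} y_i \left(\mathbf{w}^{\top}\varvec{\phi}(\mathbf{x}_i) + b\right)
 - \frac{1}{2}\left(\mathbf{w}^{\top}\mathbf{w} + b^2\right), \\
\frac{1}{M}\sum_{i=1}^{M} y_i\, b &= \frac{M_+ - M_-}{M}\, b, \\
\frac{\partial Q}{\partial b} &= \frac{M_+ - M_-}{M} - b = 0
\quad\Longrightarrow\quad b = \frac{M_+ - M_-}{M}.
\end{aligned}
\]

The only term that couples b to the data is \(\sum _i y_i\), which counts labels and never looks at \(\mathbf{x}_i\). For balanced classes this term vanishes and b is left at zero wherever the data lie.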

2.3 Bias Term Optimization

In this section we propose two-stage training; in the first stage, we determine the coefficient vector \(\mathbf{w}\) by (12), and in the second stage, we optimize the value of b by

where \(E_{\mathrm R}\) is the number of misclassifications, \(\rho \) is a positive constant, \(\xi _i \, (\ge 0)\) is a slack variable, \(I(\xi _i) = 0\) for \(\xi _i=0\) and \(I(\xi _i) = 1\) for \(\xi _i>0\). If there are multiple b values that minimize (14), we break the tie by

where \(E_{\mathrm S}\) is the sum of slack variables.

First we consider the case where the classification problem is separable in the feature space. Suppose that

is satisfied, where training data belonging to Class 2 are correctly classified but some of the training data belonging to Class 1 are misclassified. Because of the first inequality in (17), by setting a proper value to b, all the training data are correctly classified.

Let

Then, from (15), for \(\mathbf{x}_i\) and \(\mathbf{x}_j\) to be correctly classified with margin \(\rho \),

must be satisfied. Thus,

The above equations are also valid when

where some of the training data for Class 2 are misclassified.

It is clear that (20) satisfies \(E_{\mathrm R} = E_{\mathrm S} =0\) and that \(\rho \) is the maximum.

Now consider the inseparable case. Let the misclassified training data for Class 1 be \(\mathbf{x}_{i_k} \, (k=1,\ldots ,p)\) and

Likewise, let the misclassified training data for Class 2 be \(\mathbf{x}_{j_k} \, (k=1,\ldots ,n)\) and

Similar to the separable case, it is clear that the optimal b occurs at (20) with \(i=i_k \,(k\in \{1,\ldots ,p\})\) and j being given by

or with \(j=j_k \,(k \in \{1,\ldots ,n\})\) and i being given by

Let \(E_{\mathrm R}(i,j)\) and \(E_{\mathrm S}(i,j)\) denote \(E_{\mathrm R}\) and \(E_{\mathrm S}\) evaluated with b determined using \(\mathbf{x}_i\) and \(\mathbf{x}_j\) by (20), where \(i=i_k \,(k\in \{1,\ldots ,p\})\) and j is given by (24) or \(j=j_k \, (k \in \{1,\ldots ,n\})\) and i is given by (25). For each pair of i and j, we calculate \(E_{\mathrm R}(i,j)\) and select the value of b that minimizes \(E_{\mathrm R}(i,j)\). If multiple pairs of i and j attain the minimum, we select among them the value of b that minimizes \(E_{\mathrm S}(i,j)\).
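The second-stage search can be illustrated with a simplified variant. The sketch below does not reproduce the pair-selection rule of (24) and (25); instead it scans every midpoint between neighbouring scores \(\mathbf{w}^{\top }\varvec{\phi }(\mathbf{x}_i)\) as a candidate threshold, counts misclassifications, and breaks ties by the summed violation of misclassified data, which stands in for \(E_{\mathrm S}\). The function name `optimize_bias` and the example scores are hypothetical.

```python
import numpy as np

def optimize_bias(scores, y):
    """Pick b that minimizes misclassifications of sign(scores + b).

    scores[i] is w^T phi(x_i) computed with b = 0; y[i] is +1 or -1.
    Ties are broken by the summed violation of misclassified data
    (a simplified stand-in for the slack sum E_S).
    """
    scores = np.asarray(scores, dtype=float)
    y = np.asarray(y, dtype=float)
    s = np.unique(scores)  # sorted distinct scores
    mids = (s[:-1] + s[1:]) / 2.0
    # One candidate below all scores, the midpoints, one above all scores.
    thresholds = np.concatenate(([s[0] - 1.0], mids, [s[-1] + 1.0]))
    best_key, best_b = None, 0.0
    for t in thresholds:
        f = scores - t  # decision values with b = -t
        errors = int(np.sum(y * f <= 0))
        violation = float(np.sum(np.maximum(0.0, -y * f)))
        key = (errors, violation)
        if best_key is None or key < best_key:
            best_key, best_b = key, -t
    return best_b, best_key[0]

# All scores are positive, so b = 0 misclassifies both Class 2 data.
scores = np.array([0.2, 0.3, 0.5, 0.6])
labels = np.array([-1, -1, 1, 1])
b, n_errors = optimize_bias(scores, labels)
print(b, n_errors)
```

Because only the scalar scores are needed, this stage adds a one-dimensional search on top of the kernel sums already computed in the first stage.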

2.4 Extension of MAMCs

Characteristics of Solutions. Rewriting (8) with \(\lambda = 1\),

where

and \(\bar{\varvec{\phi }}_+\) and \(\bar{\varvec{\phi }}_-\) are the averages of the mapped training data belonging to Classes 1 and 2, respectively, and \(M_+\) and \(M_-\) are the numbers of training data belonging to Classes 1 and 2, respectively.

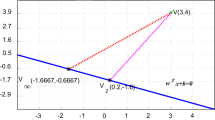

If \(M_+ = M_-\), \(\mathbf{w}\) is the vector from \(\bar{\varvec{\phi }}_-/2\) to \(\bar{\varvec{\phi }}_+/2\). Therefore, the decision boundary is orthogonal to this vector. If \(M_+ \ne M_-\), the decision boundary is orthogonal to \(\bar{\varvec{\phi }}_+ - (M_-/M_+) \, \bar{\varvec{\phi }}_-\).

Slope Optimization. To control the decision function, we introduce a positive hyperparameter \(C_\mathrm{m}\) as follows:

where \(C_\mathrm{m}\) lengthens or shortens the vector \(\bar{\varvec{\phi }}_-\) according to whether \(C_\mathrm{m}>1\) or \(0< C_\mathrm{m}<1\). Therefore, by changing the value of \(C_\mathrm{m}\), the slope of the decision function is changed.

Then the decision function becomes

We determine the value of \(C_\mathrm{m}\) by cross-validation.
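In kernel form, the weighted decision function can be sketched as follows. The first line follows from (8) with \(\lambda = 1\) by grouping the sum by class; the placement of \(C_\mathrm{m}\) as a multiplier of the Class 2 term is an assumption consistent with the description above.

\[
\begin{aligned}
\mathbf{w} &= \frac{1}{M}\sum_{i=1}^{M} y_i\, \varvec{\phi}(\mathbf{x}_i)
 = \frac{M_+}{M}\, \bar{\varvec{\phi}}_+ - \frac{M_-}{M}\, \bar{\varvec{\phi}}_-,
\qquad
\bar{\varvec{\phi}}_{\pm} = \frac{1}{M_{\pm}}\sum_{y_i = \pm 1} \varvec{\phi}(\mathbf{x}_i), \\
\mathbf{w} &= \frac{M_+}{M}\, \bar{\varvec{\phi}}_+ - C_\mathrm{m}\, \frac{M_-}{M}\, \bar{\varvec{\phi}}_-, \\
f(\mathbf{x}) &= \frac{1}{M}\left(\sum_{y_i = 1} K(\mathbf{x}, \mathbf{x}_i)
 - C_\mathrm{m} \sum_{y_i = -1} K(\mathbf{x}, \mathbf{x}_i)\right) + b.
\end{aligned}
\]

With \(C_\mathrm{m} = 1\) the last two lines reduce to the original MAMC.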

In k-fold cross-validation, we divide the training data set into k almost-equal-size subsets and train the classifier using the \(k-1\) subsets and test the trained classifier using the remaining subset. We iterate this procedure k times for different combinations and calculate the classification error.

Calculation of the classification error for a given \(C_\mathrm{m}\) value is as follows:

1. Calculate (29) with \(b=0\) using the \(k-1\) subsets.

2. Calculate the bias term using the method discussed in Sect. 2.3.

3. Calculate the classification error for the remaining subset using the decision function generated in Steps 1 and 2.

Repeat the above procedure for the k different combinations and calculate the classification error for the decision function.

For a given set of \(C_\mathrm{m}\) values, we calculate the classification errors and select the value of \(C_\mathrm{m}\) with the minimum classification error.
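The selection procedure above can be sketched end to end. The example assumes the RBF kernel form \(\exp (-\gamma \Vert \mathbf{x}-\mathbf{x}'\Vert ^2/m)\), a decision function in which \(C_\mathrm{m}\) multiplies the Class 2 kernel sum, and a simplified bias search over midpoints between neighbouring scores without the slack-based tie-break. All function names and the random fold assignment are illustrative.

```python
import numpy as np

def rbf_kernel(A, B, gamma):
    """Assumed kernel form: exp(-gamma * ||x - x'||^2 / m)."""
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=2)
    return np.exp(-gamma * sq_dist / A.shape[1])

def mamc_scores(X_train, y_train, X, gamma, c_m):
    """Step 1: decision values with b = 0; Class 2 terms are weighted by c_m."""
    weights = np.where(y_train > 0, 1.0, -c_m) / len(y_train)
    return rbf_kernel(X, X_train, gamma) @ weights

def fit_bias(scores, y):
    """Step 2 (simplified): threshold with the fewest training errors."""
    s = np.unique(scores)
    thresholds = np.concatenate(([s[0] - 1.0], (s[:-1] + s[1:]) / 2.0, [s[-1] + 1.0]))
    errors = [(int(np.sum(y * (scores - t) <= 0)), i) for i, t in enumerate(thresholds)]
    return -thresholds[min(errors)[1]]

def cv_error(X, y, gamma, c_m, k=5, seed=0):
    """k-fold cross-validation error for one (gamma, c_m) pair."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    wrong = 0
    for held_out in folds:
        train = np.setdiff1d(np.arange(len(y)), held_out)
        b = fit_bias(mamc_scores(X[train], y[train], X[train], gamma, c_m), y[train])
        # Step 3: classify the held-out subset with the fitted w and b.
        f = mamc_scores(X[train], y[train], X[held_out], gamma, c_m) + b
        wrong += int(np.sum(y[held_out] * f <= 0))
    return wrong / len(y)

def select_c_m(X, y, gamma, candidates, k=5):
    """Return the candidate with the smallest cross-validation error."""
    errs = [cv_error(X, y, gamma, c, k) for c in candidates]
    return candidates[int(np.argmin(errs))], errs
```

Calling `select_c_m` once with a fixed \(\gamma \) corresponds to a one-dimensional search over \(C_\mathrm{m}\); wrapping it in a loop over \(\gamma \) values gives a two-dimensional grid.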

2.5 Equality-Constrained MAMCs

To improve the generalization ability of MAMCs further, we consider equality-constrained MAMCs (EMAMCs) as follows:

where \(C_\mathrm{a}\) and C are parameters to control the trade-off between the generalization ability and the classification error for the training data, and \(\xi _i\) are the slack variables for \(\mathbf{x}_i\).

We solve (30) and (31) in the empirical feature space [2] and define

Solving (31) for \(\xi _i\) and substituting it into (30), we obtain the unconstrained optimization problem:

If we delete the second term (the average margin) in the above equation, the optimization problem reduces to the least squares (LS) SVM defined in the empirical feature space [2].

Taking the partial derivative of (33) with respect to \(\mathbf{w}\) and b and setting the results to zero, we obtain the optimality conditions:

The above optimality conditions can be solved for \(\mathbf{w}\) and b by matrix inversion. The coefficient \(({C_\mathrm{a}}/{M}+C)\) can be deleted because it is a scaling factor and does not change the decision boundary. Then, because \(C_\mathrm{a}\) is not included in the left-hand sides of (34) and (35), the value of \(C_\mathrm{a}\) does not influence the location of the decision boundary. This means that the second term in (33) can be safely deleted.

In addition, if we delete the \(\mathbf{w}^{\top }\, \mathbf{w}\) term from (33), all the terms in the left-hand sides of (34) and (35) include C, thus C can be deleted; C does not work to control the trade-off. Therefore, the \(\mathbf{w}^{\top }\, \mathbf{w}\) term is essential.
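The argument can be followed term by term under an assumed form of the EMAMC objective. In the sketch below, \(\varvec{\phi }(\mathbf{x}_i)\) stands for the mapping into the empirical feature space, and the objective and constraint are assumptions chosen to match the coefficients quoted in the text.

\[
\begin{aligned}
&\text{minimize}\quad Q(\mathbf{w}, b, \varvec{\xi}) = \frac{1}{2}\, \mathbf{w}^{\top}\mathbf{w}
 - \frac{C_\mathrm{a}}{M}\sum_{i=1}^{M} y_i\, f(\mathbf{x}_i) + \frac{C}{2}\sum_{i=1}^{M} \xi_i^2
\quad\text{subject to}\quad y_i\, f(\mathbf{x}_i) = 1 - \xi_i, \\
&\xi_i^2 = \left(1 - y_i\, f(\mathbf{x}_i)\right)^2 = 1 - 2\, y_i\, f(\mathbf{x}_i) + f(\mathbf{x}_i)^2
\quad (\text{since } y_i^2 = 1), \\
&Q(\mathbf{w}, b) = \frac{1}{2}\, \mathbf{w}^{\top}\mathbf{w} + \frac{C}{2}\sum_{i=1}^{M} f(\mathbf{x}_i)^2
 - \left(\frac{C_\mathrm{a}}{M} + C\right)\sum_{i=1}^{M} y_i\, f(\mathbf{x}_i) + \frac{C M}{2}, \\
&\frac{\partial Q}{\partial \mathbf{w}} = \mathbf{0}:\quad
\mathbf{w} + C\sum_{i=1}^{M} \varvec{\phi}(\mathbf{x}_i)\left(\varvec{\phi}^{\top}(\mathbf{x}_i)\, \mathbf{w} + b\right)
 = \left(\frac{C_\mathrm{a}}{M} + C\right)\sum_{i=1}^{M} y_i\, \varvec{\phi}(\mathbf{x}_i), \\
&\frac{\partial Q}{\partial b} = 0:\quad
C\sum_{i=1}^{M} \left(\varvec{\phi}^{\top}(\mathbf{x}_i)\, \mathbf{w} + b\right)
 = \left(\frac{C_\mathrm{a}}{M} + C\right)\sum_{i=1}^{M} y_i.
\end{aligned}
\]

The system is linear in \((\mathbf{w}, b)\), so the common factor on the right-hand sides rescales the solution without moving the boundary \(f(\mathbf{x}) = 0\). \(C_\mathrm{a}\) appears only in that factor.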

Accordingly, dividing (34) and (35) by C and deleting the constant term \(({C_\mathrm{a}}/{C \, M}+1)\) from the right-hand sides of (34) and (35), we obtain

The above formulation is exactly the same as the LS SVM defined in the empirical feature space. Therefore, the EMAMC results in the LS SVM.
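For comparison, an LS SVM can be trained by solving a single set of linear equations. The sketch below uses the standard dual (kernel) formulation with regression-style targets \(y_i = \pm 1\), not the empirical feature space formulation used in this paper, and assumes the RBF kernel form \(\exp (-\gamma \Vert \mathbf{x}-\mathbf{x}'\Vert ^2/m)\). The function names are illustrative.

```python
import numpy as np

def rbf_kernel(A, B, gamma):
    """Assumed kernel form: exp(-gamma * ||x - x'||^2 / m)."""
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=2)
    return np.exp(-gamma * sq_dist / A.shape[1])

def train_ls_svm(X, y, gamma, C):
    """Solve [[0, 1^T], [1, K + I/C]] [b; alpha] = [0; y] for alpha and b."""
    M = len(y)
    A = np.zeros((M + 1, M + 1))
    A[0, 1:] = 1.0
    A[1:, 0] = 1.0
    A[1:, 1:] = rbf_kernel(X, X, gamma) + np.eye(M) / C
    rhs = np.concatenate(([0.0], np.asarray(y, dtype=float)))
    solution = np.linalg.solve(A, rhs)
    return solution[1:], solution[0]  # alpha, b

def ls_svm_decision(X_train, alpha, b, X, gamma):
    """f(x) = sum_i alpha_i K(x, x_i) + b."""
    return rbf_kernel(X, X_train, gamma) @ alpha + b
```

Unlike the MAMC, where the coefficients are fixed by the labels, every \(\alpha _i\) here depends on all training data through the matrix solve, which is where the extra training cost comes from.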

3 Performance Evaluation

3.1 Experimental Conditions

We compared the proposed MAMC including the EMAMC (LS SVM) with the plain MAMC and the L1 SVM using two-class data sets [23]. The L1 SVM we used is as follows:

where \(\alpha _{i}\) are Lagrange multipliers associated with \(\mathbf{x}_i\) and \(C \,(>0)\) is a margin parameter that controls the trade-off between the classification error of the training data and the generalization ability.

Table 1 lists the numbers of inputs, training data, test data, and data set pairs of the two-class problems. Each data set pair consists of the training data set and the test data set. We trained classifiers using the training data set and evaluated the performance using the test data set. Then we calculated the average accuracy and the standard deviation for all the data set pairs. We determined the parameter values by fivefold cross-validation. We selected the \(\gamma \) value of the RBF kernels from {0.01, 0.1, 0.5, 1, 5, 10, 15, 20, 50, 100, 200, 300, 400, 500, 600, 700}. We selected the \(C_\mathrm{m}\) value from {0.05, 0.1, 0.2, ..., 0.9, 1.0, 1.1111, ..., 20}. For the EMAMC and L1 SVM, we selected the \(\gamma \) value from 0.01 to 200 and the C value from {0.1, 1, 10, 50, 100, 500, 1000, 2000}. We trained the L1 SVM using SMO-NM [24], which fuses sequential minimal optimization (SMO) and Newton’s method (NM).

3.2 Results

Table 2 shows the average accuracies and their standard deviations of the six classifiers with RBF kernels. In the table, MAMC is given by (12) and (13) and the \(\gamma \) value is optimized by cross-validation. In MAMC\(_\mathrm{b}\), the bias term is optimized as discussed in Sect. 2.3. In MAMC\(_\mathrm{bs}\), after the \(\gamma \) value is optimized with \(C_\mathrm{m} = 1\), the \(C_\mathrm{m}\) value is optimized. We call this strategy line search in contrast to grid search. In MAMC\(_\mathrm{bsg}\), the \(\gamma \) and \(C_\mathrm{m}\) values are optimized by grid search.

Among the six classifiers, including the L1 SVM, the best average accuracy is shown in bold and the worst average accuracy is underlined. The “Average” row shows the average of the 13 average accuracies, and the two numerals in parentheses show the numbers of the best and worst accuracies, in that order. We performed Welch’s t test at the 95 % confidence level. The “W/T/L” row shows the results; W, T, and L denote the numbers of problems for which the MAMC\(_\mathrm{bs}\) is statistically better than, comparable to, and worse than each of the remaining five classifiers, respectively.
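For readers who want to reproduce this kind of comparison, Welch's t statistic and its approximate degrees of freedom can be computed directly from two sets of per-pair accuracies. The sketch below is a generic illustration; the function name `welch_t` and the sample values are hypothetical and are not taken from the tables.

```python
import numpy as np

def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    va = a.var(ddof=1) / len(a)  # squared standard error of mean(a)
    vb = b.var(ddof=1) / len(b)  # squared standard error of mean(b)
    t = (a.mean() - b.mean()) / np.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df

# Hypothetical accuracies (%) of two classifiers over five data set pairs.
acc_a = [88.1, 87.5, 89.0, 88.4, 87.9]
acc_b = [86.0, 86.9, 85.5, 86.3, 86.6]
print(welch_t(acc_a, acc_b))
```

The difference is declared significant when \(|t|\) exceeds the two-sided 5 % critical value of the t distribution with the computed degrees of freedom. If SciPy is available, `scipy.stats.ttest_ind(a, b, equal_var=False)` performs the same test and returns the p-value.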

From the “Average” row, the EMAMC performed best in average accuracy and the L1 SVM second best. The difference between MAMC\(_\mathrm{bsg}\) and MAMC\(_\mathrm{bs}\) is small. The MAMC is the worst. From the “W/T/L” row, the accuracies of the MAMC\(_\mathrm{bs}\) and the MAMC\(_\mathrm{bsg}\) are statistically comparable, and the accuracy of the MAMC\(_\mathrm{bs}\) is slightly better than that of the MAMC\(_\mathrm{b}\) and always better than that of the MAMC. The accuracy of the MAMC\(_\mathrm{bs}\) is worse than that of the EMAMC and L1 SVM.

In Sect. 2.2, we clarified that the bias term is not optimized by the original MAMC formulation. This is confirmed by the experiments. By optimizing the bias term as proposed in Sect. 2.3, the accuracy is improved drastically. The effect of slope optimization on the accuracy is small. However, even with bias term and slope optimization, the generalization ability is still below that of the EMAMC or L1 SVM. This indicates that equality or inequality constraints are essential for realizing high generalization ability.

We measured the average CPU time per data set including time for model selection by fivefold cross-validation, training a classifier, and classifying the test data by the trained classifier. We used a personal computer with 3.4 GHz CPU and 16 GB memory. Table 3 shows the results. From the table the MAMC is the fastest and the MAMC\(_\mathrm{bs}\) and MAMC\(_\mathrm{b}\) are comparable to the MAMC. Comparing the MAMC\(_\mathrm{bs}\) and the MAMC\(_\mathrm{bsg}\), the MAMC\(_\mathrm{bsg}\) requires more time because of the grid search. Because the classification performance is comparable, line search seems to be sufficient. The EMAMC, L1 SVM and MAMC\(_\mathrm{bsg}\) are in the slowest group.

3.3 Discussions

The advantage of the MAMC is its simplicity: the coefficient vector of the decision hyperplane is calculated by addition or subtraction of kernel values. The inferior generalization ability of the original MAMC is mitigated by bias and slope optimization, but the improvement is still not sufficient compared to the EMAMC and L1 SVM. Therefore, the introduction of equality or inequality constraints is essential. However, this leads to the LS SVM or L1 SVM, and the simplicity of the MAMC is completely lost.

4 Conclusions

We discussed two ways to improve the generalization ability of the maximum average margin classifier (MAMC). One is to optimize the bias term after calculating the weight vector, and the other is to optimize the slope of the decision function by introducing a weight parameter to the average vector of one class. The parameter value is determined by cross-validation. To improve the generalization ability further, we introduced the EMAMC, which is the equality-constrained MAMC, but this is shown to be equivalent to the LS SVM defined in the empirical feature space.

According to the experiments for two-class problems, the generalization ability is improved by the bias term and slope optimization. However, the obtained generalization ability is inferior to that of the EMAMC and L1 SVM. Therefore, the unconstrained MAMC is not as powerful as the EMAMC and L1 SVM.

References

1. Vapnik, V.N.: Statistical Learning Theory. Wiley, New York (1998)

2. Abe, S.: Support Vector Machines for Pattern Classification, 2nd edn. Springer, London (2010)

3. Lanckriet, G.R.G., El Ghaoui, L., Bhattacharyya, C., Jordan, M.I.: A robust minimax approach to classification. J. Mach. Learn. Res. 3, 555–582 (2002)

4. Huang, K., Yang, H., King, I., Lyu, M.R.: Learning large margin classifiers locally and globally. In: Proceedings of the Twenty-First International Conference on Machine Learning (ICML 2004), pp. 1–8 (2006)

5. Yeung, D.S., Wang, D., Ng, W.W.Y., Tsang, E.C.C., Wang, X.: Structured large margin machines: sensitive to data distributions. Mach. Learn. 68(2), 171–200 (2007)

6. Xue, H., Chen, S., Yang, Q.: Structural regularized support vector machine: a framework for structural large margin classifier. IEEE Trans. Neural Netw. 22(4), 573–587 (2011)

7. Peng, X., Xu, D.: Twin Mahalanobis distance-based support vector machines for pattern recognition. Inf. Sci. 200, 22–37 (2012)

8. Abe, S.: Training of support vector machines with Mahalanobis kernels. In: Duch, W., Kacprzyk, J., Oja, E., Zadrożny, S. (eds.) ICANN 2005. LNCS, vol. 3697, pp. 571–576. Springer, Heidelberg (2005)

9. Wang, D., Yeung, D.S., Tsang, E.C.C.: Weighted Mahalanobis distance kernels for support vector machines. IEEE Trans. Neural Netw. 18(5), 1453–1462 (2007)

10. Shen, C., Kim, J., Wang, L.: Scalable large-margin Mahalanobis distance metric learning. IEEE Trans. Neural Netw. 21(9), 1524–1530 (2010)

11. Liang, X., Ni, Z.: Hyperellipsoidal statistical classifications in a reproducing kernel Hilbert space. IEEE Trans. Neural Netw. 22(6), 968–975 (2011)

12. Fauvel, M., Chanussot, J., Benediktsson, J.A., Villa, A.: Parsimonious Mahalanobis kernel for the classification of high dimensional data. Pattern Recogn. 46(3), 845–854 (2013)

13. Reitmaier, T., Sick, B.: The responsibility weighted Mahalanobis kernel for semi-supervised training of support vector machines for classification. Inf. Sci. 323, 179–198 (2015)

14. Freund, Y., Schapire, R.E.: A decision-theoretic generalization of on-line learning and an application to boosting. J. Comput. Syst. Sci. 55(1), 119–139 (1997)

15. Reyzin, L., Schapire, R.E.: How boosting the margin can also boost classifier complexity. In: Proceedings of the 23rd International Conference on Machine Learning, pp. 753–760. ACM (2006)

16. Gao, W., Zhou, Z.-H.: On the doubt about margin explanation of boosting. Artif. Intell. 203, 1–18 (2013)

17. Garg, A., Roth, D.: Margin distribution and learning. In: Proceedings of the Twentieth International Conference (ICML) on Machine Learning, Washington, DC, USA, pp. 210–217 (2003)

18. Pelckmans, K., Suykens, J., Moor, B.D.: A risk minimization principle for a class of Parzen estimators. In: Platt, J.C., Koller, D., Singer, Y., Roweis, S.T. (eds.) Advances in Neural Information Processing Systems, vol. 20, pp. 1137–1144. Curran Associates Inc., New York (2008)

19. Aiolli, F., Da San Martino, G., Sperduti, A.: A kernel method for the optimization of the margin distribution. In: Kůrková, V., Neruda, R., Koutník, J. (eds.) ICANN 2008, Part I. LNCS, vol. 5163, pp. 305–314. Springer, Heidelberg (2008)

20. Zhang, L., Zhou, W.-D.: Density-induced margin support vector machines. Pattern Recogn. 44(7), 1448–1460 (2011)

21. Zhou, Z.-H., Zhang, T.: Large margin distribution machine. In: Twentieth ACM SIGKDD Conference on Knowledge Discovery and Data Mining, pp. 313–322 (2014)

22. Zhou, Z.-H.: Large margin distribution learning. In: El Gayar, N., Schwenker, F., Suen, C. (eds.) ANNPR 2014. LNCS, vol. 8774, pp. 1–11. Springer, Heidelberg (2014)

23. Rätsch, G., Onoda, T., Müller, K.-R.: Soft margins for AdaBoost. Mach. Learn. 42(3), 287–320 (2001)

24. Abe, S.: Fusing sequential minimal optimization and Newton’s method for support vector training. Int. J. Mach. Learn. Cybern. 7, 345–364 (2016). doi:10.1007/s13042-014-0265-x

Acknowledgment

This work was supported by JSPS KAKENHI Grant Number 25420438.

Copyright information

© 2016 Springer International Publishing AG

About this paper

Cite this paper

Abe, S. (2016). Improving Generalization Abilities of Maximal Average Margin Classifiers. In: Schwenker, F., Abbas, H., El Gayar, N., Trentin, E. (eds) Artificial Neural Networks in Pattern Recognition. ANNPR 2016. Lecture Notes in Computer Science(), vol 9896. Springer, Cham. https://doi.org/10.1007/978-3-319-46182-3_3

DOI: https://doi.org/10.1007/978-3-319-46182-3_3

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-46181-6

Online ISBN: 978-3-319-46182-3

eBook Packages: Computer Science, Computer Science (R0)