Abstract

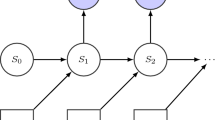

Reinforcement learning agents can acquire an optimal policy for achieving their objectives through trial and error. Appropriate design of the reward function is essential, because many reward functions can encode the same objective, yet different reward functions give rise to different learning processes. There is no systematic way to determine a good reward function for a given environment and objective. One possible approach is to find a reward function that imitates the learning strategy of a reference agent that is intelligent enough to adapt efficiently even to variable environments. In this study, we extend the apprenticeship learning framework to imitate a learning reference agent, whose policy may change in the course of optimization. For this imitation, we propose a new inverse reinforcement learning method based on that agent’s history of states and actions. When mimicking a reference agent trained on a simple 2-state Markov decision process, the proposed method performed better than apprenticeship learning.
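
To make the setting concrete, the following is a minimal sketch of a learning reference agent on a toy 2-state Markov decision process. It is not the method proposed in the paper. The dynamics, the reward, the use of tabular Q-learning, the decaying exploration schedule, and the names `simulate_learning_agent` and `optimal_action_rate` are all assumptions made for illustration. The sketch only shows that the recorded history of states and actions comes from a policy that changes while the agent learns, which is the situation the abstract describes.

```python
import random

def simulate_learning_agent(n_steps=4000, alpha=0.1, gamma=0.9, seed=0):
    """Run tabular Q-learning on a toy 2-state MDP; return the (state, action) history."""
    rng = random.Random(seed)
    q = [[0.0, 0.0], [0.0, 0.0]]  # q[state][action]
    state = 0
    history = []
    for t in range(n_steps):
        # Assumed exploration schedule: epsilon decays linearly from 1.0 to 0.05.
        epsilon = max(0.05, 1.0 - t / 1000.0)
        if rng.random() < epsilon:
            action = rng.randrange(2)  # explore uniformly
        else:
            action = 0 if q[state][0] >= q[state][1] else 1  # greedy, ties go to action 0
        # Assumed dynamics: action 0 stays in the current state, action 1 switches state.
        next_state = state if action == 0 else 1 - state
        # Assumed reward: 1 for landing in state 1, 0 otherwise.
        reward = 1.0 if next_state == 1 else 0.0
        # Standard Q-learning update.
        target = reward + gamma * max(q[next_state])
        q[state][action] += alpha * (target - q[state][action])
        history.append((state, action))
        state = next_state
    return history

def optimal_action_rate(history):
    """Fraction of steps on which the agent took the optimal action.

    Under the assumed dynamics and reward, the optimal actions are:
    switch in state 0 (action 1) and stay in state 1 (action 0).
    """
    hits = sum(1 for s, a in history if a == 1 - s)
    return hits / len(history)

history = simulate_learning_agent()
early = optimal_action_rate(history[:500])
late = optimal_action_rate(history[-500:])
print(f"early optimal-action rate: {early:.2f}, late: {late:.2f}")
```

Because the agent's behavior in the early part of the history differs from its behavior near the end, treating the whole history as samples from one fixed policy would blur the two phases together.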

Acknowledgement

This work was partly supported by the grant-in-aid for next artificial intelligence technology project of the New Energy and Industrial Technology Development Organization (NEDO) of Japan.

Copyright information

© 2015 Springer International Publishing Switzerland

About this paper

Cite this paper

Sakurai, S., Oba, S., Ishii, S. (2015). Inverse Reinforcement Learning Based on Behaviors of a Learning Agent. In: Arik, S., Huang, T., Lai, W., Liu, Q. (eds) Neural Information Processing. ICONIP 2015. Lecture Notes in Computer Science, vol 9489. Springer, Cham. https://doi.org/10.1007/978-3-319-26532-2_80

DOI: https://doi.org/10.1007/978-3-319-26532-2_80

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-26531-5

Online ISBN: 978-3-319-26532-2

eBook Packages: Computer Science, Computer Science (R0)