Abstract

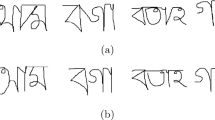

Air-writing, defined as tracing characters in three-dimensional free space through hand gestures, is the way forward for peripheral-independent, virtual interaction with devices. While recognizing a single unistroke character is fairly simple, recognizing continuous writing is challenging owing to the absence of delimiters between characters. Moreover, stray hand motion while writing adds noise to the input, making accurate recognition difficult. The key to accurate recognition of air-written characters lies in noise elimination and character segmentation from continuous writing. We propose a robust and hardware-independent framework for multi-digit unistroke numeral recognition in an air-writing environment. We present a sliding-window-based method that isolates a small segment of the spatio-temporal input from the air-writing activity for noise removal and digit segmentation. Recurrent Neural Networks (RNNs) show great promise in dealing with temporal data and are the basis of our architecture. Recognition of digits that have other digits as their sub-shapes is challenging. Capitalizing on how digits are commonly written, we propose a novel priority scheme to determine digit precedence. We use only sequential coordinates as input, which can be obtained from any generic camera, making our system widely accessible. Our experiments were conducted on English numerals using a combination of the MNIST and Pendigits datasets along with our own air-written English numerals dataset (ISI-Air Dataset). Additionally, we have created a noise dataset to classify noise. We observe a drop in accuracy as the number of digits written in a single continuous motion increases, because of noise generated during digit transitions. However, under standard conditions, our system achieved accuracies of 98.45% and 82.89% for single-digit and multiple-digit English numerals, respectively.
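
To make the sliding-window idea concrete, the following is a minimal sketch, not the authors' implementation. It assumes the input is a list of (x, y) coordinates and that some classifier labels each window as a digit or as noise. The names `sliding_windows`, `segment_digits`, and `classify`, the window size, the step, and the rule for collapsing repeated labels are all hypothetical choices made for illustration; in the paper the classifier would be the RNN, and the priority scheme for sub-shape digits is not modelled here.

```python
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, float]


def sliding_windows(points: Sequence[Point], size: int, step: int) -> List[Sequence[Point]]:
    """Cut a coordinate sequence into fixed-size windows taken every `step` points.

    If the sequence is shorter than `size`, a single shorter window is returned.
    """
    if size <= 0 or step <= 0:
        raise ValueError("size and step must be positive")
    last_start = max(len(points) - size, 0)
    return [points[s:s + size] for s in range(0, last_start + 1, step)]


def segment_digits(
    points: Sequence[Point],
    classify: Callable[[Sequence[Point]], str],  # hypothetical stand-in for the recognizer
    size: int,
    step: int,
    noise_label: str = "noise",
) -> List[str]:
    """Label every window, drop noise windows, and collapse runs of the same label.

    A noise window between two identical labels keeps them as separate digits
    (an assumption of this sketch, not a rule taken from the paper).
    """
    digits: List[str] = []
    previous = noise_label
    for window in sliding_windows(points, size, step):
        label = classify(window)
        if label != noise_label and label != previous:
            digits.append(label)
        previous = label
    return digits


# Toy usage: a fake classifier keyed on the x-coordinate of the window's first point.
toy_labels = {0.0: "7", 3.0: "7", 6.0: "noise", 9.0: "2"}
trajectory = [(float(i), 0.0) for i in range(12)]
result = segment_digits(trajectory, lambda w: toy_labels[w[0][0]], size=3, step=3)
print(result)
```

With the toy classifier, the two adjacent "7" windows collapse into one digit and the noise window is discarded, so the trajectory is read as the digits 7 and 2.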

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Rahman, A., Roy, P., Pal, U. (2020). Continuous Motion Numeral Recognition Using RNN Architecture in Air-Writing Environment. In: Palaiahnakote, S., Sanniti di Baja, G., Wang, L., Yan, W. (eds) Pattern Recognition. ACPR 2019. Lecture Notes in Computer Science, vol 12046. Springer, Cham. https://doi.org/10.1007/978-3-030-41404-7_6

DOI: https://doi.org/10.1007/978-3-030-41404-7_6

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-41403-0

Online ISBN: 978-3-030-41404-7

eBook Packages: Computer Science, Computer Science (R0)