Abstract

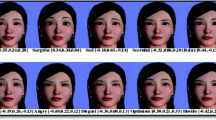

We present work on a new anatomically based 3D parametric lip model for synchronized speech that also supports the lip motion required for facial expressions. The lip model is represented with a B-spline surface and high-level parameters which define the articulation of the surface. The model parameterization is muscle-based to allow for specification of a wide range of lip motion. The B-spline surface specifies not only the external portion of the lips, but the internal surface as well. This complete geometric representation replaces the original lip geometry of any facial model.

We also describe a method to render the lip model using a procedural texturing paradigm to give color, lighting and surface texture for increased realism. We use our lip model in a text-to-audio-visual-speech system to achieve speech-synchronized facial animation.

Copyright information

© 2001 Springer Science+Business Media New York

About this chapter

Cite this chapter

King, S.A., Parent, R.E., Olsafsky, B.L. (2001). A Muscle-Based 3D Parametric Lip Model for Speech-Synchronized Facial Animation. In: Magnenat-Thalmann, N., Thalmann, D. (eds) Deformable Avatars. IFIP — The International Federation for Information Processing, vol 68. Springer, Boston, MA. https://doi.org/10.1007/978-0-306-47002-8_2

DOI: https://doi.org/10.1007/978-0-306-47002-8_2

Publisher Name: Springer, Boston, MA

Print ISBN: 978-1-4757-4930-4

Online ISBN: 978-0-306-47002-8

eBook Packages: Springer Book Archive