Abstract

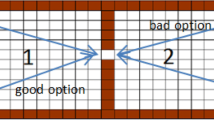

One of the most interesting problems faced by Artificial Intelligence researchers is reproducing a capability typical of living beings: learning to perform motor tasks, a problem known as skill acquisition. The task is very difficult because the overall behavior of an agent results from a complex activity involving sensory, planning, and motor processing. In this paper, I present a novel approach to acquiring new skills, named Soft Teaching, characterized by a learning-by-experience process in which an agent exploits a symbolic, qualitative description of the task to perform; this description cannot, however, be used directly for control purposes. A specific Soft Teaching technique, named Symmetries, was implemented and tested on a continuous-domain version of the well-known pole-balancing problem.
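The abstract does not say how Symmetries works, so the following is only an illustrative sketch of the kind of qualitative knowledge a pole-balancing task offers, not the paper's method. It assumes the standard frictionless cart-pole equations with commonly used constants (none of them taken from the paper) and a real-valued force as the continuous action. The hypothetical helper `mirrored_experience` encodes the statement "the task is left-right symmetric": this fact cannot drive the cart by itself, but it lets a learner derive a second valid transition from each one it experiences.

```python
import math

# Commonly used frictionless cart-pole constants (assumed, not from the paper).
GRAVITY = 9.8
CART_MASS = 1.0
POLE_MASS = 0.1
POLE_HALF_LENGTH = 0.5
TIME_STEP = 0.02


def step(state, force):
    """Advance (x, x_dot, theta, theta_dot) by one Euler step under a real-valued force."""
    x, x_dot, theta, theta_dot = state
    total_mass = CART_MASS + POLE_MASS
    sin_t, cos_t = math.sin(theta), math.cos(theta)
    temp = (force + POLE_MASS * POLE_HALF_LENGTH * theta_dot ** 2 * sin_t) / total_mass
    theta_acc = (GRAVITY * sin_t - cos_t * temp) / (
        POLE_HALF_LENGTH * (4.0 / 3.0 - POLE_MASS * cos_t ** 2 / total_mass)
    )
    x_acc = temp - POLE_MASS * POLE_HALF_LENGTH * theta_acc * cos_t / total_mass
    return (
        x + TIME_STEP * x_dot,
        x_dot + TIME_STEP * x_acc,
        theta + TIME_STEP * theta_dot,
        theta_dot + TIME_STEP * theta_acc,
    )


def mirror(state):
    """Reflect a state about the vertical axis: every component changes sign."""
    return tuple(-value for value in state)


def mirrored_experience(state, force, next_state):
    """Hypothetical helper: derive the left-right reflected transition."""
    return mirror(state), -force, mirror(next_state)


if __name__ == "__main__":
    state, force = (0.1, -0.2, 0.05, 0.3), 2.5
    next_state = step(state, force)
    m_state, m_force, m_next = mirrored_experience(state, force, next_state)
    # Simulating from the mirrored state with the mirrored force should
    # reproduce the mirrored successor, up to floating-point rounding.
    print(step(m_state, m_force))
    print(m_next)
```

In this sketch the symmetry acts purely as data augmentation for whatever learner consumes the transitions; how the paper actually uses the qualitative description may differ.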

Copyright information

© 1997 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Baroglio, C. (1997). Exploiting qualitative knowledge to enhance skill acquisition. In: van Someren, M., Widmer, G. (eds) Machine Learning: ECML-97. ECML 1997. Lecture Notes in Computer Science, vol 1224. Springer, Berlin, Heidelberg. https://doi.org/10.1007/3-540-62858-4_71

DOI: https://doi.org/10.1007/3-540-62858-4_71

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-62858-3

Online ISBN: 978-3-540-68708-5

eBook Packages: Springer Book Archive