Abstract

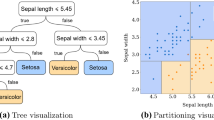

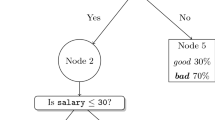

The regression problem is to approximate a function from sample values. Decision trees and decision rules achieve this task by finding regions with constant function values. While recursive partitioning methods are strong in dynamic feature selection and in explanatory capabilities, an essential weakness of these methods is the approximation of a region by a constant value. We propose a new method that relies on searching for similar cases to boost performance. The new method preserves the strengths of the partitioning schemes while compensating for the weaknesses that are introduced with constant-value regions. Our method relies on searching for the most relevant cases using a rule-based system, and then using these cases for determining the function value. Experimental results demonstrate that the new method can often yield superior regression performance.
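The two-stage idea described above (use rules to select the relevant cases, then use those cases to determine the function value) can be illustrated with a minimal sketch. This is not the authors' algorithm. The rule set `RULES`, the helper names, the squared Euclidean distance, the choice of `k`, and the averaging step are all assumptions made for illustration. The sketch compares a constant-value region prediction with a prediction taken from the nearest cases that fall under the same rule.

```python
from statistics import mean

# Hypothetical ordered rule set over a one-feature input: first match wins.
# A real system would induce these rules from the training data.
RULES = [
    lambda x: x[0] < 0.5,   # rule 0: "low" region
    lambda x: True,         # rule 1: default region
]

def covering_rule(x):
    """Return the index of the first rule whose condition holds for x."""
    for i, condition in enumerate(RULES):
        if condition(x):
            return i
    raise ValueError("no rule covers the input")

def region_mean(x, cases):
    """Constant-value prediction: mean target of all cases under x's rule."""
    r = covering_rule(x)
    return mean(y for cx, y in cases if covering_rule(cx) == r)

def rule_plus_cases(x, cases, k=2):
    """Assumed combination: average the k nearest cases under x's rule."""
    r = covering_rule(x)
    relevant = [(cx, y) for cx, y in cases if covering_rule(cx) == r]
    # Sort the rule-selected cases by squared Euclidean distance to the query.
    relevant.sort(key=lambda c: sum((a - b) ** 2 for a, b in zip(c[0], x)))
    return mean(y for _, y in relevant[:k])

# Toy training cases sampled from f(x) = x.
cases = [((0.0,), 0.0), ((0.2,), 0.2), ((0.4,), 0.4),
         ((0.6,), 0.6), ((0.9,), 0.9)]

query = (0.35,)
print(region_mean(query, cases))         # constant over the whole "low" region
print(rule_plus_cases(query, cases, 2))  # varies within the region
```

On this toy data the region mean is the same for every query below 0.5, while the case-based estimate tracks the target more closely inside the region. Both functions assume that at least one training case falls under the query's rule.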

Copyright information

© 1995 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Indurkhya, N., Weiss, S.M. (1995). Using case data to improve on rule-based function approximation. In: Veloso, M., Aamodt, A. (eds) Case-Based Reasoning Research and Development. ICCBR 1995. Lecture Notes in Computer Science, vol 1010. Springer, Berlin, Heidelberg. https://doi.org/10.1007/3-540-60598-3_20

DOI: https://doi.org/10.1007/3-540-60598-3_20

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-60598-0

Online ISBN: 978-3-540-48446-2

eBook Packages: Springer Book Archive