Abstract

When the correct prior is known, Bayesian algorithms give optimal decisions, and accurate confidence values for predictions can be obtained. If the prior is incorrect, however, these confidence values have no theoretical basis, even though the algorithms' predictive performance may be good. There also exist many successful learning algorithms that depend only on the iid assumption. Often, however, they produce no confidence values for their predictions. Bayesian frameworks are often applied to these algorithms in order to obtain such values, but they can rely on unjustified priors.

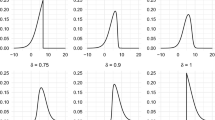

In this paper we outline the typicalness framework, which can be used in conjunction with many other machine learning algorithms. The framework provides confidence information based only on the standard iid assumption and so is much more robust to different underlying data distributions. We show how the framework can be applied to existing algorithms. We also present experimental results which show that the typicalness approach performs close to Bayes when the prior is known to be correct. Unlike Bayes, however, the method still gives accurate confidence values even when different data distributions are considered.
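
As a rough illustration of how confidence information can be derived from the iid assumption alone, the sketch below computes typicalness p-values for candidate labels of a new example. It is a minimal sketch and not the implementation used in the paper: the strangeness measure (distance to the class mean), the one-dimensional toy data, and all function names are assumptions chosen for brevity. The only part taken from the framework itself is the p-value, the fraction of examples in the augmented sample that are at least as strange as the new one.

```python
from typing import Dict, List, Sequence


def p_value(strangeness: Sequence[float]) -> float:
    """Typicalness of the last example in the sample: the fraction of
    examples (the last one included) that are at least as strange as it."""
    alpha_new = strangeness[-1]
    return sum(a >= alpha_new for a in strangeness) / len(strangeness)


def class_mean_strangeness(xs: Sequence[float], ys: Sequence[int]) -> List[float]:
    """Hypothetical strangeness measure (an assumption for this sketch):
    the distance from each example to the mean of its own class."""
    means = {}
    for label in set(ys):
        members = [x for x, y in zip(xs, ys) if y == label]
        means[label] = sum(members) / len(members)
    return [abs(x - means[y]) for x, y in zip(xs, ys)]


def typicalness(xs: Sequence[float], ys: Sequence[int],
                x_new: float, labels: Sequence[int]) -> Dict[int, float]:
    """Try each candidate label for x_new, append the completed example to
    the training sample, and compute the p-value of the new example."""
    result = {}
    for label in labels:
        alphas = class_mean_strangeness(list(xs) + [x_new], list(ys) + [label])
        result[label] = p_value(alphas)
    return result


# Toy data: two well-separated classes on the real line.
train_x = [0.0, 0.2, 0.4, 5.0, 5.2, 5.4]
train_y = [0, 0, 0, 1, 1, 1]
p = typicalness(train_x, train_y, x_new=0.1, labels=[0, 1])
print(p)  # label 0 receives a much larger p-value than label 1
```

A label with a small p-value makes the augmented sample look atypical for iid data, so it can be rejected at the corresponding significance level. No prior over the data distribution enters the computation, which is the property the abstract contrasts with the Bayesian approach.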

© 2001 Springer-Verlag Berlin Heidelberg

Cite this paper

Melluish, T., Saunders, C., Nouretdinov, I., Vovk, V. (2001). Comparing the Bayes and Typicalness Frameworks. In: De Raedt, L., Flach, P. (eds) Machine Learning: ECML 2001. Lecture Notes in Computer Science, vol 2167. Springer, Berlin, Heidelberg. https://doi.org/10.1007/3-540-44795-4_31

DOI: https://doi.org/10.1007/3-540-44795-4_31

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-42536-6

Online ISBN: 978-3-540-44795-5

eBook Packages: Springer Book Archive