Abstract

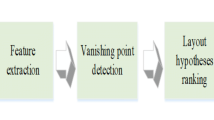

Recovering the spatial layout of cluttered indoor scenes is a challenging problem. Current methods generate layout hypotheses from vanishing point estimates computed from 2D image features. This approach fails in highly cluttered scenes, where most image features come from clutter rather than from the room’s geometric structure. In this paper, we propose using human detections as cues to estimate the vanishing points more accurately. Our method builds on the observation that people are often the focus of indoor scenes, and that the scene and the people within it should have consistent geometric configurations in 3D space. We contribute a new data set of highly cluttered indoor scenes containing people, on which we provide baselines and evaluate our method. The evaluation shows that our approach improves the 3D interpretation of scenes.

Copyright information

© 2013 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Chao, YW., Choi, W., Pantofaru, C., Savarese, S. (2013). Layout Estimation of Highly Cluttered Indoor Scenes Using Geometric and Semantic Cues. In: Petrosino, A. (eds) Image Analysis and Processing – ICIAP 2013. ICIAP 2013. Lecture Notes in Computer Science, vol 8157. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-41184-7_50

DOI: https://doi.org/10.1007/978-3-642-41184-7_50

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-41183-0

Online ISBN: 978-3-642-41184-7

eBook Packages: Computer Science, Computer Science (R0)