Abstract

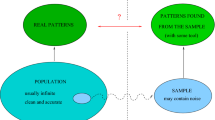

A theory of pattern analysis has to provide a criterion for filtering out the information that is relevant for identifying patterns. The set of potential patterns, also called the hypothesis class of the problem, defines the admissible explanations of the available data and specifies the context for a pattern analysis task. Fluctuations in the measurements limit the precision with which such patterns can be identified. Effectively, the distinguishable patterns define a code in a fictitious communication scenario in which the selected cost function, together with a stochastic data source, plays the role of a noisy “channel”. Maximizing the capacity of this channel determines the penalized costs of the pattern analysis problem with a data-dependent regularization strength. The tradeoff between informativeness and robustness in statistical inference is mirrored in the balance between high information rate and zero communication error, thereby giving rise to a new notion of context sensitive information.

© 2011 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Buhmann, J.M. (2011). Context Sensitive Information: Model Validation by Information Theory. In: Martínez-Trinidad, J.F., Carrasco-Ochoa, J.A., Ben-Youssef Brants, C., Hancock, E.R. (eds) Pattern Recognition. MCPR 2011. Lecture Notes in Computer Science, vol 6718. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-21587-2_2

DOI: https://doi.org/10.1007/978-3-642-21587-2_2

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-21586-5

Online ISBN: 978-3-642-21587-2

eBook Packages: Computer Science, Computer Science (R0)