Abstract

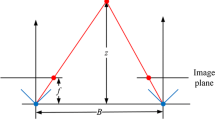

Heavy occlusions in cluttered scenes pose significant challenges for many computer vision applications. Recent light field imaging systems provide new see-through capabilities through synthetic aperture imaging (SAI) to overcome the occlusion problem. Existing synthetic aperture imaging methods, however, emulate focusing at a specific depth layer and are incapable of producing an all-in-focus see-through image. Alternative in-painting algorithms can generate visually plausible results but cannot guarantee the correctness of the result. In this paper, we present a novel depth-free all-in-focus SAI technique based on light-field visibility analysis. Specifically, we partition the scene into multiple visibility layers to deal directly with layer-wise occlusion, and apply an optimization framework to propagate the visibility information between layers. On each layer, visibility and optimal focus depth estimation is formulated as a multi-label energy minimization problem. The energy integrates the visibility mask from previous layers, multi-view intensity consistency, and a depth smoothness constraint. We compare our method with state-of-the-art solutions. Extensive experimental results, with qualitative and quantitative analysis, demonstrate the effectiveness and superiority of our approach.
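As a rough illustration of the quantities mentioned above, the following sketch shows conventional shift-and-average refocusing at a single focal plane, with an optional per-view visibility mask and a per-pixel variance that can serve as a simple multi-view intensity consistency measure. It is a minimal sketch under stated assumptions, not the formulation used in the paper: the function name `refocus`, the planar camera layout, integer pixel shifts, and the variance-based cost are all illustrative choices.

```python
import numpy as np

def refocus(views, offsets, disparity, masks=None):
    """Shift-and-average refocusing at one focal plane (illustrative only).

    views:     (N, H, W) grayscale images from N cameras
    offsets:   (N, 2) camera offsets (dy, dx) relative to the reference view
    disparity: pixel shift per unit camera offset for the chosen focal plane
    masks:     optional (N, H, W) booleans, True where a pixel is assumed visible
    Returns the masked mean image, the masked per-pixel variance, and the
    number of views contributing to each pixel.
    """
    views = np.asarray(views, dtype=float)
    if masks is None:
        masks = np.ones(views.shape, dtype=bool)
    masks = np.asarray(masks, dtype=bool)

    shifted = np.empty_like(views)
    shifted_masks = np.empty(views.shape, dtype=bool)
    for i in range(views.shape[0]):
        # Integer shift that aligns view i with the reference at this plane.
        dy, dx = np.rint(np.asarray(offsets[i]) * disparity).astype(int)
        shifted[i] = np.roll(views[i], (dy, dx), axis=(0, 1))
        shifted_masks[i] = np.roll(masks[i], (dy, dx), axis=(0, 1))

    count = shifted_masks.sum(axis=0)
    safe = np.maximum(count, 1)  # avoid division by zero where no view is visible
    mean = (shifted * shifted_masks).sum(axis=0) / safe
    var = (((shifted - mean) ** 2) * shifted_masks).sum(axis=0) / safe
    return mean, var, count
```

Low variance at a pixel suggests that the unmasked views agree at that focal plane. A layer-wise method of the kind described in the abstract would combine such a consistency term with the visibility mask from previous layers and a smoothness term; those terms are not reproduced here.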

Copyright information

© 2014 Springer International Publishing Switzerland

About this paper

Cite this paper

Yang, T. et al. (2014). All-In-Focus Synthetic Aperture Imaging. In: Fleet, D., Pajdla, T., Schiele, B., Tuytelaars, T. (eds) Computer Vision – ECCV 2014. ECCV 2014. Lecture Notes in Computer Science, vol 8694. Springer, Cham. https://doi.org/10.1007/978-3-319-10599-4_1

DOI: https://doi.org/10.1007/978-3-319-10599-4_1

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-10598-7

Online ISBN: 978-3-319-10599-4

eBook Packages: Computer Science, Computer Science (R0)