Abstract

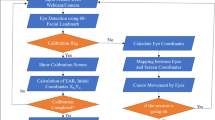

This paper proposes a communication interface that uses eye gaze and head gestures. It builds on visual sensorimotor integration through eye-head cooperation; in particular, a head gesture accompanied by the vestibulo-ocular reflex is used to select an object on the screen. An eye-mark recorder and a motion tracking system were used to track eye movement and head movement, respectively. A nonverbal response animation with eye contact was introduced into the interaction system. To identify head gestures, we adopted a modified dynamic programming (DP) matching method combined with fuzzy inference.
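The abstract names DP matching and fuzzy inference without giving details. The Python below is only a rough sketch of how the two ideas can fit together; it is not the paper's modified or successive DP matching. It uses the classic symmetric DP matching recurrence (diagonal steps weighted twice, cost normalized by the combined length) to compare a head-motion sequence with each gesture template. It then fires one fuzzy rule per template: IF the head path is near the template AND the gaze stays fixed THEN it is that gesture, with `min` as the fuzzy AND. The "gaze stays fixed" condition is our stand-in for the abstract's idea that the vestibulo-ocular reflex keeps the eyes on the target while the head moves. Everything specific here is hypothetical: the (yaw, pitch) head features, the screen-coordinate gaze samples, the `nod`/`shake` templates, the membership breakpoints, and the 0.5 threshold.

```python
import math
from typing import Dict, List, Optional, Sequence, Tuple

# Hypothetical feature: one (yaw, pitch) head-angle sample, or one (x, y) gaze point.
Point = Tuple[float, float]


def dp_distance(seq: Sequence[Point], template: Sequence[Point]) -> float:
    """Classic symmetric DP matching (no slope constraint or window),
    normalized by the total length of both sequences."""
    n, m = len(seq), len(template)
    g = [[math.inf] * (m + 1) for _ in range(n + 1)]
    g[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = math.dist(seq[i - 1], template[j - 1])
            g[i][j] = min(
                g[i - 1][j] + d,          # advance in the input only
                g[i - 1][j - 1] + 2 * d,  # diagonal step, weighted twice
                g[i][j - 1] + d,          # advance in the template only
            )
    return g[n][m] / (n + m)


def falling(x: float, full: float, zero: float) -> float:
    """Membership 1 at or below `full`, 0 at or above `zero`, linear between."""
    if x <= full:
        return 1.0
    if x >= zero:
        return 0.0
    return (zero - x) / (zero - full)


def gaze_dispersion(gaze: Sequence[Point]) -> float:
    """Mean distance of gaze samples from their centroid."""
    cx = sum(p[0] for p in gaze) / len(gaze)
    cy = sum(p[1] for p in gaze) / len(gaze)
    return sum(math.dist(p, (cx, cy)) for p in gaze) / len(gaze)


def classify(head: Sequence[Point], gaze: Sequence[Point],
             templates: Dict[str, List[Point]],
             threshold: float = 0.5) -> Optional[str]:
    """Fire one rule per template:
    IF head path is near the template AND gaze stays fixed THEN gesture.
    Fuzzy AND is min; the strongest rule wins if it reaches `threshold`."""
    steady = falling(gaze_dispersion(gaze), full=10.0, zero=40.0)  # assumed breakpoints
    best_name, best_strength = None, 0.0
    for name, tmpl in templates.items():
        near = falling(dp_distance(head, tmpl), full=2.0, zero=8.0)  # assumed breakpoints
        strength = min(near, steady)
        if strength > best_strength:
            best_name, best_strength = name, strength
    return best_name if best_strength >= threshold else None


templates = {  # hypothetical gesture templates, (yaw, pitch) in degrees
    "nod": [(0.0, 0.0), (0.0, -10.0), (0.0, 0.0), (0.0, -10.0), (0.0, 0.0)],
    "shake": [(0.0, 0.0), (-10.0, 0.0), (0.0, 0.0), (10.0, 0.0), (0.0, 0.0)],
}
head = [(0.0, 0.0), (0.0, -5.0), (0.0, -10.0), (0.0, -4.0),
        (0.0, 0.0), (0.0, -9.0), (0.0, 0.0)]
gaze = [(400.0, 300.0), (402.0, 301.0), (399.0, 298.0), (401.0, 300.0)]
print(classify(head, gaze, templates))  # → nod
```

The DP step lets a gesture performed faster, slower, or with uneven timing still align with its template. The fuzzy rule lets the system reject an input outright when no template fires strongly enough, for example when the eyes wander during the head movement, rather than forcing every movement into some gesture class.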

About this article

Cite this article

Nonaka, H. Communication Interface with Eye-Gaze and Head Gesture Using Successive DP Matching and Fuzzy Inference. Journal of Intelligent Information Systems 21, 105–112 (2003). https://doi.org/10.1023/A:1024754314969

DOI: https://doi.org/10.1023/A:1024754314969