Abstract

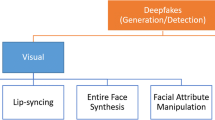

In the last few years, with the advent of deepfake videos, image forgery has become a serious threat. In a deepfake video, a person's face, emotion or speech is replaced by someone else's face, a different emotion or different speech using deep learning technology. These videos are often so sophisticated that traces of manipulation are difficult to detect. They can have a heavy social, political and emotional impact on individuals as well as on society. Social media are the most common and serious targets because they are vulnerable platforms that can be used to blackmail or defame a person. Several existing works detect deepfake videos, but very few attempts have been made for videos in social media. The first step towards preempting misleading deepfake videos on social media is to detect them. Our paper presents a novel neural network-based method to detect fake videos. We applied a key video frame extraction technique to reduce the computation needed to detect deepfake videos. A model consisting of a convolutional neural network (CNN) and a classifier network is proposed along with the algorithm. The Xception net was chosen over two other structures, InceptionV3 and ResNet50, for pairing with our classifier. Our model is a visual artifact-based detection technique: the feature vectors from the CNN module are used as the input of the subsequent classifier network, which classifies the video. We used the FaceForensics++ and Deepfake Detection Challenge datasets to arrive at the best model. Our model detects highly compressed deepfake videos in social media with very high accuracy and reduced computational requirements. We achieved 98.5% accuracy on the FaceForensics++ dataset and 92.33% accuracy on a combined dataset of FaceForensics++ and Deepfake Detection Challenge. Our model can detect any autoencoder-generated video. Our method detected almost all fake videos that possess more than one key video frame.
The accuracy figures reported here are for the case in which a fake video contains only one key video frame. The simplicity of the method will help people check the authenticity of a video. Our work is focused on, but not limited to, addressing the social and economic issues caused by fake videos in social media. In this paper, we achieve high accuracy without training the model on an enormous amount of data. The key video frame extraction method reduces the computation significantly compared to existing works.
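The abstract does not spell out how key video frames are selected or how per-frame decisions are combined into a video-level label. The following is a minimal sketch under two stated assumptions, not the authors' implementation: key video frames are taken to be the codec's intra-coded (I) frames, which FFmpeg's `select` filter can isolate with `eq(pict_type,I)`, and a video is labeled fake when the mean of its per-key-frame "fake" probabilities exceeds 0.5. The function names `keyframe_extraction_cmd` and `classify_video`, the output file pattern and the threshold are hypothetical.

```python
from __future__ import annotations

from statistics import mean


def keyframe_extraction_cmd(video_path: str, out_pattern: str = "key_%04d.png") -> list[str]:
    """Build (but do not run) an FFmpeg argv that keeps only I-frames.

    Assumption: "key video frames" are the codec's intra-coded (I) frames.
    Intended for subprocess.run(cmd, check=True); no shell quoting is needed.
    """
    return [
        "ffmpeg",
        "-i", video_path,
        # The select filter passes a frame only when its picture type is I.
        "-vf", "select='eq(pict_type,I)'",
        # Variable-rate output, so discarded frames are not re-duplicated.
        # FFmpeg builds older than 5.1 spell this "-vsync vfr".
        "-fps_mode", "vfr",
        out_pattern,
    ]


def classify_video(frame_fake_probs: list[float], threshold: float = 0.5) -> str:
    """Hypothetical video-level rule: average the per-key-frame fake probabilities."""
    if not frame_fake_probs:
        raise ValueError("video has no key frames to classify")
    return "fake" if mean(frame_fake_probs) > threshold else "real"


if __name__ == "__main__":
    print(keyframe_extraction_cmd("clip.mp4"))
    # Illustrative scores, standing in for CNN + classifier outputs on three key frames.
    print(classify_video([0.91, 0.78, 0.64]))
```

Under this design only the extracted key frames pass through the CNN and classifier, so the number of forward passes scales with the number of key frames rather than the total frame count. The scores in the example are illustrative and are not results from the paper.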

References

Zhu J, Park T, Isola P, Efros AA. Unpaired image-to-image translation using cycle-consistent adversarial networks. In: Proceedings of IEEE international conference on computer vision (ICCV). 2017. p. 2242–2251.

Karras T, Laine S, Aittala M, Hellsten J, Lehtinen J, Aila T. Analyzing and improving the image quality of StyleGAN. In: Proceedings of IEEE/CVF conference on computer vision and pattern recognition (CVPR); 2020. p. 8107–8116.

Zhao Y, Chen C. Unpaired image-to-image translation via latent energy transport. 2020. arXiv:2012.00649.

Faceswap. Web. https://github.com/deepfakes/faceswap. Accessed 19 Jan 2021.

DFaker. Web. https://github.com/dfaker/df. Accessed 19 Jan 2021.

Chou HTG, Edge N. They are happier and having better lives than I am: the impact of using Facebook on perceptions of others' lives. Cyberpsychol Behav Soc Netw. 2012;15(2):117–21.

Baccarella C, Wagner T, Kietzmann J, McCarthy I. Social media? It's serious! Understanding the dark side of social media. Eur Manag J. 2018;36:431–8.

Allcott H, Gentzkow M. Social media and fake news in the 2016 election. J Econ Perspect. 2017;31(2):211–36.

Faceswap. Web. https://faceswap.dev/. Accessed 19 Jan 2021.

DeepFaceLab. Web. https://github.com/iperov/DeepFaceLab. Accessed 19 Jan 2021.

Deepfake Video. Web. https://edition.cnn.com/2019/06/11/tech/zuckerberg-deepfake/index.html. Accessed 19 Jan 2021.

Bregler C, Covell M, Slaney M. Video rewrite: driving visual speech with audio. In: SIGGRAPH'97: Proceedings of the 24th annual conference on computer graphics and interactive techniques; 1997. p. 353–360.

Garrido P, Valgaerts L, Rehmsen O, Thormaehlen T, Perez P, Theobalt C. Automatic face reenactment. In: Proceedings of IEEE conference on computer vision and pattern recognition (CVPR). Columbus, OH; 2014. p. 4217–4224.

Thies J, Zollhofer M, Niessner M, Valgaerts L, Stamminger M, Theobalt C. Realtime expression transfer for facial reenactment. In: Proceedings of ACM SIGGRAPH Asia 2015 ACM transactions on graphics (TOG), Art No. 183, vol. 34; 2015. Accessed 19 Jan 2021.

Suwajanakorn S, Seitz SM, Kemelmacher-Shlizerman I. Synthesizing Obama: learning lip sync from audio. ACM Trans Graph (TOG). 2017;36:4.

Tewari A, Zollhofer M, Kim H, Garrido P, Bernard F, Perez P, Theobalt C. MoFA: model-based deep convolutional face autoencoder for unsupervised monocular reconstruction. In: Proceedings of IEEE international conference on computer vision (ICCV). 2017. pp. 3735–3744.

Goodfellow I, Pouget-Abadie J, Mirza M, Xu B, Warde-Farley D, Ozair S, Courville A, Bengio Y. Generative adversarial nets. In: Proceedings of advances in neural information processing systems. 2014. p. 2672–2680.

Chesney R, Citron D. Deepfakes: a looming crisis for national security, democracy and privacy? Web. 2018. https://www.lawfareblog.com/deepfakes-looming-crisis-national-security-democracy-and-privacy

Kietzmann J, Lee LW, McCarthy IP, Kietzmann TC. Deepfakes: trick or treat? Bus Horiz. 2020;63(2):135–46.

Reid S. The deepfake dilemma: reconciling privacy and first amendment protections. Univ Pa J Const Law. 2020. https://ssrn.com/abstract=3636464 (Forthcoming).

Gerstner E. Face/off: “DeepFake” face swaps and privacy laws. Def Couns J. 2020;87:1.

Westerlund M. The emergence of Deepfake technology: a review. Technol Innov Manag Rev. 2019;9:39–52. https://doi.org/10.22215/timreview/1282. Accessed 19 Jan 2021.

Mitra A, Mohanty SP, Corcoran P, Kougianos E. A novel machine learning based method for deepfake video detection in social media. In: Proceedings of the 6th IEEE international symposium on smart electronic systems (iSES). 2020. (In Press).

Chollet F. Xception: deep learning with depthwise separable convolutions. CoRR. 2016. arXiv:1610.02357. Accessed 19 Jan 2021.

Szegedy C, Vanhoucke V, Ioffe S, Shlens J, Wojna Z. Rethinking the inception architecture for computer vision. 2015. arXiv:1512.00567. Accessed 19 Jan 2021.

He K, Zhang X, Ren S, Sun J. Deep residual learning for image recognition. In: Proceedings of IEEE conference on computer vision and pattern recognition (CVPR). 2016. p. 770–778. Accessed 19 Jan 2021.

Rossler A, Cozzolino D, Verdoliva L, Riess C, Thies J, Niessner M. FaceForensics++: learning to detect manipulated facial images. In: Proceedings of IEEE international conference on computer vision. 2019. p. 1–11. Accessed 19 Jan 2021.

Dolhansky B, Bitton J, Pflaum B, Lu J, Howes R, Wang M, Ferrer CC. The deepfake detection challenge dataset. 2020.

Nguyen HH, Yamagishi J, Echizen I. Capsule-forensics: using capsule networks to detect forged images and videos. In: Proceedings of IEEE international conference on acoustics, speech and signal processing (ICASSP); 2019. p. 2307–2311.

Hashmi MF, Ashish BKK, Keskar AG, Bokde ND, Yoon JH, Geem ZW. An exploratory analysis on visual counterfeits using conv-lstm hybrid architecture. IEEE Access. 2020;8:101293–308. Accessed 19 Jan 2021.

Kumar A, Bhavsar A, Verma R. Detecting deepfakes with metric learning. In: 2020 8th international workshop on biometrics and forensics (IWBF). 2020. p. 1–6.

D’Amiano L, Cozzolino D, Poggi G, Verdoliva L. A PatchMatch-based dense-field algorithm for video copy-move detection and localization. IEEE Trans Circuits Syst Video Technol. 2019;29(3):669–82. Accessed 19 Jan 2021.

Gironi A, Fontani M, Bianchi T, Piva A, Barni M. A video forensic technique for detecting frame deletion and insertion. In: Proceedings of IEEE international conference on acoustics, speech and signal processing (ICASSP); 2014. p. 6226–6230.

Ding X, Yang G, Li R, Zhang L, Li Y, Sun X. Identification of motion-compensated frame rate up-conversion based on residual signals. IEEE Trans Circuits Syst Video Technol. 2018;28(7):1497–512.

Sabir E, Cheng J, Jaiswal A, AbdAlmageed W, Masi I, Natarajan P. Recurrent convolutional strategies for face manipulation detection in videos. In: Proceedings of the IEEE conference on computer vision and pattern recognition workshops; 2019. p. 80–87. Accessed 19 Jan 2021.

Güera D, Delp EJ. Deepfake video detection using recurrent neural networks. In: Proceedings of 15th IEEE international conference on advanced video and signal based surveillance; 2018. p. 1–6. Accessed 19 Jan 2021.

Li Y, Chang M, Lyu S. In Ictu Oculi: Exposing AI created fake videos by detecting eye blinking. In: Proceedings of IEEE international workshop on information forensics and security. 2018. p. 1–7. Accessed 19 Jan 2021.

Afchar D, Nozick V, Yamagishi J, Echizen I. MesoNet: a compact facial video forgery detection network. In: Proceedings of IEEE international workshop on information forensics and security (WIFS). 2018. p. 1–7.

Li Y, Lyu S. Exposing deepfake videos by detecting face warping artifacts. CoRR. 2018. Accessed 19 Jan 2021.

Matern F, Riess C, Stamminger M. Exploiting visual artifacts to expose deepfakes and face manipulations. In: Proceedings of IEEE winter applications of computer vision workshops; 2019. p. 83–92. Accessed 19 Jan 2021.

Simonyan K, Zisserman A. Very deep convolutional networks for large-scale image recognition. 2014. arXiv:1409.1556. Accessed 19 Jan 2021.

Yang X, Li Y, Lyu S. Exposing deep fakes using inconsistent head poses. In: Proceedings of IEEE international conference on acoustics, speech and signal processing (ICASSP). 2019. p. 8261–8265. Accessed 19 Jan 2021.

Jung T, Kim S, Kim K. DeepVision: deepfakes detection using human eye blinking pattern. IEEE Access. 2020;8:83144–54. Accessed 19 Jan 2021.

Mittal T, Bhattacharya U, Chandra R, Bera A, Manocha D. Emotions don’t lie: an audio-visual deepfake detection method using affective cues. In: Proceedings of the 28th ACM international conference on multimedia; 2020. p. 2823–2832.

Korshunov P, Marcel S. Deepfakes: a new threat to face recognition? Assessment and detection. 2018. Accessed 19 Jan 2021.

Hasan HR, Salah K. Combating deepfake videos using blockchain and smart contracts. IEEE Access. 2019;7:41596–606. Accessed 19 Jan 2021.

Sethi L, Dave A, Bhagwani R, Biwalkar A. Video security against deepfakes and other forgeries. J Discrete Math Sci Cryptogr. 2020;23:349–63.

Ganiyusufoglu I, Ngo LM, Savov N, Karaoglu S, Gevers T. Spatio-temporal features for generalized detection of deepfake videos. 2020.

Li X, Lang Y, Chen Y, Mao X, He Y, Wang S, Xue H, Lu Q. Sharp multiple instance learning for deepfake video detection. In: Proceedings of the 28th ACM international conference on multimedia. 2020. p. 1864–1872.

Charitidis P, Kordopatis-Zilos G, Papadopoulos S, Kompatsiaris I. Investigating the impact of pre-processing and prediction aggregation on the deepfake detection task. 2020. arXiv:2006.07084.

Srivastava N, Hinton G, Krizhevsky A, Sutskever I, Salakhutdinov R. Dropout: a simple way to prevent neural networks from overfitting. J Mach Learn Res. 2014;15(1):1929–58.

Baldi P, Sadowski PJ. Understanding dropout. In: Burges CJC, Bottou L, Welling M, Ghahramani Z, Weinberger KQ, editors. Advances in neural information processing systems, vol. 26; 2013. p. 2814–22. Accessed 19 Jan 2021.

Gloe T, Böhme R. The “Dresden image database” for benchmarking digital image forensics. In: Proceedings of the ACM symposium on applied computing; 2010. p. 1584–1590. Accessed 19 Jan 2021.

Amerini I, Ballan L, Caldelli R, Del Bimbo A, Serra G. A SIFT-based forensic method for copy-move attack detection and transformation recovery. IEEE Trans Inf Forensics Secur. 2011;6(3):1099–110.

Zampoglou M, Papadopoulos S, Kompatsiaris Y. Detecting image splicing in the wild (web). In: Proceedings of IEEE international conference on multimedia and expo workshops (ICMEW). 2015. p. 1–6. Accessed 19 Jan 2021.

Dolhansky B, Howes R, Pflaum B, Baram N, Canton-Ferrer C. The deepfake detection challenge (DFDC) preview dataset. 2019. arXiv:1910.08854. Accessed 19 Jan 2021.

Rössler A, Cozzolino D, Verdoliva L, Riess C, Thies J, Nießner M. FaceForensics: a large-scale video dataset for forgery detection in human faces. 2018. arXiv:1803.09179.

Bellard F, Niedermayer M. FFmpeg. Web. 2012. Accessed 19 Jan 2021.

Acknowledgements

This article is an extended version of our previous conference paper [23].

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest. No human or animal testing or participation was involved in this research. All data were obtained from public domain sources.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Mitra, A., Mohanty, S.P., Corcoran, P. et al. A Machine Learning Based Approach for Deepfake Detection in Social Media Through Key Video Frame Extraction. SN COMPUT. SCI. 2, 98 (2021). https://doi.org/10.1007/s42979-021-00495-x

DOI: https://doi.org/10.1007/s42979-021-00495-x