Abstract

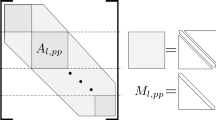

This paper describes the design and implementation of KIM-IO, a high-performance Input/Output (I/O) library for the Korean Integrated Model (KIM). The KIM is a next-generation global operational model for the Korea Meteorological Administration (KMA). Its horizontal discretization uses the spectral-element method on the cubed-sphere grid. The KIM-IO is developed to provide a consistent and efficient approach to the input and output of essential data on this particular grid structure in a multiprocessing environment. The KIM-IO provides three main features, namely sequential I/O, parallel I/O, and I/O decomposition methods, and adopts user-friendly interfaces similar to those of the Network Common Data Form (NetCDF). The efficiency of the KIM-IO is verified through experiments that analyze the performance of its three features. Its scalability is also verified by implementing the KIM-IO in the KIM at a resolution of approximately 12 km on the 4th supercomputer of KMA. The experimental results show that both regular parallel I/O and sequential I/O suffer performance degradation as the number of processes increases. However, the I/O decomposition method in the KIM-IO overcomes this degradation, leading to improved scalability. The results also indicate that, with the new I/O decomposition method, the KIM attains good parallel scalability up to O(100,000) cores.

Cite this article

Kim, J., Kwon, Y.C. & Kim, TH. A Scalable High-Performance I/O System for a Numerical Weather Forecast Model on the Cubed-Sphere Grid. Asia-Pacific J Atmos Sci 54 (Suppl 1), 403–412 (2018). https://doi.org/10.1007/s13143-018-0021-3

DOI: https://doi.org/10.1007/s13143-018-0021-3