Abstract

We consider minimizing a conic quadratic objective over a polyhedron. Such problems arise in parametric value-at-risk minimization, portfolio optimization, and robust optimization with ellipsoidal objective uncertainty, and they can be solved by polynomial interior point algorithms for conic quadratic optimization. However, interior point algorithms are not well suited for branch-and-bound algorithms for the discrete counterparts of these problems because they lack the effective warm starts needed to solve convex relaxations repeatedly and efficiently at the nodes of the search tree. To overcome this shortcoming, we reformulate the problem using the perspective of the quadratic function. The perspective reformulation lends itself to simple coordinate descent and bisection algorithms utilizing the simplex method for quadratic programming, which makes the solution methods amenable to warm starts and suitable for branch-and-bound algorithms. We test the simplex-based quadratic programming algorithms on convex as well as discrete instances and compare them with the state-of-the-art approaches. The computational experiments indicate that the proposed algorithms scale much better than interior point algorithms and return higher-precision solutions. In our experiments, for large convex instances, they provide up to 22x speed-up. For smaller discrete instances, the speed-up is about 13x over a barrier-based branch-and-bound algorithm and 6x over the LP-based branch-and-bound algorithm with extended formulations. The software that was reviewed as part of this submission was given the Digital Object Identifier (DOI) https://doi.org/10.5281/zenodo.1489153.

Notes

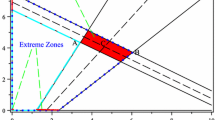

The time per node is similar for all combinations of parameters \(\Omega \), r and \(\alpha \). We plot the average for all instances with \(\Omega =2\).

Acknowledgements

This research is supported, in part, by grant FA9550-10-1-0168 from the Office of the Assistant Secretary of Defense for Research and Engineering.

Appendices

Appendix A. Branch-and-bound algorithm

Algorithm 3 describes the branch-and-bound algorithm used in computations. Throughout the algorithm, we maintain a list L of the nodes to be processed. Each node is a tuple (S, B, lb), where S is the subproblem, B is a basis for warm starting the continuous solver and lb is a lower bound on the objective value of S. In line 3, the list L is initialized with the root node. For each node, the algorithm calls a continuous solver (line 9), which returns a tuple \((x,{\bar{B}},z)\), where x is an optimal solution of S, \({\bar{B}}\) is the corresponding optimal basis and z is the optimal objective value (or \(\infty \) if S is infeasible). The algorithm then checks whether the node can be pruned (lines 10–11), whether x is integer (lines 12–15), or whether further branching is needed (lines 16–18).
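The loop described above can be sketched in Java as follows. This is a minimal illustration under stated assumptions, not the code distributed with the paper: the class and method names are hypothetical, a subproblem S is encoded only by variable bounds, the basis B is an opaque object that is passed along but never used, and the continuous solver is replaced by a toy separable quadratic whose relaxation is solved by clipping. The sketch also simplifies node selection to plain best-bound order, branches on the most fractional variable with a \(10^{-5}\) integrality tolerance, and omits gap-based termination.

```java
import java.util.PriorityQueue;

/** Illustrative best-bound branch-and-bound loop; every name here is hypothetical. */
final class BranchAndBoundSketch {

    /** Node (S, B, lb): S is encoded by variable bounds, B is an opaque warm-start basis. */
    static final class Node {
        final double[] lo, hi;
        final Object basis;
        final double lb;
        Node(double[] lo, double[] hi, Object basis, double lb) {
            this.lo = lo; this.hi = hi; this.basis = basis; this.lb = lb;
        }
    }

    /** Tuple (x, basis, z); z is +infinity when the subproblem is infeasible. */
    static final class Solution {
        final double[] x;
        final Object basis;
        final double z;
        Solution(double[] x, Object basis, double z) { this.x = x; this.basis = basis; this.z = z; }
    }

    /** Stand-in for the continuous solver called at each node. */
    interface ContinuousSolver { Solution solve(Node node); }

    static Solution solve(double[] lo, double[] hi, ContinuousSolver solver) {
        Solution incumbent = new Solution(null, null, Double.POSITIVE_INFINITY);
        PriorityQueue<Node> list = new PriorityQueue<>((a, b) -> Double.compare(a.lb, b.lb));
        list.add(new Node(lo, hi, null, Double.NEGATIVE_INFINITY)); // root node
        while (!list.isEmpty()) {
            Node node = list.poll();                        // node with the smallest lower bound
            if (node.lb >= incumbent.z) continue;           // bound inherited from the parent prunes
            Solution r = solver.solve(node);                // a warm start would use node.basis
            if (r.z >= incumbent.z) continue;               // prune; also covers infeasible nodes
            int branch = -1;
            double worst = 1e-5;                            // integrality tolerance
            for (int i = 0; i < r.x.length; i++) {
                double d = Math.abs(r.x[i] - Math.rint(r.x[i]));
                if (d > worst) { worst = d; branch = i; }
            }
            if (branch < 0) { incumbent = r; continue; }    // x is integer: new incumbent
            double[] hiDown = node.hi.clone();
            double[] loUp = node.lo.clone();
            hiDown[branch] = Math.floor(r.x[branch]);       // x_i <= floor(v_i)
            loUp[branch] = Math.ceil(r.x[branch]);          // x_i >= ceil(v_i)
            list.add(new Node(node.lo, hiDown, r.basis, r.z));
            list.add(new Node(loUp, node.hi, r.basis, r.z));
        }
        return incumbent;
    }

    /** Toy relaxation: minimize sum_i (x_i - a_i)^2 over the node's bounds (solved by clipping). */
    static ContinuousSolver toySolver(double[] a) {
        return node -> {
            double[] x = new double[a.length];
            double z = 0.0;
            for (int i = 0; i < a.length; i++) {
                if (node.lo[i] > node.hi[i]) return new Solution(null, null, Double.POSITIVE_INFINITY);
                x[i] = Math.max(node.lo[i], Math.min(node.hi[i], a[i]));
                z += (x[i] - a[i]) * (x[i] - a[i]);
            }
            return new Solution(x, null, z);
        };
    }

    public static void main(String[] args) {
        Solution s = solve(new double[] {0, 0}, new double[] {3, 3}, toySolver(new double[] {0.4, 1.7}));
        System.out.println(s.z + " at " + java.util.Arrays.toString(s.x));
    }
}
```

For the toy data a = (0.4, 1.7) with bounds [0, 3], the loop ends with the incumbent x = (0, 2) and an objective value of about 0.25. In an actual implementation the toy solver would be replaced by a call to the continuous method that accepts the stored basis as a warm start.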

We now describe the specific implementations of the different subroutines. For branching (line 17) we use the maximum infeasibility rule, which chooses the variable \(x_i\) with value \(v_i\) farthest from an integer (ties broken arbitrarily). The subproblems \(S_{\le }\) and \(S_{\ge }\) in line 18 are created by imposing the constraints \(x_i\le \lfloor v_i \rfloor \) and \(x_i\ge \lceil v_i \rceil \), respectively. The PULL routine in line 5 chooses, when possible, the child of the previous node that violates the bound constraint by the least amount, and chooses the node with the smallest lower bound when the previous node has no child nodes. The list L is thus implemented as a sorted list ordered by the bounds, so that the PULL operation is done in O(1) and the insertion is done in \(O(\log |L|)\) (note that in line 18 we only add to the list the node that is not to be processed immediately). A solution x is assumed to be integer (line 12) when the values of all variables are within \(10^{-5}\) of an integer. Finally, the algorithm is terminated when \(\frac{ub-lb_{best}}{\left| lb_{best}+10^{-10}\right| }\le 10^{-4}\), where \(lb_{best}\) is the minimum lower bound among all the nodes in the tree.
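The branching rule, the integrality test and the termination criterion above are simple enough to state directly in code. The following Java sketch is only an illustration: the class name BranchingRules and its method names are hypothetical and are not taken from the distributed software, and ties in the branching rule are broken in favor of the lowest index, which is one arbitrary choice among many.

```java
/** Illustrative helpers for the branching, integrality and termination rules; names are hypothetical. */
final class BranchingRules {

    static final double INT_TOL = 1e-5;   // integrality tolerance
    static final double GAP_TOL = 1e-4;   // relative optimality gap at termination

    /** Distance from v to the nearest integer. */
    static double infeasibility(double v) {
        return Math.abs(v - Math.rint(v));
    }

    /**
     * Maximum infeasibility rule: index of the variable whose value is farthest
     * from an integer, or -1 if every value is within INT_TOL of an integer.
     */
    static int branchingVariable(double[] x) {
        int best = -1;
        double worst = INT_TOL;
        for (int i = 0; i < x.length; i++) {
            double d = infeasibility(x[i]);
            if (d > worst) {
                worst = d;
                best = i;
            }
        }
        return best;
    }

    /** A solution is treated as integer when no variable qualifies for branching. */
    static boolean isInteger(double[] x) {
        return branchingVariable(x) < 0;
    }

    /** Termination test: (ub - lbBest) / |lbBest + 1e-10| <= 1e-4. */
    static boolean gapClosed(double ub, double lbBest) {
        return (ub - lbBest) / Math.abs(lbBest + 1e-10) <= GAP_TOL;
    }

    public static void main(String[] args) {
        double[] x = {1.0, 2.5, 0.9};
        System.out.println("branch on index " + branchingVariable(x));
        System.out.println("gap closed: " + gapClosed(100.005, 100.0));
    }
}
```

The \(10^{-10}\) shift in the denominator only guards against division by zero when the best lower bound is exactly zero. With an infinite upper bound (no incumbent yet) the test evaluates to false, so the search continues.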

The maximum infeasibility rule is chosen due to its simplicity. The other rules and parameters correspond to the ones used in the CPLEX branch-and-bound algorithm in its default configuration.

Appendix B. Code

We provide a Java implementation of the proposed algorithms in “Simplex QP-based methods for minimizing a conic quadratic objective over polyhedra,” identified with DOI https://www.doi.org/10.5281/zenodo.1489153. Using the code requires having Java and CPLEX software installed on the computer. We now provide a brief description of the contents of the software, as well as a quick guide on how to run the experiments described in the paper.

1.1 Contents

The main directory contains two scripts, generateScript.bat and generateScript.sh, for running the code in Windows and Linux, respectively. The rest of the key directories are:

- \(\texttt{dist}\): Contains the executable jar file, LagrangeanConic.jar, for running the code. Also contains a lib directory with required libraries.

- \(\texttt{doc}\): Javadoc.

- \(\texttt{results}\): Directory where the results are stored.

- \(\texttt{src}\): Directory with the source code. Subdirectory src/cplex contains the codes corresponding to the proposed methodology. Specifically,

  - \(\texttt{QuadraticSolver.java}\): Code for Algorithm 1.

  - \(\texttt{QuadraticSolverCoordinateLP.java}\): Algorithm 1 with \(t_0=\infty \).

  - \(\texttt{QuadraticSolverBisection.java}\): Code for Algorithm 2.

  - \(\texttt{ConicSolver.java}\): Code for solving with CPLEX default algorithms.

  - \(\texttt{BranchAndBound.java}\): Code for the branch-and-bound algorithm.

Subdirectory src/instances contains code pertaining to the generation of the instances, subdirectory src/results contains code for processing the results, and subdirectory src/interfaces contains auxiliary code.

1.2 Running the experiments

The file README.txt in the main directory contains detailed instructions for running experiments. We now present a quick summary on how to run the experiments.

The files generateScript.bat and generateScript.sh contain two commands:

(1) java -cp ./dist/LagrangeanConic.jar instances.FileGeneratorLinux #path

(2) ./run.bat (or ./run.sh)

In command (1), # should be replaced with a number between 1 and 6, and path should be replaced with the path to the CPLEX .dll file. Executing command (1) creates another script, called run.bat or run.sh, for running all the experiments corresponding to Table # in the paper. For example, if # is replaced with 1, then the command creates a file that, when executed, runs the code 24 times, once for each combination of precision and algorithm, and stores the results in the directory results. Command (2) simply executes the newly created file.

Cite this article

Atamtürk, A., Gómez, A. Simplex QP-based methods for minimizing a conic quadratic objective over polyhedra. Math. Prog. Comp. 11, 311–340 (2019). https://doi.org/10.1007/s12532-018-0152-7

DOI: https://doi.org/10.1007/s12532-018-0152-7

Keywords

- Simplex method

- Conic quadratic optimization

- Quadratic programming

- Warm starts

- Value-at-risk minimization

- Portfolio optimization

- Robust optimization