Abstract

This article revisits the problem of Bayesian shape-restricted inference in light of a recently developed approximate Gaussian process that admits an equivalent formulation of the shape constraints in terms of the basis coefficients. We propose a strategy to efficiently sample from the resulting constrained posterior by absorbing a smooth relaxation of the constraint into the likelihood and using circulant embedding techniques to sample from the unconstrained modified prior. We additionally take care to mitigate the computational cost of updating hyperparameters within the covariance kernel of the Gaussian process. The developed algorithm is shown to be accurate and highly efficient in simulated and real data examples.

Notes

Here, \(f'\) should be interpreted as the anti-derivative of \(f''\), i.e., \(f'(x) = \int _0^x f''(t) dt\).

References

Bardenet, R., Doucet, A., Holmes, C.: On Markov chain Monte Carlo methods for tall data. J. Mach. Learn. Res. 18(1), 1515–1557 (2017)

Bernauer, J.C., A1 Collaboration: High-precision determination of the electric and magnetic form factors of the proton. In: AIP Conference Proceedings, vol. 1388. AIP, pp. 128–134 (2011)

Bernauer, J.C., Pohl, R.: The proton radius problem. Sci. Am. 310(2), 32–39 (2014)

Bernauer, J.C., Achenbach, P., Ayerbe Gayoso, C., Böhm, R., Bosnar, D., Debenjak, L., Distler, M.O., Doria, L., Esser, A., Fonvieille, H., et al.: Bernauer et al. reply. Phys. Rev. Lett. 107(11), 119102 (2011)

Bernauer, J.C., Distler, M.O., Friedrich, J., Walcher, T., Achenbach, P., Ayerbe Gayoso, C., Böhm, R., Bosnar, D., Debenjak, L., Doria, L., et al.: Electric and magnetic form factors of the proton. Phys. Rev. C 90(1), 015206 (2014)

Borkowski, F., Simon, G.G., Walther, V.H., Wendling, R.D.: On the determination of the proton rms-radius from electron scattering data. Zeitschrift für Physik A Atoms and Nuclei 275(1), 29–31 (1975)

Bornkamp, B., Ickstadt, K.: Bayesian nonparametric estimation of continuous monotone functions with applications to dose–response analysis. Biometrics 65(1), 198–205 (2009)

Botev, Z.I.: The normal law under linear restrictions: simulation and estimation via minimax tilting. J. R. Stat. Soc. Ser. B (Stat. Methodol.) 79(1), 125–148 (2017)

Brezger, A., Steiner, W.J.: Monotonic regression based on Bayesian p-splines: an application to estimating price response functions from store-level scanner data. J. Bus. Econ. Stat. 26(1), 90–104 (2008)

Cai, B., Dunson, D.B.: Bayesian multivariate isotonic regression splines: applications to carcinogenicity studies. J. Am. Stat. Assoc. 102(480), 1158–1171 (2007)

Carlson, C.E.: The proton radius puzzle. Prog. Part. Nucl. Phys. 82, 59–77 (2015)

Chen, H., Yao, D.D.: Dynamic scheduling of a multiclass fluid network. Oper. Res. 41(6), 1104–1115 (1993)

Cotter, S.L., Roberts, G.O., Stuart, A.M., White, D.: MCMC methods for functions: modifying old algorithms to make them faster. Stat. Sci. 28(3), 424–446 (2013)

Curtis, S.M., Ghosh, S.K.: A variable selection approach to monotonic regression with Bernstein polynomials. J. Appl. Stat. 38(5), 961–976 (2011)

Damien, P., Walker, S.G.: Sampling truncated normal, beta, and gamma densities. J. Comput. Graph. Stat. 10(2), 206–215 (2001)

Geweke, J.: Efficient simulation from the multivariate normal and Student-t distributions subject to linear constraints and the evaluation of constraint probabilities. In: Computing Science and Statistics: Proceedings of the 23rd Symposium on the Interface, pp. 571–578. Interface Foundation of North America, Inc., Fairfax, Virginia (1991)

Goldenshluger, A., Zeevi, A.: Recovering convex boundaries from blurred and noisy observations. Ann. Stat. 34(3), 1375–1394 (2006)

Golub, G.H., van Loan, C.F.: Matrix Computations, 3rd edn. Johns Hopkins University Press, Baltimore (1996)

Higinbotham, D.W., Kabir, A.A., Lin, V., Meekins, D., Norum, B., Sawatzky, B.: Proton radius from electron scattering data. Phys. Rev. C 93(5), 055207 (2016)

Johndrow, J.E., Orenstein, P., Bhattacharya, A.: Scalable MCMC for Bayes shrinkage priors. arXiv preprint arXiv:1705.00841 (2017)

Kelly, C., Rice, J.: Monotone smoothing with application to dose–response curves and the assessment of synergism. Biometrics 46(4), 1071–1085 (1990)

Kotecha, J.H., Djuric, P.M.: Gibbs sampling approach for generation of truncated multivariate Gaussian random variables. In: The Proceedings of the International Conference on Acoustics, Speech, and Signal Processing. IEEE (1999)

Lele, A.S., Kulkarni, S.R., Willsky, A.S.: Convex-polygon estimation from support-line measurements and applications to target reconstruction from laser-radar data. J. Opt. Soc. Am. A 9(10), 1693–1714 (1992)

Lin, L., Dunson, D.B.: Bayesian monotone regression using Gaussian process projection. Biometrika 101(2), 303–317 (2014)

Maatouk, H., Bay, X.: Gaussian process emulators for computer experiments with inequality constraints. Math. Geosci. 49(5), 557–582 (2017)

Meyer, R.F., Pratt, J.W.: The consistent assessment and fairing of preference functions. IEEE Trans. Syst. Sci. Cybern. 4(3), 270–278 (1968)

Meyer, M.C., Hackstadt, A.J., Hoeting, J.A.: Bayesian estimation and inference for generalised partial linear models using shape-restricted splines. J. Nonparametr. Stat. 23(4), 867–884 (2011)

Murray, I., Prescott Adams, R., MacKay, D.J.C.: Elliptical slice sampling. J. Mach. Learn. Res. W&CP 9, 541–548 (2010)

Neal, R.M.: Regression and classification using Gaussian process priors. In: Bernardo, J.M. et al. (eds.) Bayesian statistics, vol. 6, pp. 475–501 (1999)

Neelon, B., Dunson, D.B.: Bayesian isotonic regression and trend analysis. Biometrics 60(2), 398–406 (2004)

Nicosia, G., Rinaudo, S., Sciacca, E.: An evolutionary algorithm-based approach to robust analog circuit design using constrained multi-objective optimization. Knowl. Based Syst. 21(3), 175–183 (2008)

Pakman, A., Paninski, L.: Exact Hamiltonian Monte Carlo for truncated multivariate Gaussians. J. Comput. Graph. Stat. 23(2), 518–542 (2014)

Pohl, R., Antognini, A., Nez, F., Amaro, F.D., Biraben, F., Cardoso, J.M.R., Covita, D.S., Dax, A., Dhawan, S., Fernandes, L.M.P., et al.: The size of the proton. Nature 466(7303), 213–216 (2010)

Prince, J.L., Willsky, A.S.: Constrained sinogram restoration for limited-angle tomography. Opt. Eng. 29(5), 535–545 (1990)

Reboul, L.: Estimation of a function under shape restrictions. Applications to reliability. Ann. Stat. 33(3), 1330–1356 (2005)

Riihimäki, J., Vehtari, A.: Gaussian processes with monotonicity information. In: Proceedings of the Thirteenth International Conference on Artificial Intelligence and Statistics, pp. 645–652 (2010)

Rodriguez-Yam, G., Davis, R.A., Scharf, L.L.: Efficient Gibbs sampling of truncated multivariate normal with application to constrained linear regression. Technical Report, Department of Statistics, Columbia University (2004)

Shively, T.S., Walker, S.G., Damien, P.: Nonparametric function estimation subject to monotonicity, convexity and other shape constraints. J. Econom. 161(2), 166–181 (2011)

Wood, A.T.A., Chan, G.: Simulation of stationary Gaussian processes in \([0, 1]^d\). J. Comput. Graph. Stat. 3(4), 409–432 (1994)

Yan, X., Higinbotham, D.W., Dutta, D., Gao, H., Gasparian, A., Khandaker, M.A., Liyanage, N., Pasyuk, E., Peng, C., Xiong, W.: Robust extraction of the proton charge radius from electron–proton scattering data. Phys. Rev. C 98(2), 025204 (2018)

Zhou, S., Giulani, P., Piekarewicz, J., Bhattacharya, A., Pati, D.: Reexamining the proton-radius problem using constrained Gaussian processes. Phys. Rev. C 99(5), 055202 (2019)

Acknowledgements

We thank Pablo Giulani for sharing the proton dataset and many insightful discussions. We also thank Shuang Zhou for sharing her R code for our comparison in Sect. 4.

Funding

Funding was provided by National Science Foundation (Grant Nos. DMS 1613156, DMS 1653404).

Appendices

1.1 Full conditionals

Consider model (9) and the prior specified in Sect. 3.1. The joint distribution is given by:

Then,

\( \xi \mid Y, \xi _0, \sigma ^2, \tau ^2 \) is a multivariate Gaussian truncated to the constraint set \({\mathcal {C}}_{\xi }\), i.e., its density carries the indicator \(\mathbb {1}_{{\mathcal {C}}_{\xi }}(\xi )\).

\( \xi _0 \mid Y, \xi , \sigma ^2, \tau ^2 \sim {\mathcal {N}}({\bar{Y}}^*, \sigma ^2/n)\), where \({\bar{Y}}^*\) is the average of the components of \(Y^* = Y - \Psi \xi \).

\( \sigma ^2 \mid Y, \xi _0, \xi , \tau ^2 \sim {\mathcal {I}} {\mathcal {G}} \big ( n/2, \Vert Y - \xi _0 {\mathrm {1}}_n - \Psi \xi \Vert ^2 /2 \big )\)

\( \tau ^2 \mid Y, \xi _0, \xi , \sigma ^2 \sim {\mathcal {I}} {\mathcal {G}} \big ((N+1)/2, \xi ^{\mathrm {\scriptscriptstyle T}}K^{-1} \xi /2 \big )\)
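As a consistency check, the four conditionals above can be read off from a joint density of the following form. This is a sketch under assumptions inferred from the conditionals themselves rather than restated from Sect. 3.1: a flat prior \(\pi (\xi _0) \propto 1\), improper priors \(\pi (\sigma ^2) \propto 1/\sigma ^2\) and \(\pi (\tau ^2) \propto 1/\tau ^2\), and \(\xi \in {\mathbb {R}}^{N+1}\) with a \({\mathcal {N}}(0, \tau ^2 K)\) prior restricted to \({\mathcal {C}}_{\xi }\).

\[
\pi (Y, \xi _0, \xi , \sigma ^2, \tau ^2) \propto (\sigma ^2)^{-\frac{n}{2} - 1} \exp \Big \{ -\frac{\Vert Y - \xi _0 {\mathrm {1}}_n - \Psi \xi \Vert ^2}{2 \sigma ^2} \Big \} \, (\tau ^2)^{-\frac{N+1}{2} - 1} \exp \Big \{ -\frac{\xi ^{\mathrm {\scriptscriptstyle T}}K^{-1} \xi }{2 \tau ^2} \Big \} \, \mathbb {1}_{{\mathcal {C}}_{\xi }}(\xi ).
\]

Viewed as a function of \(\sigma ^2\) alone, the right-hand side is an inverse-gamma kernel with shape \(n/2\) and scale \(\Vert Y - \xi _0 {\mathrm {1}}_n - \Psi \xi \Vert ^2/2\); viewed as a function of \(\tau ^2\) alone, it is an inverse-gamma kernel with shape \((N+1)/2\) and scale \(\xi ^{\mathrm {\scriptscriptstyle T}}K^{-1} \xi /2\). Both agree with the conditionals listed above.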

Again, consider model (10) and the prior specified in Sect. 3.2. The joint distribution is given by:

Then,

\( \xi \mid Y, \xi _0, \xi _*, \sigma ^2, \tau ^2 \) is a multivariate Gaussian truncated to the constraint set \({\mathcal {C}}_{\xi }\), i.e., its density carries the indicator \(\mathbb {1}_{{\mathcal {C}}_{\xi }}(\xi )\).

\( \xi _0 \mid Y, \xi _*, \xi , \sigma ^2, \tau ^2 \sim {\mathcal {N}}({\bar{Y}}^*, \sigma ^2/n)\), where \({\bar{Y}}^*\) is the average of the components of \(Y^* = Y - \xi _* X - \Phi \xi \).

\( \xi _* \mid Y, \xi _0, \xi , \sigma ^2, \tau ^2 \sim {\mathcal {N}}( \sum _{i=1}^{n} x_i y_i^{**}/ \sum _{i=1}^{n} x_i^2, \sigma ^2/ \sum _{i=1}^{n} x_i^2)\), where \(Y^{**} = Y - \xi _0 {\mathrm {1}}_n - \Phi \xi \).

\( \sigma ^2 \mid Y, \xi _0, \xi _*, \xi , \tau ^2 \sim {\mathcal {I}} {\mathcal {G}} \big ( n/2, \Vert Y - \xi _0 {\mathrm {1}}_n - \xi _* X - \Phi \xi \Vert ^2 /2 \big )\)

\( \tau ^2 \mid Y, \xi _0, \xi _*, \xi , \sigma ^2 \sim {\mathcal {I}} {\mathcal {G}} \big ( (N+1)/2, \xi ^{\mathrm {\scriptscriptstyle T}}K^{-1} \xi /2 \big )\)

Algorithm 1 was used to draw samples from the full conditional distribution of \(\xi \), while sampling from the full conditionals of \(\xi _0\), \(\xi _*\), \(\sigma ^2\) and \(\tau ^2\) is routine.
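The routine updates can be sketched as follows. This is an illustrative Python translation, not the authors' R implementation: the function name `update_scalars` and its argument names are hypothetical, \(\xi \) is held at its current value (its own update via Algorithm 1 is not shown), and `K_inv` is assumed to be a precomputed \(K^{-1}\). Inverse-gamma draws are obtained as reciprocals of gamma draws.

```python
import numpy as np

def update_scalars(y, x, Phi, xi, K_inv, xi_star, sigma2, rng):
    """One sweep over xi_0, xi_*, sigma^2, tau^2 for the second model above.

    Hypothetical helper: names and argument order are illustrative only.
    """
    n = y.size
    fitted = Phi @ xi

    # xi_0 | rest ~ N(mean(Y - xi_* X - Phi xi), sigma^2 / n)
    y_star = y - xi_star * x - fitted
    xi0 = rng.normal(y_star.mean(), np.sqrt(sigma2 / n))

    # xi_* | rest ~ N(sum(x * y**) / sum(x^2), sigma^2 / sum(x^2))
    y_2star = y - xi0 - fitted
    sxx = np.sum(x ** 2)
    xi_star = rng.normal(np.sum(x * y_2star) / sxx, np.sqrt(sigma2 / sxx))

    # sigma^2 | rest ~ IG(n/2, ||residual||^2 / 2); IG(a, b) = 1 / Gamma(a, rate=b)
    resid = y - xi0 - xi_star * x - fitted
    sigma2 = 1.0 / rng.gamma(shape=n / 2, scale=2.0 / (resid @ resid))

    # tau^2 | rest ~ IG((N+1)/2, xi' K^{-1} xi / 2), with xi of length N+1
    quad = xi @ K_inv @ xi
    tau2 = 1.0 / rng.gamma(shape=xi.size / 2, scale=2.0 / quad)

    return xi0, xi_star, sigma2, tau2
```

Each update conditions on the most recently drawn values of the other parameters, so repeated calls (interleaved with a draw of \(\xi \)) form a Gibbs sweep.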

1.2 Effective sample sizes for the monotone example in Sect. 3.3

We provide some evidence on the mixing behavior of our Gibbs sampler by computing the effective sample size of the estimated function value at 75 different test points. The effective sample size quantifies the autocorrelation in a Markov chain: roughly, it is the number of independent samples that would carry the same information as the correlated MCMC path. From an algorithmic robustness perspective, it is desirable that the effective sample sizes remain stable as the sample size and/or dimension increases, and this is the aspect we investigate here. We only report results for the monotonicity constraint; similar behavior is seen for the convexity constraint.

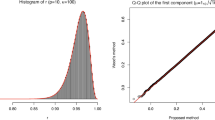

We consider 20 equally spaced values of the sample size n between 50 and 1000. Note that the dimension of \(\xi \) grows from 25 to 500 as a result. For each value of n, we run the Gibbs sampler for 12,000 iterations from each of 5 randomly chosen initializations. For each starting point, we record the effective sample size at each of the 75 test points after discarding the first 2,000 iterations as burn-in, and average these values over the initializations. Figure 4 shows boxplots of the averaged effective sample sizes across n, which remain quite stable as n grows.
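For concreteness, one common way to estimate the effective sample size of a scalar chain is sketched below. The estimator actually used for Figure 4 is not specified in the text, so this is an assumption: it uses \(n / (1 + 2 \sum _k \rho _k)\), with the sum over sample autocorrelations truncated at the first non-positive lag. The function name is hypothetical.

```python
import numpy as np

def effective_sample_size(chain):
    """ESS = n / (1 + 2 * sum of leading positive autocorrelations)."""
    x = np.asarray(chain, dtype=float)
    n = x.size
    x = x - x.mean()

    # Sample autocovariances at lags 0..n-1 via a zero-padded FFT.
    f = np.fft.rfft(x, n=2 * n)
    acov = np.fft.irfft(f * np.conj(f))[:n] / n
    if acov[0] == 0.0:
        return float(n)  # constant chain: nothing to correct
    rho = acov / acov[0]

    # Accumulate autocorrelations until the first non-positive one.
    s = 0.0
    for k in range(1, n):
        if rho[k] <= 0.0:
            break
        s += rho[k]
    return n / (1.0 + 2.0 * s)
```

With posterior draws of the function stored as an array of shape (iterations, test points), applying this function to each column after burn-in gives one effective sample size per test point, which can then be averaged over initializations as described above.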

1.3 R code

We used R to implement Algorithm 1 and Durbin’s recursion for the inverse of the Cholesky factor, with the computation of the inverse Cholesky factor optimized using Rcpp. We provide our code for the monotone and convex function estimation procedures of Sects. 3.1 and 3.2 on the GitHub page mentioned in Sect. 1. There are six functions that perform the MCMC sampling for monotone increasing, monotone decreasing, and convex increasing functions, each with and without hyperparameter updates. Each of these main functions takes x and y as inputs along with other available options, and returns posterior samples of \(\xi _0\), \(\xi _*\), \(\xi \), \(\sigma \), \(\tau \) and f along with the posterior mean and symmetric 95% credible interval of f on a user-specified grid. A detailed description of the available input and output options for each function can be found within the function files.
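To illustrate the idea behind Durbin’s recursion, the following Python sketch computes the inverse Cholesky factor of a symmetric positive definite Toeplitz matrix directly from its first row, in \(O(n^2)\) operations and without forming or factorizing the matrix. It is not the authors' R/Rcpp code: the function name is hypothetical, and the Toeplitz structure is an assumption (it holds, for example, for a stationary kernel evaluated on an equally spaced grid).

```python
import numpy as np

def inv_chol_toeplitz(r):
    """Return L^{-1}, where K = L L^T is the Toeplitz matrix with first row r.

    Row m of L^{-1} holds the scaled coefficients of the order-m
    one-step prediction error produced by the Durbin recursion.
    """
    r = np.asarray(r, dtype=float)
    n = r.size
    L_inv = np.zeros((n, n))

    a = np.zeros(0)   # prediction coefficients of the current order
    v = r[0]          # current prediction-error variance
    L_inv[0, 0] = 1.0 / np.sqrt(v)

    for m in range(1, n):
        # Reflection coefficient for order m.
        kappa = (r[m] - a @ r[m - 1:0:-1]) / v
        # Order update of the coefficients and the error variance.
        a = np.concatenate([a - kappa * a[::-1], [kappa]])
        v = v * (1.0 - kappa ** 2)
        # Innovation: e_m = X_m - sum_j a_j X_{m-j}, scaled to unit variance.
        L_inv[m, :m] = -a[::-1] / np.sqrt(v)
        L_inv[m, m] = 1.0 / np.sqrt(v)
    return L_inv

# Usage: first row of an exponential-kernel covariance on an equally spaced grid.
r = np.exp(-0.5 * np.arange(6))
L_inv = inv_chol_toeplitz(r)
```

Because the rows of the result are scaled innovations, multiplying it by a vector with covariance K yields uncorrelated unit-variance components, which is the property a sampler needs from the inverse Cholesky factor.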

Ray, P., Pati, D. & Bhattacharya, A. Efficient Bayesian shape-restricted function estimation with constrained Gaussian process priors. Stat Comput 30, 839–853 (2020). https://doi.org/10.1007/s11222-020-09922-0

DOI: https://doi.org/10.1007/s11222-020-09922-0