Abstract

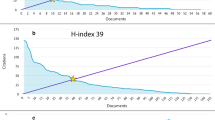

The h-index was proposed as an easy way to assess a researcher’s performance with a single number. However, by using only this number, we lose significant information about the distribution of citations per article in an author’s publication list. In this article, we study an author’s citation curve and define two new areas related to this curve. We call these “penalty areas”, since the greater they are, the more an author’s performance is penalized. We exploit these areas to establish new indices, namely the Perfectionism Index (PI) and the eXtreme Perfectionism Index (XPI), which aim to classify researchers into two distinct categories: “influentials” and “mass producers”. The former produce articles that are (almost all) of high impact, while the latter produce many articles with moderate or no impact at all. Using data from Microsoft Academic Service, we evaluate the merits mainly of PI as a useful tool for scientometric studies. We establish its effectiveness in separating scientists into influentials and mass producers, and we demonstrate its robustness against self-citations and its lack of correlation with traditional indices. Finally, we apply PI to rank prominent scientists in the areas of databases, networks and multimedia, showing that the index fulfills its design goal.

Notes

In the remainder of the article, for the sake of simplicity, we use the terms h-core and h-core-square interchangeably.

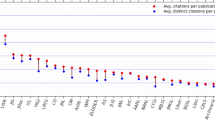

We selected authors with a relatively small number of publications and citations for better readability of the figures.

Usually, when a publication is cited only once or twice during its entire “life”, these citations are self-citations.

References

Alonso, S., Cabrerizo, F. J., Herrera-Viedma, E., & Herrera, F. (2009). h-index: A review focused in its variants, computation and standardization for different scientific fields. Journal of Informetrics, 3(4), 273–289.

Anderson, T. R., Hankin, R. K. S., & Killworth, P. D. (2008). Beyond the Durfee square: Enhancing the h-index to score total publication output. Scientometrics, 76, 577–588.

Basaras, P., Katsaros, D., & Tassiulas, L. (2013). Detecting influential spreaders in complex, dynamic networks. IEEE Computer, 46(4), 26–31.

Baum, J. A. C. (2012). The excess-tail ratio: Correcting Journal Impact Factors for Citation Quality. SSRN: http://ssrn.com/abstract=2038102.

Bornmann, L., Mutz, R., & Daniel, H. D. (2010). The h index research output measurement: Two approaches to enhance its accuracy. Journal of Informetrics, 4(3), 407–414.

Chen, D. Z., Huang, M. H., & Ye, F. Y. (2013). A probe into dynamic measures for h-core and h-tail. Journal of Informetrics, 7(1), 129–137.

Cole, S., & Cole, J. R. (1967). Scientific output and recognition—Study in operation of reward system in science. American Sociological Review, 32(3), 377–390.

Cormode, G., Ma, Q., Muthukrishnan, S., & Thompson, B. (2013). Socializing the \(h\)-index. Journal of Informetrics, 7(3), 718–721.

Dorta-González, P., & Dorta-González, M. I. (2011). Central indexes to the citation distribution: A complement to the h-index. Scientometrics, 88(3), 729–745.

Egghe, L. (2006). Theory and practice of the \(g\)-index. Scientometrics, 69(1), 131–152.

Feist, G. J. (1997). Quantity, quality, and depth of research as influences on scientific eminence: Is quantity most important? Creativity Research Journal, 10, 325–335.

Franceschini, F., & Maisano, D. (2010). The citation triad: An overview of a scientist’s publication output based on Ferrers diagrams. Journal of Informetrics, 4(4), 503–511.

García-Pérez, M. A. (2012). An extension of the \(h\)-index that covers the tail and the top of the citation curve and allows ranking researchers with similar \(h\). Journal of Informetrics, 6(4), 689–699.

Hirsch, J. E. (2005). An index to quantify an individual’s scientific research output. Proceedings of the National Academy of Sciences, 102(46), 16569–16572.

Hirsch, J. E. (2007). Does the h index have predictive power? Proceedings of the National Academy of Sciences, 104(49), 19193–19198.

Hirsch, J. E. (2010). An index to quantify an individual’s scientific research output that takes into account the effect of multiple coauthorship. Scientometrics, 85(3), 741–754.

Jin, B., Liang, L., Rousseau, R., & Egghe, L. (2007). The R- and AR-indices: Complementing the h-index. Chinese Science Bulletin, 52(6), 855–863. doi:10.1007/s11434-007-0145-9.

Katsaros, D., Akritidis, L., & Bozanis, P. (2009). The \(f\) index: Quantifying the impact of coterminal citations on scientists’ ranking. Journal of the American Society for Information Science and Technology, 60(5), 1051–1056.

Kuan, C. H., Huang, H. H., & Chen, D. Z. (2011). Positioning research and innovation performance using shape centroids of h-core and h-tail. Journal of Informetrics, 5(4), 515–528.

Liu, J. Q., Rousseau, R., Wang, M. S., & Ye, F. Y. (2013). Ratios of h-cores, h-tails and uncited sources in sets of scientific papers and technical patents. Journal of Informetrics, 7(1), 190–197.

Rosenberg, M. S. (2011). A biologist’s guide to impact factors. Arizona: Tech. rep., Arizona State University.

Rousseau, R. (2006). New developments related to the Hirsch index. Science Focus, 1(4), 23–25.

Schreiber, M. (2007). Self-citation corrections for the Hirsch index. Europhysics Letters, 78(3), 30002.

Sidiropoulos, A., Katsaros, D., & Manolopoulos, Y. (2007). Generalized Hirsch \(h\)-index for disclosing latent facts in citation networks. Scientometrics, 72(2), 253–280.

Spruit, H. C. (2012). The relative significance of the \(h\)-index. Tech. rep., http://arxiv.org/abs/1201.5476v1.

Vinkler, P. (2009). The \(\pi\)-index: A new indicator for assessing scientific impact. Journal of Information Science, 35(5), 602–612.

Vinkler, P. (2011). Application of the distribution of citations among publications in scientometric evaluations. Journal of the American Society for Information Science and Technology, 62(10), 1963–1978.

Woeginger, G. J. (2008). An axiomatic characterization of the Hirsch-index. Mathematical Social Sciences, 56, 224–232.

Ye, F. Y., & Leydesdorff, L. (2013). The “Academic Trace” of the performance matrix: A mathematical synthesis of the h-index and the Integrated Impact Indicator (I3). Tech. rep., http://arxiv.org/abs/1307.3616.

Ye, F. Y., & Rousseau, R. (2010). Probing the \(h\)-core: An investigation of the tail-core ratio for rank distributions. Scientometrics, 84(2), 431–439.

Zhang, C. T. (2009). The \(e\)-index, complementing the \(h\)-index for excess citations. PLoS One, 4(5), e5429.

Zhang, C. T. (2013a). The \(h'\)-index: Effectively improving the h-index based on the citation distribution. PLoS One, 8(4), e59912.

Zhang, C. T. (2013b). A novel triangle mapping technique to study the h-index based citation distribution. Nature Scientific Reports, 3(1023).

Acknowledgments

The authors wish to thank Professor Sofia Kouidou, Vice-rector of the Aristotle University of Thessaloniki, for stating the basic question that led to the present research.

The authors also wish to thank Professor Vana Doufexi for reviewing and editing the final release of this article.

Microsoft’s offer to provide their database API free of charge is appreciated.

Finally, D. Katsaros acknowledges the support of the Research Committee of the University of Thessaly through the project “Web observatory for research activities in the University of Thessaly”.

Additional information

Note that PI is not related to, and should not be confused with, the term Perfect Index (Woeginger in Math Soc Sci 56:224–232, 2008).

About this article

Cite this article

Sidiropoulos, A., Katsaros, D. & Manolopoulos, Y. Ranking and identifying influential scientists versus mass producers by the Perfectionism Index. Scientometrics 103, 1–31 (2015). https://doi.org/10.1007/s11192-014-1515-0

DOI: https://doi.org/10.1007/s11192-014-1515-0