Abstract

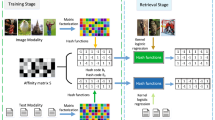

Cross-modal hashing has recently gained significant popularity for facilitating multimedia retrieval across different modalities. Because acquiring large-scale labeled training data is very labor intensive, most supervised cross-modal hashing methods are less practical for real applications. For settings with limited labels, this paper presents a novel Semi-Supervised Discrete Hashing (SSDH) method for efficient cross-modal retrieval. Most semi-supervised cross-modal hashing works need to predict the labels of unlabeled data. In contrast, the proposed approach groups the labeled and unlabeled data together and exploits the informative unlabeled data to promote hash code learning directly. Specifically, SSDH uses relaxed hash representations to characterize each modality, and learns a semi-supervised semantic-preserving regularization that captures the semantic consistency between the heterogeneous modalities. Accordingly, an objective function is formulated to learn the hash representations, and an efficient optimization algorithm is designed to learn the hash codes of both labeled and unlabeled data. Without sacrificing retrieval performance, SSDH adapts to various kinds of retrieval tasks, i.e., unsupervised, semi-supervised and supervised. Experimental results against several competitive algorithms show the effectiveness of the proposed method and its superiority over state-of-the-art methods.
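The abstract describes SSDH only at a high level, and the paper's actual objective and optimization algorithm are not reproduced here. As a rough illustration of the general idea, the following NumPy sketch uses a simple hypothetical objective that is not the authors' formulation. Labeled and unlabeled items share one set of binary codes, and each modality gets a relaxed (real-valued) linear hash function. Only the labeled subset contributes a semantic term, and the codes are updated discretely rather than by rounding a continuous solution. Everything in the sketch is an assumption chosen for illustration: the function names, the ridge-regression hash functions `P1`/`P2`, the label-to-code map `R`, the parameters `alpha`, `lam` and `n_bits`, and the synthetic data.

```python
import numpy as np


def _ridge(target, inputs, lam):
    """Closed-form ridge fit: argmin_P ||target - P @ inputs||_F^2 + lam * ||P||_F^2."""
    d = inputs.shape[0]
    return np.linalg.solve(inputs @ inputs.T + lam * np.eye(d), inputs @ target.T).T


def _binarize(m):
    """Elementwise sign that maps 0 to +1, so every entry is in {-1, +1}."""
    return np.where(m >= 0, 1.0, -1.0)


def semi_supervised_cross_modal_hash(X1, X2, Y, n_bits=16, alpha=1.0, lam=1e-2,
                                     n_iter=20, seed=0):
    """Toy alternating minimisation (hypothetical objective, NOT the SSDH one).

    X1: (d1, n) image features; X2: (d2, n) text features. The first n_l
    columns are labeled, with one-hot labels Y of shape (c, n_l). Minimises
        sum_m ||B - P_m X_m||^2 + lam ||P_m||^2
        + alpha * (||B_l - R Y||^2 + lam ||R||^2),  B in {-1, +1}^(n_bits x n)
    """
    rng = np.random.default_rng(seed)
    n, n_l = X1.shape[1], Y.shape[1]
    B = _binarize(rng.standard_normal((n_bits, n)))  # shared codes: labeled + unlabeled
    history = []
    for _ in range(n_iter):
        # 1) Relaxed, real-valued hash function for each modality.
        P1 = _ridge(B, X1, lam)
        P2 = _ridge(B, X2, lam)
        # 2) Label-to-code map, fitted on the labeled subset only.
        R = _ridge(B[:, :n_l], Y, lam)
        # 3) Discrete code update: for binary B, ||B - A||^2 = const - 2 tr(B^T A),
        #    so the exact minimiser is the sign of the summed targets.
        target = P1 @ X1 + P2 @ X2
        target[:, :n_l] += alpha * (R @ Y)
        B = _binarize(target)
        obj = (np.linalg.norm(B - P1 @ X1) ** 2 + lam * np.linalg.norm(P1) ** 2
               + np.linalg.norm(B - P2 @ X2) ** 2 + lam * np.linalg.norm(P2) ** 2
               + alpha * (np.linalg.norm(B[:, :n_l] - R @ Y) ** 2
                          + lam * np.linalg.norm(R) ** 2))
        history.append(obj)
    return B, P1, P2, history


def hamming_rank(query_code, db_codes):
    """Rank database columns by Hamming distance to a {-1, +1} query code."""
    k = db_codes.shape[0]
    dist = (k - query_code @ db_codes) / 2  # for +/-1 codes: <q, b> = k - 2 * hamming
    return np.argsort(dist, kind="stable"), dist


# Synthetic demo: 200 paired items from 4 classes, only the first 40 labeled.
rng = np.random.default_rng(1)
n, n_l, c = 200, 40, 4
labels = rng.integers(0, c, size=n)
X1 = 3 * rng.standard_normal((32, c))[:, labels] + rng.standard_normal((32, n))
X2 = 3 * rng.standard_normal((20, c))[:, labels] + rng.standard_normal((20, n))
Y = np.eye(c)[:, labels[:n_l]]  # one-hot labels for the labeled subset
B, P1, P2, history = semi_supervised_cross_modal_hash(X1, X2, Y)

# Image-to-text query: hash image 0 with P1, rank all text items hashed with P2.
text_codes = _binarize(P2 @ X2)
order, dist = hamming_rank(_binarize(P1 @ X1[:, 0]), text_codes)
```

Each block update (projections, label map, codes) is solved exactly for this toy objective, so the recorded `history` should not increase. The retrieval step shows why hashing makes cross-modal search cheap: once both modalities are mapped to ±1 codes, ranking reduces to inner products, or XOR/popcount on packed bits.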

Acknowledgements

This work was supported by the National Science Foundation of China (Nos. 61673185, 61672444 and 61972167), the Quanzhou City Science & Technology Program of China (No. 2018C107R), the State Key Laboratory of Integrated Services Networks of Xidian University (No. ISN20-11), and the Promotion Program for Graduate Students in Scientific Research and Innovation Ability of Huaqiao University (No. 17013083010).

About this article

Cite this article

Wang, X., Liu, X., Peng, SJ. et al. Semi-supervised discrete hashing for efficient cross-modal retrieval. Multimed Tools Appl 79, 25335–25356 (2020). https://doi.org/10.1007/s11042-020-09195-9