Abstract

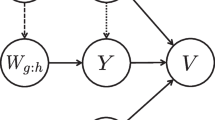

Research on multimedia content understanding requires a rigorous approach to deal with the complexity of the data. At the crux of this problem is how to handle multilevel data, whose structure exists at multiple scales and across data sources. A common example is modeling tags jointly with images to improve retrieval, classification and tag recommendation. Associated contextual observations, such as metadata, are rich and can be exploited for content analysis. A major challenge is the need for a principled approach that systematically incorporates associated media with the primary data source of interest. Taking a factor modeling approach, we propose a framework that can discover low-dimensional structures for a primary data source together with other associated information. We cast this task as a subspace learning problem under the framework of Bayesian nonparametrics, so that the subspace dimensionality and the number of clusters are learnt automatically from data instead of being set a priori. Using beta processes as the building block, we construct random measures in a hierarchical structure to generate multiple data sources and capture their shared statistical structure at the same time. The model parameters are inferred efficiently using a novel combination of Gibbs and slice sampling. We demonstrate the applicability of the proposed model in three applications: image retrieval, automatic tag recommendation and image classification. Experiments on two real-world datasets show that our approach outperforms various state-of-the-art related methods.

Notes

E.g. a discrete space of count data, \(\{0, 1, 2, \ldots\}^{M \times 1}\).

The \(k\)-th factor is considered active if \(\mathbf{Z}_{j}^{i',k} = 1\) for some \(i'\) and \(j\).

Cosine similarity is preferred because it is invariant to the scaling of the vectors being compared.

A similar dataset has been used before in [8].

The F1@N values for the baseline methods are taken from [6] for reference.
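The scale invariance mentioned in the note on cosine similarity can be checked numerically. The following is a minimal sketch, not code from the paper; the function name and the example vectors are hypothetical, and the invariance shown holds for positive scale factors.

```python
import numpy as np

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (hypothetical helper)."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    if denom == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return float(a @ b / denom)

# Illustrative subspace representations of a query and a database item.
query = np.array([0.2, 1.5, 0.0, 0.7])
item = np.array([0.1, 1.2, 0.3, 0.9])

sim = cosine_similarity(query, item)
# Rescaling either vector by a positive constant leaves the score unchanged.
sim_scaled = cosine_similarity(10.0 * query, 0.5 * item)
```

A Euclidean distance between the same two vectors would change under this rescaling, which is one reason to prefer the cosine score when the magnitudes of the representations are not meaningful.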

References

Barnard K, Duygulu P, Forsyth D, De Freitas N, Blei DM, Jordan MI (2003) Matching words and pictures. J Mach Learn Res 3:1107–1135

Blei DM, Jordan MI (2003) Modeling annotated data. In: Proceedings of the 26th annual international ACM SIGIR conference on research and development in information retrieval. ACM, pp 127–134

Blei DM, McAuliffe JD (2007) Supervised topic models. In: Advances in neural information processing systems

Blei DM, Ng AY, Jordan MI (2003) Latent Dirichlet allocation. J Mach Learn Res 3:993–1022

Cao B, Pan S, Zhang Y, Yeung D, Yang Q (2010) Adaptive transfer learning. In: Proceedings of the 24th AAAI conference on artificial intelligence

Chen N, Zhu J, Sun F, Xing E (2012) Large-margin predictive latent subspace learning for multi-view data analysis. IEEE Trans Pattern Anal Mach Intell

Chua T, Tang J, Hong R, Li H, Luo Z, Zheng Y (2009) NUS-WIDE: A real-world web image database from National University of Singapore. In: CIVR, pp 48:1–48:9

Dunson D, Park J (2008) Kernel stick-breaking processes. Biometrika 95(2):307–323

Ferguson T (1973) A Bayesian analysis of some nonparametric problems. Ann Stat 1(2):209–230

Ferrari V, Tuytelaars T, Van Gool L (2004) Integrating multiple model views for object recognition. In: Computer vision and pattern recognition. IEEE, pp 105–112

Gilks W, Richardson S, Spiegelhalter D (1995) Markov chain Monte Carlo in practice: Interdisciplinary statistics, vol 2. Chapman & Hall/CRC, London, UK

Gupta S, Phung D, Venkatesh S (2012) A Bayesian nonparametric joint factor model for learning shared and individual subspaces from multiple data sources. In: Proceedings of 12th SIAM international conference on data mining, pp 200–211

Gupta S, Phung D, Venkatesh S (2012) A slice sampler for restricted hierarchical beta process with applications to shared subspace learning. In: Uncertainty in artificial intelligence, pp 316–325

Hjort N (1990) Nonparametric Bayes estimators based on beta processes in models for life history data. Ann Stat 18(3):1259–1294

Nikolopoulos S, Zafeiriou S, Patras I, Kompatsiaris I (2012) High order pLSA for indexing tagged images. Signal Process

Oliva A, Torralba A (2001) Modeling the shape of the scene: A holistic representation of the spatial envelope. Int J Comput Vis 42(3):145–175

Rasiwasia N, Costa Pereira J, Coviello E, Doyle G, Lanckriet GR, Levy R, Vasconcelos N (2010) A new approach to cross-modal multimedia retrieval. In: Proceedings of the international conference on multimedia. ACM, pp 251–260

Russell BC, Torralba A, Murphy KP, Freeman WT (2008) LabelMe: A database and web-based tool for image annotation. Int J Comput Vis 77(1):157–173

Shen Y, Fan J (2010) Leveraging loosely-tagged images and inter-object correlations for tag recommendation. In: Proceedings of the international conference on multimedia. ACM, pp 5–14

Teh Y, Görür D, Ghahramani Z (2007) Stick-breaking construction for the Indian buffet process. J Mach Learn Res - Proc Track 2:556–563

Thibaux R, Jordan M (2007) Hierarchical beta processes and the Indian buffet process. J Mach Learn Res - Proc Track 2:564–571

Vidal R (2011) Subspace clustering. IEEE Signal Proc Mag 28(2):52–68

Wang C, Blei D, Li F (2009) Simultaneous image classification and annotation. In: Computer vision and pattern recognition. IEEE, pp 1903–1910

Wu X, Zhang L, Yu Y (2006) Exploring social annotations for the semantic web. In: Proceedings of the international conference on world wide web. ACM, pp 417–426

Xing E, Yan R, Hauptmann A (2005) Mining associated text and images with dual-wing harmoniums. In: Uncertainty in artificial intelligence, pp 633–641

Yang J, Liu Y, Ping E, Hauptmann A (2007) Harmonium models for semantic video representation and classification. In: SIAM conference on data mining, pp 1–12

Appendix

Sampling \(u_{i}\)

This section provides details for sampling \(u_{i}\) when the primary medium has real-valued features and the associated media have categorical features. The primary and associated media features are modeled using Gaussian and categorical distributions, respectively. This is the model assumed throughout our experiments on real datasets.

Because of the Dirichlet process prior, the number of groups \(J\) can increase or decrease with the data. For the existing groups, i.e. for \(u_{i} = 1, \ldots, J\), the conditional Gibbs posterior of \(u_{i}\) is given as

where \(a_{\psi}\) comes from \(\psi_{j} \sim \mathrm{Dirichlet}(a_{\psi})\). The number \(d_{l}^{i}\) is the frequency of the \(l\)-th feature and \(n_{c_{i}}\) is the total number of features in the \(i\)-th item of the associated medium.
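The Dirichlet hyperparameter, the per-feature frequencies and the total feature count are the ingredients of a collapsed Dirichlet-categorical predictive. As a hedged illustration only, the sketch below computes the standard textbook form of that predictive for one item given the feature counts already assigned to a group. It assumes a symmetric Dirichlet prior and is not claimed to be the exact expression used in the paper; the function name and the `group_counts` argument are hypothetical.

```python
from math import lgamma, exp

def log_dirichlet_categorical_predictive(d_i, group_counts, a_psi):
    """Log probability of an item's feature sequence under a collapsed
    Dirichlet-categorical model (hypothetical helper).

    d_i          : frequency of each feature in the item
    group_counts : summed feature frequencies of the other items in the group
                   (all zeros for a new, empty group)
    a_psi        : symmetric Dirichlet hyperparameter (assumed scalar)
    """
    if len(d_i) != len(group_counts):
        raise ValueError("d_i and group_counts must have the same length")
    # Dirichlet parameters after absorbing the other items of the group.
    alpha = [a_psi + c for c in group_counts]
    n_i = sum(d_i)          # total number of features in the item
    alpha_sum = sum(alpha)
    logp = lgamma(alpha_sum) - lgamma(alpha_sum + n_i)
    for a_l, d_l in zip(alpha, d_i):
        logp += lgamma(a_l + d_l) - lgamma(a_l)
    return logp

# Illustrative values: three possible features, a_psi = 1.
counts_in_group = [2, 0, 1]
p_first = exp(log_dirichlet_categorical_predictive([1, 0, 0], counts_in_group, 1.0))
p_new_group = exp(log_dirichlet_categorical_predictive([1, 0, 0], [0, 0, 0], 1.0))
```

For an item with a single observed feature the expression reduces to the familiar ratio of the smoothed count of that feature to the smoothed total, which gives a quick sanity check on any implementation.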

For a new group, i.e. when \(u_{i} = J + 1\), the conditional Gibbs posterior of \(u_{i}\) is given as

In the above expressions, the predictive distribution of \(\mathbf{Z}^{i,:}\) is given as

where \(f_{u_{i}}^{k} \triangleq \sum_{i'} \mathbf{Z}_{u_{i}}^{i',k}\). The predictive distribution of \(\mathbf{W}^{i,:}\) is given as

where \(D\) denotes the normalization constant of the Normal-Wishart distribution, while \(s\), \(m\), \(\nu\) and \(\Delta\) are the parameters characterizing the distribution. Using the parameter set \((s_{0}, m_{0}, \nu_{0}, \Delta_{0})\) for the prior distribution, the posterior set \(\left(s_{u_{i}}^{-i}, m_{u_{i}}^{-i}, \nu_{u_{i}}^{-i}, \Delta_{u_{i}}^{-i}\right)\) is given as \(s_{u_{i}}^{-i} = s_{0} + N_{u_{i}}^{-i}\), \(m_{u_{i}}^{-i} = \frac{s_{0} m_{0} + \sum_{i' \in S_{-i}} \mathbf{W}^{i',:}}{s_{0} + N_{u_{i}}^{-i}}\), \(\nu_{u_{i}}^{-i} = \nu_{0} + N_{u_{i}}^{-i}\) and \(\Delta_{u_{i}}^{-i} = \Delta_{0} + \mathbf{W}^{-i,u_{i}} \left(\mathbf{W}^{-i,u_{i}}\right)^{\mathsf{T}} + s_{0} m_{0} \left(m_{0}\right)^{\mathsf{T}} - s_{u_{i}}^{-i} m_{u_{i}}^{-i} \left(m_{u_{i}}^{-i}\right)^{\mathsf{T}}\), where \(S_{-i} \triangleq \left\{ i' \mid u_{i'} = u_{i},\ i' \neq i \right\}\).
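The posterior parameter updates above translate directly into a few lines of array code. The following is a minimal sketch under stated assumptions, not the authors' implementation: the function name is hypothetical, the group's rows \(\mathbf{W}^{i',:}\) for \(i' \in S_{-i}\) are stacked as the rows of a NumPy array, and the group size is taken to be the number of those rows. With that layout the sum of outer products in the \(\Delta\) update is `W.T @ W`.

```python
import numpy as np

def normal_wishart_posterior(W_group, s0, m0, nu0, Delta0):
    """Posterior Normal-Wishart parameters for one group (hypothetical helper).

    W_group : (N, K) array whose rows are the W vectors of the items
              currently in the group, excluding item i
    s0, m0, nu0, Delta0 : prior parameters
    """
    m0 = np.asarray(m0, dtype=float)
    Delta0 = np.asarray(Delta0, dtype=float)
    # reshape keeps an empty group well defined as a (0, K) array
    W = np.asarray(W_group, dtype=float).reshape(-1, m0.size)
    N = W.shape[0]                           # number of items in the group
    s = s0 + N
    m = (s0 * m0 + W.sum(axis=0)) / s
    nu = nu0 + N
    scatter = W.T @ W                        # sum of outer products of the rows
    Delta = Delta0 + scatter + s0 * np.outer(m0, m0) - s * np.outer(m, m)
    return s, m, nu, Delta

# Illustrative values: two items with two-dimensional W vectors.
W_example = np.array([[1.0, 2.0], [3.0, 4.0]])
s, m, nu, Delta = normal_wishart_posterior(
    W_example, s0=1.0, m0=np.zeros(2), nu0=3.0, Delta0=np.eye(2)
)
```

For an empty group the function returns the prior parameters unchanged, which matches the case of opening a new group in the sampler.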

Cite this article

Gupta, S., Phung, D. & Venkatesh, S. Modelling multilevel data in multimedia: A hierarchical factor analysis approach. Multimed Tools Appl 75, 4933–4955 (2016). https://doi.org/10.1007/s11042-014-2394-3

DOI: https://doi.org/10.1007/s11042-014-2394-3