Abstract

There is a need for developmentally appropriate Computational Thinking (CT) assessments that can be implemented in early childhood classrooms. We developed a new instrument called TechCheck for assessing CT skills in young children that does not require prior knowledge of computer programming. TechCheck is based on developmentally appropriate CT concepts and uses a multiple-choice “unplugged” format that allows it to be administered to whole classes or in online settings in under 15 minutes. This design allows assessment of a broad range of abilities and avoids conflating coding with CT skills. We validated the instrument in a cohort of 5–9-year-old students (N = 768) participating in a research study involving a robotics coding curriculum. TechCheck showed good reliability and validity according to measures of classical test theory and item response theory. Discrimination between skill levels was adequate. Difficulty was suitable for first graders and low for second graders. The instrument showed differences in performance related to race/ethnicity. TechCheck scores correlated moderately with a previously validated CT assessment tool (TACTIC-KIBO). Overall, TechCheck has good psychometric properties, is easy to administer and score, and discriminates between children of different CT abilities. Implications, limitations, and directions for future work are discussed.

Acknowledgments

We sincerely appreciate our Project Coordinator, Angela de Mik, who made this work possible. We would also like to thank all of the educators, parents, and students who participated in this project, as well as members of the DevTech research group at Tufts University.

Funding

This work was generously supported by the Department of Defense Education Activity (DoDEA) grant entitled “Operation: Break the Code for College and Career Readiness.”

Ethics declarations

Conflict of Interest

The authors declare that they have no conflict of interest.

Ethical Approval

All procedures performed in studies involving human participants were in accordance with the ethical standards of the Tufts University Social, Behavioral & Educational IRB protocol no. 1810044.

Informed Consent

Informed consent was obtained from the educators and parents/guardians of participating students. The students gave their assent for inclusion.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendices

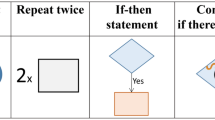

Appendix 1. The six CT domains covered in TechCheck along with examples of the different tasks used to probe those domains

Appendix 2. Difficulty and discrimination indexes for the TechCheck assessment

| Question | Difficulty index | Discrimination index | Question | Difficulty index | Discrimination index |
|---|---|---|---|---|---|
| 1 | −2.62 | 1.41 | 9 | −1.00 | 0.73 |
| 2 | −2.32 | 1.12 | 10 | −1.61 | 1.02 |
| 3 | −2.35 | 1.29 | 11 | −1.19 | 1.30 |
| 4 | −1.67 | 1.35 | 12 | −0.22 | 1.20 |
| 5 | −2.63 | 0.83 | 13 | −0.094 | 1.08 |
| 6 | 0.71 | 0.98 | 14 | −1.05 | 0.65 |
| 7 | −1.76 | 1.06 | 15 | 0.70 | 0.525 |
| 8 | −1.63 | 0.88 | | | |
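
To show how a difficulty index and a discrimination index are typically read together, the following minimal sketch evaluates a two-parameter logistic (2PL) item response function for two rows of Appendix 2. The 2PL form is an assumption here, since this page does not state which item response model produced the indexes. The function name `p_correct` and the ability value `theta = 0.0` are hypothetical choices for illustration.

```python
import math


def p_correct(theta: float, difficulty: float, discrimination: float) -> float:
    """Probability of a correct answer under an assumed 2PL model.

    theta          -- latent ability of the child (0.0 = average, by convention)
    difficulty     -- ability level at which the probability is exactly 0.5
    discrimination -- slope of the curve around the difficulty point
    """
    return 1.0 / (1.0 + math.exp(-discrimination * (theta - difficulty)))


# (difficulty, discrimination) pairs copied from Appendix 2 for questions 1 and 6.
items = {1: (-2.62, 1.41), 6: (0.71, 0.98)}

for question, (b, a) in items.items():
    # Illustrative only: probability for a child of average ability (theta = 0.0).
    print(f"Question {question}: P(correct | theta=0) = {p_correct(0.0, b, a):.3f}")
```

Under this assumed model, an item with a strongly negative difficulty index (such as question 1) is answered correctly by most children of average ability. An item with a positive index (such as question 6) is answered correctly by fewer than half of them.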

About this article

Cite this article

Relkin, E., de Ruiter, L. & Bers, M.U. TechCheck: Development and Validation of an Unplugged Assessment of Computational Thinking in Early Childhood Education. J Sci Educ Technol 29, 482–498 (2020). https://doi.org/10.1007/s10956-020-09831-x

DOI: https://doi.org/10.1007/s10956-020-09831-x