Abstract

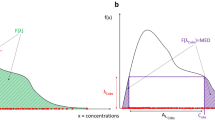

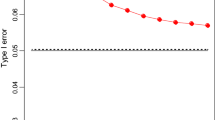

Population pharmacokinetic (PK) or pharmacodynamic (PD) modelling aims to analyse the variability of drug kinetics (or dynamics) between the subjects of a population. This variability has inter- and intra-individual sources, which lead to marked differences between PK profiles in their variation ranges, relative concentrations and global shapes. Because the sources of variability are diverse, the distance between subjects can be examined from several angles. Some subjects lie so far from the majority that they can be considered atypical, or outliers. This paper presents three multivariate statistical methods to diagnose outliers within a full population PK dataset, prior to any modelling step. Each method combines all the concentration–time variables to analyse the differences between patients by reference to a distance criterion: (a) correspondence analysis (CA) uses the chi-square distance to highlight the most atypical profiles in terms of relative concentrations; (b) the Mahalanobis distance is calculated to extract PK profiles with atypical shapes due to atypical variations in concentration; (c) the Andrews method combines all the concentration variables through a Fourier transformation to give sine–cosine curves showing the clustering behaviour of subjects under the Euclidean distance criterion. Once outlier subjects have been identified, the same methods can be used to extract the concentration values responsible for the atypical state of these patients. The outliers identified therefore reflect different sources of variability in the PK dataset, depending on the method, and are independent of any PK model. Finally, a significant positive trend was found between the number of diagnostics (one, two or three) that flagged a concentration as an outlier and the normalised prediction distribution error (NPDE) of that concentration after PK modelling: NPDE were highest when the corresponding concentration had been detected by more diagnostics a priori.
The application of a priori outlier diagnostics is illustrated here on two PK datasets: cortisol stimulated by Synacthen and orally administered capecitabine.
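The three distance criteria named above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: it uses the textbook definitions of the chi-square distance of a row profile to the average profile, the Mahalanobis distance to the sample centroid, and the Andrews function f(t) = x1/√2 + x2 sin t + x3 cos t + x4 sin 2t + ... The function names, the synthetic concentration table and the choice of sampling times are all assumptions made for illustration.

```python
import numpy as np


def chi_square_distances(X):
    """Chi-square distance of each subject's relative profile to the average profile."""
    X = np.asarray(X, dtype=float)
    P = X / X.sum()               # correspondence matrix (table scaled to sum to 1)
    r = P.sum(axis=1)             # row masses (one per subject)
    c = P.sum(axis=0)             # column masses = average profile over time points
    profiles = P / r[:, None]     # row profiles, i.e. relative concentrations
    return np.sqrt((((profiles - c) ** 2) / c).sum(axis=1))


def mahalanobis_distances(X):
    """Mahalanobis distance of each subject to the centroid of all subjects."""
    X = np.asarray(X, dtype=float)
    centered = X - X.mean(axis=0)
    cov = np.cov(X, rowvar=False)        # sample covariance of the time-point variables
    inv_cov = np.linalg.pinv(cov)        # pseudo-inverse tolerates a near-singular covariance
    d2 = np.einsum("ij,jk,ik->i", centered, inv_cov, centered)
    return np.sqrt(np.maximum(d2, 0.0))


def andrews_curves(X, n_points=200):
    """Evaluate the Andrews sine-cosine curve of each subject on [-pi, pi]."""
    X = np.asarray(X, dtype=float)
    t = np.linspace(-np.pi, np.pi, n_points)
    basis = [np.full_like(t, 1.0 / np.sqrt(2.0))]   # constant term for the first variable
    for j in range(1, X.shape[1]):
        k = (j + 1) // 2                            # harmonic: 1, 1, 2, 2, 3, ...
        basis.append(np.sin(k * t) if j % 2 == 1 else np.cos(k * t))
    return t, X @ np.vstack(basis)                  # one curve per subject (row)


# Hypothetical data: 20 subjects sampled at 6 times, with one reversed profile.
rng = np.random.default_rng(0)
times = np.array([0.5, 1.0, 2.0, 4.0, 8.0, 12.0])
base = 10.0 * (np.exp(-0.3 * times) - np.exp(-1.5 * times))
scale = rng.lognormal(mean=0.0, sigma=0.3, size=20)
noise = rng.normal(scale=0.05, size=(20, times.size))
conc = base * scale[:, None] * np.exp(noise)
conc[0] = conc[0][::-1]                             # subject 0 gets an atypical shape

print("largest chi-square distance: subject", int(np.argmax(chi_square_distances(conc))))
print("largest Mahalanobis distance: subject", int(np.argmax(mahalanobis_distances(conc))))
t, curves = andrews_curves(conc)
print("Andrews curves array shape:", curves.shape)
```

In this sketch the covariance matrix is estimated from all subjects, outliers included, so several outliers can mask one another; a robust covariance estimate would be a natural substitute in that case. The Andrews curves are returned as an array so that they can be plotted or compared with whichever tool is at hand.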

Cite this article

Semmar, N., Urien, S., Bruguerolle, B. et al. Independent-model diagnostics for a priori identification and interpretation of outliers from a full pharmacokinetic database: correspondence analysis, Mahalanobis distance and Andrews curves. J Pharmacokinet Pharmacodyn 35, 159–183 (2008). https://doi.org/10.1007/s10928-007-9082-0

DOI: https://doi.org/10.1007/s10928-007-9082-0