Abstract

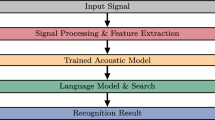

It is well-known that the Arabic language poses non-trivial issues for Automatic Speech Recognition (ASR) systems. This paper is concerned with the problems posed by the complex morphology of the language and the absence of diacritics in the written form of the language. Several acoustic and language models are built using different transcription resources, namely a grapheme-based transcription which uses non-diacriticised text materials, phoneme-based transcriptions obtained from automatic diacritisation tools (SAMA or MADAMIRA), and a predefined dictionary. The paper presents a comprehensive assessment for the aforementioned transcription schemes by employing them in building a collection of Arabic ASR systems using the GALE (phase 3) Arabic broadcast news and broadcast conversational speech datasets LDC (2015), which include 260 h of recorded material. Contrary to our expectations, the experimental evidence confirms that the use of grapheme-based transcription is superior to the use of phoneme-based transcription. To investigate this further, several modifications are applied to the MADAMIRA analysis by applying a number of simple phonological rules. These improvements have a substantial effect on the systems’ performance, but it is still inferior to the use of a simple grapheme-based transcription. The research also examined the use of a manually diacriticised subset of the data in training the ASR system and compared it with the use of grapheme-based transcription and phoneme-based transcription obtained from MADAMIRA. The goal of this step is to validate MADAMIRA’s analysis. The results show that using the manually diacriticised text in generating the phonetic transcription can significantly decrease the WER compared to the use of MADAMIRA diacriticised text and also the isolated graphemes. The results obtained strongly indicate that providing the training model with less information about the data (only graphemes) is less damaging than providing it with inaccurate information.

Similar content being viewed by others

Notes

Diacritics are mainly markers for both vowels and consonants. These markers include short vowels, dagger Alif, sukun, nunation, and gemination marker.

GALE (phase 3) Arabic broadcast News and Broadcast Conversation datasets were developed by the Linguistic Data Consortium (LDC). Obtaining these datasets is exclusively available to LDC members through their website.

SAMA is a software tool for the morphological analysis of Standard Arabic. It is an updated version of Buckwalter Arabic Morphological Analyser (BAMA). SAMA analyses each Arabic word token by providing all possible prefix-stem-suffix segmentations, and lists all possible annotation solutions, with assignment of all diacritic marks, morpheme boundaries, and all Part-of-Speech (POS) tags. The choice is then left to users to select the most appropriate annotation among the generated output. Accessing this tool is exclusively available to LDC members through this link: https://catalog.ldc.upenn.edu/LDC2010L01

MADAMIRA is a toolkit that provides linguistic information such as tokenisation, lemmatisation, diacritisation, and part of speech tagging. It contains models for both MSA and Egyptian. What sets MADAMIRA apart from similar tools is that it takes word context into account, which makes the generated analysis more accurate. Non-commercial license is freely available at: http://innovation.columbia.edu/technologies/CU14012

http://www.speech.cs.cmu.edu

MADAMIRA requires a SAMA-style set of prefix-, stem- and suffix-dictionaries: we used it with the standard SAMA dictionaries with some extensions as discussed here.

This is particularly challenging when these characters appear adjacent to one another, since you need to know whether the first is a consonant or a vowel before you can decide which class the second belongs to; but in order to know whether the first is a vowel or a consonant you need to know which class the second belongs to.

References

Abushariah, M. A.-A. M., Ainon, R., Zainuddin, R., Elshafei, M., & Khalifa, O. O. (2012). Arabic speaker-independent continuous automatic speech recognition based on a phonetically rich and balanced speech corpus. International Arab Journal of Information Technology (IAJIT), 9(1), 84–93.

Abuzeina, D., Al-Khatib, W., Elshafei, M., & Al-Muhtaseb, H. (2011). Cross-word Arabic pronunciation variation modeling for speech recognition. International Journal of Speech Technology, 14(3), 227–236.

AbuZeina, D., Al-Khatib, W., Elshafei, M., & Al-Muhtaseb, H. (2012). Within-word pronunciation variation modeling for Arabic ASRs: A direct data-driven approach. International Journal of Speech Technology, 15(2), 1–11.

Afify, M., Nguyen, L., Xiang, B., Abdou, S. & Makhoul, J. (2005). Recent progress in Arabic broadcast news transcription at bbn. In: Ninth European conference on speech communication and technology.

Al-Anzi, F. S., & AbuZeina, D. (2017). The impact of phonological rules on Arabic speech recognition. International Journal of Speech Technology, 20(3), 715–723.

Al-Anzi, F. S., & AbuZeina, D. (2019). Performance evaluation of Sphinx and htk speech recognizers for spoken Arabic language. International Journal of Innovative Computing, 15(3), 1.

Alghamdi, M., Elshafei, M., & Al-Muhtaseb, H. (2007). Arabic broadcast news transcription system. International Journal of Speech Technology, 10(4), 183–195.

Alghamdi, M., Elshafei, M., & Al-Muhtaseb, H. (2009). Arabic broadcast news transcription system. International Journal of Speech Technology, 10(4), 183–195.

Ali, A., Zhang, Y., Cardinal, P., Dahak, N., Vogel, S., & Glass, J. (2014). A complete Kaldi recipe for building Arabic speech recognition systems. In: Spoken Language Technology Workshop (SLT), 2014 IEEE’. IEEE, pp. 525–529

Ali, M., Elshafei, M., Al-Ghamdi, M., Al-Muhtaseb, H. & Al-Najjar, A. (2008). Generation of Arabic phonetic dictionaries for speech recognition. In: 2008 international conference on innovations in information technology, IEEE, pp. 59–63.

Alsharhan, E., & Ramsay, A. (2017). Improved Arabic speech recognition system through the automatic generation of fine-grained phonetic transcriptions. Information Processing & Management, 56, 343.

Biadsy, F., Moreno, P. J. & Jansche, M. (2012). Google’s cross-dialect Arabic voice search. In: IEEE international conference on acoustics, speech and signal processing (ICASSP), 2012, IEEE, pp. 4441–4444.

Diehl, F., Gales, M. J., Tomalin, M. & Woodland, P. C. (2009). Morphological analysis and decomposition for Arabic speech-to-text systems. In: Tenth annual conference of the international speech communication association.

El-Desoky, A., Gollan, C., Rybach, D., Schlüter, R. & Ney, H. (2009). Investigating the use of morphological decomposition and diacritization for improving Arabic lVCSR. In: Tenth annual conference of the international speech communication association.

El-Desoky, A., Schlüter, R., & Ney, H. (2010). A hybrid morphologically decomposed factored language models for Arabic IVCSR. In: Human language technologies: the 2010 annual conference of the North American chapter of the association for computational linguistics. Association for Computational Linguistics, pp. 701–704.

Elmahdy, M., Gruhn, R., Minker, W. & Abdennadher, S. (2010). Cross-lingual acoustic modeling for dialectal Arabic speech recognition. In: INTERSPEECH, pp. 873–876.

Habash, N., Soudi, A., & Buckwalter, T. (2007). On Arabic transliteration. Arabic computational morphology (pp. 15–22). New York: Springer.

Kirchhoff, K., & Vergyri, D. (2005). Cross-dialectal data sharing for acoustic modeling in Arabic speech recognition. Speech Communication, 46(1), 37–51.

Kirchhoff, K., Vergyri, D., Bilmes, J., Duh, K., & Stolcke, A. (2006). Morphology-based language modeling for conversational Arabic speech recognition. Computer Speech & Language, 20(4), 589–608.

LDC. (2015). Gale phase 3 Arabic broadcast and conversation speech. University of Pennsylvania: Linguistic Data Consortium.

Lewis, M. P. & Gary, F. (2015). Simons and Charles D. Fennig (eds.). 2013, Ethnologue: Languages of the world.

Maamouri, M., Graff, D., Bouziri, B., Krouna, S., Bies, A. & Kulick, S. (2010). Standard Arabic morphological analyzer (sama) version 3.1, Linguistic Data Consortium, Catalog No.: LDC2010L01.

Messaoudi, A., Gauvain, J. & Lamel, L. (2006). Arabic broadcast news transcription using a one million word vocalized vocabulary. In: IEEE international conference on acoustics, speech and signal processing, 2006. ICASSP 2006 Proceedings. 2006, Vol. 1, IEEE, pp. I–I.

Ng, T., Nguyen, K., Zbib, R. & Nguyen, L. (2009). Improved morphological decomposition for Arabic broadcast news transcription. In: IEEE international conference on acoustics, speech and signal processing, 2009. ICASSP 2009, IEEE, pp. 4309–4312.

Pasha, A., Al-Badrashiny, M., Diab, M. T., ElKholy, A., Eskander, R., Habash, N., Pooleery, M., Rambow, O. & Roth, R. (2014). Madamira: a fast, comprehensive tool for morphological analysis and disambiguation of Arabic. In: LREC, vol. 14, pp. 1094–1101.

Ramsay, A., Alsharhan, I., & Ahmed, H. (2014). Generation of a phonetic transcription for modern standard Arabic: A knowledge-based model. Computer Speech & Language, 28(4), 959–978.

Sharma, D. P., & Atkins, J. (2014). Automatic speech recognition systems: challenges and recent implementation trends. International Journal of Signal and Imaging Systems Engineering, 7(4), 220–234.

Vergyri, D., & Kirchhoff, K. (2004). Automatic diacritization of Arabic for acoustic modeling in speech recognition. In: Proceedings of the workshop on computational approaches to Arabic script-based languages. Association for Computational Linguistics

Wells, J. (2002). Sampa for Arabic. http://www.phon.ucl.ac.uk/home/sampa/Arabic.htm.

Young, S., Evermann, G., Gales, M., Hain, T., Kershaw, D., Liu, X., et al. (2002). The htk book. Cambridge University Engineering Department, 3, 175.

Zitouni, I., Sorensen, J. S. & Sarikaya, R. (2006). Maximum entropy based restoration of Arabic diacritics. In: Proceedings of the 21st international conference on computational linguistics and the 44th annual meeting of the association for computational linguistics. Association for Computational Linguistics, pp. 577–584.

Author information

Authors and Affiliations

Corresponding author

Appendix

Appendix

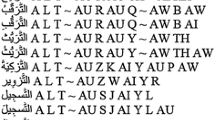

The research uses Buckwalter scheme to romanise Arabic characters in all textual resources, as Arabic characters are not acceptable by HTK. The research also uses SAMPA transcription scheme to represent the phonetic notation in the dictionary. Details are given in the table below. Entries in bold indicate that amendment was applied to the original form.

Rights and permissions

About this article

Cite this article

Alsharhan, E., Ramsay, A. & Ahmed, H. Evaluating the effect of using different transcription schemes in building a speech recognition system for Arabic. Int J Speech Technol 25, 43–56 (2022). https://doi.org/10.1007/s10772-020-09720-z

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10772-020-09720-z