Abstract

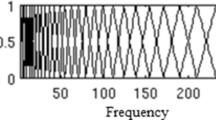

In this paper, we present a study of speaker discrimination using multi-classifier fusion, with a focus on the effects of feature reduction. Speaker discrimination consists of automatically distinguishing between two speakers using the vocal characteristics of their speech. A number of features are extracted using Mel Frequency Spectral Coefficients and then reduced using the Relative Speaker Characteristic (RSC) together with Principal Component Analysis (PCA). Several classification methods are implemented to perform the discrimination task. Since different classifiers are employed, two decision-level fusion algorithms, referred to as Weighted Fusion and Fuzzy Fusion, are proposed to boost classification performance. Both algorithms are based on weighting the outputs of the different classifiers. The effects of speaker gender and of feature reduction on the speaker discrimination task are also examined. Our approaches were evaluated on a subset of Hub-4 Broadcast-News. The experimental results show that speaker discrimination accuracy improves by 5–15% with the (RSC–PCA) feature reduction. In addition, the proposed fusion methods yield an improvement of about 10% over the individual scores of the classifiers. Finally, we observe that gender has an important impact on discrimination performance.
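To make the idea of decision-level weighting concrete, the following is a minimal sketch of one possible weighted fusion rule. It is not the authors' algorithm: the abstract does not specify how the weights are computed, so the sketch assumes weights proportional to each classifier's validation accuracy, scores normalised to [0, 1], and a decision threshold of 0.5. The function name `weighted_fusion` and all numeric values are hypothetical.

```python
def weighted_fusion(scores, accuracies, threshold=0.5):
    """Fuse per-classifier scores with accuracy-proportional weights.

    scores: list of lists, one inner list per classifier; each value is that
        classifier's confidence in [0, 1] that a trial's two speech segments
        come from the same speaker.
    accuracies: one validation accuracy per classifier. Using accuracy as the
        weight is an assumption made for illustration only.
    threshold: fused scores at or above this value are labelled "same speaker".
    """
    total = sum(accuracies)
    weights = [a / total for a in accuracies]  # normalise so weights sum to 1
    n_trials = len(scores[0])
    fused = [
        sum(w * clf_scores[t] for w, clf_scores in zip(weights, scores))
        for t in range(n_trials)
    ]
    decisions = [f >= threshold for f in fused]
    return fused, decisions


# Hypothetical outputs of three classifiers on three trials.
scores = [
    [0.9, 0.4, 0.2],
    [0.6, 0.6, 0.1],
    [0.7, 0.3, 0.4],
]
accuracies = [0.8, 0.6, 0.6]  # hypothetical validation accuracies

fused, decisions = weighted_fusion(scores, accuracies)
print(fused, decisions)
```

In this sketch a more accurate classifier pulls the fused score further towards its own output, so a single weak classifier cannot flip a decision on which the stronger ones agree.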

Cite this article

Khennouf, S., Sayoud, H. Speaker discrimination based on fuzzy fusion and feature reduction techniques. Int J Speech Technol 21, 51–63 (2018). https://doi.org/10.1007/s10772-017-9484-3

DOI: https://doi.org/10.1007/s10772-017-9484-3