Abstract

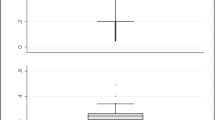

We use resampling of data to explore the basic statistical properties of super-efficient data envelopment analysis (DEA) when used as a benchmarking tool by the manager of a single decision-making unit. Our focus is the gaps in the outputs (i.e., slacks adjusted for upward bias), as they reveal which outputs can be increased. The numerical experiments show that the estimates of the gaps fail to exhibit asymptotic consistency, a property expected for standard statistical inference. Specifically, increased sample sizes were not always associated with more accurate forecasts of the output gaps. The baseline DEA’s gaps equaled the mode of the jackknife and the mode of resampling with/without replacement from any subset of the population; usually, the baseline DEA’s gaps also equaled the median. The quartile deviations of gaps were close to zero when few decision-making units were excluded from the sample and the study unit happened to have few other units contributing to its benchmark. The results for the quartile deviations can be explained in terms of the effective combinations of decision-making units that contribute to the DEA solution. The jackknife can provide all the combinations contributing to the quartile deviation and only needs to be performed for those units that are part of the benchmark set. These results show that there is a strong rationale for examining DEA results with a sensitivity analysis that excludes one benchmark hospital at a time. This analysis enhances the quality of decision support using DEA estimates for the potential of a decision-making unit to grow one or more of its outputs.
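The leave-one-unit-out sensitivity analysis described above can be illustrated with a deliberately simplified sketch. It is not the model used in the article: it assumes one input, one output, and constant returns to scale, so the benchmark reduces to the peer with the highest output-to-input ratio and no linear program is needed. The unit names, the data, and the helper functions `output_gap`, `jackknife_gaps`, and `quartile_deviation` are all hypothetical.

```python
from statistics import median, multimode, quantiles

# Hypothetical data: unit -> (input, output). Not taken from the article.
UNITS = {"A": (10, 40), "B": (8, 48), "C": (12, 60), "D": (10, 50), "E": (5, 20)}


def output_gap(units, study, exclude=()):
    """Toy output gap for `study` (single input, single output, CRS).

    The study unit is always left out of its own reference set, mirroring
    the super-efficient setup; `exclude` removes further units.
    Returns (gap, benchmark_unit).
    """
    x0, y0 = units[study]
    peers = {k: v for k, v in units.items() if k != study and k not in exclude}
    # With one input and one output, the benchmark is the best-ratio peer.
    best = max(peers, key=lambda k: peers[k][1] / peers[k][0])
    bx, by = peers[best]
    target = x0 * by / bx  # output the study unit would reach at the peer's ratio
    return max(0.0, target - y0), best


def jackknife_gaps(units, study):
    """Recompute the gap with each other unit excluded, one at a time."""
    return {k: output_gap(units, study, exclude=(k,))[0]
            for k in units if k != study}


def quartile_deviation(values):
    """Half the interquartile range (statistics.quantiles default method)."""
    q1, _, q3 = quantiles(values, n=4)
    return (q3 - q1) / 2


baseline_gap, benchmark = output_gap(UNITS, "A")
gaps = jackknife_gaps(UNITS, "A")
print("baseline gap:", baseline_gap, "benchmark:", benchmark)
print("jackknife gaps:", gaps)
print("mode:", multimode(gaps.values()), "median:", median(gaps.values()))
print("quartile deviation:", quartile_deviation(list(gaps.values())))
```

In this toy data set, only the exclusion of the benchmark unit `B` changes the gap for unit `A` (from 20 to 10). The baseline gap therefore equals both the mode and the median of the jackknife estimates, and the jackknife only needs to be run for units in the benchmark set. The multi-output, slack-based model in the article would require a linear programming solver, and its results need not behave this simply.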

Cite this article

Dexter, F., O’Neill, L., Xin, L. et al. Sensitivity of super-efficient data envelopment analysis results to individual decision-making units: an example of surgical workload by specialty. Health Care Manage Sci 11, 307–318 (2008). https://doi.org/10.1007/s10729-008-9055-x

DOI: https://doi.org/10.1007/s10729-008-9055-x