Abstract

The aim of the current study was to investigate to what extent children’s potential for learning is related to their level of cognitive flexibility. Potential for learning was measured through a dynamic testing procedure that aimed to measure how much a child can profit from a training procedure integrated into the testing process, including the amount and type of feedback the children required during this training procedure. The study followed a pre-test–training–post-test control group design. Participants were 153 6–7-year-old children. Half of this group of children were provided with a standardised graduated prompts procedure. The other half of the participants performed a non-inductive cognitive task. Children’s cognitive flexibility was measured through a card sorting test and a test of verbal fluency. Results show that cognitive flexibility was positively related to children’s performance, but only for children in the practice-only condition who received no training. These outcomes suggest that dynamic testing, and more in particular, the graduated prompting procedure, supports children’s cognitive flexibility, thereby giving children with weaker flexibility the opportunity to show more of their cognitive potential as measured through inductive reasoning.

Introduction

For educational professionals, it is becoming increasingly important to address the needs of individual students, and teachers are increasingly expected to differentiate their instruction and monitor children’s progression more carefully (Bosma et al. 2012; Pameijer and Van Beukering 2015; Rock et al. 2008). When they encounter difficulties in meeting individual children’s educational needs, they are often supported by educational or school psychologists. If a teacher or other educational professional has a question about a child’s cognitive functioning, for instance in cases where a child cannot cope with everyday classroom situations, a school or educational psychologist often administers a conventional, static test, for example, an intelligence or school achievement test. However, critics argue that most of these static instruments predominantly measure the effects of past learning experiences and do not result in sufficient information about underlying cognitive processes and strategies in relation to children’s (potential for) learning, or instructional needs in order to provide in-depth input for educational interventions (e.g. Fabio 2005; Grigorenko 2009; Robinson-Zañartu and Carlson 2013). Nor do they provide in-depth information about how other aspects of a child’s cognitive abilities, such as executive functions, relate to the learning process. In response to these criticisms, dynamic forms of testing have been developed that incorporate training in the assessment process, aimed at both measuring children’s progression in task solving (Sternberg and Grigorenko 2002) and gaining insight into the type of help a child needs in order to learn (e.g. Resing, 2013).

Previous research on dynamic testing has identified different measures of potential for learning, including the amount and the type of feedback received during training, performance after training, the change in performance from the pre-training to post-training stage, and the transfer of learned skills (e.g. Elliott et al. 2010; Sternberg and Grigorenko 2002). Outcomes of these studies have revealed large variability among children in both their instructional needs and level of improvement (Bosma & Resing, 2006; Resing & Elliott, 2011; Fabio 2005; Jeltova et al. 2011). For educational practice, these testing procedures have been found effective in predicting future school achievements (e.g. Caffrey et al. 2008; Campione and Brown 1987; Kozulin & Garb, 2002), and in exploring the cognitive strategies children employ during the assessment process (e.g. Burns et al. 1992; Resing & Elliott, 2011).

Dynamic testing often employs general inductive reasoning tasks because they are found to play a fundamental role in cognitive development, learning, and instruction (Csapó 1997; Goswami 2001; Klauer and Phye 2008). Inductive reasoning is often defined as the process of moving from the specific to the general, or as the generalisation of single observations and experiences in order to reach general conclusions or derive broad rules and is considered to be a general thinking skill (e.g. Pellegrino, 1985; Holzman et al. 1983), which is related to almost all higher-order cognitive skills (Csapó 1997).

In the current study, we sought to examine potential for learning with a dynamic test of pictorial series completion, utilising graduated prompting techniques during training. Solving pictorial series completion tasks, such as the one used in the present study, requires children to search for different patterns of repeating elements, while at the same time identifying unknown changes in the relationship between these elements (e.g. Resing et al. 2012b; Sternberg & Gardner, 1983).

An important aspect in the potential for learning of individual children is their executive functioning. Executive functioning refers to various inter-related cognitive processes that enable goal-directed behaviour and that are necessary in modulating and regulating thinking and acting (Miller and Cohen 2001; Miyake et al. 2000). In her review, Diamond (2013) describes a frequently proposed three-component model that distinguishes working memory, inhibitory control, and cognitive flexibility. With regard to the relationship between executive functioning and dynamic testing, previous studies have demonstrated that dynamic test outcomes, such as progression after training and instructional needs, are related to children’s executive function skills (Ropovik 2014; Stevenson et al. 2013; Swanson 2006, 2011). However, the majority of these studies focus on the role of working memory and inhibitory control in potential for learning (e.g. Resing et al. 2012b; Swanson 2011; Stevenson et al. 2013; Tzuriel, 2001). In these studies, it appeared that the feedback and help provided during the training procedures of dynamic testing supported the use of working memory and inhibitory control, thereby facilitating children in using and showing more of their potential for learning. However, studies examining the role of cognitive flexibility in children’s potential for learning remain relatively sparse.

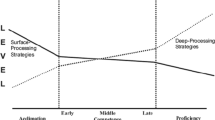

Therefore, in the current study, we sought to explore to what extent different aspects of cognitive flexibility are related to children’s potential for learning as assessed by a graduated prompting procedure. Cognitive flexibility has been defined as a cognitive system that underpins the ability to change perspective, shift attention between tasks or mental sets, and adjust to changing demands and problems. Furthermore, it has been described as the ability to learn from mistakes and feedback, devise and select alternative learning strategies and responses, and form plans and simultaneously process information from multiple sources (Anderson 2002; Deak and Wiseheart 2015; Diamond 2013; Ionescu 2012; Miller and Cohen 2001). In terms of specific classroom behaviour, cognitive flexibility in children is often considered to be the opposite of rigidity, i.e. difficulties in changing between activities, adjusting previously learned behaviours, and learning from mistakes. Previous research has shown that cognitive flexibility plays a considerable role in learning in childhood (e.g. Bull and Scerif 2001; Ropovik 2014), and especially in math-related subjects. These subjects require efficient switching between different task aspects or arithmetical strategies (e.g. Agostino et al. 2010; Yeniad et al. 2013). In addition, cognitive flexibility might be particularly important in the assessment of young children’s potential for learning because significant developmental changes of this particular function occur around 6–7 years of age (e.g. Davidson et al. 2006; Gupta et al. 2009).

Our study aimed to focus on different elements of flexibility in order to expand our understanding of the relationship between this particular executive function and children’s potential for learning. Examining flexibility with different performance measures was expected to provide us with more in-depth understanding of how these flexibility aspects might contribute to the ability to profit from dynamic training, to respond to feedback (e.g. Crone et al. 2004a; Miyake et al. 2000), or to effectively switch between different problem-solving strategies (e.g. Hurks et al. 2004; Troyer et al. 1997).

In conclusion, the main aim of the current study was to analyse whether children’s potential for learning was related to two aspects of cognitive flexibility: (a) children’s cognitive flexibility performance as examined with a card sorting test, measuring children’s ability to efficiently respond to feedback (e.g. Berg, 1948; Cianchetti et al. 2007), and (b) children’s cognitive flexibility examined with a verbal fluency test, measuring children’s ability to efficiently switch between different verbal cognitive clusters (e.g. Troyer et al. 1997; Troyer 2000). By examining performance on these two flexibility aspects, and their relationship with children’s performance on the dynamic test, we expected to gain a better understanding of how both children’s responsiveness to feedback and their ability to efficiently switch between different cognitive clusters would contribute to children’s potential for learning.

Based on previous research utilising the dynamic series completion test (e.g. Resing & Elliott, 2011, Resing et al., 2012b), it was hypothesised that the graduated prompts training would significantly improve children’s series completion performance from pre- to post-test as compared to non-trained children (1). Given earlier reported relationships between cognitive flexibility and static inductive reasoning tasks (Roca et al. 2010; Van der Sluis et al. 2007), we expected (2) initial differences in static inductive reasoning performance to be related to cognitive flexibility, where children with better performance on the card sorting test and the verbal fluency test would perform better on the static pre-test as compared to children with lower flexibility performances. Regarding the relationship with the dynamic measures of inductive reasoning, it was expected that (3a) the training procedure would attenuate the individual differences in cognitive flexibility skills through the graduated prompts provided. Previous research has shown that dynamic testing, at least partially, supported weaker working memory skills (e.g. Resing et al., 2012b; Stevenson et al., 2013; Swanson 1992, 2006), thereby increasing these children’s opportunity to show their potential for learning during the assessment process. More specifically, we expected that children with lower flexibility scores in the practice-only condition would show less progression from pre- to post-test than children with better flexibility scores, but that this difference would not be found for children in the graduated prompt training condition because the graduated prompts provided during training would partially compensate for differences in flexibility.

In addition, because the prompts in the training procedure were argued to support weaker cognitive flexibility, it was expected that children with weaker flexibility scores required more prompts during training and, moreover, also needed more detailed help in the form of the specific cognitive and step-by-step prompts. With regard to card sorting performance, we expected (3b) that weaker performance—i.e. more perseverative behaviour—would predict a weaker ability to profit from the feedback in the training procedure. This would be reflected by a need for more and more specific prompts. In addition, it was also expected (3c) that children’s verbal fluency performance would be related to the instructional needs during training. The ability to efficiently switch between different problem-solving strategies was expected to result in better access to, and application of, several learned problem-solving strategies, causing the child to need less specific feedback during the training procedure.

Method

Participants

Participants included 156 6–7-year-old children (73 boys and 83 girls; M = 81.0 months, SD = 6.9 months). The children were selected from nine middle-class elementary schools and were all native Dutch speakers. Written informed consent was obtained from all parents and schools. The results of three of these children were excluded from our data file because they did not participate in one or more of the sessions after the pre-test, leaving 153 children in the analyses.

Design

A pre-test–training–post-test control group design with randomised blocking was employed, based on children’s age and inductive reasoning performance as measured with a test of visual exclusion (Resing et al., 2012a). Using this blocking procedure, pairs of children were formed within each school and the children were allocated, per block, to one of two conditions: (1) a training group in which the children received a two-session training procedure with graduated prompting and (2) a practice-control group in which children solved the pre- and post-test items without additional training (see Table 1). Children in the training group received two training sessions because a single long session appeared too intensive for children of this age (Resing et al., 2017).
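The blocking procedure can be illustrated with a minimal Python sketch. The paper does not report how pairs were matched, so the sorting rule (reasoning score first, then age), the handling of an unpaired child, and the function name `allocate_pairs` are assumptions made purely for illustration.

```python
import random

def allocate_pairs(children, seed=0):
    """Allocate children to conditions in matched pairs within each school.

    children: list of (child_id, school, age_months, reasoning_score).
    The matching rule below is an assumption, not the study's procedure.
    """
    rng = random.Random(seed)
    by_school = {}
    for child in children:
        by_school.setdefault(child[1], []).append(child)

    allocation = {}
    for group in by_school.values():
        # Sort so that neighbours are similar on the blocking variables.
        group.sort(key=lambda c: (c[3], c[2]))
        for i in range(0, len(group) - 1, 2):
            pair = [group[i], group[i + 1]]
            rng.shuffle(pair)  # random assignment within the pair
            allocation[pair[0][0]] = "training"
            allocation[pair[1][0]] = "practice-control"
        if len(group) % 2 == 1:
            # An unpaired child is assigned at random (assumption).
            allocation[group[-1][0]] = rng.choice(["training", "practice-control"])
    return allocation

children = [("a", "s1", 80, 10), ("b", "s1", 81, 11),
            ("c", "s1", 75, 20), ("d", "s1", 76, 21)]
allocation = allocate_pairs(children)
```

Each matched pair contributes one child to each condition, which keeps the two groups comparable on the blocking variables.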

Instead of participating in the training procedure, the children in the practice-control group individually solved in the same time frame a number of visual-spatial, non-inductive connect-the-dot tasks. Prior to the pre-test, all children were administered a modified version of the Wisconsin Card Sorting Test (M-WCST; Nelson 1976; Schretlen 2010) and a test of verbal fluency (RAKIT Ideational Fluency; Resing et al., 2012a) to obtain measures of children’s cognitive flexibility (see Table 1).

All sessions lasted approximately 30 min and took place individually, at a quiet location in the child’s school. Administration of materials was done by psychology master’s students who received extensive training in advance in all testing and training procedures.

Materials

Baseline tasks

Visual exclusion (blocking procedure)

In order to measure children’s initial inductive reasoning ability, the visual exclusion subtest of the Revised Amsterdam Children’s Intelligence Test (RAKIT-2; Resing et al., 2012a) was used. The subtest measures visual-spatial inductive reasoning ability. In this test, children have to solve 65 items, consisting of four abstract geometric figures, by choosing which of the figures does not belong to the other three. Children are to determine which figure ought to be excluded by reasoning inductively, and applying the exclusion rule to the four figures provided in each item. The visual exclusion subtest is reported to have high reliability (Cronbach’s α = .89; Resing et al., 2012a).

Modified Wisconsin Card Sorting Test (cognitive flexibility)

The Modified Wisconsin Card Sorting Test (Nelson 1976; Schretlen 2010) was utilised to measure children’s cognitive flexibility performance. In this card sorting test, inflexibility is reflected by the amount of perseverative behaviour a child displays, i.e. when a child persists in sorting cards according to a previously incorrect sorting rule (Nelson 1976). Perseverative errors are argued to be most indicative for children’s cognitive flexibility as the shift from perseveration to flexible behaviour is commonly considered the key transition in the early development of cognitive control (e.g. Garon et al., 2008; Zelazo, 2006). Based on four stimulus cards, children are asked to sort 48 response cards according to three sorting rules, namely colour, shape, or number. These rules are not counterbalanced, as in this modified version of the card sorting test, children determine the first sorting criterion themselves. The children are not offered a practice-test (Schretlen 2010). Based on the correctness of their sort, the children are informed whether or not they have applied the correct rule. The M-WCST was administered according to Nelson’s criteria (1976) which meant that after six consecutive correct sorts the child was explicitly told that the sorting criterion had changed. The child determined the first and second sorting criteria (e.g. number and shape), automatically leaving colour (the remaining criterion in this scenario) to be the last sorting criterion. After completing the three sorting criteria, the three criteria were requested again and in the same order. After completing the three criteria twice or after sorting all 48 cards, the procedure was completed. An error was defined as perseverative when (1) the child persisted sorting according to the same rule as that of the previously incorrect response and (2) the child did not switch rules after being told that the rules had changed (Nelson 1976; Obonsawin et al. 1999).

Verbal fluency (cognitive flexibility)

Cognitive flexibility was also measured with a test of semantic verbal fluency (RAKIT Ideational Fluency; Resing et al., 2012a). The children were given five semantic categories for which they were instructed to generate as many items as possible in 60 s. For the first two categories, the children were instructed to ‘name all the things that you can pick up’ and to ‘name all the things that you can drink’. For the third category, the children were asked to ‘name all the places that you can use to hide yourself’ whereas the fourth category was linked to the question ‘name all the things that you can find in the supermarket’. For the fifth and final category, the children were asked to ‘name all the things that you can do on the streets’. To examine flexibility performance, the number of clusters and switches were calculated according to the scoring system as proposed by Troyer and colleagues (Troyer et al. 1997; Troyer 2000). The output of each category was organised in clusters (successively generated words belonging to the same semantic subcategory) and cluster size was counted from the second word of each cluster. The number of switches as index for cognitive flexibility (Hurks et al. 2010; Troyer et al. 1997; Troyer 2000) was calculated as the number of times a child changed from one cluster to another.
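The cluster and switch counts described above can be sketched in Python as follows. This is a simplified illustration: it assumes each generated word has already been hand-coded with one semantic subcategory label, and it ignores refinements of the Troyer scoring system such as overlapping clusters. The function name and the example labels are hypothetical.

```python
from itertools import groupby

def score_fluency(subcategories):
    """Return (cluster_sizes, n_switches) for one fluency category.

    subcategories: the subcategory label of each successively generated word.
    """
    # A cluster is a run of successive words sharing a subcategory.
    runs = [len(list(words)) for _, words in groupby(subcategories)]
    # Cluster size is counted from the second word of each cluster.
    cluster_sizes = [n - 1 for n in runs]
    # A switch is a change from one cluster to the next.
    n_switches = max(len(runs) - 1, 0)
    return cluster_sizes, n_switches

# Hypothetical coding of six answers to "things that you can drink".
labels = ["juice", "juice", "dairy", "hot", "hot", "hot"]
sizes, switches = score_fluency(labels)
print(sizes, switches)  # [1, 0, 2] 2
```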

Series completion test

Test description

The dynamic series completion test was used to measure children’s progression in inductive reasoning skills. The procedural guidelines, prompting protocol, construction principles, and an analytic model of the series that were the basis for the construction of the dynamic series completion test as used in the current study have been described in Resing and Elliott (2011) and in Resing et al. (2017). All items (pre-test, post-test, and training) consisted of a series of schematic puppets. These series could be completed by identifying the different task aspects, such as the gender of the puppet and the colour and design of the different body parts. At the same time, children had to discover various transformations amongst these aspects of the series. These transformations could be found in changes in the gender of the puppet (male/female), the colour of the different body parts (blue, green, yellow, and pink), or their design (stripes, dots, no design) (for an example, see Fig. 1). The periodicity—the frequency of transformations—and the number of recurring patterns determined the item difficulty of the items. The children were asked to solve the items by actively constructing the correct puppet, consisting of eight tangible body parts, on a plasticised paper puppet shape. For each constructed puppet, the examiner recorded what the constructed puppet looked like, whether the answer was correct or incorrect, and how much time the child needed to solve the series. After each answer, the child was asked to explain why he or she had made that specific puppet.

Series completion: pre- and post-test

The pre- and post-test consisted of 12 items, ranging from easy to difficult. In order to create equal item difficulty levels for pre- and post-test and the two training sessions, fully equivalent items were used that differed only in gender, colour, or design, but not in periodicity or the number of recurring pattern transformations. In other words, pre- and post-test items did not differ in difficulty, but did differ in the specific items used: Changes were made to the gender, colour, and design of the puppets, in order to avoid identical items and with that a repeated practice effect. During pre- and post-test, the children did not receive any feedback regarding their performance.

Series completion: dynamic training

The training phase consisted of two training sessions—conducted with a one-week interval—and during each session, the child was provided with six items of increasing difficulty. As for the pre- and post-test, equal item difficulty levels were created for the two sessions by differentiating in gender, colour, or design, but not in periodicity or number of recurring pattern transformations. In order to complete the series, the children were again asked to construct the correct answer with the tangible body parts. For each item, the child received a general instruction: ‘Here you see another series of puppets. Can you make the correct puppet?’. If the child was not able to solve the item independently after the general instruction, they received help according to a standardised graduated prompting protocol that consisted of four prompts (Resing & Elliott, 2011; Resing et al., 2012b). A schematic structure of the prompting procedure with brief descriptions of the prompts is provided in Fig. 2. If the child failed to construct the correct answer after the general instruction, the first, metacognitive prompt was provided. If the child was unable to construct the correct answer after the metacognitive prompt, the second prompt—a more detailed cognitive prompt—was provided. After another incorrect answer the third prompt was provided. If the third prompt was not sufficient to help the child in constructing the correct answer, the fourth and final prompt was provided through which the child was guided to constructing the correct answer via a step-by-step instruction. The prompts were always provided in a linear fashion—starting with the metacognitive prompt and ending with the step-by-step prompt (see Fig. 2)—and were only provided after an incorrect answer (e.g. Resing & Elliott, 2011).
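The linear structure of the prompting protocol can be summarised in a short Python sketch. It only models the order in which help is offered; the prompt labels follow the description above (the third prompt is not characterised in the text, so it is labelled generically), and the `attempt` callback is a hypothetical stand-in for the child's answer.

```python
# Order of help as described in the text; "prompt 3" is not further specified there.
PROMPTS = ["metacognitive", "cognitive", "prompt 3", "step-by-step"]

def administer_item(attempt):
    """Run one training item and return the list of prompts that were given.

    attempt(prompt) -> bool: True if the child constructs the correct puppet.
    It is first called with None, representing the general instruction only.
    """
    prompts_given = []
    if attempt(None):  # solved independently after the general instruction
        return prompts_given
    for prompt in PROMPTS:
        # A prompt is only provided after an incorrect answer.
        prompts_given.append(prompt)
        if attempt(prompt):
            break
    return prompts_given

# Simulated child: fails twice, then succeeds after the cognitive prompt.
answers = iter([False, False, True])
given = administer_item(lambda prompt: next(answers))
print(given)  # ['metacognitive', 'cognitive']
```

The length of the returned list corresponds to the number of prompts for the item, and its last element to the most detailed type of help required.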

Scoring

To examine dynamic test scores at the group level, pre-test and post-test accuracy scores were used. In addition, the number of prompts children were provided with and the type of prompts they utilised were analysed. For the analyses requiring measures of children’s individual changes in performance as a result of cognitive training, a typicality analysis was used, categorising participants as ‘non-learners’, ‘learners’ or ‘high scorers’. This typicality analysis enabled subgroup-wise comparison of individual gains in performance. A pragmatic 1.5 standard deviation rule of thumb was used in order to classify participants according to their learner status (e.g. Waldorf et al., 2009; Wiedl et al., 2001). The overall pre-test performance was used to calculate the 1.5 SD value. Participants were classified as learners if they improved their performance from pre-test to post-test by at least 1.5 SD. Participants whose pre-test score fell between the pre-test upper level minus 1.5 SD and that upper level were identified as high scorers. Participants who did not meet either of these criteria were classified as non-learners. Multinomial logistic regression models were used in the analyses due to the categorical nature of the learner status variable. We did not make use of individual gain scores because these have often been criticised for insufficiently reflecting children’s pre-test scores and for not accounting for regression to the mean (e.g. Guthke & Wiedl, 1996).
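The 1.5 SD rule of thumb can be made concrete with a minimal Python sketch. Several details are assumptions that the text does not settle: the "upper level" is taken to be the maximum attainable score (12 items), the sample standard deviation is used, and the high-scorer criterion is checked before the learner criterion.

```python
from statistics import stdev

def classify(pre, post, max_score=12):
    """Label each child as 'high scorer', 'learner' or 'non-learner'.

    pre, post: pre-test and post-test accuracy scores in the same order.
    max_score: assumed 'upper level' of the pre-test (an assumption).
    """
    threshold = 1.5 * stdev(pre)  # based on overall pre-test performance
    labels = []
    for before, after in zip(pre, post):
        if before >= max_score - threshold:
            labels.append("high scorer")
        elif after - before >= threshold:
            labels.append("learner")
        else:
            labels.append("non-learner")
    return labels

# Hypothetical scores for four children.
labels = classify(pre=[2, 4, 6, 11], post=[8, 5, 6, 12])
print(labels)  # ['learner', 'non-learner', 'non-learner', 'high scorer']
```

In this toy data set the threshold is about 5.8 points, so only the first child's gain of 6 points counts as learning, while the fourth child starts too close to the ceiling to show such a gain.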

Results

Initial group comparisons

One-way analyses of variance were conducted for the practice-only and the graduated prompts condition to evaluate possible initial differences between children in the two conditions. Basic statistics can be found in Table 2. The children’s age (F(1,152) = 1.12, p = .29) and initial level of inductive reasoning as measured with the visual exclusion task (F(1,152) = .79, p = .39) did not significantly differ between conditions. No significant differences between conditions were found in scores on the M-WCST (F(1,152) = .23, p = .63) and the test of verbal fluency (F(1,152) = 2.13, p = .15). In addition, no significant age differences between conditions were found in performance on the M-WCST (F(1,152) = 1.25, p = .21) and the test of verbal fluency (F(1,152) = .63, p = .92).

Psychometric properties

Cronbach’s alpha was used as a measure of the internal consistency of the series completion pre- and post-test. A Cronbach’s α of .74 was found for the pre-test, and a Cronbach’s α of .78 was found for the post-test in both conditions (practice-control and training). Regarding the measures of cognitive flexibility, prior research demonstrated that moderate stability estimates were obtained for the M-WCST (number of perseverative errors = .64) (Lineweaver et al. 1999). The interrater consistency for the number of switches on the test of verbal fluency was examined by calculating a two-way random average intra-class correlation coefficient (ICC), which was found to be .81.
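For readers unfamiliar with the statistic, the standard formula for Cronbach's alpha, α = k/(k − 1) · (1 − Σ item variances / variance of total scores), can be sketched in Python. The data below are invented to show the calculation and are not taken from the study.

```python
from statistics import variance

def cronbach_alpha(scores):
    """scores: one row per child, one column per item (e.g. 0/1 accuracy)."""
    k = len(scores[0])
    item_variances = [variance([row[i] for row in scores]) for i in range(k)]
    total_variance = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)

# Toy data: two items that always agree, so alpha reaches its maximum of 1.
toy_scores = [[1, 1], [0, 0], [1, 1], [0, 0]]
alpha = cronbach_alpha(toy_scores)
```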

Effect of dynamic testing at the group level

To examine whether the graduated prompts training improved series completion skills over practice-control for the whole group of children, a repeated measures ANOVA was conducted with condition (training/control) as between-subjects factor and session (pre-test/post-test) as within-subjects factor. Basic statistics are displayed in Table 3. In line with our expectation, the results of the analysis showed that overall performance, regardless of condition, improved significantly from pre- to post-test (Wilks’s λ = .78, F(1,151) = 42.53, p < .001, ηp² = .22), but, more importantly, that children in the graduated prompts condition showed significantly more progress than children in the practice-control condition (Wilks’s λ = .92, F(1,151) = 13.38, p < .001, ηp² = .08) (see Fig. 3).
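The reported effect sizes can be recovered from the F statistics with the usual relation ηp² = (F · df_effect) / (F · df_effect + df_error). The following Python sketch is offered only as a consistency check on the reported values; the function and variable names are illustrative.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared computed from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)

session = partial_eta_squared(42.53, 1, 151)
session_by_condition = partial_eta_squared(13.38, 1, 151)
print(round(session, 2), round(session_by_condition, 2))  # 0.22 0.08

# For an effect with one degree of freedom, Wilks's lambda equals 1 - partial eta squared.
print(round(1 - session, 2), round(1 - session_by_condition, 2))  # 0.78 0.92
```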

Static inductive reasoning performance

We expected initial differences in static inductive reasoning performance to be related to cognitive flexibility, where children with better performance on the card sorting test and the verbal fluency test would perform better on the static pre-test as compared to children with lower flexibility performances (hypothesis 2). We examined the relationship between the pre-test scores and the two measures of cognitive flexibility: the percentage of perseverative errors on the M-WCST and the total number of switches on the verbal fluency task. The correlation between static inductive reasoning performance and percentage of perseverative errors was significant but modest (r = −.19, p = .017), whereas no significant correlation was found with number of switches (r = −.09, p = .27). These results partially supported our hypothesis.

Learning potential indices

To inspect the relationship between cognitive flexibility and children’s dynamic test performance, we analysed the predictive value of cognitive flexibility on two indices of potential for learning: learner status and instructional needs. Regarding the relationship with these dynamic measures of inductive reasoning (learner status and instructional needs during training), it was expected that the training procedure would attenuate the individual differences in cognitive flexibility skills through the graduated prompts provided (hypothesis 3a). In addition, because the prompts in the training procedure were argued to support weaker cognitive flexibility, it was expected that children with weaker flexibility scores required more prompts during training, and also more detailed help in the form of the cognitive-detailed and step-by-step prompts (hypotheses 3b and 3c).

Learner status

To establish the effect of the graduated prompts training on children’s learner status, a binary logistic regression analysis with learner status (learner vs. non-learner) as dependent variable and condition as factor was performed. Classification of subjects by learner status was based on a 1.5 standard deviation rule of thumb (e.g. Waldorf et al., 2009; Wiedl et al., 2001). This 1.5 SD value was calculated from the overall performance on the pre-test. Following this rule, learners were those subjects who improved their performance from pre-test to post-test by at least 1.5 SD. High scorers were identified as those children whose pre-test score fell between the pre-test upper level minus 1.5 SD and that upper level. Non-learners did not meet either of these criteria (see Table 4 for basic statistics). In the current study, 38 children were classified as learners, whereas 115 children were classified as non-learners.

The outcome of the regression analysis showed that the prediction model was significant (χ2(1) = 5.82, p = .016). Condition significantly predicted whether children were classified as learner or non-learner (b = .93, Wald χ2(1) = 5.52, p = .02). The odds ratio was 2.53, meaning that as condition changed from practice-control (0) to graduated prompts (1), the odds of a child being a learner rather than a non-learner increased by a factor of 2.53. In other words, the odds of being a learner were 2.53 times higher for a child in the graduated prompts condition than for a child in the practice-control condition, supporting the effectiveness of the training.
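The odds ratio follows directly from the regression coefficient, because in logistic regression the odds ratio for a one-unit change in a predictor is exp(b). A brief Python check using the reported coefficient:

```python
import math

b_condition = 0.93  # reported coefficient for condition (0 = practice-control, 1 = graduated prompts)
odds_ratio = math.exp(b_condition)
print(round(odds_ratio, 2))  # 2.53
```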

Next, the predictive value of cognitive flexibility on children’s learner status was examined. The regression analysis was conducted separately for children in the practice-control and training condition, because we wanted to explore potential differences in the relationship between learner status and flexibility. For the children in the practice-control condition, M-WCST performance predicted children’s learner status (χ2(1) = 4.53, p = .03), but this effect was not found for verbal fluency (VF) performance (χ2(1) = 0.14, p = .71). For the children in the graduated prompts condition, neither M-WCST performance (χ2(1) = .66, p = .42) nor VF performance (χ2(1) = 2.19, p = .14) added significantly to the initial model. In other words, better levels of cognitive flexibility as measured with the M-WCST predicted significantly greater progression from pre- to post-test for children in the practice-only condition, but not for children in the graduated prompts condition. These results partially supported the expectation of an attenuating effect of training on the influence of cognitive flexibility.

Number of prompts

A linear multiple regression analysis was performed to examine the relationship between cognitive flexibility scores and number of prompts during training, with the cognitive flexibility variables as the predictors and total number of prompts during training as dependent variable. A paired samples t test revealed a significant difference between the number of prompts required during training sessions 1 and 2 (t(79) = 3.85, p < .001), with fewer prompts needed during training session 2. The analyses regarding number of prompts were therefore conducted separately for training sessions 1 and 2 (for basic statistics see Table 5).

The overall regression model for training 1 was not significant (F(2, 77) = .65, p = .52), and none of the predictors added significantly to the model (M-WCST p = .48; VF p = .32). The overall model for training 2, however, was significant (F(2, 77) = 2.90, p = .04). Number of perseverative errors on the M-WCST contributed significantly to the prediction model (b = .23, t = 2.00, p = .05), but this was not the case for number of switches on the VF task (b = −.19, t = −1.68, p = .09). This result indicated that the number of prompts required during training session 2 was modestly related to children’s flexibility skills, partly supporting our hypothesis.

Types of prompts

Lastly, in addition to the number of prompts required during training, we also examined whether children with different flexibility scores would require different types of prompts during training. More specifically, we expected that children with lower flexibility scores would require more detailed cognitive and step-by-step prompts, as compared to children with higher flexibility scores. We conducted multiple regression analyses for children in the training condition with the cognitive flexibility variables as predictors and the number of times a child required a particular prompt as dependent variable. Again, all analyses were conducted separately for training sessions 1 and 2.

For the first set of analyses, we included the number of times a child required a metacognitive prompt as dependent variable, reflecting the child’s need for this particular type of help. The results showed that the models for both sessions 1 and 2 were non-significant (F(2, 77) = 2.03, p = .14; F(2, 77) = 1.31, p = .28) and that none of the cognitive flexibility measures was a significant predictor of the number of metacognitive prompts during the training sessions (session 1, M-WCST p = .09, VF p = .91; session 2, M-WCST p = .57, VF p = .78). Similar results were found in the second set of analyses in which the number of required (more detailed) cognitive prompts was included as the dependent variable. The models for both sessions 1 and 2 were non-significant (F(2, 77) = .45, p = .64; F(2, 77) = 2.30, p = .11) and none of the flexibility measures added significantly to the prediction model (session 1, M-WCST p = .44, VF p = .19; session 2, M-WCST p = .38, VF p = .23).

The last set of analyses, with the number of times a child required the step-by-step prompt as dependent variable, showed a non-significant model for session 1 (F(2, 77) = .03, p = .97) and non-significant additional predictive values for the flexibility measures (M-WCST p = .85; VF p = .86) as well. However, a significant model was found for training session 2 (F(2, 77) = 5.45, p = .01) in which both flexibility measures significantly contributed to the prediction. A higher percentage of perseverative errors on the M-WCST (representing less flexibility) was found to predict a greater use of the step-by-step prompts (b = .30, t = 2.76, p = .01). The same result was found for the number of switches on the VF task, where fewer switches (representing less flexibility) predicted a greater use of the step-by-step prompt (b = −.25, t = −2.27, p = .03).
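
To give a sense of the size of this effect, the sketch below converts an overall F statistic into the proportion of explained variance. It assumes an ordinary least squares model with an intercept, for which F = (R²/df_model) / ((1 − R²)/df_resid). The resulting R² is an implied value under that assumption and was not reported by the authors; `r_squared_from_f` is a hypothetical helper name.

```python
def r_squared_from_f(f_value: float, df_model: int, df_resid: int) -> float:
    """Recover R squared from the overall F test of an OLS model with intercept."""
    if f_value < 0 or df_model <= 0 or df_resid <= 0:
        raise ValueError("F must be non-negative and both df positive")
    # Rearranging F = (R2 / df_model) / ((1 - R2) / df_resid) for R2.
    explained = f_value * df_model
    return explained / (explained + df_resid)


# Session 2 step-by-step prompt model as reported in the text: F(2, 77) = 5.45.
implied_r2 = r_squared_from_f(5.45, 2, 77)
print(f"implied R^2 = {implied_r2:.3f}")
```

Under these assumptions, the two flexibility measures together would account for roughly 12% of the variance in the use of step-by-step prompts during session 2.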

In conclusion, the results indicated that children with lower flexibility scores did not need significantly more metacognitive or detailed cognitive prompts than children with higher flexibility scores, but that they did require more step-by-step prompts. These results partly support our hypothesis.

Discussion

In the present study, dynamic testing principles were applied to assess young children’s potential for learning. To increase our understanding of the cognitive processes involved in children’s ability to learn from feedback and instruction, the role of cognitive flexibility was examined. As an ultimate goal, the study aimed to provide suggestions for creating a better match between children’s individual patterns of abilities and the way they are taught in the classroom.

It was found that performances on the different measures of cognitive flexibility were related to different measures of potential for learning. Fewer perseverative errors on a modified version of the Wisconsin Card Sorting Test (M-WCST) were related to better static inductive reasoning performance, but this was not found for more efficient switching behaviour on a task of VF. Better performance on the M-WCST did predict a more efficient learner status—i.e. learner vs. non-learner—for children in the practice-only condition, but not for children in the graduated prompts condition. Additionally, differences in cognitive flexibility predicted different instructional needs during training. Scores on both measures of cognitive flexibility were found to be related to the types of prompts children needed most during the graduated prompts training, but only M-WCST performance was found to be related to the number of prompts children required. These results will now be discussed in greater detail.

Regarding the effectiveness of the training, it was found that the graduated prompts training led to a larger improvement in children’s series completion skills than practice only. Supported by previously reported results regarding the effectiveness of the training in improving children’s inductive reasoning skills (e.g. Resing and Elliott 2011; Resing et al. 2012b, 2017), we can conclude that the training increased children’s likelihood of being classified as a learner rather than a non-learner. Apparently, providing children with prompts during training, tapping into their zone of proximal development (Sternberg and Grigorenko 2002; Tunteler and Resing 2010), helped children to move beyond their actual level of development towards their level of potential development. Considering that these prompts are either metacognitive (targeted at the task approach) or cognitive (tailored to the specific items to be solved), provision of the prompts might have an activating effect on children’s metacognition, and possibly other aspects of their executive functioning, helping them structure their task approach. In earlier studies, for example, it has been shown that providing graduated prompts led children to shift from using more heuristic to more analytical strategies (Resing et al. 2012b, 2017).

With regard to the relationship between cognitive flexibility and the indices of potential for learning, it was revealed that cognitive flexibility as measured with the M-WCST was related to learner status of the untrained children, whereas this was not the case for the children trained with the graduated prompts procedure. This suggests that the graduated prompts provided during training compensated for lower cognitive flexibility. Earlier research findings showed that the graduated prompts training changed the relationship between working memory and performance from pre- to post-test (e.g. Swanson 1992) and that the intervention phase during dynamic testing contributed to comparable progression lines for children with lower and higher working memory skills (e.g. Resing et al. 2012b; Stevenson et al. 2013). The results of the current study seem to indicate that the graduated prompts training similarly compensated for lower cognitive flexibility skills, and perhaps for executive functioning in general. Of course, these findings need to be further investigated in future research, but they provide a first indication that deficits in cognitive flexibility can, partially, be compensated for by providing children with tailored training, which has considerable potential for use in educational practice.

In this light, the attenuating effect of training was supported by the finding that children with weaker flexibility skills required significantly more prompts during training than children with better flexibility skills, enabling the children with weaker flexibility skills to achieve the same level of learning as children with better skills. More specifically, children’s cognitive flexibility performance was related to both the number and type of prompts children required. The higher-level prompts (metacognitive and detailed cognitive) were equally required by children with higher and lower cognitive flexibility scores, but the step-by-step prompt proved to be particularly needed by children with weaker flexibility skills. This type of prompt, which aimed to guide the children towards the correct solution through a step-by-step procedure, best aided task performance for children with relatively lower flexibility scores. This finding is in line with previous research amongst children with autism spectrum disorder, who, it is argued, experience more difficulties in their flexibility skills (e.g. Shu et al. 2001; Nelson 1976). They often show rigid adherence to routines, problems in the ability to shift to a different thought or action, and problematic behaviours in response to failure or novel tasks (Corbett et al. 2009; Mueller et al. 2007). In addition, previous research on the effect of verbal strategy instruction in executive functioning tasks, including a task of cognitive flexibility, revealed that the application of verbal strategies had an enhancing or facilitating effect on cognitive flexibility performance (e.g. Cragg and Nation 2009; Fatzer and Roebers 2012; Kray et al. 2008). These findings appear to support the outcomes of our study. During the training procedure, the children were explicitly primed to verbalise their solution strategies. In line with these previous studies, it seems likely that our verbal strategy instructions supported children’s cognitive flexibility and, as a result, facilitated their inductive reasoning processes during the training phase.

A point for discussion concerns the finding that children’s performance on the verbal fluency task was not significantly related to static inductive reasoning performance or to the number of prompts required during training. We expected the number of switches—the index for cognitive flexibility derived from the verbal fluency test—to be related to children’s static inductive reasoning performance because cognitive switching has been argued to reflect the ability to efficiently switch attention—a relatively effortful process—between different task demands, and to adequately employ (newly acquired) different problem-solving strategies (e.g. Hurks 2012; Troyer 2000; Troyer et al. 1997). One explanation for this non-significant result might concern the validity of the verbal fluency task. Although widely used in the assessment of executive functioning, not much is known about its predictive validity, especially not for the relatively new measures derived from this instrument such as number of switches or number of clusters.

An alternative, or additional, explanation might be found in the suggestion that cognitive flexibility reflects a multi-faceted construct that includes the ability to shift between sets, maintain set, divide attention, learn from previously made mistakes, and show knowledge transfer (e.g. Anderson 2002; Crone et al. 2004b; Diamond 2013). Supported by the finding that M-WCST performance was related to static inductive reasoning performance, we might argue that cognitive switching as measured by the VF task did not sufficiently reflect the flexibility skills that were demanded during the static test of series completion performance.

In addition, it appeared that VF task performance was not related to children’s instructional needs during training, whereas M-WCST performance was found to significantly predict both the number and types of prompts required during training. A plausible explanation might be that children’s ability to utilise prompts given during training, a core component of cognitive flexibility, is a rather important part of the M-WCST, as children are explicitly informed about a change in categories. It seems plausible that children’s ability to respond to the switch cue of the M-WCST (i.e. whether or not they were able to switch between sets after they were told that the sorting rules had changed) was related to their ability to directly apply new strategies learned from the prompts given during the training phase of the dynamic test. The VF task, however, was considered a non-cued task (Crone et al. 2004a, 2004b), as children were not told to switch between clusters, which might explain why this flexibility task reflected different components of flexibility than those needed during training.

Although the literature supports the assessment of flexibility through card sorting tests and verbal fluency tests (e.g. Diamond 2013; Miyake et al. 2000; Troyer 2000), we would suggest including a more differentiated palette of cognitive flexibility measures, such as a non-verbal Stroop test (e.g. Diamond and Taylor 1996), in future research, because cognitive flexibility is often argued to consist of multiple sub-processes (e.g. Anderson 2002; Ionescu 2012).

Additionally, the series completion measures we used to obtain information about the potential for learning were dynamic, whereas the measures of cognitive flexibility were not. It appears that supporting children’s cognitive flexibility during dynamic testing can be important in facilitating the potential for learning. However, our understanding of this supportive relationship might be improved in future studies by applying dynamic versions of measures of cognitive flexibility.

Finally, it is suggested that a more explicit assessment of cognitive flexibility in dynamic testing procedures might increase the predictive validity of dynamic test results for the planning of successful learning at school. Including feedback and prompts in the training phase that explicitly target cognitive flexibility will hopefully optimise children’s potential for learning under dynamic testing conditions.

Nevertheless, less perseverative behaviour on the M-WCST was found, although only modestly, to be related to better static inductive reasoning performance and fewer prompts required during training, and weaker performance as measured by the M-WCST or the VF task predicted the need for more low-level prompts. In addition, the graduated prompts training attenuated the effect of cognitive flexibility performance, thereby increasing children’s learning opportunities.

For the field of educational psychology, this study suggests that cognitive flexibility is a cognitive skill with links to young children’s potential for learning. This result has important implications for teaching and instruction. In the classroom, children frequently rely on their cognitive flexibility to perform a range of activities. Weaker flexibility skills lead to failures in simple tasks that require switching between different task demands or strategies (e.g. switching between ‘minus’ and ‘plus’ sums) or between different classroom and learning activities (e.g. switching between math and spelling activities) (for a review, see Diamond 2013). Because learning is a process that builds gradually over time, any weakness in learning that results from poor cognitive flexibility skills may negatively affect subsequent learning successes. Supporting cognitive flexibility skills relies on an early detection of weaknesses or deficits, and on an effective intervention by the teacher or other educational professional. The finding that children with weaker cognitive flexibility skills have different instructional needs, and that some of them seem to benefit more from low-level step-by-step instruction, contributes to our understanding of effective teaching methods that might facilitate the unfolding of young children’s potential for learning. Additionally, the fact that children were asked to verbalise their problem-solving strategies during the training phase of the dynamic test could have had an enhancing effect on children’s dynamic test performance. We did not analyse this further, but in future research we will examine whether explicitly prompting children to verbalise their problem-solving strategies supports executive functioning and, as a result, enhances measures of the potential for learning.

More importantly, the results underline the importance of dynamic testing for children who seem to be hindered by their difficulties in switching between different task or cognitive demands, or in learning from feedback or previously made mistakes. Dynamic testing with a graduated prompts procedure appears to support at least some of these children in taking a step towards showing their actual potential for learning. Dynamic testing, moreover, seems to be less biased against children with executive functioning deficits than more traditional instruments. Therefore, the present study reminds us that it is important to consider testing children dynamically rather than statically, especially when difficulties with their executive functions are expected.

References

Agostino, A., Johnson, J., & Pascual-Leone, J. (2010). Executive functions underlying multiplicative reasoning: problem type matters. Journal of Experimental Child Psychology, 105(4), 286–305.

Anderson, P. (2002). Assessment and development of executive function (EF) during childhood. Child Neuropsychology, 8(2), 71–82. https://doi.org/10.1076/chin.8.2.71.8724.

Berg, E. A. (1948). A simple objective technique for measuring flexibility in thinking. The Journal of General Psychology, 39(1), 15–22. https://doi.org/10.1080/00221309.1948.9918159.

Bosma, T., Hessels, M. G., & Resing, W. C. (2012). Teachers’ preferences for educational planning: Dynamic testing, teaching experience and teachers’ sense of efficacy. Teaching and Teacher Education, 28(4), 560–567. https://doi.org/10.1016/j.tate.2012.01.007.

Bosma, T., & Resing, W. C. M. (2006). Dynamic assessment and a reversal task: A contribution to needs-based assessment. Educational and Child Psychology, 23(3), 81. https://doi.org/10.1891/1945-8959.9.2.91.

Bull, R., & Scerif, G. (2001). Executive functioning as a predictor of children’s mathematics ability: inhibition, switching, and working memory. Developmental Neuropsychology, 19(3), 273–293. https://doi.org/10.1207/S15326942DN1903.

Burns, M. S., Delclos, V. R., Vye, N. J., & Sloan, K. (1992). Changes in cognitive strategies in dynamic assessment. International Journal of Dynamic Assessment & Instruction, 2(2), 45–54. https://doi.org/10.1007/978-1-4612-4392-2_13.

Caffrey, E., Fuchs, D., & Fuchs, L. S. (2008). The predictive validity of dynamic assessment: A review. The Journal of Special Education, 41(4), 254–270. https://doi.org/10.1177/0022466907310366.

Campione, J. C., & Brown, A. L. (1987). Linking dynamic assessment with school achievement. In C. S. Lidz (Ed.), Dynamic assessment: an interactional approach to evaluating learning potential (pp. 82–109). New York: Guilford Press.

Cianchetti, C., Corona, S., Foscoliano, M., Contu, D., & Sannio-Fancello, G. (2007). Modified Wisconsin Card Sorting Test (MCST, MWCST): normative data in children 4-13 years old, according to classical and new types of scoring. The Clinical Neuropsychologist, 21(3), 456–478. https://doi.org/10.1080/13854040600629766.

Corbett, B. A., Constantine, L. J., Hendren, R., Rocke, D., & Ozonoff, S. (2009). Examining executive functioning in children with autism spectrum disorder, attention deficit hyperactivity disorder and typical development. Psychiatry Research, 166(2), 210–222. https://doi.org/10.1016/j.psychres.2008.02.005.

Cragg, L., & Nation, K. (2009). Language and the development of cognitive control. Topics in Cognitive Science, 2, 631–642.

Crone, E. A., Jennings, J. R., & Van der Molen, M. W. (2004a). Developmental change in feedback processing as reflected by phasic heart rate changes. Developmental Psychology, 40(6), 1228–1238.

Crone, E. A., Ridderinkhof, K., Worm, M., Somsen, R. J., & Van Der Molen, M. W. (2004b). Switching between spatial stimulus–response mappings: a developmental study of cognitive flexibility. Developmental Science, 7(4), 443–455. https://doi.org/10.1111/j.1467-7687.2004.00365.

Csapó, B. (1997). The development of inductive reasoning: cross-sectional assessments in an educational context. International Journal of Behavioral Development, 20(4), 609–626. https://doi.org/10.1080/016502597385081.

Davidson, M. C., Amso, D., Anderson, L. C., & Diamond, A. (2006). Development of cognitive control and executive functions from 4 to 13 years: evidence from manipulations of memory, inhibition, and task switching. Neuropsychologia, 44(11), 2037–2078.

Deak, G. O., & Wiseheart, M. (2015). Cognitive flexibility in young children: general or task-specific capacity? Journal of Experimental Child Psychology, 138, 31–53.

Diamond, A. (2013). Executive functions. Annual Review of Psychology, 64, 135–168. https://doi.org/10.1146/annurev-psych-113011-143750.

Diamond, A., & Taylor, C. (1996). Development of an aspect of executive control: development of the abilities to remember what I said and to “do as I say, not as I do”. Developmental Psychobiology, 29(4), 315–334.

Elliott, J. G., Grigorenko, E. L., & Resing, W. C. M. (2010). Dynamic assessment: The need for a dynamic approach. In P. Peterson, E. Baker, & B. McGaw (Eds.), International Encyclopedia of Education (Vol. 3, pp. 220–225). Amsterdam, The Netherlands: Elsevier. https://doi.org/10.1016/b978-0-08-044894-7.00311-0.

Fabio, R. A. (2005). Dynamic assessment of intelligence is a better reply to adaptive behavior and cognitive plasticity. The Journal of General Psychology, 132(1), 41–64. https://doi.org/10.3200/GENP.132.1.41-66.

Fatzer, S. T., & Roebers, C. M. (2012). Language and executive functioning: children’s benefit from induced verbal strategies in different tasks. Journal of Educational and Developmental Psychology, 3(1), 1–9.

Garon, N., Bryson, S. E., & Smith, I. M. (2008). Executive function in preschoolers: A review using an integrative framework. Psychological Bulletin, 134(1), 31–60. https://doi.org/10.1037/0033-2909.134.1.31.

Goswami, U. (2001). Analogical reasoning in children. In D. Gentner, K. J. Holyoak, & B. N. Kokinov (Eds.), The analogical mind: perspectives from cognitive science (pp. 437–470). Cambridge: MIT Press.

Grigorenko, E. L. (2009). Dynamic assessment and response to intervention: two sides of one coin. Journal of Learning Disabilities, 42(2), 111–132. https://doi.org/10.1177/0022219408326207.

Gupta, R., Kar, B. R., & Srinivasan, N. (2009). Development of task switching and post-error-slowing in children. Behavioral and Brain Functions, 5(1), 1–13.

Guthke, J., & Wiedl, K. H. (1996). Dynamisches Testen [Dynamic testing]. Göttingen, Germany: Hogrefe.

Holzman, T. G., Pellegrino, J. W., & Glaser, R. (1983). Cognitive variables in series completion. Journal of Educational Psychology, 75(4), 603–618. https://doi.org/10.1037/0022-0663.75.4.603.

Hurks, P. P. M. (2012). Does instruction in semantic clustering and switching enhance verbal fluency in children? The Clinical Neuropsychologist, 26(6), 1019–1037. https://doi.org/10.1080/13854046.2012.708361.

Hurks, P. P. M., Hendriksen, J. G. M., Vles, J. S. H., Kalff, A. C., Feron, F. J. M., Kroes, M., van Zeben, T. M. C. B., Steyaert, J., & Jolles, J. (2004). Verbal fluency over time as a measure of automatic and controlled processing in children with ADHD. Brain and Cognition, 55(3), 535–544. https://doi.org/10.1016/j.bandc.2004.03.003.

Hurks, P. P. M., Schrans, D., Meijs, C., Wassenberg, R., Feron, F. J. M., & Jolles, J. (2010). Developmental changes in semantic verbal fluency: analyses of word productivity as a function of time, clustering, and switching. Child Neuropsychology: A Journal on Normal and Abnormal Development in Childhood and Adolescence, 16(4), 366–387. https://doi.org/10.1080/09297041003671184.

Ionescu, T. (2012). Exploring the nature of cognitive flexibility. New Ideas in Psychology, 30(2), 190–200.

Jeltova, I., Birney, D., Fredine, N., Jarvin, L., Sternberg, R. J., & Grigorenko, E. L. (2011). Making instruction and assessment responsive to diverse students’ progress: Group-administered dynamic assessment in teaching mathematics. Journal of Learning Disabilities, 44(4), 381–395. https://doi.org/10.1177/0022219411407868.

Klauer, K. J., & Phye, G. D. (2008). Inductive reasoning: a training approach. Review of Educational Research, 78(1), 85–123. https://doi.org/10.3102/0034654307313402.

Kozulin, A., & Garb, E. (2002). Dynamic assessment of EFL text comprehension. School Psychology International, 23(1), 112–127. https://doi.org/10.1177/0143034302023001733.

Kray, J., Eber, J., & Karbach, J. (2008). Verbal self-instructions in task switching: a compensatory tool for action-control deficits in childhood and old age? Developmental Science, 11(2), 223–236.

Lineweaver, T. T., Bondi, M. W., Thomas, R. G., & Salmon, D. P. (1999). A normative study of Nelson’s (1976) modified version of the Wisconsin Card Sorting Test in healthy older adults. The Clinical Neuropsychologist, 13(3), 328–347. https://doi.org/10.1076/clin.13.3.328.1745.

Miller, E. K., & Cohen, J. D. (2001). An integrative theory of prefrontal cortex function. Annual Review of Neuroscience, 24(1), 167–202. https://doi.org/10.1146/annurev.neuro.24.1.167.

Miyake, A., Friedman, N. P., Emerson, M. J., Witzki, A. H., Howerter, A., & Wager, T. D. (2000). The unity and diversity of executive functions and their contributions to complex “frontal lobe” tasks: a latent variable analysis. Cognitive Psychology, 41(1), 49–100. https://doi.org/10.1006/cogp.1999.0734.

Mueller, M. M., Palkovic, C. M., & Maynard, C. S. (2007). Errorless learning: review and practical application for teaching children with pervasive developmental disorders. Psychology in the Schools, 44(7), 691–700.

Nelson, H. E. (1976). A modified card sorting test sensitive to frontal lobe defects. Cortex, 12(4), 313–324. https://doi.org/10.1016/S0010-9452(76)80035-4.

Obonsawin, M. C., Crawford, J. R., Page, J., Chalmers, P., Low, G., & Marsh, P. (1999). Performance on the Modified Card Sorting Test by normal, healthy individuals: relationship to general intellectual ability and demographic variables. British Journal of Clinical Psychology, 38(1), 27–41. https://doi.org/10.1348/014466599162647.

Pameijer, N., & Van Beukering, T. (2015). Handelingsgerichte diagnostiek in het onderwijs. Een praktijkmodel voor diagnostiek en advisering [Needs based assessment in education. A practice model for assessment and recommendation]. Leuven, BE: Acco.

Pellegrino, J. W. (1985). Inductive reasoning ability. In R. J. Sternberg (Ed.), Human abilities: An information-processing approach (pp. 195–225). New York, NY: W. H. Freeman.

Resing, W. C. M. (2013). Dynamic testing and individualized instruction: Helpful in cognitive education? Journal of Cognitive Education and Psychology, 12(1), 81–95. https://doi.org/10.1891/1945-8959.12.1.81.

Resing, W. C. M., Bleichrodt, N., Drenth, P. J. D. D., & Zaal, J. N. (2012a). Revisie Amsterdamse Kinder Intelligentie Test-2 (RAKIT-2). Gebruikershandleiding [Revised Amsterdam Children’s Intelligence Test-2 (RAKIT-2). User manual]. Amsterdam, The Netherlands: Pearson.

Resing, W. C. M., Xenidou-Dervou, I., Steijn, W. M. P., & Elliott, J. G. (2012b). A “picture” of children’s potential for learning: Looking into strategy changes and working memory by dynamic testing. Learning and Individual Differences, 22(1), 144–150. https://doi.org/10.1016/j.lindif.2011.11.002.

Resing, W. C. M., & Elliott, J. G. (2011). Dynamic testing with tangible electronics: Measuring children’s change in strategy use with a series completion task. British Journal of Educational Psychology, 81(4), 579–605. https://doi.org/10.1348/2044-8279.002006.

Resing, W. C. M., Touw, K. W. J., Veerbeek, J., & Elliott, J. G. (2017). Progress in the inductive strategy-use of children from different ethnic backgrounds: A study employing dynamic testing. Educational Psychology, 37(2), 173–191. https://doi.org/10.1080/01443410.2016.1164300.

Robinson-Zañartu, C., & Carlson, J. (2013). Dynamic assessment. In K. F. Geisinger (Ed.), APA handbook of testing and assessment in psychology (pp. 149–167). Washington, DC: American Psychological Association.

Roca, M., Parr, A., Thompson, R., Woolgar, A., Torralva, T., Antoun, N., Manes, F., & Duncan, J. (2010). Executive function and fluid intelligence after frontal lobe lesions. Brain, 133(1), 234–247. https://doi.org/10.1093/brain/awp269.

Rock, M. L., Gregg, M., Ellis, E., & Gable, R. A. (2008). REACH: a framework for differentiating classroom instruction. Preventing School Failure: Alternative Education for Children and Youth, 52(2), 31–47. https://doi.org/10.3200/PSFL.52.2.31-47.

Ropovik, I. (2014). Do executive functions predict the ability to learn problem-solving principles? Intelligence, 44(1), 64–74. https://doi.org/10.1016/j.intell.2014.03.002.

Schretlen, D. J. (2010). Modified Wisconsin Card Sorting Test (M-WCST). Lutz: Psychological Assessment Resources.

Shu, B. C., Tien, A. Y., & Chen, B. C. (2001). Executive function deficits in non-retarded autistic children. Autism, 5(2), 165–174. https://doi.org/10.1177/1362361301005002006.

Sternberg, R. J., & Gardner, M. K. (1983). Unities in inductive reasoning. Journal of Experimental Psychology: General, 112, 80–116. https://doi.org/10.1037//0096-3445.112.1.80.

Sternberg, R. J., & Grigorenko, E. L. (2002). Dynamic testing: the nature and measurement of learning potential. New York: Cambridge University Press.

Stevenson, C. E., Heiser, W. J., & Resing, W. C. M. (2013). Working memory as a moderator of training and transfer of analogical reasoning in children. Contemporary Educational Psychology, 38(3), 159–169. https://doi.org/10.1016/j.cedpsych.2013.02.001.

Swanson, H. L. (1992). Generality and modifiability of working memory among skilled and less skilled readers. Journal of Educational Psychology, 84(4), 473–488.

Swanson, H. L. (2006). Cross-sectional and incremental changes in working memory and mathematical problem solving. Journal of Educational Psychology, 98(2), 265–281. https://doi.org/10.1037/0022-0663.98.2.265.

Swanson, H. L. (2011). Dynamic testing, working memory, and reading comprehension growth in children with reading disabilities. Journal of Learning Disabilities, 44(4), 358–371. https://doi.org/10.1177/0022219411407866.

Troyer, A. K. (2000). Normative data for clustering and switching on verbal fluency tasks. Journal of Clinical and Experimental Neuropsychology, 22(3), 370–378. https://doi.org/10.1076/1380-3395(200006.

Troyer, A. K., Moscovitch, M., & Winocur, G. (1997). Clustering and switching as two components of verbal fluency: evidence from younger and older healthy adults. Neuropsychology, 11(1), 138–146. https://doi.org/10.1037/0894-4105.11.1.138.

Tunteler, E., & Resing, W. C. M. (2010). The effects of self- and other-scaffolding on progression and variation in children’s geometric analogy performance: A microgenetic research. Journal of Cognitive Education and Psychology, 9(3), 251–272. https://doi.org/10.1891/1945-8959.9.3.251.

Tzuriel, D. (2001). Dynamic assessment of young children. New York, NY: Kluwer Academic.

Van der Sluis, S., De Jong, P. F., & Van der Leij, A. (2007). Executive functioning in children, and its relations with reasoning, reading, and arithmetic. Intelligence, 35(5), 427–449. https://doi.org/10.1016/j.intell.2006.09.001.

Waldorf, M., Wiedl, K. H., & Schöttke, H. (2009). On the concordance of three reliable change indexes: An analysis applying the dynamic Wisconsin Card Sorting Test. Journal of Cognitive Education and Psychology, 8(1), 63–80. https://doi.org/10.1891/1945-8959.8.1.63.

Wiedl, K. H., Wienöbst, J., Schöttke, H. H., Green, M. F., & Nuechterlein, K. H. (2001). Attentional characteristics of schizophrenia patients differing in learning proficiency on the Wisconsin Card Sorting Test. Schizophrenia Bulletin, 27(4), 687–695. https://doi.org/10.1093/oxfordjournals.schbul.a006907.

Yeniad, N., Malda, M., Mesman, J., Van IJzendoorn, M. H., & Pieper, S. (2013). Shifting ability predicts math and reading performance in children: a meta-analytical study. Learning and Individual Differences, 23, 1–9.

Zelazo, P. D. (2006). The Dimensional Change Card Sort (DCCS): a method of assessing executive function in children. Nature Protocols, 1(1), 297–301. https://doi.org/10.1038/nprot.2006.46.

Ethics declarations

Written informed consent was obtained from all parents and schools. All procedures, including the informed consent and the recruitment of participants, were reviewed and approved by the institutional Committee Ethics in Psychology (CEP).

Additional information

Femke Stad. Department of Developmental and Educational Psychology, Leiden University, Leiden, The Netherlands

Current themes of research:

Dynamic testing and cognitive flexibility.

Most relevant publications in the field of Psychology of Education:

Bosma, T., Stevenson, C. E., & Resing, W. C. M. (2017, online). Differences in need for instruction: dynamic testing in children with arithmetic difficulties. Journal of Education and Training Studies, 5, xx. DOI.org/10.11114/jets.v5i6.2326

Vogelaar, B., & Resing, W.C.M. (accepted, 2017). Changes over time and transfer of analogy-problem solving of gifted and non-gifted children in a dynamic testing setting. Educational Psychology.

Vogelaar, B., Bakker, M., Hoogeveen, L., & Resing, W.C.M. (Open access, 2017). Dynamic testing of gifted and average-ability children’s analogy problem-solving: does executive functioning play a role? Psychology in the Schools. Psychol Schs. 2017;00:1–15. https://doi.org/10.1002/pits.22032

Veerbeek, J., Verhaegh, J., Elliott, J.G., & Resing, W.C.M. (Online, January 2017). Process oriented measurement using electronic tangibles. Journal of Education and Learning, 155–170. dx.doi.org/10.5539/jel.v6n2p155

Touw, K.W.J., Vogelaar, B., Verdel, R., Bakker, M., & Resing, W.C.M. (2017). Children’s progress in solving figural analogies: are outcomes of dynamic testing helpful for teachers? Educational and Child Psychology, 34, 21–38.

Resing, W.C.M., Touw, K.W.J., Veerbeek, J., & Elliott, J.G. (2017). Progress in the inductive strategy use of children from different ethnic backgrounds: a study employing dynamic testing. Educational Psychology, 37(2), 173–191. https://doi.org/10.1080/01443410.2016.1164300

Resing, W.C.M., Laughlan, F., & Elliott, J.G. (2017). Guest-editorial: bridging the gap between psychological assessment and educational instruction. Educational and Child Psychology, 34 (1), 6–8.

Resing, W.C.M., Bakker, M., Pronk, C.E., & Elliott, J.G. (online September 2016). Progression paths in children’s problem solving: the influence of dynamic testing, initial variability, and working memory. Journal of Experimental Child Psychology, 153 (2017), 83–109. dx.doi.org/10.1016/j.jcep.2016.09.004

Vogelaar, B., Bakker, M., Elliott, J.G., & Resing, W.C.M. (online November 2016). Dynamic testing and test anxiety amongst gifted and average-ability children. British Journal of Educational Psychology. Online published November 24, 2016. dx.doi:10.1111/bjep.12136

Vogelaar, B., & Resing, W.C.M. (2016). Talented and non-gifted children’s progression in analogical reasoning in a dynamic test setting. Journal of Cognitive Education and Psychology, 15, 349–367. dx.doi:10.1891/1945-8959.15.3.349

Resing, W.C.M., Bakker, M., Pronk, C.E., & Elliott, J.G. (2016). Dynamic testing and transfer: an examination of children’s problem solving strategies. Learning and Individual Differences, 49, 110–119. dx.doi.org/10.1016/j.lindif.2016.05.011

Cole, R., Gibbs, S. & Resing, W.C.M. (2016). Guest editorial: neuroscience and educational psychology. Educational and Child Psychology, 33 (1), 5–7.

Stevenson, C.E., Heiser, W., & Resing, W.C.M. (2016). Dynamic testing of analogical reasoning in 5-6 year olds: multiple choice versus constructed training. Journal of Psychoeducational Assessment, 34(6), 550–565. dx.doi: 10.1177/0734282915622912

Stevenson, C.E., Heiser, W.J. & Resing, W.C.M. (2016). Assessing cognitive potential of children with culturally diverse backgrounds. Learning and Individual Differences, 47, 27–36. https://doi.org/10.1016/j.lindif.2015.12.025

Elliott, J.G., & Resing, W.C.M. (2015). Can intelligence testing inform educational intervention for children with reading disability? Journal of intelligence, 3(4), 137–157. https://doi.org/10.3390/jintelligence3040137

Resing, W.C.M., Tunteler, E., & Elliott, J.G. (2015). The effect of electronic scaffolding on the progression of tangible series completion in children: a microgenetic study. Journal of Cognitive Education and Psychology, 14, 231–251.

Karl Wiedl. Department of Clinical Psychology, University of Osnabrück, Osnabrück, Germany

Current themes of research:

Dynamic testing in various populations.

Most relevant publications in the field of Psychology of Education:

Bosma, T., Stevenson, C. E., & Resing, W. C. M. (2017, online). Differences in need for instruction: dynamic testing in children with arithmetic difficulties. Journal of Education and Training Studies, 5, xx. DOI.org/10.11114/jets.v5i6.2326

Vogelaar, B., & Resing, W.C.M. (accepted, 2017). Changes over time and transfer of analogy-problem solving of gifted and non-gifted children in a dynamic testing setting. Educational Psychology.

Vogelaar, B., Bakker, M., Hoogeveen, L., & Resing, W.C.M. (Open access, 2017). Dynamic testing of gifted and average-ability children’s analogy problem-solving: does executive functioning play a role? Psychology in the Schools. Psychol Schs. 2017;00:1–15. https://doi.org/10.1002/pits.22032

Veerbeek, J., Verhaegh, J., Elliott, J.G., & Resing, W.C.M. (Online, January 2017). Process oriented measurement using electronic tangibles. Journal of Education and Learning, 155–170. dx.doi.org/10.5539/jel.v6n2p155

Touw, K.W.J., Vogelaar, B., Verdel, R., Bakker, M., & Resing, W.C.M. (2017). Children’s progress in solving figural analogies: are outcomes of dynamic testing helpful for teachers? Educational and Child Psychology, 34, 21–38.

Resing, W.C.M., Touw, K.W.J., Veerbeek, J., & Elliott, J.G. (2017). Progress in the inductive strategy use of children from different ethnic backgrounds: a study employing dynamic testing. Educational Psychology, 37(2), 173–191. https://doi.org/10.1080/01443410.2016.1164300

Resing, W.C.M., Laughlan, F., & Elliott, J.G. (2017). Guest-editorial: bridging the gap between psychological assessment and educational instruction. Educational and Child Psychology, 34 (1), 6–8.

Resing, W.C.M., Bakker, M., Pronk, C.E., & Elliott, J.G. (online September 2016). Progression paths in children’s problem solving: the influence of dynamic testing, initial variability, and working memory. Journal of Experimental Child Psychology, 153 (2017), 83–109. dx.doi.org/10.1016/j.jcep.2016.09.004

Vogelaar, B., Bakker, M., Elliott, J.G., & Resing, W.C.M. (online November 2016). Dynamic testing and test anxiety amongst gifted and average-ability children. British Journal of Educational Psychology. Online published November 24, 2016. dx.doi:10.1111/bjep.12136

Vogelaar, B., & Resing, W.C.M. (2016). Talented and non-gifted children’s progression in analogical reasoning in a dynamic test setting. Journal of Cognitive Education and Psychology, 15, 349–367. dx.doi:10.1891/1945-8959.15.3.349

Resing, W.C.M., Bakker, M., Pronk, C.E., & Elliott, J.G. (2016). Dynamic testing and transfer: an examination of children’s problem solving strategies. Learning and Individual Differences, 49, 110–119. dx.doi.org/10.1016/j.lindif.2016.05.011

Cole, R., Gibbs, S. & Resing, W.C.M. (2016). Guest editorial: neuroscience and educational psychology. Educational and Child Psychology, 33 (1), 5–7.

Stevenson, C.E., Heiser, W., & Resing, W.C.M. (2016). Dynamic testing of analogical reasoning in 5-6 year olds: multiple choice versus constructed training. Journal of Psychoeducational Assessment, 34(6), 550–565. dx.doi: 10.1177/0734282915622912

Stevenson, C.E., Heiser, W.J. & Resing, W.C.M. (2016). Assessing cognitive potential of children with culturally diverse backgrounds. Learning and Individual Differences, 47, 27–36. https://doi.org/10.1016/j.lindif.2015.12.025

Elliott, J.G., & Resing, W.C.M. (2015). Can intelligence testing inform educational intervention for children with reading disability? Journal of intelligence, 3(4), 137–157. https://doi.org/10.3390/jintelligence3040137

Resing, W.C.M., Tunteler, E., & Elliott, J.G. (2015). The effect of electronic scaffolding on the progression of tangible series completion in children: a microgenetic study. Journal of Cognitive Education and Psychology, 14, 231–251.

Bart Vogelaar. Department of Developmental and Educational Psychology, Leiden University, Leiden, The Netherlands

Current themes of research:

Context of giftedness.

Most relevant publications in the field of Psychology of Education:

Bosma, T., Stevenson, C. E., & Resing, W. C. M. (2017, online). Differences in need for instruction: dynamic testing in children with arithmetic difficulties. Journal of Education and Training Studies, 5, xx. DOI.org/10.11114/jets.v5i6.2326

Vogelaar, B., & Resing, W.C.M. (accepted, 2017). Changes over time and transfer of analogy-problem solving of gifted and non-gifted children in a dynamic testing setting. Educational Psychology.

Vogelaar, B., Bakker, M., Hoogeveen, L., & Resing, W.C.M. (Open access, 2017). Dynamic testing of gifted and average-ability children’s analogy problem-solving: does executive functioning play a role? Psychology in the Schools. Psychol Schs. 2017;00:1–15. https://doi.org/10.1002/pits.22032

Veerbeek, J., Verhaegh, J., Elliott, J.G., & Resing, W.C.M. (Online, January 2017). Process oriented measurement using electronic tangibles. Journal of Education and Learning, 155–170. dx.doi.org/10.5539/jel.v6n2p155

Touw, K.W.J., Vogelaar, B., Verdel, R., Bakker, M., & Resing, W.C.M. (2017). Children’s progress in solving figural analogies: are outcomes of dynamic testing helpful for teachers? Educational and Child Psychology, 34, 21–38.

Resing, W.C.M., Touw, K.W.J., Veerbeek, J., & Elliott, J.G. (2017). Progress in the inductive strategy use of children from different ethnic backgrounds: a study employing dynamic testing. Educational Psychology, 37(2), 173–191. https://doi.org/10.1080/01443410.2016.1164300

Resing, W.C.M., Laughlan, F., & Elliott, J.G. (2017). Guest-editorial: bridging the gap between psychological assessment and educational instruction. Educational and Child Psychology, 34 (1), 6–8.

Resing, W.C.M., Bakker, M., Pronk, C.E., & Elliott, J.G. (online September 2016). Progression paths in children’s problem solving: the influence of dynamic testing, initial variability, and working memory. Journal of Experimental Child Psychology, 153 (2017), 83–109. dx.doi.org/10.1016/j.jcep.2016.09.004

Vogelaar, B., Bakker, M., Elliott, J.G., & Resing, W.C.M. (online November 2016). Dynamic testing and test anxiety amongst gifted and average-ability children. British Journal of Educational Psychology. Online published November 24, 2016. dx.doi:10.1111/bjep.12136

Vogelaar, B., & Resing, W.C.M. (2016). Talented and non-gifted children’s progression in analogical reasoning in a dynamic test setting. Journal of Cognitive Education and Psychology, 15, 349–367. dx.doi:10.1891/1945-8959.15.3.349

Resing, W.C.M., Bakker, M., Pronk, C.E., & Elliott, J.G. (2016). Dynamic testing and transfer: an examination of children’s problem solving strategies. Learning and Individual Differences, 49, 110–119. dx.doi.org/10.1016/j.lindif.2016.05.011

Cole, R., Gibbs, S. & Resing, W.C.M. (2016). Guest editorial: neuroscience and educational psychology. Educational and Child Psychology, 33 (1), 5–7.

Stevenson, C.E., Heiser, W., & Resing, W.C.M. (2016). Dynamic testing of analogical reasoning in 5-6 year olds: multiple choice versus constructed training. Journal of Psychoeducational Assessment, 34(6), 550–565. dx.doi: 10.1177/0734282915622912

Stevenson, C.E., Heiser, W.J. & Resing, W.C.M. (2016). Assessing cognitive potential of children with culturally diverse backgrounds. Learning and Individual Differences, 47, 27–36. https://doi.org/10.1016/j.lindif.2015.12.025

Elliott, J.G., & Resing, W.C.M. (2015). Can intelligence testing inform educational intervention for children with reading disability? Journal of intelligence, 3(4), 137–157. https://doi.org/10.3390/jintelligence3040137

Resing, W.C.M., Tunteler, E., & Elliott, J.G. (2015). The effect of electronic scaffolding on the progression of tangible series completion in children: a microgenetic study. Journal of Cognitive Education and Psychology, 14, 231–251.

Merel Bakker. Department of Developmental and Educational Psychology, Leiden University, Leiden, The Netherlands

Current themes of research:

Dynamic testing in the context of giftedness.

Most relevant publications in the field of Psychology of Education:

Bosma, T., Stevenson, C. E., & Resing, W. C. M. (2017, online). Differences in need for instruction: dynamic testing in children with arithmetic difficulties. Journal of Education and Training Studies, 5, xx. DOI.org/10.11114/jets.v5i6.2326

Vogelaar, B., & Resing, W.C.M. (accepted, 2017). Changes over time and transfer of analogy-problem solving of gifted and non-gifted children in a dynamic testing setting. Educational Psychology.

Vogelaar, B., Bakker, M., Hoogeveen, L., & Resing, W.C.M. (Open access, 2017). Dynamic testing of gifted and average-ability children’s analogy problem-solving: does executive functioning play a role? Psychology in the Schools. Psychol Schs. 2017;00:1–15. https://doi.org/10.1002/pits.22032

Veerbeek, J., Verhaegh, J., Elliott, J.G., & Resing, W.C.M. (Online, January 2017). Process oriented measurement using electronic tangibles. Journal of Education and Learning, 155–170. dx.doi.org/10.5539/jel.v6n2p155

Touw, K.W.J., Vogelaar, B., Verdel, R., Bakker, M., & Resing, W.C.M. (2017). Children’s progress in solving figural analogies: are outcomes of dynamic testing helpful for teachers? Educational and Child Psychology, 34, 21–38.

Resing, W.C.M., Touw, K.W.J., Veerbeek, J., & Elliott, J.G. (2017). Progress in the inductive strategy use of children from different ethnic backgrounds: a study employing dynamic testing. Educational Psychology, 37(2), 173–191. https://doi.org/10.1080/01443410.2016.1164300

Resing, W.C.M., Laughlan, F., & Elliott, J.G. (2017). Guest-editorial: bridging the gap between psychological assessment and educational instruction. Educational and Child Psychology, 34 (1), 6–8.

Resing, W.C.M., Bakker, M., Pronk, C.E., & Elliott, J.G. (online September 2016). Progression paths in children’s problem solving: the influence of dynamic testing, initial variability, and working memory. Journal of Experimental Child Psychology, 153 (2017), 83–109. dx.doi.org/10.1016/j.jcep.2016.09.004