Abstract

Understanding the structure of financial markets requires determining the functional relations between financial variables. In this respect, important variables are the trading activity, defined here as the number of trades N, the traded volume V, the asset price P, the squared volatility \(\sigma ^2\), the bid-ask spread S and the cost of trading C. Different lines of reasoning result in simple proportionality relations (“scaling laws”) between these variables. A basic proportionality is established between the trading activity and the squared volatility, i.e., \(N \sim \sigma ^2\). More sophisticated relations are the so-called 3/2-law \(N^{3/2} \sim \sigma P V /C\) and the intriguing scaling \(N \sim (\sigma P/S)^2\). We prove that these “scaling laws” are the only possible relations for the considered sets of variables by means of a well-known argument from physics: dimensional analysis. Moreover, we provide empirical evidence based on data from the NASDAQ stock exchange showing that the sophisticated relations hold with a certain degree of universality. Finally, we discuss the time scaling of the volatility \(\sigma \), which turns out to be more subtle than one might naively expect.

1 Introduction

Understanding the structure of financial markets is of obvious relevance for traders, investors and regulators. Among others, the relation between trading activity and price variability received a lot of attention in the financial literature over the last five decades. The pioneers of this field, e.g. Clark [9], Epps and Epps [14] and Tauchen and Pitts [30], defined trading activity via trading volume and derived a proportionality relation between the trading volume and the price variability. The rationale behind this definition and the implied relation is the widely-cited aphorism, “it takes volume to move prices”. We refer to Karpoff [17] for a survey of these early works on the price–volume relation.

Owing to weak empirical support for the hypotheses developed in these early approaches, the volume-based definition of trading activity has been replaced by one based on the number of trades. This definition is motivated by a substantial link between the observed price variability and the number of trades (see Jones et al. [16], Ané and Geman [4] as well as Dufour and Engle [12]). For example, Jones et al. [16] find that the volume has no predictive power for the price variability, but that the number of trades scales proportionally to the squared volatility. This scaling relation will be the starting point of our discussion. Building on the aforementioned ideas, numerous other studies followed, e.g. [2, 20]. In particular, let us point out the contribution by Wyart et al. [31], who argue that the price volatility per trade, i.e., (price) \(\times \) (volatility) \(\times \) (number of trades)\(^{-1/2}\), is proportional to the bid-ask spread. This connection can be seen as a somewhat refined version of the relation proposed by Jones et al. [16].

More recently, general relations between financial quantities have been derived based on the invariance of markets’ microstructure, see Kyle and Obizhaeva [18]. In particular, the authors postulate a trading invariance principle which (in contrast to the above relations) is formulated on the latent level of meta-orders (Footnote 1). Andersen et al. [3] and Benzaquen et al. [6] confirm empirically that an analogue of this invariance principle holds true for intradaily observable quantities. The fundamental relation may then be formulated as follows: the nominal value of the exchanged risk during a period of time, defined as the product (volatility) \(\times \) (traded volume) \(\times \) (price), is proportional to the number of trades to the power 3/2. This so-called intraday trading invariance principle and its connection to the relations proposed by Jones et al. [16] and Wyart et al. [31] is the focus of the present paper.

Our aim is to critically analyze these three relations as well as variants thereof by applying a method well known from physics: dimensional analysis. It is a tool which allows for the falsification of a proposed relation, e.g. of the above mentioned formulas for the number of trades, but not for its verification. This principle is similar in spirit to K. Popper’s approach to epistemology which in turn is inspired by the classical theory of statistics: There one can possibly reject a null hypothesis, but never prove it. Similarly, dimensional analysis can only isolate those functional relations between variables involving certain “dimensions” which do not violate the obvious scaling invariance of these dimensions. Hence, it a priori rules out those functional relations which are in conflict with these scaling requirements. But this does not imply that the identified functional relations, which are in accordance with the scaling requirements, describe the reality in a reasonable way. This has to be confirmed by other methods. In the present setting the ultimate challenge is, of course, to fit to empirical data. To complete the picture, we perform an empirical analysis of the relations described above and show that the intraday trading invariance principle provides an appropriate fit to empirical data, but fails to be a “universal law”.

In dimensional analysis one uses the rather obvious argument that a meaningful relation between quantities involving some “dimensions” should not be affected by the units in which these “dimensions” are measured. In the present context the relevant “dimensions” are time, shares, and money, denoted as \(\mathbb {T}, \mathbb {S}\) and \(\mathbb {U}\), respectively. We shall also use an additional argument, namely “leverage neutrality” as introduced by Kyle and Obizhaeva [19]. We emphasize that these authors were the first to combine the concepts of “leverage neutrality” and dimensional analysis. The assumption of leverage neutrality is based on the Modigliani–Miller theorem (see [24]) and leads to a scaling invariance principle which, mathematically speaking, is perfectly analogous to the dimensional scaling requirements mentioned above.

The remainder of the paper is structured as follows. In Sect. 2, we first deduce the proportionality between the number of trades and the price variability as proposed by Jones et al. [16] from dimensional arguments. Next, we derive the more involved scaling relations proposed by Benzaquen et al. [6] as well as Wyart et al. [31], again using dimensional analysis, and discuss the assumption of leverage neutrality in this context. Having a theoretical foundation for the discussed relations, we then turn to the empirical analysis in Sect. 3: Based on data from the NASDAQ stock market, we show that the relation proposed by Benzaquen et al. [6] fits the data rather well. In Sect. 4, we take a closer look at volatility and analyze implications of different time scalings thereof. We conclude with some empirical results in this respect. A reminder on the Pi-theorem from dimensional analysis as well as proofs for all considered relations can be found in the Appendix.

2 The trading invariance principle

We are interested in explaining the arrival rate of trades in a given stock measured as

- \(N = N_t^{t+T}\), the number of trades within a fixed time interval \([t,t+T]\), so that N is measured per unit of time. Following the notation from [26], the link between the variable N and its dimensional unit is given by

$$\begin{aligned}{}[N] = \mathbb {T}^{-1}. \end{aligned}$$

Let us identify the variables (and their dimensions \([\cdot ]\)) which are likely to influence the number of trades N in a given interval \([t,t+T]\). Three obvious candidates are:

- \(V = V_t^{t+T}\), the traded volume of the stock during the time interval \([t,t+T]\), measured in units of shares per time:

$$\begin{aligned}{}[V] = \mathbb {S}/\mathbb {T}. \end{aligned}$$

- \(P = P_t^{t+T}\), the average price of the stock in the interval \([t,t+T]\), measured in units of money per share:

$$\begin{aligned}{}[P] = \mathbb {U}/\mathbb {S}. \end{aligned}$$

- \(\sigma ^2= (\sigma ^2)_t^{t+T}=\mathbb {V}\text {ar} \left( \log (P_{t+T}) - \log (P_t)\right) \), the variance of the log-price over the time interval \([t,t+T]\). We assume

$$\begin{aligned}{}[\sigma ^2] = \mathbb {T}^{-1}. \end{aligned}$$

If the price process \((P_t)_{t\ge 0}\) follows, e.g. the Black–Scholes model, see (24), we clearly find the above scaling \([\sigma ^2]=\mathbb {T}^{-1}\) and shall retain this assumption in most of the paper. However, the scaling of \(\sigma ^2\) turns out to be more subtle than it seems at first glance. In Sect. 4 below, we shall investigate the implications of a scaling relation \([\sigma ^2] = \mathbb {T}^{-2H},\) where \(H \in (0,1)\) may be different from 1 / 2. For instance, such a scaling may result from price processes based on a fractional Brownian motion \((B^H_t)_{t\ge 0}\) with Hurst parameter \(H \in (0,1)\), see [23].

Based on these identified dimensions, let us turn to the basic idea of dimensional analysis: the validity of a considered relation should not depend on whether we measure time \(\mathbb {T}\) in seconds or in minutes, shares \(\mathbb {S}\) in single shares or in packages of hundred shares, and money \(\mathbb {U}\) in Euros or in Euro-cents.

Definition 1

(Dimensional invariance). A function \(h:\mathbb {R}^n_+ \rightarrow \mathbb {R}_+\) relating the quantity of interest U to the explanatory variables \(W_1,\dots ,W_n\), i.e.,

$$\begin{aligned} U = h(W_1,\dots ,W_n), \end{aligned}$$

is called dimensionally invariant if it is invariant under rescaling the involved dimensions (in our case \(\mathbb {S}, \mathbb {T}\) and \(\mathbb {U}\)).

As a first, rather naive, approach we analyze the assumption that the three variables \(\sigma ^2,P\) and V fully explain the number of trades N.

Proposition 1

Assume that the number of trades N depends only on the three quantities \(\sigma ^2,P\) and V, i.e.,

$$\begin{aligned} N = g(\sigma ^2, P, V), \end{aligned} \tag{1}$$

where the function \(g:\mathbb {R}_+^3\rightarrow \mathbb {R}_+\) is dimensionally invariant. Then, there is a constant \(c>0\) such that the number of trades N obeys the relation

$$\begin{aligned} N = c\, \sigma ^2. \end{aligned} \tag{2}$$

The proof relies on elementary linear algebra and is given in Appendix B below (compare also the proof of Theorem 1 below which is similar). Recall that relation (2) goes back to Jones et al. [16].

As mentioned in the introduction, one should read the present “dimensional” argument in favor of relation (2) as a pure “if\(\dots \)then\(\dots \)” assertion: if N really is fully explained by \(\sigma ^2,P\) and V and the obvious scaling invariances of \(\mathbb {S}\), \(\mathbb {T}\) and \(\mathbb {U}\) are satisfied, then (2) is the only possible relation. As we shall see below, the empirical data does not confirm the validity of (2). In other words, we have to turn the above statement upside down: as (2) is not confirmed by empirical data, the variables \(\sigma ^2,P\) and V cannot fully explain the quantity N. It is therefore natural to introduce more or other quantities in order to explain the number of trades N.

Regarding the uniqueness of the function g in (1), the mathematical reason for the unique choice of g given by (2) is that we have three scaling relations (pertaining to the invariance of the “dimensions” \(\mathbb {S}, \mathbb {U}\) and \(\mathbb {T}\)) as well as the three explanatory variables \(\sigma ^2, P\) and V. This leads to three linear equations in three unknowns, yielding a unique solution.
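The "three linear equations in three unknowns" can be checked numerically. The following is a minimal sketch, assuming the dimension exponents stated in the text for \(\sigma ^2\), P, V and N; the matrix layout and the variable names `D`, `n_dim` and `exponents` are illustrative choices, not notation from the paper.

```python
import numpy as np

# Rows: dimensions (shares S, money U, time T).
# Columns: explanatory variables (sigma^2, P, V).
# Entries are the exponents taken from [sigma^2] = T^-1, [P] = U/S, [V] = S/T.
D = np.array([
    [0.0, -1.0,  1.0],   # shares
    [0.0,  1.0,  0.0],   # money
    [-1.0, 0.0, -1.0],   # time
])

# Exponents of N in the same dimensions, from [N] = T^-1.
n_dim = np.array([0.0, 0.0, -1.0])

# Ansatz N = c * (sigma^2)^y1 * P^y2 * V^y3: matching dimensions gives D @ y = n_dim.
exponents = np.linalg.solve(D, n_dim)
print(exponents)
```

The solver returns the exponents \((y_1, y_2, y_3)\) of \(\sigma ^2\), P and V; a solution with \(y_1 = 1\) and \(y_2 = y_3 = 0\) corresponds to \(N = c\,\sigma ^2\).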

Let us now try to go beyond the scope of relation (1) by considering further explanatory variables. Motivated by Wyart et al. [31], we consider the following quantity as relevant for the number of trades N in a given interval \([t,t+T]\), additionally to \(\sigma ^2, P\) and V:

- \(S = S_t^{t+T}\), the average bid-ask spread in the interval \([t,t+T]\), measured in units of money per share:

$$\begin{aligned}{}[S] = \mathbb {U}/\mathbb {S}. \end{aligned}$$

Following Benzaquen et al. [6], it is also convenient to alternatively consider the quantity

- \(C=C_t^{t+T}\), the average cost per trade in the interval \([t,t+T]\), measured in units of money:

$$\begin{aligned}{}[C] = \mathbb {U}. \end{aligned}$$

To visualize things, suppose that for some stock we observe on average during the time interval \([t,t+T]\) an ask price of EUR 12.30 and a bid price of EUR 12.20, so that the bid-ask spread S equals 10 cents. If the average trade size in the interval \([t,t+T]\), denoted by \(Q=Q_t^{t+T}\), is 500 shares, we obtain that the average cost per trade \(C = QS\) is EUR 50. A discussion of the difference between using S rather than C as an explanatory variable can be found at the end of this section. For now, let us follow Benzaquen et al. [6] for our derivation of the intraday trading invariance principle and pass to the set \(\sigma ^2, P,V\) and C of explanatory variables, i.e.,

$$\begin{aligned} N = g(\sigma ^2, P, V, C), \end{aligned} \tag{3}$$

for some function \(g:\mathbb {R}_+^4\rightarrow \mathbb {R}_+\). As we now have four explanatory variables, the three equations yielded by the scale invariance of the dimensions \(\mathbb {S}, \mathbb {U}\) and \(\mathbb {T}\) are no longer sufficient to imply an (essentially) unique solution for g. In fact, the four explanatory variables above combined with the three invariance relations pertaining to \(\mathbb {S}\), \(\mathbb {T}\) and \(\mathbb {U}\) only yield a general solution of (3) of the form

$$\begin{aligned} N = \sigma ^2 \, f\left( \frac{P V}{\sigma ^2 C}\right) , \end{aligned} \tag{4}$$

where \(f:\mathbb {R}_+ \rightarrow \mathbb {R}_+\) is an arbitrary function whose generality cannot be restricted by only relying on arguments pertaining to dimensional analysis with respect to the three dimensions \(\mathbb {S}\), \(\mathbb {T}\) and \(\mathbb {U}\) (see Appendix B).
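The loss of uniqueness can be made concrete by counting dimensionless combinations. The sketch below assumes the dimension exponents stated in the text; the matrix `D4` and the candidate exponent vector `pi_group` are illustrative names. It checks that the four variables span only three independent dimensions and that \(PV/(\sigma ^2 C)\) is one dimensionless combination, which is why an arbitrary function of a single argument remains.

```python
import numpy as np

# Rows: dimensions (shares S, money U, time T).
# Columns: explanatory variables (sigma^2, P, V, C).
D4 = np.array([
    [0.0, -1.0,  1.0, 0.0],   # shares
    [0.0,  1.0,  0.0, 1.0],   # money
    [-1.0, 0.0, -1.0, 0.0],   # time
])

rank = np.linalg.matrix_rank(D4)
n_free = D4.shape[1] - rank  # number of independent dimensionless groups

# Candidate group (sigma^2)^-1 * P^1 * V^1 * C^-1, i.e. P*V / (sigma^2 * C).
pi_group = np.array([-1.0, 1.0, 1.0, -1.0])
residual = D4 @ pi_group  # all zeros if the group is dimensionless

print(rank, n_free, residual)
```

With one free dimensionless group, dimensional analysis alone can only pin down N up to a function of that group, so a fourth invariance is needed to obtain a single power law.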

Hence, in order to obtain such a crisp result as in (2), an additional “dimensional invariance” is required. Kyle and Obizhaeva [19] found a remedy: a no-arbitrage type argument, referred to as “leverage neutrality” (Footnote 2). This concept is inspired by the findings of Modigliani and Miller [24] (compare [26]): Consider a stock of a company, and suppose that the company changes its capital structure by paying dividends or by raising new capital. The Modigliani–Miller theorem tells us precisely which features of the company are not affected by a change in the capital structure. This allows us to establish how certain quantities behave when varying the leverage in terms of the relation between debt and equity of a company.

From a conceptual point of view, the assumption of leverage neutrality gives a constraint on the behavior of the quantities \(N, \sigma ^2, P, V, C\) (resp. S) in case of changing the firm’s capital structure. This constraint can be understood as an additional though synthetic dimension in our analysis, which we refer to as the Modigliani–Miller “dimension” \(\mathbb {M}\). The Modigliani–Miller “dimension” \(\mathbb {M}\) of a share of a company is measured in terms of the leverage \(\mathcal {L}\), i.e., the quantity

Multiplying \(\mathcal {L}\) by a factor \(A > 1\) is equivalent to paying out \((1-A^{-1})\) of the equity as cash-dividends. On the other hand, multiplying \(\mathcal {L}\) by a factor \(0<A<1\) corresponds to raising new capital in order to increase the firm’s equity by a factor \(A^{-1}\). Following Kyle and Obizhaeva [19] as well as [26], we are led to the following assumption:

Leverage Neutrality Assumption

([19, 26]). Scaling the Modigliani–Miller “dimension” \(\mathbb {M}\) by a factor \(A \in \mathbb {R}_+\) implies that

- N, V and C (as well as S) remain constant,
- P changes by a factor \(A^{-1}\),
- \(\sigma ^2\) changes by a factor \(A^2\).

To recapitulate: Setting \(A=2\) corresponds to paying out half of the equity as dividends so that each share yields a dividend of \((1-A^{-1})P=P/2\). The stock price is, thus, multiplied by \(A^{-1}=1/2\) while the volatility \(\sigma \) is multiplied by \(A=2\). The remaining quantities are not affected by changing the leverage, in accordance with the insight of Modigliani and Miller [24] and the recent work by Kyle and Obizhaeva [19]. The economic reason is that the value of the assets of the corresponding company and hence the associated risk does not change.
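The effect of the assumption on the two candidate relations can be illustrated numerically. This is a minimal sketch with hypothetical input values; the function names `rescale_leverage`, `three_halves_ratio` and `basic_ratio` are introduced here for illustration only.

```python
import math

def rescale_leverage(sigma2, P, V, C, N, A):
    """Apply the Leverage Neutrality Assumption for a leverage factor A > 0."""
    # sigma^2 scales by A^2 and P by 1/A; N, V and C are unchanged.
    return sigma2 * A**2, P / A, V, C, N

def three_halves_ratio(sigma2, P, V, C, N):
    """N^(3/2) * C / (sigma * P * V): constant if N^(3/2) ~ sigma * P * V / C holds."""
    return N**1.5 * C / (math.sqrt(sigma2) * P * V)

def basic_ratio(sigma2, P, V, C, N):
    """N / sigma^2: constant if the basic relation N ~ sigma^2 holds."""
    return N / sigma2

# Hypothetical values (sigma^2, P, V, C, N), chosen only for illustration.
state = (1e-4, 12.25, 5.0e4, 50.0, 100.0)
A = 2.0  # pay out half of the equity as dividends
scaled = rescale_leverage(*state, A)

print(three_halves_ratio(*state), three_halves_ratio(*scaled))
print(basic_ratio(*state), basic_ratio(*scaled))
```

The first ratio is unchanged by the rescaling, because the factors \(A\) in \(\sigma \) and \(A^{-1}\) in P cancel. The second ratio changes by a factor \(A^{-2}\), so a relation of the form \(N \sim \sigma ^2\) is not leverage neutral.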

Definition 2

(Leverage neutrality). A function \(h:\mathbb {R}_+^n \rightarrow \mathbb {R}_+\) relating the quantity N to the explanatory variables \(\sigma ^2, P, V, C\) and S, i.e.,

$$\begin{aligned} N = h(\sigma ^2, P, V, C, S), \end{aligned}$$

is called leverage neutral if it is invariant when rescaling the Modigliani–Miller dimension \(\mathbb {M}\) of the variables \(N, \sigma ^2, P, V, C, S\) as defined in the assumption above.

We can now derive the following relation, which is the focus of the present paper. It relies on the basic fact that under the “Leverage Neutrality Assumption” we now find four linear equations in order to determine four unknowns. Note that Benzaquen et al. [6] coined this relation the “3/2-law”.

Theorem 1

(3/2-law). Suppose the “Leverage Neutrality Assumption” holds and that the number of trades N depends only on the four quantities \(\sigma ^2, P, V\) and C, i.e.,

$$\begin{aligned} N = g(\sigma ^2, P, V, C), \end{aligned} \tag{5}$$

where the function \(g:\mathbb {R}_+^4\rightarrow \mathbb {R}_+\) is dimensionally invariant and leverage neutral. Then, there is a constant \(c>0\) such that the number of trades N obeys the relation

$$\begin{aligned} N^{3/2} = c\, \frac{\sigma P V}{C}. \end{aligned} \tag{6}$$

The proof follows from the general Pi-theorem reviewed in Appendix A. For the convenience of the reader, we also present a direct proof of Theorem 1. Although slightly longish and repetitive, we hope that it helps the intuition.

Proof of Theorem 1

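First, we make the following ansatz for the function g in (5):

$$\begin{aligned} g(\sigma ^2, P, V, C) = c\, (\sigma ^2)^{y_1} P^{y_2} V^{y_3} C^{y_4}, \end{aligned} \tag{7}$$

where \(c>0\) is a constant and \(y_1,\dots ,y_4\) are unknown real numbers. Looking at the first row of Table 1 yields the relation

$$\begin{aligned} -y_2 + y_3 = 0. \end{aligned} \tag{8}$$

Indeed, when counting shares in packages of 100 units rather than in single units, the number P is replaced by 100P while the number V is replaced by V/100. Since the function g in (7) is assumed to be dimensionally invariant, g should remain unchanged by this passage, i.e.,

$$\begin{aligned} c\, (\sigma ^2)^{y_1} P^{y_2} V^{y_3} C^{y_4} = c\, (\sigma ^2)^{y_1} (100P)^{y_2} (V/100)^{y_3} C^{y_4}, \end{aligned} \tag{9}$$

which is only possible if (8) holds true. Looking at the other rows of Table 1 we therefore get the system of linear equations

$$\begin{aligned} -y_2 + y_3&= 0, \\ y_2 + y_4&= 0, \\ -y_1 - y_3&= -1, \\ 2y_1 - y_2&= 0, \end{aligned}$$

whose unique solution is

$$\begin{aligned} y_1 = \tfrac{1}{3}, \quad y_2 = \tfrac{2}{3}, \quad y_3 = \tfrac{2}{3}, \quad y_4 = -\tfrac{2}{3}, \end{aligned} \tag{10}$$

which gives (6) as one possible solution of (5).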

We still have to show the uniqueness of (6). To do so, it is convenient to pass to logarithmic coordinates: suppose that there is a function \(G:\mathbb {R}^4 \rightarrow \mathbb {R}\) such that \(\log (N) = G\left( \log (\sigma ^2),\log (P), \log (V),\log (C)\right) \) or equivalently,

$$\begin{aligned} \log (N) = G(X_1, X_2, X_3, X_4), \end{aligned} \tag{11}$$

where we write \(\left( \log (\sigma ^2),\log (P), \log (V), \log (C)\right) \) as \((X_1, X_2, X_3, X_4)\). We have to show that G has the form

$$\begin{aligned} G(X_1, X_2, X_3, X_4) = \text {const} + y_1 X_1 + y_2 X_2 + y_3 X_3 + y_4 X_4, \end{aligned}$$

where \(y_1, y_2, y_3, y_4\) are given by (10) and const is a real number. Denote by \(r_1:= -e_2 + e_3\) the first row of Table 1, considered as a vector in \(\mathbb {R}^4\), where \((e_i)_{i=1}^{4}\) is the canonical basis of \(\mathbb {R}^4\). Similarly as in (9), the first row of Table 1 and dimensional invariance imply that, for every \(X = (X_1, X_2, X_3, X_4)\),

$$\begin{aligned} G(X - \log (100)\, r_1) = G(X). \end{aligned}$$

Clearly we can replace \(\log (100)\) by any real number. Speaking abstractly, this means that \(G:\mathbb {R}^4\rightarrow \mathbb {R}\) must be constant on any straight line parallel to the vector \(r_1\). A similar argument applies to \(r_2 = e_2 + e_4\) and \(r_4=2e_1 - e_2\). As regards \(r_3= -e_1-e_3\), the situation is slightly different, as the third row of Table 1 also involves a non-zero entry of N.

The third row of Table 1 and (11) imply that for any \(\lambda \in \mathbb {R}\),

$$\begin{aligned} G(X + \lambda r_3) = G(X) - \lambda . \end{aligned}$$

Setting const \(:= G(0,0,0,0)\), we have

$$\begin{aligned} G(\lambda r_3) = \text {const} - \lambda , \end{aligned}$$

which uniquely determines G on the one-dimensional space spanned by \(r_3 = -e_1-e_3\) in \(\mathbb {R}^4\). As we have seen that G also must be constant along each line in \(\mathbb {R}^4\) parallel to \(r_1, r_2\) and \( r_4\), and as \(r_1, r_2, r_3, r_4\) span the entire space \(\mathbb {R}^4\), we conclude that there is only one choice for the function G, up to the constant \(\text {const} = G(0,0,0,0)\). \(\square \)
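The linear algebra in the proof can be verified numerically. The sketch below uses the row vectors \(r_1,\dots ,r_4\) exactly as given in the proof; the right-hand side assumes that only the time row has a non-zero entry for N, namely \(-1\) from \([N]=\mathbb {T}^{-1}\). The names `R`, `n_entries` and `y` are illustrative.

```python
import numpy as np

# Rows r1..r4 as vectors in R^4, with coordinates ordered (sigma^2, P, V, C).
R = np.array([
    [0.0, -1.0,  1.0, 0.0],   # r1 = -e2 + e3  (shares)
    [0.0,  1.0,  0.0, 1.0],   # r2 =  e2 + e4  (money)
    [-1.0, 0.0, -1.0, 0.0],   # r3 = -e1 - e3  (time)
    [2.0, -1.0,  0.0, 0.0],   # r4 = 2e1 - e2  (Modigliani-Miller)
])

# Entry of N in each row: only the time row is non-zero.
n_entries = np.array([0.0, 0.0, -1.0, 0.0])

# r1..r4 span R^4 exactly when R has full rank, which gives uniqueness.
rank = np.linalg.matrix_rank(R)

# Exponents (y1, y2, y3, y4) of the ansatz N = c * (sigma^2)^y1 * P^y2 * V^y3 * C^y4.
y = np.linalg.solve(R, n_entries)

# Raising the ansatz to the power 3/2 gives the exponents of N^(3/2) in (sigma^2, P, V, C).
y_three_halves = 1.5 * y

print(rank, y, y_three_halves)
```

Exponents \((1/2, 1, 1, -1)\) in \((\sigma ^2, P, V, C)\) after raising to the power 3/2 correspond to \(N^{3/2} \sim \sigma P V/C\).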

For an alternative derivation of relation (6), we pass from considering \(\sigma ^2\), the variability of the relative price changes, to considering \(\sigma _B^2\), the variability of the absolute price changes. This will allow us to reduce the two explanatory variables \(\sigma ^2\) and P to one explanatory variable \(\sigma _B^2 = \sigma ^2 P^2\). We call \(\sigma _B\) the Bachelier volatility as it corresponds to Bachelier’s original model from 1900, see [5]. Recall that the dynamics of the price process \((P_t)_{t\ge 0}\) of the Black–Scholes versus the Bachelier model are

$$\begin{aligned} dP_t = \sigma P_t \, dW_t \quad \text {versus} \quad dP_t = \sigma _B \, dW_t, \end{aligned}$$

where \(W_t\) is a standard Brownian motion. Defining \(\sigma _B=\sigma P\), the two models coincide remarkably well as long as \(P_t\) does not move too much (compare e.g. [29]). We therefore define

- \(\sigma _B^2 =\sigma ^2 P^2\), the squared Bachelier volatility in the interval \([t,t+T]\). Plugging in the dimensions \([\sigma ^2]=\mathbb {T}^{-1}\) and \([P] = \mathbb {U}\mathbb {S}^{-1}\), we obtain

$$\begin{aligned}{}[\sigma _B^2] = \mathbb {U}^2 \mathbb {S}^{-2} \mathbb {T}^{-1}. \end{aligned}$$

A glance at Table 2 reveals that \(\sigma ^2_B\) has Modigliani–Miller dimension \(\mathbb {M}\) equal to zero (just as the other variables V, C and N). This enables us to derive the assertion of Theorem 1 by using only the three obvious scaling invariances, but without imposing a priori the requirement of leverage neutrality.

Corollary 2

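Suppose the number of trades N depends only on the three quantities \(\sigma _B^2,V\) and C, i.e.,

$$\begin{aligned} N = g(\sigma _B^2, V, C), \end{aligned} \tag{13}$$

where the function \(g:\mathbb {R}_+^3\rightarrow \mathbb {R}_+\) is dimensionally invariant. Then, there is a constant \(c>0\) such that the number of trades N obeys the relation

$$\begin{aligned} N^{3/2} = c\, \frac{\sigma _B V}{C}. \end{aligned} \tag{14}$$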

The proof is analogous to (and even easier than) the above proof. Note that Proposition 1 and Corollary 2 both only rely on the very convincing invariance assumption with respect to \(\mathbb {S}\), \(\mathbb {T}\) and \(\mathbb {U}\), but not on the “Leverage Neutrality Assumption”.

Anticipating that relation (14) gives a superior fit to empirical data than relation (2) we can draw the following conclusion: the choice of \(\sigma _B^2, V, C\) as explanatory variables for the quantity N is superior to the choice \(\sigma ^2, P, V\) made in Proposition 1 above.

Here is a “dimensional argument” why we should expect a better result from Corollary 2 as compared to Proposition 1. It follows from the very approach of dimensional analysis that everything hinges on the assumption that the chosen explanatory variables indeed “fully explain” the dependent variable. Of course, in reality such an assumption will, at best, only be approximately satisfied. The art of the game is to find a combination of explanatory variables which “best” explain the resulting variable. The choice of the variables \(\sigma ^2_B, V, C\) as in Corollary 2 automatically implies that the “Leverage Neutrality Assumption” is satisfied as shown in Table 2. Indeed, the variables \(\sigma ^2_B, V, C\) as well as N have a zero entry for the Modigliani–Miller dimension \(\mathbb {M}\). Therefore, any function relating these variables is automatically leverage neutral. This is in contrast to the choice of variables \(\sigma ^2, P, V\) in Proposition 1 as Table 1 reveals that P and \(\sigma ^2\) have a non-trivial dependence on \(\mathbb {M}\). It follows that formula (2) does not satisfy the invariance relation dictated by the “Leverage Neutrality Assumption”.

Finally, we examine the implications of substituting the cost per trade C by its more common counterpart, the bid-ask spread S, introduced above. In fact, in the present context it is equivalent to use either C or S as explanatory variables for the number of trades N—provided that the traded volume V is already one of the explanatory variables. Indeed, we have the relation \(C = SQ = SV/N\) since the average trade size Q in the interval \([t,t+T]\) is given by the traded volume V divided by the number of trades N. Hence, if we know the functional relation between N and V, we also know the functional relation between N and Q and can therefore pass from S to \(C=SQ\) and vice versa. Thus, we may restate Theorem 1 (and, equivalently, Corollary 2) in terms of the bid-ask spread S rather than the cost per trade C in the following corollary.

Corollary 3

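Suppose that the number of trades N depends only on the three quantities \(\sigma _B^2\), V and S, i.e.,

$$\begin{aligned} N = g(\sigma _B^2, V, S), \end{aligned} \tag{15}$$

where the function \(g:\mathbb {R}_+^3\rightarrow \mathbb {R}_+\) is dimensionally invariant. Then, there is a constant \(c>0\) such that the number of trades N obeys the relation

$$\begin{aligned} N = c\, \frac{\sigma _B^2}{S^2}. \end{aligned} \tag{16}$$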

We observe that the variables \(\sigma ^2_B, V\) and S again have no Modigliani–Miller dimension \(\mathbb {M}\), i.e., they are invariant under changes of the leverage. Therefore, formula (16) satisfies the invariance principle given by the “Leverage Neutrality Assumption”. We note again that given the relations \(C = SQ = SV/N\) as well as \(\sigma _B^2 = \sigma ^2 P^2\) the two equations (6) and (16) are indeed equivalent.
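For the reader's convenience, the equivalence can be spelled out in a few lines. The following worked derivation uses only the identities \(C = SV/N\) and \(\sigma _B = \sigma P\) stated above; the symbol \(c\) denotes a generic positive constant, so the constant in the last line is \(c^2\).

$$\begin{aligned} N^{3/2} = c\, \frac{\sigma P V}{C} \;\Longleftrightarrow \; N^{3/2} = c\, \frac{\sigma _B V N}{S V} \;\Longleftrightarrow \; N^{1/2} = c\, \frac{\sigma _B}{S} \;\Longleftrightarrow \; N = c^2\, \frac{\sigma _B^2}{S^2}. \end{aligned}$$

The traded volume V cancels in the second step, which is why V does not appear in the spread-based form of the relation.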

Relation (16) is precisely the one proposed by Wyart et al. [31]. By rearranging the terms, we find that

$$\begin{aligned} \frac{\sigma _B^2}{N} \sim S^2. \end{aligned} \tag{17}$$

The interpretation is that the squared Bachelier volatility per trade is proportional to the square of the spread. If we elaborate further on (17), we find that

$$\begin{aligned} \frac{S}{P} \sim \frac{\sigma }{\sqrt{N}}. \end{aligned} \tag{18}$$

Without loss of generality, we can determine the price P on the left hand side of (18) as the midquote price, i.e., the average of the best ask and bid prices. Then, S/P refers to the so-called proportional bid-ask spread, which can be used to approximate a dealer’s “round trip” transaction costs. Clearly, the approximate round-trip costs increase in the volatility of a relative price change and decrease in the trading activity.

Summing up this section, we have seen that the relation \(N \sim \sigma ^2\) proposed by Jones et al. [16] follows from dimensional arguments together with the restrictive assumption that the number of trades N only depends on the quantities \(\sigma ^2, P\) and V (see Proposition 1). Going beyond the latter relation, it seems reasonable to include information concerning the bid-ask spread in our analysis. Depending on whether we choose the trading cost C or the bid-ask spread S directly, we are led to either the 3/2-law \(N^{3/2} \sim \sigma P V /C\) proposed by Benzaquen et al. [6] (see Theorem 1) or to the relation \(S \sim \sigma _B/\sqrt{N}\) proposed by Wyart et al. [31] (see Corollary 3). When proving the two latter relations we have seen that the assumption of leverage neutrality comes into play. Alternatively, we can also consider the product \(\sigma ^2 P^2\), rather than \(\sigma ^2\) and P separately. This consideration of the “Bachelier volatility” \(\sigma _B = \sigma P\) reduces the complexity of the problem inasmuch as the assumption of leverage neutrality is not needed anymore. Again, the actual validity of any of the above scaling laws should be confirmed by exhaustive empirical analysis.

3 Empirical evidence

3.1 Degrees of universality and relevant literature

We now turn to the empirical analysis of relation (2) as well as of the 3/2-law (6). When collecting data for the quantities N, \(\sigma ^2\), V, P and C, one has to specify the considered asset and the considered time period as well as the length T of the time interval over which the data is aggregated. We cannot expect that the constant c appearing in relations (2) resp. (6) is the same for each considered interval and each possible interval length and each considered asset in either one of the relations. We can only hope that a given relation holds on average. Based on the nomenclature introduced in Benzaquen et al. [6], we therefore distinguish the following three degrees of universality attached to the validity of relations (2) and (6):

1. No universality: The relation holds on average for a fixed asset and a fixed interval length. However, the constant c varies significantly for different assets and different interval lengths.
2. Weak universality: The relation holds on average for some assets and some interval lengths with similar values of the constant c.
3. Strong universality: The relation holds on average for all assets and all interval lengths with similar values of the constant c.

Note that this distinction does not allow for the possibility that the validity attached to a given relation changes over time, simply because we consider only one specific time period.

Let us briefly discuss the relevant empirical evidence which can be found in the literature before turning to our own empirical analysis. Andersen et al. [3] conducted an important empirical study in the present context. They test the relation

where I is independently and identically distributed across assets and time for the E-mini S&P 500 futures contract. Neglecting the price P, they show that the relation \(N^{3/2} \sim V \sigma \) holds when averaging within and across trading days for this particular asset. In fact, their data fits the latter relation nearly perfectly compared to the relations \(V \sim \sigma ^2\) resp. \(N\sim \sigma ^2\) proposed by Tauchen and Pitts [30] resp. Jones et al. [16]. Benzaquen et al. [6] address the same question by examining eleven additional futures contracts as well as 300 US stocks. Aiming to confirm that \(\beta = 3/2\) in the relation \(N^{\beta } \sim \sigma P V\), they estimate \(\beta \) for each considered stock individually. They find that \(\hat{\beta } = 1.54\,\pm \,0.11\), where the uncertainty is the root mean square cross-sectional dispersion. These authors note that this provides evidence that the relation \(N^{3/2} \sim \sigma P V \) holds also on the stock market and not only on the very liquid futures market. Moreover, they show that the distribution of I in (19) depends significantly on the studied asset and thus conclude that relation (19) holds only with weak universality. As an additional contribution, the authors reveal that the inclusion of the trading cost C is beneficial in the sense that their proposed invariant \(\mathcal {I} = \sigma P V C^{-1} N^{-3/2}\) is almost constant for different assets.
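An exponent \(\beta \) in a relation of the form \(N^{\beta } \sim \sigma P V\) can be estimated by a linear regression in logarithmic coordinates. The sketch below illustrates the idea on synthetic data only; it is not the estimation procedure of the cited studies, and the sample size, noise level and all variable names are assumptions made for illustration.

```python
import numpy as np

# Synthetic data, generated so that log(sigma * P * V) = beta_true * log(N) + const + noise.
rng = np.random.default_rng(0)
n_obs = 1002
beta_true = 1.5

log_N = rng.normal(loc=5.0, scale=0.5, size=n_obs)
noise = rng.normal(loc=0.0, scale=0.1, size=n_obs)
log_risk = beta_true * log_N + 2.0 + noise  # stands in for log(sigma * P * V)

# Ordinary least squares fit of log_risk on log_N; polyfit returns (slope, intercept).
beta_hat, intercept_hat = np.polyfit(log_N, log_risk, deg=1)

print(round(beta_hat, 3))
```

On real data one would replace `log_N` and `log_risk` by the logarithms of the aggregated observations per interval; the slope then plays the role of \(\hat{\beta }\), and the intercept that of the logarithm of the proportionality constant.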

Finally, let us mention the evidence in the earlier work by Wyart et al. [31]. These authors show that relation (17) describes the data very well when the right level of aggregation is chosen. When examining the France Telecom stock, S and \(\sigma _B/\sqrt{N}\) are averaged over two trading days, while in case of NYSE stocks these quantities are averaged over an entire year. The constant c in relation (17) is found to lie between 1.2 and 1.6. Moreover, the authors note that the typical intraday pattern of the considered quantities is in line with (17): The U-shaped pattern of the volatility \(\sigma _B\) is explained by the decline of the bid-ask spread S and an increase of the number of trades N within the trading day.

3.2 Description of data

Our empirical analysis is based on limit order book data provided by the LOBSTER database (https://lobsterdata.com). The considered sampling period begins on January 2, 2015 and ends on August 31, 2015, leaving 167 trading days. Among all NASDAQ stocks, \(d=128\) sufficiently liquid stocks with high market capitalizations are chosen. Stocks are considered to be “sufficiently liquid” as long as the aggregated variables (defined below) can be reasonably treated as continuously distributed, i.e., the empirical distributions of the aggregated variables do not have points with obviously concentrated mass. Observations made during the thirty minutes after the opening of the exchange as well as trading halts are removed.

Let us fix an interval length \(T\in \{30, 60, 120, 180, 360\}\) min for which a developed hypothesis is tested. For the sake of illustration, set the length of the considered time interval T to 60 min. This interval length balances the tradeoff between sufficient aggregation of the data on the one hand and some intraday variability on the other hand. As a result, we are left with \(n=1002\) non-overlapping time intervals with equal length \(T=60\) min. Let us concentrate on a specific asset \(i \in \lbrace 1,\dots ,d \rbrace \) (omitting the index i for ease of notation in the remainder of Sect. 3.2) and let \(j\in \lbrace 1,\dots ,n\rbrace \) refer to an arbitrary interval. Suppose the trades in the considered interval j arrive at irregularly spaced transaction times \(t_1,t_2,\ldots ,t_{N_j}\). Then,

- \(N_j\) denotes the number of trades in the interval j,
- \(Q_j = N_j^{-1} \sum _{k=1}^{N_j} Q_{t_k}\) denotes the average size of the trades in the interval j, where \(Q_{t_k}\) denotes the number of shares traded at time \(t_k\),
- \(V_j = N_j \times Q_j\) is the traded volume in the interval j,
- \(P_j = N_j^{-1} \sum _{k=1}^{N_j} P_{t_k}\) denotes the average midquote price in the interval j, where \(P_{t_k} = (A_{t_k} + B_{t_k})/2\) and \(A_{t_k}\) (resp. \(B_{t_k}\)) denotes the best ask (resp. bid) price after the transaction at time \(t_k\),
- \(\hat{\sigma }_j^2\) denotes the estimated squared volatility in the interval j,
- \(S_j = N_j^{-1} \sum _{k=1}^{N_j} S_{t_k}\) denotes the average bid-ask spread in the interval j, where \(S_{t_k} = A_{t_k} - B_{t_k}\) is the bid-ask spread after the transaction at time \(t_k\), and
- \(C_j = Q_j\times S_j\) is the cost per trade in the interval j.
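The definitions above can be sketched in a few lines of Python. This is only an illustration with plain sample averages (the robust averages discussed further below are not applied here); the function name `aggregate_interval` and the input layout (one list entry per trade) are assumptions made for the example, not part of the original analysis.

```python
from statistics import mean

def aggregate_interval(sizes, asks, bids):
    """Aggregate the trades of one interval j (plain sample averages).

    sizes[k]: shares traded at time t_k
    asks[k], bids[k]: best ask and bid after the trade at time t_k
    """
    n_trades = len(sizes)                              # N_j
    avg_size = mean(sizes)                             # Q_j
    volume = n_trades * avg_size                       # V_j = N_j * Q_j
    mids = [(a + b) / 2 for a, b in zip(asks, bids)]   # P_{t_k}
    spreads = [a - b for a, b in zip(asks, bids)]      # S_{t_k}
    avg_price = mean(mids)                             # P_j
    avg_spread = mean(spreads)                         # S_j
    cost = avg_size * avg_spread                       # C_j = Q_j * S_j
    return {"N": n_trades, "Q": avg_size, "V": volume,
            "P": avg_price, "S": avg_spread, "C": cost}

# Two hypothetical trades in one interval (illustrative numbers only).
example = aggregate_interval(sizes=[100, 300],
                             asks=[10.02, 10.04],
                             bids=[10.00, 10.02])
```

The estimated squared volatility \(\hat{\sigma }_j^2\) is left out of this sketch because it is computed from the sequence of midquote prices rather than from an average.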

Note the following four details: Firstly, even though transaction times are recorded on a nano-second level, a time-stamp \(t_k\) is recorded L-times (\(t_{k_1}, \dots , t_{k_L}\)) in the raw dataset when a market order is executed against L limit orders at time \(t_k\). Such a multiple entry of the same time-stamp enters the number of trades \(N_j\) only once (not L-times). The size \(Q_{t_k}\) of the trade at time \(t_k\) is determined by summing the L-records in the dataset \(Q_{t_{{k}_{\ell }}}\), \(\ell =1,\ldots ,L\), i.e., \(Q_{t_k} = \sum _{\ell =1}^L Q_{t_{k_\ell }}\). The midquote price \(P_{t_k}\) and the bid-ask spread \(S_{t_k}\) related to the merged market order of size \(Q_{t_k}\) are computed as volume-weighted averages
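The merging step described in this first detail might look as follows in Python. The sketch assumes that the weights of the volume-weighted averages are the sizes \(Q_{t_{k_\ell }}\) of the individual records, which is the natural reading but is not spelled out here; the record layout `(timestamp, size, midquote, spread)` and the name `merge_executions` are hypothetical.

```python
from itertools import groupby

def merge_executions(records):
    """Merge records that share a time-stamp into one trade.

    records: list of (timestamp, size, midquote, spread), sorted by timestamp.
    Sizes are summed; midquote and spread are averaged with the record
    sizes as weights (assumed weighting scheme).
    """
    merged = []
    for ts, group in groupby(records, key=lambda r: r[0]):
        group = list(group)
        total_size = sum(r[1] for r in group)
        mid = sum(r[1] * r[2] for r in group) / total_size
        spread = sum(r[1] * r[3] for r in group) / total_size
        merged.append((ts, total_size, mid, spread))
    return merged

# One market order executed against two limit orders at time 1,
# followed by a single execution at time 2 (illustrative numbers only).
raw = [(1, 100, 10.0, 0.02), (1, 300, 10.2, 0.04), (2, 50, 10.1, 0.02)]
trades = merge_executions(raw)
```

After merging, `len(trades)` is the number of trades \(N_j\) in the sense used above, since each time-stamp is counted once.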

Secondly, the aggregated variables, i.e., the average market order size \(Q_j\), the average midquote price \(P_j\) and the average bid-ask spread \(S_j\) of interval j, are in fact not computed as the sample averages stated above. Since simple sample averages are sensitive to outliers, e.g. huge market orders, \(Q_j\), \(P_j\) and \(S_j\) are based on robust averages. In detail, we compute trimmed means of \(Q_{t_1},\ldots ,Q_{t_{N_{j}}}\), \(P_{t_1},\ldots ,P_{t_{N_{j}}}\) and \(S_{t_1},\ldots ,S_{t_{N_{j}}}\) to obtain \(Q_j\), \(P_j\) and \(S_j\) respectively. These trimmed means discard the upper 0.5% and the lower 0.5% of the corresponding ordered data and compute the average based on the remaining 99% of the data.
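A trimmed mean of this kind can be sketched as below. How a fractional number of observations in the tails is handled is not stated in the text; rounding down, as done here, is an assumption, and the function name `trimmed_mean` is chosen for the example only.

```python
def trimmed_mean(values, trim=0.005):
    """Average after discarding the lowest and highest `trim` share of the data.

    The number of discarded observations per tail is rounded down
    (an assumption; other conventions exist).
    """
    data = sorted(values)
    k = int(len(data) * trim + 1e-9)   # observations dropped in each tail
    kept = data[k:len(data) - k]
    return sum(kept) / len(kept)

# 200 trade sizes with one huge market order (illustrative numbers only).
sizes = [100] * 199 + [1_000_000]
robust_q = trimmed_mean(sizes)          # the outlier is discarded
plain_q = sum(sizes) / len(sizes)       # the outlier dominates
```

With 200 observations and a trimming share of 0.5%, exactly one observation is removed from each tail, so the single outlier no longer affects the average.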

Thirdly, the estimated squared volatility \(\hat{\sigma }_j^2\) is computed as the realized variance in interval j

The properties of the estimator \(\hat{\sigma }_j^2\) are well understood for a variety of models for the efficient price process \((P_t)_{t\ge 0}\). For example, if the dynamics of the efficient price process follow the stochastic model \(d P_t=\sigma P_t dW_t\), with \(\sigma >0\), the estimator \(\hat{\sigma }_j^2\) converges in probability to \(\sigma ^2 T\) (the quadratic variation of \(\left( \log (P_t)\right) _{t\ge 0}\) over an interval of length T) as the transactions within interval j become dense (as \(N_j\rightarrow \infty \)). The limit of \(\hat{\sigma }_j^2\), however, does not coincide with the quadratic variation of the efficient price process if the observed midquote price is contaminated by market microstructure noise. This noise arises, for instance, from market imperfections such as price discreteness or informational content in price changes, see [7]. To check the robustness of our analysis with respect to the presence of market microstructure noise, we also replace the realized variance by the noise-robust estimator of the quadratic variation proposed in [15] and confirm several of the results below. It should be noted that a distortion of the analysis by the bid-ask bounce is already avoided by considering midquote prices rather than transaction prices. The interested reader will find a gentle introduction explaining how noisy price observations erode the realized variance in [1].
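For concreteness, a realized variance of log midquote prices can be computed as in the following sketch. It uses the textbook form, the sum of squared log-returns between consecutive transactions, without any normalization by the interval length; whether the estimator used in the analysis carries such a normalization is not visible here, so this form is an assumption.

```python
import math

def realized_variance(mid_prices):
    """Sum of squared log-returns of consecutive midquote prices."""
    logs = [math.log(p) for p in mid_prices]
    return sum((later - earlier) ** 2 for earlier, later in zip(logs, logs[1:]))

# Three hypothetical midquotes: up 1%, then back down.
rv = realized_variance([100.0, 101.0, 100.0])
```

Because the estimator is built from midquotes, a transaction price that merely bounces between bid and ask does not contribute to the sum.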

Last but not least, note that Benzaquen et al. [6] in fact define the cost per trade by \(\widetilde{C}_j = N_{j}^{-1} \sum _{k=1}^{N_j} Q_{t_k} S_{t_k}\). The difference between the two definitions vanishes if the bid-ask spread \(S_{t_k}\) is constant over the entire interval j and sample averages are used. As we shall see, the results presented below are robust with respect to the employed version of the cost per trade.
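The reason the two definitions agree for a constant spread can be checked in one line. The sketch assumes that \(Q_j\) and \(S_j\) are the plain sample averages rather than trimmed means: if \(S_{t_k} = S\) for all trades in the interval j, then

$$\begin{aligned} \widetilde{C}_j = \frac{1}{N_j} \sum _{k=1}^{N_j} Q_{t_k} S_{t_k} = S \cdot \frac{1}{N_j} \sum _{k=1}^{N_j} Q_{t_k} = S_j \times Q_j = C_j. \end{aligned}$$

With trimmed means, or with a spread that co-moves with the trade size, the two quantities generally differ.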

3.3 \(N \sim \sigma ^2\) versus \(N^{3/2} \sim \sigma P V /C\)

To check which of the relations \(N \sim \sigma ^2\) and \(N^{3/2} \sim \sigma P V /C\) is better supported by the data, we consider for each stock (\(i=1,\ldots ,d\)) a multiplicative model of the form

where \(\varepsilon _{ij}\), \(j=1,\ldots ,n\), is an error term that satisfies standard regularity conditions and \(\alpha _i\), \(\beta _i\) and \(\gamma _i\) are unknown real valued parameters. A logarithmic transformation of (21) yields the linear model

Since dimensional analysis imposes the restriction \(\beta _i+\gamma _i=1\) on the parameters \(\beta _i\) and \(\gamma _i\), the value \(\gamma _i=0\) would imply the relation \(N\sim \sigma ^2\), whereas \(\gamma _i = 2/3\) would imply the relation \(N^{3/2} \sim \sigma P V /C\) from Theorem 1. The estimation of the coefficients \(\beta _i\) and \(\gamma _i\) subject to the restriction \(\beta _i+\gamma _i=1\) therefore allows us to infer which of the two discussed relations is backed by stronger empirical evidence.
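As a worked check of the two special cases, consider one parametrization that is consistent with the stated restriction. Its exact form is an assumption made here for illustration, namely \(N = e^{\alpha } (\sigma ^2)^{\beta } (P V/C)^{\gamma }\), with stock and interval indices as well as the error term suppressed. Substituting \(\beta = 1-\gamma \) gives

$$\begin{aligned} N = e^{\alpha } \, (\sigma ^2)^{1-\gamma } \left( \frac{P V}{C}\right) ^{\gamma }. \end{aligned}$$

For \(\gamma = 0\) this reads \(N = e^{\alpha } \sigma ^2\), i.e., \(N\sim \sigma ^2\). For \(\gamma = 2/3\) it reads \(N = e^{\alpha } \sigma ^{2/3} (P V/C)^{2/3}\), and raising both sides to the power 3/2 yields

$$\begin{aligned} N^{3/2} = e^{3\alpha /2} \cdot \frac{\sigma P V}{C}, \end{aligned}$$

which is the 3/2-law with proportionality constant \(e^{3\alpha /2}\).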

Before turning to the constrained estimation of the parameters \(\beta _i\) and \(\gamma _i\), it deserves to be emphasized that the functional relation between the logarithmic dependent variable \(\log (N_{ij})\) and the logarithmic explanatory variable \(\log (\hat{\sigma }_{ij} P_{ij} V_{ij}/C_{ij})\) can be reasonably assumed to be linear for all stocks \(i=1,\dots ,d\). To conclude this, we have visually inspected the bivariate point clouds of the dependent and the explanatory variable. Figure 1 illustrates this relation for the stocks of the American Airlines Group, Inc. (AAL) and Apple Inc. (AAPL). The remaining 126 stocks show similar patterns.

For each stock (\(i=1,\dots ,d\)) and all interval lengths \(T\in \{30, 60, 120, 180, 360\}\) min, we estimate the parameters \(\beta _i\) and \(\gamma _i\) in (22) by ordinary least squares subject to the constraint \(\beta _i+\gamma _i=1\). The corresponding estimate of interest is denoted by \(\hat{\gamma }_i\). To present the results of these regressions in an informative and compact way, Fig. 2 shows kernel density estimates of \(\hat{\gamma }_i\) across i and for fixed T.
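The constrained least-squares step can be sketched for a single stock as follows. The sketch assumes the log-linear form \(\log N = \alpha + \beta \log \sigma ^2 + \gamma \log (PV/C) + \varepsilon \), which is one parametrization consistent with the restriction \(\beta +\gamma =1\) but is not confirmed by the text; under this assumption the restriction reduces the problem to a simple regression with slope \(\gamma \). The function name and the synthetic data are hypothetical.

```python
import math

def constrained_ols_gamma(N, sigma2, P, V, C):
    """OLS estimate of (alpha, gamma) subject to beta + gamma = 1.

    Assumed model: log N = alpha + beta*log(sigma2) + gamma*log(P*V/C) + eps.
    Substituting beta = 1 - gamma gives a simple regression of
    y = log N - log sigma2 on x = log(P*V/C) - log sigma2.
    """
    y = [math.log(n) - math.log(s2) for n, s2 in zip(N, sigma2)]
    x = [math.log(p * v / c) - math.log(s2)
         for p, v, c, s2 in zip(P, V, C, sigma2)]
    x_bar = sum(x) / len(x)
    y_bar = sum(y) / len(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    gamma = sxy / sxx
    alpha = y_bar - gamma * x_bar
    return alpha, gamma

# Synthetic intervals that satisfy the 3/2-law exactly (illustrative only).
sigma2 = [1e-4, 2e-4, 4e-4, 3e-4]
P = [10.0, 11.0, 12.0, 13.0]
V = [1000.0, 1500.0, 900.0, 2000.0]
C = [2.0, 3.0, 2.5, 4.0]
N = [2.0 * s2 ** (1 / 3) * (p * v / c) ** (2 / 3)
     for s2, p, v, c in zip(sigma2, P, V, C)]
alpha_hat, gamma_hat = constrained_ols_gamma(N, sigma2, P, V, C)
```

On data generated from the 3/2-law the slope estimate recovers 2/3, whereas data generated from \(N\sim \sigma ^2\) would give a slope of zero.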

First, let us come to the main result of this section and concentrate on the solid graphs in Fig. 2 referring to the standard setting based on the realized variance \(\hat{\sigma }_{ij}^2\) defined in (20) and the cost per trade \(C_{ij} = Q_{ij} \times S_{ij}\). If the parameter \(\gamma _i\) of the linear model (22) is equal to zero, then the underlying variables satisfy the simple relation \(N\sim \sigma ^2\). Similarly, if the parameter \(\gamma _i\) is equal to 2/3, then we can conclude that the 3/2-law from Theorem 1 holds. As seen in Fig. 2, the averages of the estimates \(\hat{\gamma }_i\) (across i for different T) are clearly much closer to 2/3 than to zero for all considered interval lengths T. This result supports the claim made in Sect. 2 that there is stronger empirical support for the 3/2-law (or equivalently for the relation \(N \sim (\sigma P / S)^2\)) than for the relation \(N \sim \sigma ^2\).

Regarding the robustness of this insight, we have repeated the above regression analysis for two slightly different scenarios. The first alternative setting replaces the realized variance in the linear model (22) by the market microstructure noise robust estimator of the quadratic variation of [15]. The dashed graphs in Fig. 2 show density estimates based on the corresponding parameter estimates \(\hat{\gamma }_i\), \(i=1,\ldots ,d\). The second modification of the initial setting replaces the cost per trade \(C_j\) in the linear model (22) by the variant \(\widetilde{C}_j\) of [6]. The dotted graphs in Fig. 2 refer to the corresponding density estimates. Despite some deviation in the estimates \(\hat{\gamma }_i\) for these two alternative settings from the initial one, the solid, dashed and dotted graphs document a rather similar pattern among the estimates of the parameters \(\gamma _i\) for all interval lengths \(T\in \{30, 60, 120, 180, 360\}\) min. These similarities lead to the conclusion that neither market microstructure noise nor the exact definition of the cost per trade erodes the overall relation between the dependent and explanatory variables. In the remaining part of the manuscript, we take a closer look at the 3/2-law and try to find reasonable explanations for the systematic deviations of the estimates \(\hat{\gamma }_i\) from 2/3.

3.4 On the universality of the 3/2-law

In order to check the validity and universality of the 3/2-law, \(N^{3/2} = c \cdot \sigma P V / C\) (or equivalently of the relation \(N = c^2 \cdot (\sigma P / S)^2\)), we examine the variation of the constant c across assets and interval lengths. Hence, we do not rely on the estimators \(\hat{\gamma }_i\) computed in Sect. 3.3. Instead, we compute for a fixed interval length T the quantity

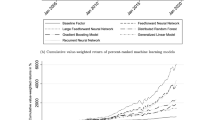

where n is the number of non-overlapping time intervals with equal length T. The left panel of Fig. 3 shows the estimates \(\hat{c}_i\) for different values of T. Note that the rainbow color code refers to the ordered values of \(\hat{c}_i\) for \(T=120\) min. As we recover the same rainbow pattern for the other interval lengths \(T \in \lbrace 30, 60, 180, 360 \rbrace \) min, we conclude that the estimates \(\hat{c}_i\) for a fixed stock i vary little across different interval lengths T. This small variation of \(\hat{c}_i\) for fixed i and varying \(T\in \{30, 60, 120, 180, 360\}\) min endows the 3/2-law with a certain degree of universality. However, the cross-sectional dispersion in \(\hat{c}_i\) across different assets i, i.e., the fact that the estimates \(\hat{c}_i\) range from two to five depending on the considered stock, rules out strong universality of the 3/2-law. Thus, we draw the same conclusion as Benzaquen et al. [6], namely that the 3/2-law holds with weak universality. For completeness, the kernel density estimate in the right panel of Fig. 3 illustrates the distribution of the estimates \(\hat{c}_i\), \(i=1,\ldots ,d\), for \(T=120\) min.
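A per-stock estimate of the constant c might be computed as in the sketch below. It assumes that \(\hat{c}_i\) is the average over the n intervals of the ratio \(N_{ij}^{3/2} C_{ij} / (\hat{\sigma }_{ij} P_{ij} V_{ij})\), which is what solving the 3/2-law for c and averaging suggests; the function name `c_hat` and the synthetic inputs are hypothetical.

```python
def c_hat(N, sigma, P, V, C):
    """Average of N^(3/2) * C / (sigma * P * V) over the intervals of one stock."""
    ratios = [n ** 1.5 * c / (s * p * v)
              for n, s, p, v, c in zip(N, sigma, P, V, C)]
    return sum(ratios) / len(ratios)

# Synthetic intervals generated from N^(3/2) = 3 * sigma*P*V/C (illustrative only).
sigma = [0.01, 0.02, 0.015]
P = [50.0, 51.0, 49.0]
V = [2.0e5, 3.0e5, 2.5e5]
C = [4.0, 5.0, 4.5]
N = [(3.0 * s * p * v / c) ** (2 / 3) for s, p, v, c in zip(sigma, P, V, C)]
estimate = c_hat(N, sigma, P, V, C)
```

Since \(V_{ij}/C_{ij} = N_{ij}/S_{ij}\) by the definitions of Sect. 3.2, the same ratio can be written as \(N_{ij}^{1/2} S_{ij} / (\hat{\sigma }_{ij} P_{ij})\), which matches the equivalent form \(N = c^2 \cdot (\sigma P/S)^2\).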

4 A closer look on volatility

We have seen that the volatility \(\sigma \) plays a dominant role in explaining the trading activity N. The squared volatility \(\sigma ^2\) of a given stock during a fixed interval \([t,t+T]\) was defined as the variance of the change of the log-price

When specifying the definition of \(\sigma ^2\) in this way we had in mind the Black–Scholes model,

where, fixing the normalization \(T=1\), formula (23) indeed recovers the constant \(\sigma \) in (24). Going beyond Black–Scholes, consider a price process of the form

where \((\sigma _t)_{t \ge 0}\) is an arbitrary stochastic process (satisfying suitable regularity conditions). In this case, formula (23) should, of course, be interpreted conditionally on the sigma-algebra \(\mathcal {F}_t\) and we obtain the “Wald identity”

This implies in particular that, as long as we are in the framework of processes of the form (25), the above chosen scaling

is the only reasonable choice.

But let us have a closer look at what we are actually doing here. The above reasoning tacitly assumes that we are starting from a stochastic model of a price process. The present situation, however, dictates a different point of view: we start from empirical tick data observed during the interval \([t,t+T]\). Even when we make the heroic assumption that this data is accurately modeled, e.g. by the Black–Scholes model (24), the number \(\sigma ^2\) which we plug into the formula \(N=g(\sigma ^2,\dots )\) can only be an estimator of \(\sigma ^2\) obtained from the data at hand. This implies that, strictly speaking, we should write our formulas as \(N= g(\hat{\sigma }^2,\dots )\), i.e., as a function of the estimated squared volatility \(\hat{\sigma }^2\). The gist of the argument is that for the purpose of dimensional analysis the scaling which is relevant is that of the estimator of the volatility rather than that of the true volatility (whatever this is). To be concrete, suppose that we are given price data \((P_{t_k})_{k=1,\dots ,N}\) for a grid \(t\le t_{1}<\dots <t_N \le t+T\) in the interval \([t,t+T]\). An obvious choice for the estimator of the squared volatility, which is also used in Sect. 3 above, is

Clearly, this estimator has the dimension \([\hat{\sigma }^2] = \mathbb {T}^{-1}\) if we suppose that the typical distance \(\Delta t_k = t_{k+1}- t_k\) (in absolute terms) does not depend on whether we measure time in seconds or in minutes. Hence, for the estimator \({\hat{\sigma }}^2\), the hypothesis \([\hat{\sigma }^2] = \mathbb {T}^{-1}\) underlying the dimensional analysis in Sect. 2 is satisfied.

However, we can also think of other estimators. Fix \(H\in (0,1)\) and define the estimator \(\hat{\sigma }^2(H)\) by

To motivate this estimator, consider the model

where \(\sigma >0\) is a fixed number and \((W_t^H)_{t\ge 0}\) is a fractional Brownian motion with Hurst parameter H, starting at \(W_0^H=0\). In this case, the estimator \(\hat{\sigma }^2(H)\) in (28) is a consistent estimator for the parameter \(\sigma ^2\) in (29). But the estimator \(\hat{\sigma }^2(H)\) now scales differently in time than the quadratic estimator \(\hat{\sigma }^2\) (see [10, 27]), namely

Models for the price process \((P_t)_{t\ge 0}\) involving fractional Brownian motion as in (29) have been proposed, notably by B. Mandelbrot, already more than 50 years ago [22, 23] and there may be good reasons not to rule them out a priori.

Here is another example where a sub-diffusive behavior of the price process \((P_t)_{t\ge 0}\) occurs, due to a micro-structural effect: the discrete nature of the prices in the real world (compare Benzaquen et al. [6]; we thank Jean-Philippe Bouchaud for bringing this phenomenon to our attention). To present the idea in its simplest possible form, suppose that the price process \((\check{P}_t)_{t\ge 0}\) is given by

where \((W_t)_{t \ge 0}\) is a standard Brownian motion and \(\text {int}(x)\) denotes the integer closest to the real number x, i.e., \(\text {int}(x) = \sup \lbrace n \in \mathbb {Z}: n \le x + 0.5 \rbrace \). Fix again an interval \([t,t+T]\) and consider the quantity

For small \(T>0\), we show in Appendix C that

for some constant \(c>0\). Hence, if the interval length T is sufficiently small, we recover that \([\check{\sigma }^2 ] = \mathbb {T}^{-1/2}\), rather than the usual scaling in the dimension time, i.e., \(\mathbb {T}^{-1}\).

This observation indicates that if the interval length T is small compared to the width of the price grid, i.e., the tick value, we observe a sub-diffusive behavior of the price process even if the “efficient”, unobserved price process is assumed to be a diffusion. We refer to Robert and Rosenbaum [28] for a detailed discussion of how to account for the discrete nature of prices. For now, this rough argument should only serve as motivation that there might be plenty of reasons why the scaling \([\sigma ^2] = \mathbb {T}^{-1}\) is, in practical situations, not as clearly granted as it might seem at first glance.

For all these reasons we drop in this section the convenient dimensional assumption \([\sigma ^2] = \mathbb {T}^{-1}\) and replace it by the subsequent more general assumption.

H-Assumption. There is \(H \in (0,1)\) such that the squared volatility estimator \(\hat{\sigma }^2(H)\) has dimension \([\hat{\sigma }^2(H)] = \mathbb {T}^{-2H}\).

Proposition 2

(\((1+H)\)-law). Suppose that the “Leverage Neutrality Assumption” as well as the “H-Assumption” hold true and that the number of trades N depends only on the four quantities \(\hat{\sigma }^2(H), P, V\) and C, i.e.,

where the function \(g:\mathbb {R}_+^4\rightarrow \mathbb {R}_+\) is dimensionally invariant and leverage neutral. Then, there is a constant \(c>0\) such that the number of trades N obeys the relation

The proof is analogous to the proof of Theorem 1 and is given in Appendix B.
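The exponent \(1+H\) can be made plausible by a quick dimensional check. This is only a sketch: it assumes the dimensions \([N] = \mathbb {T}^{-1}\), \([P] = \mathbb {U}\mathbb {S}^{-1}\), \([V] = \mathbb {S}\mathbb {T}^{-1}\) and \([C] = \mathbb {U}\), which are not restated in this section, together with \([\hat{\sigma }^2(H)] = \mathbb {T}^{-2H}\), and it assumes that the relation has the form \(N^{1+H} = c \cdot \hat{\sigma }(H) P V/C\). Under these assumptions,

$$\begin{aligned} \left[ \frac{\hat{\sigma }(H) P V}{C}\right] = \mathbb {T}^{-H} \cdot \mathbb {U}\mathbb {S}^{-1} \cdot \mathbb {S}\mathbb {T}^{-1} \cdot \mathbb {U}^{-1} = \mathbb {T}^{-(1+H)} = \left[ N^{1+H}\right] , \end{aligned}$$

so that both sides carry the same dimension. For \(H=1/2\) the exponent becomes 3/2 and the relation reduces to the 3/2-law.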

The hypothesis of the above proposition assumes that \(H \in (0,1)\) is known a priori. As H is typically unknown in practical applications, we can therefore ask the following question: For which H does relation (31) fit the empirical data best? We address this question in the following subsection.

4.1 Empirical evidence under the H-Assumption

According to arguments from dimensional analysis, the constant c and the parameter H from Eq. (31) should ideally be identical for all stocks and all interval lengths T. The empirical results above, however, have revealed cross-sectional dispersion which might be related to the restrictive assumption \([\hat{\sigma }^2]=\mathbb {T}^{-1}\). This restriction motivates the empirical exercise of this section: Can we determine an \(H\in (0,1)\) in (31) that minimizes the cross-sectional dispersion across the estimates of c?

Following Proposition 2, we therefore compute the estimates \(\hat{c}_i(H)\) for different H as

where \(\hat{\sigma }_{ij}^2(H)\) is defined in (28), \(H \in (0,1)\). Both variables \(N_{ij}^{H}\) and \(\hat{\sigma }_{ij}(H)\) increase as H increases, so that it is not obvious how \({\hat{c}}_i(H)\) behaves when H increases. We find empirically that overall the constant \(\hat{c}_i(H)\) typically increases in H. Addressing the above question therefore requires a scale invariant measure for the variation in \(\hat{c}_i(H)\) such as the Gini-coefficient which is given by

for the ordered data \(x_{[1]}<x_{[2]}<\ldots <x_{[n]}\). Note that the Gini-coefficient \(\mathcal {G}(x_1,\ldots ,x_n)\in [0,1]\) is interpreted as a measure for inequality. If all values \(x_1,\ldots ,x_n\) are equal, \(\mathcal {G}\) equals zero. In case of strong heterogeneity in \(x_1,\ldots ,x_n\) the Gini-coefficient approaches one (see Note 3).
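For readers who want to reproduce the dispersion measure, the sketch below implements the Gini-coefficient in its standard textbook form for ordered data, \(\mathcal {G} = 2\sum _{k} k\, x_{[k]} / (n \sum _{k} x_{[k]}) - (n+1)/n\). That this is exactly the form used in the analysis is an assumption.

```python
def gini(values):
    """Gini-coefficient of non-negative values (standard rank-based form)."""
    x = sorted(values)
    n = len(x)
    total = sum(x)
    weighted = sum(rank * v for rank, v in enumerate(x, start=1))
    return 2.0 * weighted / (n * total) - (n + 1.0) / n

equal = gini([2, 2, 2, 2])          # no inequality
concentrated = gini([0, 0, 0, 1])   # all mass on one observation
```

The measure is scale invariant: multiplying all values by a positive constant leaves it unchanged, which is the property needed when \(\hat{c}_i(H)\) changes its overall level with H.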

Now, we minimize the Gini-coefficient of \(\left( \hat{c}_i(H) \right) _{i=1,\dots ,d}\) with respect to H in order to find

The left panel of Fig. 4 plots the Gini-coefficient as a function of H for different interval lengths T. We roughly find that \(\widehat{H}=0.22\) for \(T=30\) min, \(\widehat{H}=0.23\) for \(T=60\) min, \(\widehat{H}=0.25\) for \(T=120\) min, \(\widehat{H}=0.27\) for \(T=180\) min and \(\widehat{H}=0.31\) for \(T=360\) min. The rainbow color code of Fig. 3 has been transferred to the right panel of Fig. 4. In contrast to Fig. 3, however, we present the quantities \(\hat{c}_i(\widehat{H})\) evaluated at the optimal \(\widehat{H}\) for the given interval length T. In case \(T=120\) min for instance, the estimates \(\hat{c}_i(H=0.25)\) range from 1.2 to 2.6 for different assets i. On an absolute scale, the variation seems to be smaller compared to Fig. 3, where the estimates \(\hat{c}_i(H=0.5)\) lie between 2 and 4.5 for the same interval length \(T=120\) min. In relative terms though, the difference between the variation in \(\hat{c}_i(H=0.25)\) and \(\hat{c}_i(H=0.5)\) is not so significant, as \(\mathcal {G}\left( \hat{c}_1(H=0.25),\dots ,\hat{c}_d(H=0.25) \right) = 0.11\) compared to \(\mathcal {G}\left( \hat{c}_1(H=0.5),\dots ,\hat{c}_d(H=0.5) \right) = 0.14\) for \(T=120\) min.
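The minimization over H can be sketched as a plain grid search. The grid, the helper names and in particular the stand-in function `toy_c_hat` are hypothetical; in an actual application the callable would return the cross-section \(\hat{c}_1(H),\dots ,\hat{c}_d(H)\) computed from data, and the Gini-coefficient is taken in its standard rank-based form (an assumption).

```python
def gini(values):
    """Gini-coefficient of non-negative values (standard rank-based form)."""
    x = sorted(values)
    n = len(x)
    weighted = sum(rank * v for rank, v in enumerate(x, start=1))
    return 2.0 * weighted / (n * sum(x)) - (n + 1.0) / n

def best_hurst(c_hat_of_H, grid):
    """Return the grid point H whose cross-section of estimates is most homogeneous."""
    return min(grid, key=lambda H: gini(c_hat_of_H(H)))

def toy_c_hat(H):
    """Toy stand-in for the per-stock estimates: identical across stocks at H = 0.25."""
    return [1.0 + abs(H - 0.25) * k for k in range(1, 6)]

grid = [round(0.01 * k, 2) for k in range(1, 100)]   # H = 0.01, ..., 0.99
H_opt = best_hurst(toy_c_hat, grid)
```

A grid search is used here because the objective is cheap to evaluate on a one-dimensional parameter and need not be smooth in H.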

For now, we can only speculate on reasons why the optimal \(\widehat{H}\) is strikingly smaller than 1/2 for all interval lengths T. The quantity \(\hat{c}_i(H)\) relies on tick-by-tick data, so that an obvious explanation for these unexpected optimal values of H is the presence of market microstructure effects. To be more concrete, Benzaquen et al. [6] observe, similarly to our results, a sub-diffusive behavior for so-called large tick futures contracts. Large tick assets are defined such that their bid-ask spread is almost always equal to one tick, see e.g. [13]. Most of the stocks in our sample can be categorized as large tick stocks based on this definition.

Fig. 4 The left panel illustrates the Gini-coefficient as a function of H for \(T=30\) min (solid), \(T=60\) min (long-dashed), \(T=120\) min (dashed), \(T=180\) min (dashed-dotted) and \(T=360\) min (dotted). The right panel shows the computed values for \(\hat{c}_i(\widehat{H})\) such that \(\widehat{H}\) minimizes the Gini-coefficient for fixed \(T\in \{30, 60, 120, 180, 360\}\) min.

When referring to market microstructure effects, however, it deserves to be stressed that the value \(H=1/2\) is implied by numerous models for the efficient price process \((P_t)_{t\ge 0}\), which are backed by empirical evidence and take market microstructure effects into account. Hence, the scaling of the squared volatility through time implied by \(H=1/2\) seems suitable in many applications. We also note that the Gini-coefficient \(\mathcal {G}\) in Fig. 4 does not vary drastically when H ranges between the optimal \(\widehat{H} \approx 0.25\) and the traditional \(H=1/2\), namely roughly between \(\mathcal {G}= 0.12\) and \(\mathcal {G}= 0.15\). Hence, the value of H does not seem to play a very significant role in explaining the heterogeneity of the values \(\hat{c}_i(H)\). Nevertheless, a better understanding of the behavior of \(\widehat{H}\) seems to us a challenging topic for future research.

5 Conclusion

Finding laws relating the trading activity (defined here as the number of trades N within a given time interval) to other relevant market quantities has been the subject of numerous investigations, the earliest contributions dating as far back as the beginning of the 1970s. Two decades later, Jones et al. [16] suggested the relation \(N\sim \sigma ^2\) based on an extensive empirical study. Other landmark contributions include the relation \(N \sim (\sigma P/S)^2\) of Madhavan et al. [21] and Wyart et al. [31], and the so-called 3/2-law \(N^{3/2}\sim \sigma P V/C\) of Benzaquen et al. [6], which were obtained using market microstructure arguments and supported by empirical evidence. In the first part of the paper we show that all these scaling laws can be derived using arguments relying on dimensional analysis. The relation \(N\sim \sigma ^2\) follows from the assumption that N is fully explained by the squared volatility \(\sigma ^2\), the asset price P and the traded volume V, and the assumption that the relation between these quantities is invariant under changes of the dimensions shares \(\mathbb {S}\), time \(\mathbb {T}\) and money \(\mathbb {U}\). The somewhat refined relation \(N^{3/2}\sim \sigma PV/C\) is obtained when assuming that N depends only on \(\sigma ^2, P, V\) and the cost of trading C, and assuming in addition that an invariance principle known as “Leverage Neutrality” holds true. This “Leverage Neutrality Assumption” can be seen as a no-arbitrage condition enabling us to obtain a unique functional relation from the assumption \(N= g(\sigma ^2, P, V, C)\). Substituting the quantity C by the bid-ask spread S in the latter assumption, we derive the relation \(N \sim (\sigma P/S)^2\), which is shown to be equivalent to the 3/2-law. Alternatively, we can consider the volatility of the absolute price change instead of the relative price change, i.e., assume \(N = g(\sigma ^2P^2, V, C)\) or \(N = g(\sigma ^2P^2, V, S)\). This assumption simplifies the analysis in that a unique solution for \(g(\cdot ,\cdot ,\cdot )\) can be obtained without recourse to the “Leverage Neutrality Assumption”. Since our theoretical analysis relies on a set of well-defined, but not necessarily realistic assumptions, the validity of any of the aforementioned scaling laws needs to be confirmed through an empirical analysis.

Based on data from the NASDAQ stock exchange, we provide empirical evidence that the 3/2-law \(N^{3/2}=c\cdot \sigma PV/C\) (or equivalently \(N = c^2\cdot (\sigma P/S)^2\)) fits the data clearly better than \(N\sim \sigma ^2\). In fact, the 3/2-law holds for a fixed asset and a fixed interval length. However, the estimated value of the constant c strongly depends on the considered asset. In the language of Benzaquen et al. [6], this means that the 3/2-law holds with weak universality.

Finally, we note that both our theoretical and empirical analysis relied on the assumption that the scaling of \(\sigma ^2\) is inversely proportional to time \(\mathbb {T}\). This hypothesis is clearly debatable as it tacitly assumes diffusive price behavior and ignores, e.g., the discrete nature of prices. A closer look at the scaling of \(\sigma ^2\) suggests the scaling \([\sigma ^2] = \mathbb {T}^{-2H}\) for some \(H \in (0,1)\) that can be seen, e.g., as the Hurst parameter of a fractional Brownian motion. Repeating our dimensional arguments, the latter scaling of \(\sigma ^2\) yields the relation \(N^{1+H}\sim \sigma PV/C\), which reduces to the 3/2-law for \(H=1/2\). An essential drawback of this more general situation is that the parameter H is unknown. We formulate an optimality criterion for the choice of H: it should yield the most homogeneous estimates for the proportionality coefficients \(\hat{c}_i(H)\). A preliminary analysis suggests that, on average, the optimal \(\widehat{H}\) is of the order 0.25, i.e., quite different from the assumption \(H= 0.5\). Although the passage from \(H=0.5\) to \(\widehat{H} \approx 0.25\) turns out to have only mild effects on the issue of universality of the corresponding laws, we believe that this phenomenon merits further investigation.

Notes

1. A meta-order, also referred to as bet, is a collection of trades originating from the same trading decision of a single investor.

2. Note that Kyle and Obizhaeva [19] use the argument of leverage neutrality in the context of market impact. But, of course, the same idea applies in the present situation.

3. The coefficient of variation, defined as the ratio of the standard deviation to the sample average, could be employed as an alternative to the Gini-coefficient. The presented results are largely robust with respect to the chosen measure of standardized dispersion.

References

Aït-Sahalia, Y., Yu, J.: High frequency market microstructure noise estimates and liquidity measures. Ann. Appl. Stat. 3(1), 422–457 (2009)

Andersen, T.G.: Return volatility and trading volume: an information flow interpretation of stochastic volatility. J. Finance 51(1), 169–204 (1996)

Andersen, T.G., Bondarenko, O., Kyle, A.S., Obizhaeva, A.A.: Intraday trading invariance in the E-mini S&P 500 futures market. Available at SSRN: 2693810 (2016)

Ané, T., Geman, H.: Order flow, transaction clock, and normality of asset returns. J. Finance 55(5), 2259–2284 (2000)

Bachelier, L.: Théorie de la spéculation. Annales scientifiques de l’École Normale Supérieure 17(3), 21–86 (1900). https://doi.org/10.24033/asens.476

Benzaquen, M., Donier, J., Bouchaud, J.-P.: Unravelling the trading invariance hypothesis. Mark. Microstruct. Liq. 02(03n04), 1650009 (2016)

Black, F.: Noise. J. Finance 41(3), 528–543 (1986)

Bluman, G., Kumei, S.: Symmetries and Differential Equations, vol. 154. Springer, New York (2013)

Clark, P.K.: A subordinated stochastic process model with finite variance for speculative prices. Econometrica 41(1), 135–155 (1973)

Coutin, L.: An introduction to (stochastic) calculus with respect to fractional Brownian motion. In: Séminaire de Probabilités XL. Volume 1899 of Lecture Notes in Mathematics, pp. 3–65. Springer, New York (2007)

Curtis, W., Logan, J.D., Parker, W.: Dimensional analysis and the Pi theorem. Linear Algebra Appl. 47, 117–126 (1982)

Dufour, A., Engle, R.F.: Time and the price impact of a trade. J. Finance 55(6), 2467–2498 (2000)

Eisler, Z., Bouchaud, J.-P., Kockelkoren, J.: The price impact of order book events: market orders, limit orders and cancellations. Quant. Finance 12(9), 1395–1419 (2012)

Epps, T.W., Epps, M.L.: The stochastic dependence of security price changes and transaction volumes: implications for the mixture-of-distributions hypothesis. Econometrica 44(2), 305–321 (1976)

Hautsch, N., Podolskij, M.: Preaveraging-based estimation of quadratic variation in the presence of noise and jumps: theory, implementation, and empirical evidence. J. Bus. Econ. Stat. 31(2), 165–183 (2013)

Jones, C.M., Kaul, G., Lipson, M.L.: Transactions, volume, and volatility. Rev. Financ. Stud. 7(4), 631–651 (1994)

Karpoff, J.M.: The relation between price changes and trading volume: a survey. J. Financ. Quant. Anal. 22(1), 109–126 (1987)

Kyle, A.S., Obizhaeva, A.A.: Market microstructure invariance: empirical hypotheses. Econometrica 84(4), 1345–1404 (2016)

Kyle, A.S., Obizhaeva, A.A.: Dimensional analysis, leverage neutrality, and market microstructure invariance. Available at SSRN: 2785559 (2017)

Liesenfeld, R.: A generalized bivariate mixture model for stock price volatility and trading volume. J. Econom. 104(1), 141–178 (2001)

Madhavan, A., Richardson, M., Roomans, M.: Why do security prices change? A transaction-level analysis of NYSE stocks. Rev. Financ. Stud. 10(4), 1035–1064 (1997)

Mandelbrot, B.: The variation of certain speculative prices. J. Bus. 36(4), 394–419 (1963)

Mandelbrot, B.B., Van Ness, J.W.: Fractional Brownian motions, fractional noises and applications. SIAM Rev. 10(4), 422–437 (1968)

Modigliani, F., Miller, M.H.: The cost of capital, corporation finance and the theory of investment. Am. Econ. Rev. 48(3), 261–297 (1958)

Pobedrya, B.E., Georgievskii, D.V.: On the proof of the \(\pi \)-theorem in dimension theory. Russ. J. Math. Phys. 13(4), 431–437 (2006)

Pohl, M., Ristig, A., Schachermayer, W., Tangpi, L.: The amazing power of dimensional analysis: quantifying market impact. Mark. Microstruct. Liq. 1850004 (2018)

Pratelli, M.: A remark on the 1/H-variation of the fractional Brownian motion. Séminaire de Probabilités 43, 215–219 (2011)

Robert, C.Y., Rosenbaum, M.: A new approach for the dynamics of ultra-high-frequency data: the model with uncertainty zones. J. Financ. Econom. 9(2), 344–366 (2010)

Schachermayer, W., Teichmann, J.: How close are the option pricing formulas of Bachelier and Black–Merton–Scholes? Math. Finance 18(1), 155–170 (2008)

Tauchen, G.E., Pitts, M.: The price variability–volume relationship on speculative markets. Econometrica 51(2), 485–505 (1983)

Wyart, M., Bouchaud, J.-P., Kockelkoren, J., Potters, M., Vettorazzo, M.: Relation between bid ask spread, impact and volatility in order driven markets. Quant. Finance 8(1), 41–57 (2008)

Acknowledgements

Open access funding provided by Austrian Science Fund (FWF). We thank Jean-Philippe Bouchaud, Rama Cont, Friedrich Hubalek and particularly Mathieu Rosenbaum for helpful comments as well as interesting discussions.

Additional information

Dedicated to Georg Pflug.

The authors acknowledge support by the Vienna Science and Technology Fund (WWTF) through project MA14-008. M. Pohl and W. Schachermayer are furthermore supported by the Austrian Science Fund (FWF) under the grants P25815 and P28861. W. Schachermayer additionally appreciates support by the WWTF project MA16-021.

Appendices

Dimensional analysis and the Pi-Theorem

In order to formally prove the results of Sects. 2 and 4, which is done in Appendix B, we need the Pi-Theorem from dimensional analysis. For completeness, we therefore provide the following reminder of this important theorem, which can also be found in [26]. Additionally, the interested reader is referred to Chapter 1 of the book by Bluman and Kumei [8] as well as to Pobedrya and Georgievskii [25] for a historical perspective and to [11] for a purely mathematical treatment of dimensional analysis. We formalize the assumptions behind dimensional analysis in proper generality. However, for the purpose of the present paper we shall only need the degree of generality covered by Corollaries 5 and 6 below.

Assumption 1

(Dimensional analysis).

(i) Let the quantity of interest \(U \in \mathbb {R}_+\) depend on n quantities \(W_1,\dots ,W_n \in \mathbb {R}_+\), i.e.,

$$\begin{aligned} U = h(W_1,W_2,\dots ,W_n), \end{aligned} \tag{32}$$

for some function \(h:\mathbb {R}_+^n \rightarrow \mathbb {R}_+\).

(ii) The quantities \(U,W_1,\dots ,W_n\) are measured in terms of m fundamental dimensions labelled \(L_1,\dots ,L_m\), where \(m \le n\). For any positive quantity X, its dimension [X] satisfies \([X] = L^{x_1}_1\cdots L^{x_m}_m\) for some \(x_1,\dots ,x_m \in \mathbb {R}\). If \([X]=1\), the quantity X is called dimensionless.

The dimensions of the quantities \(U,W_1,W_2,\dots ,W_n\) are known and given in the form of vectors a and \(b^{(i)}\in \mathbb {R}^m\), \(i = 1, \dots , n\), satisfying \([U] = L_1^{a_1}\cdots L_m^{a_m}\) and \([W_i] = L_1^{b_{1i}}\cdots L_m^{b_{mi}}\), \(i = 1,\dots ,n\). Denote by \(B = (b^{(1)},b^{(2)}, \dots ,b^{(n)})\) the \(m \times n\) matrix with column vectors \(b^{(i)}= (b_{1i},\dots , b_{mi})^\top \), \(i=1,\ldots ,n\).

(iii) For the given set of fundamental dimensions \(L_1,\dots ,L_m\), a system of units is chosen in order to measure the value of a quantity. A change from one system of units to another amounts to rescaling all considered quantities. In particular, dimensionless quantities remain unchanged and formula (32) is invariant under arbitrary scaling of the fundamental dimensions.

We can now state the main result from dimensional analysis (see [8]).

Theorem 4

(Pi-Theorem). Under Assumption 1, let \(x^{(i)} := (x_{1i},\dots ,x_{ni})^\top \), \(i=1,\dots ,k:= n-{\text {rank}}(B)\), be a basis of the solutions to the homogeneous system \(Bx=0\) and let \(y:= (y_1,\dots ,y_n)^\top \) be a solution to the inhomogeneous system \(By = a\). Then, there is a function \(f:\mathbb {R}_+^k \rightarrow \mathbb {R}_+\) such that

$$\begin{aligned} U = W_1^{y_1} \cdots W_n^{y_n} \, f(\pi _1,\dots ,\pi _k), \end{aligned}$$

where \(\pi _i := W_1^{x_{1i}} \cdots W_n^{x_{ni}}\) are dimensionless quantities, for \(i=1,\dots ,k\).

We shall only need the special cases \(k=0\) and \(k=1\), which are spelled out in the two subsequent corollaries.

Corollary 5

Under Assumption 1, suppose that \({\text {rank}}(B)=n\) and let \(y:=(y_1,\dots , y_n)^\top \) be the unique solution to the linear system \(By = a\). Then there is a constant \({\text {const}}>0\) such that

$$\begin{aligned} U = {\text {const}} \cdot W_1^{y_1} \cdots W_n^{y_n}. \end{aligned}$$

Corollary 6

Under Assumption 1, suppose that \({\text {rank}}(B) = n - 1\) and let \(x:= (x_1,\dots , x_n)^\top \) and \(y:=(y_1,\dots , y_n)^\top \) be non-trivial solutions to the homogeneous and inhomogeneous systems \(Bx = 0\) and \(By = a\) respectively. Then there is a function \(f: \mathbb {R}_+ \rightarrow \mathbb {R}_+\) such that

$$\begin{aligned} U = W_1^{y_1} \cdots W_n^{y_n} \, f\left( W_1^{x_1} \cdots W_n^{x_n}\right) . \end{aligned}$$

Proofs of Sects. 2 and 4

In this section, we provide formal arguments for the results presented in Sects. 2 and 4. The proofs are based on Corollaries 5 and 6 above.

Proof of Proposition 1

Combining relation (1) and the dimensions of the quantities \(\sigma ^2,P, V\) and N, we obtain that the matrix B as well as the vector a are given by

Table 1 illustrates how B and a relate to the considered quantities and their dimensions. As the matrix B has full rank, i.e., rank\((B) = 3\), applying Corollary 5 yields

$$\begin{aligned} N = c \cdot (\sigma ^2)^{y_1} P^{y_2} V^{y_3} \end{aligned}$$

for some constant \(c>0\), where \(y=(y_1, y_2, y_3)^\top \) is the unique solution of the linear system \(By=a\) which is given by \(y=\left( 1,0,0\right) ^\top \). \(\square \)

Proof of Relation (4)

Combining relation (3) and the dimensions of the quantities \(\sigma ^2,P, V \) and C as well as N, the matrix B as well as the vector a become

The vector \(x= (-1,1,1,-1)^\top \) is a solution of the homogeneous system \(Bx=0\), and the vector \(y = (1,0,0,0)^\top \) is a solution of the inhomogeneous system \(By=a\). Thus, relation (4) follows from Corollary 6. \(\square \)
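The two linear-algebra claims in this proof can be checked numerically. The following is a minimal sketch, not the authors' computation: the dimension exponents in `B` and `a` are assumptions (shares, money and time as fundamental dimensions, with N and \(\sigma ^2\) per unit time, P in money per share, V in shares per unit time and C in money), and the helper name `pi_theorem_data` is hypothetical.

```python
import numpy as np

# ASSUMPTION: these dimension exponents are illustrative and not copied from the paper.
# Rows: fundamental dimensions (shares, money, time).
# Columns: the quantities sigma^2, P, V, C.
B = np.array([
    [0.0, -1.0, 1.0, 0.0],    # shares: P is money per share, V is shares per time
    [0.0, 1.0, 0.0, 1.0],     # money:  P and C each carry one unit of money
    [-1.0, 0.0, -1.0, 0.0],   # time:   sigma^2 and V are per unit time
])
a = np.array([0.0, 0.0, -1.0])  # ASSUMPTION: N is a number of trades per unit time


def pi_theorem_data(B, a):
    """Return (k, y, null_basis): k = n - rank(B), one solution y of By = a,
    and an orthonormal basis of the solutions of Bx = 0 (as columns)."""
    n = B.shape[1]
    rank = np.linalg.matrix_rank(B)
    k = n - rank
    # Least squares returns the minimum-norm solution when By = a is solvable.
    y, *_ = np.linalg.lstsq(B, a, rcond=None)
    if not np.allclose(B @ y, a):
        raise ValueError("the system By = a has no solution")
    # The last n - rank right-singular vectors span the null space of B.
    _, _, vt = np.linalg.svd(B)
    null_basis = vt[rank:].T
    return k, y, null_basis


k, y_min_norm, null_basis = pi_theorem_data(B, a)

# The vectors stated in the proof of relation (4).
x = np.array([-1.0, 1.0, 1.0, -1.0])
y = np.array([1.0, 0.0, 0.0, 0.0])

print("k =", k)
print("Bx = 0 holds:", np.allclose(B @ x, 0.0))
print("By = a holds:", np.allclose(B @ y, a))
```

Under these assumed dimensions, the least-squares solution `y_min_norm` generally differs from \(y=(1,0,0,0)^\top \) by a multiple of x. This is harmless, since any solution of \(By=a\) may be used in Corollary 6 after absorbing the difference into the function f.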

Proof of Theorem 1

Combining the dimensions of the quantities considered in relation (5) and the “Leverage Neutrality Assumption”, we obtain that the matrix B as well as the vector a are given by

As the matrix B has full rank, i.e., rank\((B) = 4\), applying Corollary 5 yields

$$\begin{aligned} N = c \cdot (\sigma ^2)^{y_1} P^{y_2} V^{y_3} C^{y_4} \end{aligned}$$

for some constant \(c>0\), where \(y=(y_1, y_2, y_3, y_4)^\top \) is the unique solution of the linear system \(By=a\) which is given by \(y=\left( 1/3,2/3, 2/3, -2/3 \right) ^\top \). \(\square \)
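As a quick consistency check, the exponents above can be matched against the 3/2-law. This is a reformulation rather than part of the proof, and it assumes that the quantities are ordered as \((\sigma ^2, P, V, C)\), as in the proof of relation (4). Raising the relation to the power 3/2 gives

$$\begin{aligned} N = c\, (\sigma ^2)^{1/3} P^{2/3} V^{2/3} C^{-2/3} \quad \Longleftrightarrow \quad N^{3/2} = c^{3/2}\, \frac{\sigma P V}{C}. \end{aligned}$$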

Proof of Corollary 2

Considering the dimensions of the quantities \(\sigma _B,V,C\), we obtain that the matrix B as well as the vector a are given by

As the matrix B has full rank, i.e., rank\((B) = 3\), applying Corollary 5 yields

for some constant \(c>0\), where \(y=(y_1, y_2, y_3)^\top \) is the unique solution of the linear system \(By=a\) which is given by \(y=\left( 1/3,2/3,-2/3\right) ^\top \). This shows (14). \(\square \)

Proof of Corollary 3

As explained before the statement of Corollary 3, the conditions (5) and (15) are equivalent. Thus, it holds

Since \(C=SV/N\), the corollary follows. \(\square \)
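The substitution can be spelled out as follows. This sketch takes the 3/2-law in the form \(N^{3/2} = c\, \sigma P V/C\) with a generic constant \(c>0\); the name of the constant is an assumption made here for illustration.

$$\begin{aligned} N^{3/2} = c\, \frac{\sigma P V}{C} = c\, \frac{\sigma P V}{SV/N} = c\, \frac{\sigma P N}{S} \quad \Longrightarrow \quad N^{1/2} = c\, \frac{\sigma P}{S} \quad \Longrightarrow \quad N = c^2 \left( \frac{\sigma P}{S}\right) ^2. \end{aligned}$$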

Proof of Proposition 2

The proof is the same as that of Theorem 1 except that in the present case the matrix B and the vector a are given by

The unique solution y of the linear system \(By = a\) is \(y = 1/(1+H)\cdot (1/2,1,1,-1)^\top \). Applying Corollary 5 gives the desired result. \(\square \)
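A small arithmetic sketch may help to relate this solution to Theorem 1: for \(H = 1/2\), the vector \(1/(1+H)\cdot (1/2,1,1,-1)^\top \) reduces to \((1/3,2/3,2/3,-2/3)^\top \). The function name `exponents` is hypothetical and only evaluates the formula stated above with exact fractions.

```python
from fractions import Fraction


def exponents(H):
    """Evaluate y = 1/(1+H) * (1/2, 1, 1, -1) exactly for a rational H."""
    H = Fraction(H)
    scale = 1 / (1 + H)
    return tuple(scale * e for e in (Fraction(1, 2), 1, 1, -1))


# H = 1/2 reproduces the exponents stated in the proof of Theorem 1.
print(exponents(Fraction(1, 2)))
# Another value of H, chosen arbitrarily for illustration.
print(exponents(Fraction(1, 4)))
```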

Integer part of Brownian motion

With the notation from Sect. 4, we want to show that as \(T\searrow 0\)

for some constant \(c>0\). Recall that \(\left( \log (\check{P}_t)\right) _{t\ge 0}\) is given by

where \((W_t)_{t \ge 0}\) is a standard Brownian motion and \(\text {int}(x)\) denotes the integer closest to the real number x, i.e., \(\text {int}(x) = \sup \lbrace n \in \mathbb {Z}: n \le x + 0.5 \rbrace \).

To present the idea in its simplest possible form, note that for fixed \(t>0\), say \(t=1\) and T small, it is straightforward to verify that

So that \(\mathbb {V}\text {ar} \left( \log (\check{P}_{t+T}) - \log (\check{P}_t) \right) \) is of order \(T^{1/2}\), as \(T\searrow 0\), rather than of the usual order T. In the above sketchy argument we used the fact that, for every \(t>0\),

for some constant \(c>0\).

To furnish a more precise result, we assume, contrary to our usual assumption \(W_0=0\), that the Brownian motion starts from a random variable \(W_0\) which is uniformly distributed on \([-1/2,1/2]\). Then, we can formulate the following more quantitative result for fixed \(t=0\).

Proposition 3

Assume that \(W_0\) is uniformly distributed on \([-1/2,+1/2]\). Then,

Proof

Note that

where \(\log (\check{P}_{0})\) is in fact zero as we assumed that \(W_0\sim \text {Uni}(-1/2, 1/2)\). In the following, \((B_t)_{t\ge 0}\) denotes a standard Brownian motion starting at \(B_0=0\) such that \(W_T = B_T + W_0\). Then,

We now use the fact that, for \(x\rightarrow \infty \), \(\Phi (-x) \approx \phi (x)/x\), where \(\phi (x) = \exp (-x^2/2)/\sqrt{2\pi }\) is the probability density function of the standard normal distribution (we thank Friedrich Hubalek for pointing this out to us). It follows that for small T

which concludes the proof. \(\square \)
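The square-root scaling of the variance can also be illustrated by simulation. The following Monte Carlo sketch is not part of the proof and rests on a simplifying assumption, namely that \(\log (\check{P}_t) = \text {int}(W_t)\) with unit tick size and unit volatility. The function names, sample size, seed and the chosen horizons are likewise illustrative choices.

```python
import numpy as np


def nearest_int(x):
    # int(x) = sup{n in Z : n <= x + 0.5}, i.e. floor(x + 0.5)
    return np.floor(x + 0.5)


def increment_variance(T, n_samples=400_000, seed=0):
    """Monte Carlo estimate of Var(int(W_T) - int(W_0)), W_0 ~ Uni(-1/2, 1/2)."""
    rng = np.random.default_rng(seed)
    w0 = rng.uniform(-0.5, 0.5, size=n_samples)
    # W_T = W_0 + B_T with B_T ~ N(0, T) independent of W_0
    wT = w0 + np.sqrt(T) * rng.standard_normal(n_samples)
    return np.var(nearest_int(wT) - nearest_int(w0))


horizons = np.array([1e-4, 1e-3, 1e-2])
variances = np.array([increment_variance(T) for T in horizons])

# Slope of log-variance against log-horizon: about 1/2 under T^{1/2} scaling,
# whereas an unrounded Brownian motion would give a slope of 1.
slope = np.polyfit(np.log(horizons), np.log(variances), 1)[0]
print("variances:", variances)
print("log-log slope:", slope)
```

The estimated slope is subject to Monte Carlo error, so it should be read as an illustration of the order \(T^{1/2}\) rather than as a verification of the constant in Proposition 3.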

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Pohl, M., Ristig, A., Schachermayer, W. et al. Theoretical and empirical analysis of trading activity. Math. Program. 181, 405–434 (2020). https://doi.org/10.1007/s10107-018-1341-x


DOI: https://doi.org/10.1007/s10107-018-1341-x